Paper: Dapper, Google's Large-Scale Distributed Systems Tracing Infrastructure

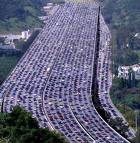

Imagine a single search request coursing through Google's massive infrastructure. A single request can run across thousands of machines and involve hundreds of different subsystems. And oh by the way, you are processing more requests per second than any other system in the world. How do you debug such a system? How do you figure out where the problems are? How do you determine if programmers are coding correctly? How do you keep sensitive data secret and safe? How do you ensure products don't use more resources than they are assigned? How do you store all the data? How do you make use of it?

That's where Dapper comes in. Dapper is Google's tracing system and it was originally created to understand the system behaviour from a search request. Now Google's production clusters generate more than 1 terabyte of sampled trace data per day. So how does Dapper do what Dapper does?

Dapper is described in a very well written and intricately detailed paper: Dapper, a Large-Scale Distributed Systems Tracing Infrastructure by Benjamin H. Sigelman, Luiz André Barroso, Mike Burrows, Pat Stephenson, Manoj Plakal, Donald Beaver, Saul Jaspan, and Chandan Shanbhag. The description of Dapper from The Datacenter as a Computer: An Introduction to the Design of Warehouse-Scale Machines is:

The Dapper system, developed at Google, is an example of an annotation-based tracing tool that remains effectively transparent to application-level software by instrumenting a few key modules that are commonly linked with all applications, such as messaging, control flow, and threading libraries. Finally, it is extremely useful to build the ability into binaries (or run-time systems) to obtain CPU, memory, and lock contention profiles of in-production programs. This can eliminate the need to redeploy new binaries to investigate performance problems.
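To make the "effectively transparent" idea concrete, here is a minimal sketch, not Dapper's actual code. It assumes a hypothetical shared RPC helper (`rpc_call`) that every application already goes through, and uses Python's `contextvars` as a stand-in for whatever per-request context the real libraries carry. All names here (`rpc_call`, `traced_request`, `collected`) are made up for illustration. The point is that the application functions at the bottom contain no tracing calls at all.

```python
import contextvars
import random

# Hypothetical names throughout; this is an illustration, not Dapper's API.
_current = contextvars.ContextVar("trace_context", default=None)
collected = []  # stand-in for a local trace log


def _new_span(name, parent):
    """Create a span record; a root span starts a new trace id."""
    return {
        "trace_id": parent["trace_id"] if parent else random.getrandbits(64),
        "span_id": random.getrandbits(64),
        "parent_id": parent["span_id"] if parent else None,
        "name": name,
    }


def _run_in_span(span, handler, *args):
    """Make `span` the current context while the handler runs, then log it."""
    token = _current.set(span)
    try:
        return handler(*args)
    finally:
        _current.reset(token)
        collected.append(span)


def rpc_call(service, handler, *args):
    """Hypothetical common RPC entry point: the only code that knows about tracing."""
    parent = _current.get()
    if parent is None:  # request is not being traced, so just make the call
        return handler(*args)
    return _run_in_span(_new_span(service, parent), handler, *args)


def traced_request(name, handler, *args):
    """Hypothetical front door that starts a trace for an incoming request."""
    return _run_in_span(_new_span(name, None), handler, *args)


# Application code: note there are no tracing calls in here.
def lookup(query):
    return query.upper()


def search(query):
    return rpc_call("index.lookup", lookup, query)


result = traced_request("frontend.search", search, "dapper")
print(result, len(collected))  # prints: DAPPER 2
```

The child span is logged before the root because it finishes first, and it points back to the root through `parent_id`, which is all a collector would need to rebuild the call tree later.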

The full paper is worth a full read and a re-read, but we'll just cover some of the highlights:

- There are so many operations going on that Google can't trace every request all the time, so in order to reduce overhead they sample one out of thousands of requests. Google found sampling provides sufficient information for many common uses of the tracing data.

- Dapper uses an annotation-based scheme in which applications or middleware explicitly tag every record with a global identifier that links these message records back to the originating request.

- Tracing is largely transparent to applications because the trace code in common libraries (threading, control flow, RPC) is sufficient to debug most problems. This is just one of the benefits of creating a common infrastructure and API.

- Judging from the example code in the paper, tracing from C++ uses standard-looking logging macros. Traces can include text, key-value pairs, binary messages, counters, and arbitrary data. Trace volume is restricted so the system can't be overwhelmed.

- RPC, SMTP sessions in Gmail, HTTP requests from the outside world, and outbound queries to SQL servers are all traced.

- Traces are modeled using trees, spans, and annotations. A tree is used to establish order. Nodes in the tree are basic units of work called spans. Edges in the tree establish a causal relationship between a span and its parent span. A tree unites all the thousands of downstream work requests needed to carry out an originating request.

- A trace id is allocated to bind all the spans to a particular trace session. The trace id isn't a globally unique sequence number; it's a probabilistically unique 64-bit integer.

- Traces are collected in local log files which are then pulled and written into Google's Bigtable database. Each row is a single trace, with each column mapped to a span. The median latency for sending trace data from applications to the central repository is 15 seconds, but at times it can take many hours.

- There's also always the curious question of how you trace the tracing system. It leads to an infinite loop. An out-of-band trace mechanism is in place for that purpose.

- Security and privacy are an important part of the design. Payloads are not automatically logged, there's an opt-in mechanism that indicates if payloads should be included.

- Tracing supports a generic analysis of system properties. For example, Google can look at the trace to pinpoint: which applications are not using proper levels of authentication and encryption; and find which applications accessing sensitive data are not logging at an appropriate level so they won't see data they shouldn't see.

- The trace library is very small and efficient. If it were not then tracing would dominate execution time. In practice Dapper accounts for very little latency inside an application, on the disk, or on the network.

- Overhead is proportional to the sampling rate. A higher sampling rate means more overhead. Originally the sampling rate was uniform, but they are now moving to an adaptive sampling rate that specifies a desired number of traces per unit of time. With this approach very high load workloads will sample less and lower load workloads will be able to sample more.

- You might worry that sampling means missing problems. Google has found that meaningful patterns reoccur so sampling will detect them.

- Sampled data is stored at a rate of 1TB a day and is kept for two weeks, allowing a long period for analysis.

- In order to reduce the amount of data that is stored into Bigtable, traces undergo another round of sampling. Entire traces are kept or discarded. If a trace is kept all spans for the trace are also kept. The two levels of sampling allow Google to tune the amount of data they keep. Application tracing is at a higher level than they wish to store, but with the second level of sampling they can easily control how much they keep depending on conditions. Controlling the sampling rate at the application level across all their clusters doesn't seem feasible.

- DAPI is an API on top of the trace data which makes it possible to write trace applications and analysis tools. Data can be accessed by trace id, in bulk by MapReduce, or by index. By creating an API and opening up the data to developers, an open-source-like set of tools has grown up around DAPI that has made it much more valuable.

- A UI is available to display traces in useful ways: by service and time window; a table of performance summaries; by single execution pattern; by plot of a metric, for example, a log-normal distribution of latencies; root cause analysis using a global time line that can be explored.

- During development the trace information is used to characterize performance, determine correctness, understand how an application is working, and as a verification test to determine if an application is behaving as expected.

- Developers have parallel debug logs that are outside of the trace system. So trace is more for a system perspective. When you are debugging specific problems you need to go into much more detail.

- Dapper can be used to identify systems that are undergoing high latency or having other problems. Then the data collection daemons on those hosts can be contacted directly to gather more data that can be used to debug live problems.

- It's often hard to identify which subsystems are using what percentage of resources. Using the trace data it's possible to generate charge back data based on actual usage.

- As a system evolves and more stages are added to a computation, this sort of information becomes invaluable when characterising end-to-end latencies. Some interesting bits:

- Network performance hiccups along the critical path create higher latencies but don't affect system throughput.

- Using the system view provided by the trace data they were able to identify unintended service interactions and fix them.

- A list of example queries that are slow for each individual subsystem could be generated.

- Dapper has a few downsides too:

- Work that is batched together for efficiency is not correctly mapped to the trace ids inside the batch. A trace id might get blamed for work it is not doing.

- Offline workloads like MapReduce are not integrated in.

- Dapper is good at finding system problems, but not at drilling down into why a part of the system is slow. It doesn't include context information like queue depths that are part of a deep debug dive.

- Kernel level information is not included.
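The trace model and the two levels of sampling from the highlights above can be sketched in a few lines. This is a rough illustration under stated assumptions, not Dapper's implementation: the names (`Span`, `start_trace`, `keep_for_storage`) and the rates are hypothetical, and hashing the trace id with SHA-256 is just one way to make the keep-or-discard decision identical for every span in a trace.

```python
import hashlib
import random
from dataclasses import dataclass, field
from typing import Optional

ID_SPACE = 2**64  # ids are random 64-bit integers, unique only with high probability


def new_id(rng: random.Random) -> int:
    """Draw a random 64-bit id; no central sequence counter is involved."""
    return rng.getrandbits(64)


@dataclass
class Span:
    trace_id: int             # shared by every span in the same trace
    span_id: int
    parent_id: Optional[int]  # None marks the root of the tree
    name: str
    annotations: list = field(default_factory=list)

    def child(self, name: str, rng: random.Random) -> "Span":
        """A child span keeps the trace id and records its parent (a tree edge)."""
        return Span(self.trace_id, new_id(rng), self.span_id, name)


def start_trace(name: str, rng: random.Random, sample_rate: float) -> Optional[Span]:
    """First level: decide once, at the root, whether this request is traced."""
    if rng.random() >= sample_rate:
        return None
    return Span(new_id(rng), new_id(rng), None, name)


def keep_for_storage(trace_id: int, collection_rate: float) -> bool:
    """Second level: a pure function of trace_id, so a trace is kept or dropped whole."""
    digest = hashlib.sha256(trace_id.to_bytes(8, "big")).digest()
    z = int.from_bytes(digest[:8], "big") / ID_SPACE  # 0 <= z < 1
    return z < collection_rate


rng = random.Random(42)
root = start_trace("frontend.search", rng, sample_rate=1.0)  # 1.0 always traces
backend = root.child("backend.lookup", rng)
backend.annotations.append(("cache", "miss"))

print(backend.parent_id == root.span_id)  # True
print(keep_for_storage(root.trace_id, 1.0), keep_for_storage(root.trace_id, 0.0))  # True False
```

Because `keep_for_storage` depends only on the trace id, any collector on any machine reaches the same answer for every span of a trace without coordination, which is what lets the storage-side rate be tuned independently of the rate applications trace at.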

As you might expect, Google has produced an elegant and well thought out tracing system. In many ways it is similar to other tracing systems, but it has that unique Google twist. A tree structure, probabilistically unique keys, sampling, emphasising common infrastructure insertion points, technically minded data exploration tools, a global system perspective, MapReduce integration, sensitivity to index size, enforcement of system wide invariants, an open API: all seem very Googlish.

The largest apparent weakness in my mind is that developers have to keep separate logs. This sucks for developers trying to figure out what the heck is going on in a system. All the same tools should be available to developers trying to drill down as the people who are trying to look across. This same bias is evident in the lack of detailed logging about queue depths, memory, locks, task switching, disk, task priorities and other detailed environmental information. When things are slow it's often these details that are the root cause.

Despite those in-the-trenches issues, Dapper seems like a very cool system that any organization can learn from.

Related Articles

- Google Architecture

- Log Everything All the Time

- Product: Scribe - Facebook's Scalable Logging System

- Strategy: Sample to Reduce Data Set

- The Datacenter as a Computer: An Introduction to the Design of Warehouse-Scale Machines by Luiz André Barroso and Urs Hölzle of Google Inc.