Stuff The Internet Says On Scalability For December 19th, 2014

Hey, it's HighScalability time:

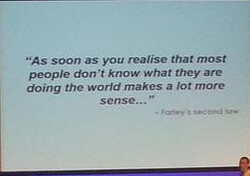

Brilliant & hilarious keynote to finish the day at #yow14 (Matt)

- 1985: more Star Wars figures on the planet than US citizens; 10,000: number of loops found in human DNA; terabyte on a postage stamp: RRAM is almost here; 1.2 billion datapoints per minute: Netflix's Primary Telemetry Platform

- James Urquhart asks an interesting question: Is AWS already “too big to fail”? What would happen if Amazon ran into a serious financial crisis?

- What do Philip K. Dick and Open Source have in common? They made lots of money for other people. Dick sometimes had to eat cat food and couldn't afford a doctor for his children, yet movies like Blade Runner, Minority Report, and Total Recall made fortunes. In Open Source this shouldn't be news: WhatsApp founder donates $1 million to FreeBSD.

- Every project wants to capture the zeitgeist of the Skunk Works, founded by Kelly Johnson, whose team built the fabled SR-71 Blackbird. Good discussion of The inventor of the SR-71's rules for project management.

- The Network is Reliable. Not! paraphrases Bailis and Kingsbury. In particular the idea that the "Topology doesn’t change" is more of a fallacy than ever in the cloud as the topology can and does change all the time.

- Nicely done. Clearly written. Final Root Cause Analysis and Improvement Areas: Nov 18 Azure Storage Service Interruption: a change on the Azure Blob storage Front-Ends exposed a bug which resulted in some Azure Blob storage Front-Ends entering an infinite loop and unable to service requests. Having pushed out changes that broke all the things, it's easy to relate.

- Parse is a very popular Backend as a Service, used primarily on mobile apps. It saves a lot of time and energy in getting an app running, but is it as useful when you actually get to scale? Ante Dagelić in All the limits of Parse says probably not: 160 API requests per minute for ENTIRE app; limited number of COUNT operations; maximum of 2 concurrent jobs; log system remembers only 100 last logs; push notifications have delay; uptime of parse system is also an issue; no mutex/lock/semaphore logic. And many more.

- Fred T-H uses some well done plumbing pictures to explain why Queues Don't Fix Overload: So when I rant about/against queues, it's because queues will often be (but not always) applied in ways that totally mess up end-to-end principles for no good reason. It's because of bad system engineering, where people are trying to make an 18-wheeler go through a straw and wondering why the hell things go bad. In the end the queue just makes things worse.

- How does Cassandra avoid performing thousands of disk seeks on every read request? Bloom filters, minimum & maximum clustering keys, minimum & maximum timestamps, (hashed) partition key ranges. But there is another. Compaction, which Björn Hegerfors explains in Date-Tiered Compaction in Apache Cassandra.

- Adrian Cockcroft reveals his favorite videos from AWS Re:Invent 2014.

- Knewton shouts Eureka! Why You Shouldn’t Use ZooKeeper for Service Discovery: for service discovery it’s better to have information that may contain falsehoods than to have no information at all. It is much better to know what servers were available for a given service five minutes ago than to have no idea what things looked like due to a transient network partition. The guarantees that ZooKeeper makes for coordination are the wrong ones for service discovery, and it hurts you to have them.

- Videos from Big Data Everywhere 2014 are now available on InfoQ.

- Backblaze tests 6TB hard drives. And when they test something they really test it. Conclusion: Today the Western Digital hard drives are first in line to be our choice for 6 TB drives.

- Is it wise to use off heap memory in Java? Peter Lawrey answers in On heap vs off heap memory usage: Off heap memory can have challenges but also come with a lot of benefits. Where you see the biggest gain and compares with other solutions introduced to achieve scalability. Off heap is likely to be simpler and much faster than using partitioned/sharded on heap caches, messaging solutions, or out of process database...The biggest gain however, can be your startup time, giving you a production system which restarts much faster. e.g. mapping in a 1 TB data set can take 10 milli-seconds.

- Weaver is a new distributed graph store that shards the graph over multiple servers. Weaver has a rich, node-oriented query model, is transactional, scales linearly, and is fast.

- Ivan Pepelnjak with a nice overview of Load Balancing in Google Network.

- If comparing it to CPU-based processing (i.e. pure Swift-code) the performance gains can be even higher (up to 75 times faster on iPhone 6)...GPGPU Performance of Swift/Metal vs Accelerate on iPhone 6 & 5S, iPad Air.

- Defcon videos are now available.

- The 80/20 rule… for storage systems: Nonuniform distributions are everywhere. Thanks to observations such as Pareto’s, system design at all scales benefit from focussing on serving the most popular things as efficiently as possible. Designs like these lead to the differences between highways and rural roads, hub cities in transport systems, core internet router designs, and most of the Netflix Original Series titles.

- A really nice deep dive on Scalability Techniques for Practical Synchronization Primitives.

- Benchmark tests shed light on Google, AWS SSD claims. Not all may be as it seems.

- In your Daily Docker hit, here's How Docker Simplifies Distributed Systems Development at VoltDB: Docker has simplified my distributed system development and debugging process tremendously; And here's 10x: Docker at Clay.io.

- Nice set of lessons learned on Syncing Postgres to Elasticsearch from GoCardless. The simple idea of pushing changes out to multiple services doesn't work without transactional guarantees.

- The Distributed Post Office: Instant hierarchy for mesh networks: Our results show that the Distributed Post Office works, but not very efficiently: A few nodes (The "highest" node with respect to every hash function) have the responsibility of routing messages for the whole network.

- 16 NoSQL, NewSQL Databases To Watch. Seems like an accurate list.

- Efficient Virtual Memory for Big Memory Servers: Our analysis shows that many “big-memory” server workloads, such as databases, in-memory caches, and graph analytics, pay a high cost for page-based virtual memory. To remove the TLB miss overhead for big-memory workloads, we propose mapping part of a process’s linear virtual address space with a direct segment.

- Kiln: Closing the Performance Gap Between Systems With and Without Persistence Support: Our goal is to design a persistent memory system with performance very close to that of a native system. We propose Kiln, a persistent memory design that adopts a nonvolatile cache and a nonvolatile main memory to enable atomic in-place updates without logging or copy-on-write. Our evaluation shows that Kiln can achieve 2× performance improvement compared with NVRAM-based persistent memory with write-ahead logging.
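
For readers curious about the Bloom filters mentioned in the Cassandra item above, here is a minimal sketch of the general idea: a small bit array that can say "definitely not here" for a key, so a read can skip files that cannot contain it. This is a toy illustration, not Cassandra's implementation. The class `BloomFilter`, the helper `sstables_to_read`, the SSTable names, and the sizing defaults are all hypothetical.

```python
import hashlib


class BloomFilter:
    """Toy Bloom filter: may report false positives, never false negatives."""

    def __init__(self, num_bits=1024, num_hashes=3):
        # Sizing values are arbitrary assumptions for this sketch.
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((num_bits + 7) // 8)

    def _positions(self, key):
        # Derive num_hashes bit positions by salting a single hash function.
        for i in range(self.num_hashes):
            digest = hashlib.sha256(f"{i}:{key}".encode("utf-8")).digest()
            yield int.from_bytes(digest[:8], "big") % self.num_bits

    def add(self, key):
        for pos in self._positions(key):
            self.bits[pos // 8] |= 1 << (pos % 8)

    def might_contain(self, key):
        # True means "possibly present"; False means "definitely absent".
        return all(
            self.bits[pos // 8] & (1 << (pos % 8))
            for pos in self._positions(key)
        )


def sstables_to_read(filters, partition_key):
    """Return the (hypothetical) SSTable names whose filter cannot rule out the key."""
    return [name for name, bf in filters.items() if bf.might_contain(partition_key)]


# One filter per on-disk file; only the first file has seen the key.
filters = {"sstable-1": BloomFilter(), "sstable-2": BloomFilter()}
filters["sstable-1"].add("user:42")
print(sstables_to_read(filters, "user:42"))  # prints ['sstable-1']
```

The trade-off is a few bits of memory per key in exchange for skipping most disk seeks on files that do not hold the key. A false positive only costs one wasted read.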