Stuff The Internet Says On Scalability For March 14th, 2014

Hey, it's HighScalability time:

- Quotable Quotes:

- The Master Switch: History shows a typical progression of information technologies: from somebody’s hobby to somebody’s industry; from jury-rigged contraption to slick production marvel; from a freely accessible channel to one strictly controlled by a single corporation or cartel—from open to closed system.

- @adrianco: #qconlondon @russmiles on PaaS "As old as I am, a leaky abstraction would be awful..."

- @Obdurodon: "Scaling is hard. Let's make excuses."

- @TomRoyce: @jeffjarvis the rot is deep... The New Jersey pols just used Tesla to shake down the car dealers.

- @CompSciFact: "The cheapest, fastest and most reliable components of a computer system are those that aren't there." -- Gordon Bell

- @glyph: “Eventually consistent” is just another way to say “not consistent right now”.

- @nutshell: LinkedIn is shutting down access to their APIs for CRMs (unless you’re Salesforce or Microsoft). Support open APIs!

- Tim Berners-Lee: I never expected all these cats.

- @muratdemirbas: "Simple clear purpose&principles give rise to complex&intelligent behavior. Complex rules&regulations give rise to simple&stupid behavior."

- @BonzoESC: “Duplication is far cheaper than the wrong abstraction.” @sandimetz @rbonales

- @BenedictEvans: Umeng: there are 700m active smartphones and tablets in China.

- Scale matters object lesson number infinity: HBO Go Crashes During True Detective Finale. Perhaps make HBO Go available without a cable package and maybe you'll have money to scale the service? Think peak. But wait, Dan Rayburn says bandwidth was not the problem; it was other parts of the system, which is why Internet TV will never be as reliable as broadcast TV. Still, I'd like to cut the cord.

- Turns out ecommerce over messaging works well...quite well. Retailers Are Striking Gold with Instagram: at Fox and Fawn, items often sell out within minutes of the picture being posted on Instagram.

- Even Facebook's infrastructure struggles when a new feature becomes an unexpected hit. That's the situation described in an engaging story: Looking back on “Look Back” videos. Look Backs are one-minute videos generated from a user's pics and posts. For the release they planned on 187 Gbps more bandwidth and 25 petabytes of disk. To get the rendering done they highly parallelized the pipeline. CDNs were alerted. Internal tests on employees found a few bugs. Less storage was actually needed because the videos could be regenerated, so a high replication factor wasn't required. Go time! The videos were an unexpected hit with a 40% reshare instead of the projected 10% reshare. It seems people like themselves...a lot. Overnight 30 teams cooperated to move tens of thousands of machines over to rendering. Good story. Though I'm disappointed it didn't have its own Look Back video. Stories are people too.

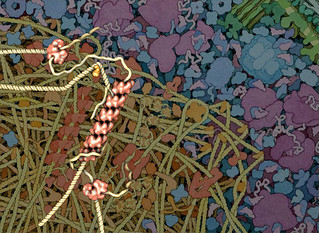

- Why Google's services aren't really free. We all help train the beast. A Glimpse of Google, NASA & Peter Norvig + The Restaurant at the End of the Universe: Algorithms behave differently as they churn thru more data. For example in the figure, the Blue algorithm was better with a million training dataset. If one had stopped at that scale, one would think of optimizing that algorithm for better performance. But as the scale increased the purple algorithm started showing promise – in fact the blue one starts deteriorating at larger scale. In general, Google prefers algorithms that get better with data. Not all algorithms are like that, but Google likes to go after the ones with this type of performance characteristic.

- Great look at performance in the small: Fixing The Chrome DevTools - A tale about going from 6 to 60 frames per second. Removing some surprisingly small costs made huge paint time improvements. The process is as interesting as the results.

- Spotify on How to shuffle songs? What people mean by random is different than what mathematicians think of as random. People don't want an artist to have multiple songs within a certain window and math doesn't care. The solution is related to dithering to even out black and white spots on pictures. Good reddit thread.
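
To make the "spread each artist out" idea concrete, here is a minimal sketch in Python. It is not Spotify's code. The function name `spread_shuffle`, the `(artist, title)` tuple format, and the jitter size are all assumptions made for illustration. Each artist's songs get evenly spaced positions on a 0-to-1 line with a random starting offset and a little jitter, and the playlist is whatever order falls out when all positions are sorted.

```python
import random
from collections import defaultdict


def spread_shuffle(tracks, rng=None):
    """Shuffle (artist, title) pairs so each artist's songs are spread out.

    Hypothetical helper for illustration only.
    """
    rng = rng or random.Random()

    # Group titles by artist (new lists, so the input is not mutated).
    by_artist = defaultdict(list)
    for artist, title in tracks:
        by_artist[artist].append(title)

    placed = []
    for artist, titles in by_artist.items():
        rng.shuffle(titles)                 # random order within the artist
        n = len(titles)
        offset = rng.random() / n           # random start inside the first slot
        for i, title in enumerate(titles):
            # Evenly spaced slots plus a small jitter (assumed: 10% of a slot)
            jitter = rng.uniform(-0.1, 0.1) / n
            placed.append((offset + i / n + jitter, artist, title))

    # Merging all artists by position yields the final play order.
    placed.sort(key=lambda item: item[0])
    return [(artist, title) for _, artist, title in placed]
```

Songs by the same artist end up roughly `1/n` of the playlist apart, which is the "feels random" property people ask for, even though a mathematician would call it less random than a uniform shuffle.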

- Balanced's Architecture for payment security. Or why security is so hard to do correctly.

- Age old discussion of where to run calculations. In the database or in the app? Please, Run That Calculation in Your RDBMS makes a reasonable case. But as you might expect there are some rebuttals. The modern take is to run calculations in apps which can be appropriately distributed to scale.
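
For a feel of the two options, here is a small sketch using Python's built-in `sqlite3` module. The `orders` table and its rows are made up for illustration. The same per-customer total is computed once inside the database and once in application code.

```python
import sqlite3

# Hypothetical in-memory table, for illustration only.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (customer TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?)",
    [("ann", 10.0), ("bob", 5.0), ("ann", 2.5)],
)

# Option 1: let the database aggregate; only one row per customer comes back.
in_db = dict(
    conn.execute("SELECT customer, SUM(amount) FROM orders GROUP BY customer")
)

# Option 2: pull every row and aggregate in the app.
in_app = {}
for customer, amount in conn.execute("SELECT customer, amount FROM orders"):
    in_app[customer] = in_app.get(customer, 0.0) + amount
```

Both give the same totals. The trade-off is where the work and the data transfer happen: option 1 ships less data over the wire, while option 2 moves the CPU cost to app servers that may be easier to scale out.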

- Great series. Replication in network games: Bandwidth (Part 4). Reduce bandwidth by reducing the number of players in a game; reducing update frequency which also increases latency; improving the serialization isn't usually a big win; compression; patch based updates; replicate fewer objects using rules based, static, and geometric strategies.
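
As a rough illustration of patch based updates, the sketch below sends only the fields that changed since the last state a client acknowledged. The dictionary-based state and the `make_patch` / `apply_patch` names are assumptions for this example, not something taken from the series.

```python
_MISSING = object()  # sentinel so a stored None still counts as a value


def make_patch(old, new):
    """Return only what differs between two state dictionaries."""
    changed = {k: v for k, v in new.items() if old.get(k, _MISSING) != v}
    removed = [k for k in old if k not in new]
    return {"set": changed, "del": removed}


def apply_patch(state, patch):
    """Rebuild the new state from the old state plus a patch."""
    result = dict(state)
    result.update(patch["set"])
    for key in patch["del"]:
        result.pop(key, None)
    return result


# Example: only the fields that moved are sent.
old_state = {"x": 10, "y": 20, "hp": 100, "ammo": 5}
new_state = {"x": 11, "y": 20, "hp": 100}
patch = make_patch(old_state, new_state)
```

Here `patch` carries one changed field and one removal instead of the whole object. The catch is that sender and receiver must agree on which base state the patch applies to.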

- LOL. How to speed up your computer using Google Drive as extra RAM.

- Huge discussion on Programmers Shortage Claims and Facebook’s $19 Billion Acquisition of WhatsApp. If Brian Acton and Jan Koum both can't get jobs then you know the system is fubared.

- If you can fit all your data inside a single UDP packet then you've done a lot to win the latency and bandwidth game. In Oodle Packet Compression for UDP you learn how to create a static model compression where "you pre-train some model based on a capture of typical network data."
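
Oodle's model is proprietary, but the general idea of a pre-trained static model can be approximated with zlib's preset dictionary, which Python exposes through the `zdict` parameter. This is a stand-in technique, not Oodle's algorithm. The dictionary contents and packet format below are invented for illustration. In practice the dictionary would be built from a capture of typical traffic.

```python
import zlib

# Assumed "training" result: byte strings that commonly appear in packets.
DICTIONARY = b'{"type":"position","player":,"x":,"y":,"z":}'


def compress_packet(payload: bytes, dictionary: bytes = DICTIONARY) -> bytes:
    # Each packet is compressed independently against the shared dictionary,
    # so a lost UDP packet never breaks decoding of later ones.
    comp = zlib.compressobj(level=9, zdict=dictionary)
    return comp.compress(payload) + comp.flush()


def decompress_packet(data: bytes, dictionary: bytes = DICTIONARY) -> bytes:
    # The receiver must hold exactly the same dictionary as the sender.
    decomp = zlib.decompressobj(zdict=dictionary)
    return decomp.decompress(data) + decomp.flush()


packet = b'{"type":"position","player":17,"x":10.5,"y":3.2,"z":-4.0}'
with_dict = compress_packet(packet)
without_dict = zlib.compress(packet, 9)
```

Small packets compress poorly on their own because there is no history to refer back to. The shared dictionary supplies that history up front, which is why `with_dict` comes out shorter than `without_dict` for packets that resemble the training data.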

- Couchbase View Engine Internals. The title is no lie. Lots of juicy details and good supporting graphics. Definitely a good source for learning otherwise hidden how-to secrets.

- The Truth About MapReduce Performance on SSDs: SSDs have up to 70 percent higher performance, for 2.5x higher $ per performance (average performance divided by cost). This is far lower than the 50x difference in $ per TB computed in the table below. Customers can consider paying a premium cost to obtain up to 70 percent higher performance.

- Sync Hacks: How Angie’s List Reduced Their Web Deployment Time to Seconds. Interesting solution based on the BitTorrent Sync API, used to update hundreds of servers with new code. Code can now be deployed daily, which could never have happened before. Unfortunately, neither the article nor the web site says why it's so fast.

- Nicely done: A Brief History of Databases. As we've almost certainly reached the End of Database History this is all you ever need to know.

- Adam Rothschild: Despite Comcast-Netflix deal, settlement-free peering is alive and well: While we have not witnessed a change in peering dynamics as a result of the Netflix/Comcast transaction, a trend we have seen over the past few years is the degrading quality of bandwidth from conventional “tier 1” ISPs, where peering edges have become congested due to the games described above. Network operators commonly discuss on mailing lists how the big four access shops all maintain edges which are boiling hot unless you pay them, or buy from an intermediary paying them. Where it was once possible for an enterprise or content shop to enjoy “good enough” connectivity purchasing from these providers directly, one now must enter the complex game of multi-homing to a half dozen or more providers, or purchase from a route-optimized “tier 2” like an Internap, in order to enjoy a positive and congestion-free user experience.

- Trends in Steganography: These facts indicate that in today's world of digital technologies, it is easily imaginable that the carrier, in which secret data is embedded, was not necessarily an image or Web page source code, but may have been any other file type or organizational unit of data—for example, a packet or a frame—that naturally occurs in computer networks. However, we emphasize the process of embedding secret information into an innocent-looking carrier is not some recent invention—it has been known and used for ages by humankind. This process is called steganography and its origins can be traced back to ancient times. Moreover, its importance has not decreased since its birth.