Stuff The Internet Says On Scalability For December 9th, 2016

Hey, it's HighScalability time:

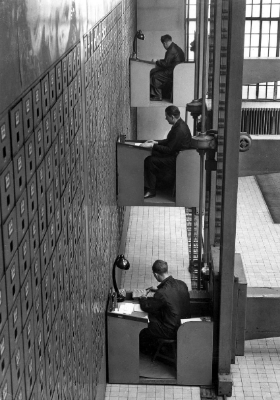

Here's a 1 TB hard drive in 1937. Twenty workers operated the largest vertical letter file in the world. 4000 SqFt. 3000 drawers, 10 feet long. (from @BrianRoemmele)

If you like this sort of Stuff then please support me on Patreon.

- ~98%: savings in greenhouse gases using Gmail versus local servers; 2x: time spent online compared to 5 years ago; 125 million: most hours of video streamed by Netflix in one day; 707.5 trillion: value of trade in one region of Eve Online; $1 billion: YouTube's advertisement payout to the music industry; 1 billion: Step Functions predecessor state machines run per week in AWS retail; 15.6 million: jobs added over the last 81 months.

- Quotable Quotes:

- Gerry Sussman: in the 80s and 90s, engineers built complex systems by combining simple and well-understood parts. The goal of SICP was to provide the abstraction language for reasoning about such systems...programming today is more like science. You grab this piece of library and you poke at it. You write programs that poke it and see what it does. And you say, ‘Can I tweak it to do the thing I want?’

- @themoah: Last year Black Friday weekend: 800 Windows servers with .NET. This year: 12 Linux servers with Scala/Akka. #HighScalability #Linux #Scala

- @swardley: If you're panicking over can't find AWS skills / need to go public cloud - STOP! You missed the boat. Focus now on going serverless in 5yrs.

- @jbeda: Nordstrom is running multitenant Kubernetes cluster with namespace per team. Using RBAC for security.

- Tim Harford: What Brailsford says is, he is not interested in team harmony. What he wants is goal harmony. He wants everyone to be focused on the same goal. He doesn’t care if they like each other and indeed there are some pretty famous examples of people absolutely hating each other.

- @brianhatfield: SUB. MILLISECOND. PAUSE. TIME. ON. AN. 18. GIG. HEAP. (Trying out Go 1.8 beta 1!)

- haberman: If you can make your system lock-free, it will have a bunch of nice properties: deadlock-free; obstruction-free (one thread getting scheduled out in the middle of a critical section doesn't block the whole system); OS-independent, so the same code can run in kernel space or user space, regardless of OS or lack thereof.

- Neil Gunther: The world of performance is curved, just like the real world, even though we may not always be aware of it. What you see depends on where your window is positioned relative to the rest of the world. Often, the performance world looks flat to people who always tend to work with clocked (i.e., deterministic) systems, e.g., packet networks or deep-space networks.

- @yoz: I liked Westworld, but if I wanted hours of watching tech debt and no automated QA destroy a virtual world, I’d go back to Linden Lab

- @adrianco: I think we are seeing the usual evolution to utility services, and new higher order (open source) functionality emerges /cc @swardley

- Neil Gunther: a buffer is just a queue and queues grow nonlinearly with increasing load. It's queueing that causes the throughput (X) and latency (R) profiles to be nonlinear.

- Juho Snellman: I think [QUIC] encrypting the L4 headers is a step too far. If these protocols get deployed widely enough (a distinct possibility with standardization), the operational pain will be significant.

- @Tobarja: "anyone who is doing microservices is spending about 25% of their engineering effort on their platform" @jedberg

- @cdixon: 2016 League of Legends finals: 43M viewers 2016 NBA finals: 30.8M viewers

- @mikeolson: 7 billion people on earth; 3 billion images shared on social media every day. @setlinger at #StrataHadoop

- @swardley: When you think about AWS Lambda, AWS Step Functions et al then you need to view this through the lens of automating basic doctrine i.e. not just saying it and codifying in maps and related systems but embedding it everywhere. At scale and at the speed of competition that I expect us to reach then this is going to be essential.

- Jakob Engblom: hardware accelerators for particular common expensive tasks seems to be the right way to add performance at the smallest cost in silicon area and power consumption.

- Joe Duffy: The future for our industry is a massively distributed one, however, where you want simple individual components composed into a larger fabric. In this world, individual nodes are less “precious”, and arguably the correctness of the overall orchestration will become far more important. I do think this points to a more Go-like approach, with a focus on the RPC mechanisms connecting disparate pieces.

- @cmeik: AWS Lambda is cool if you never had to worry about consistency, availability and basically all of the tradeoffs of distributed systems.

- prions: As a Civil Engineer myself, I feel like people don't realize the amount of underlying stuff that goes into even basic infrastructure projects. There's layers of planning, design, permitting, regulations and bidding involved. It usually takes years to finally get to construction and even then there's a whole host of issues that arise that can delay even a simple project.

- Netflix: If you can cache everything in a very efficient way, you can often change the game.

- The Attention Merchants: One [school] board in Florida cut a deal to put the McDonald’s logo on its report cards (good grades qualified you for a free Happy Meal). In recent years, many have installed large screens in their hallways that pair school announcements with commercials. “Take your school to the digital age” is the motto of one screen provider: “everyone benefits.” What is perhaps most shocking about the introduction of advertising into public schools is just how uncontroversial and indeed logical it has seemed to those involved.
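Gunther's point that queues grow nonlinearly with load is easiest to see in the textbook M/M/1 queue (Poisson arrivals, exponential service times, one server). That model is an assumption chosen for illustration, not a description of any system quoted above. Mean residence time is R = S / (1 - ρ), where S is the service time and ρ the utilization, so latency stays close to S at light load and blows up as ρ approaches 1:

```python
def mm1_residence_time(service_time: float, utilization: float) -> float:
    """Mean time in system for an M/M/1 queue: R = S / (1 - rho)."""
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in [0, 1) for a stable queue")
    return service_time / (1.0 - utilization)


def mm1_mean_in_system(utilization: float) -> float:
    """Mean number of requests in the system (queued plus in service): N = rho / (1 - rho)."""
    if not 0.0 <= utilization < 1.0:
        raise ValueError("utilization must be in [0, 1) for a stable queue")
    return utilization / (1.0 - utilization)


if __name__ == "__main__":
    # Assumed 10 ms service time; watch latency climb as utilization nears 100%.
    for rho in (0.5, 0.8, 0.9, 0.95, 0.99):
        r_ms = mm1_residence_time(0.010, rho) * 1000
        print(f"rho={rho:.2f}  R={r_ms:7.1f} ms  N={mm1_mean_in_system(rho):6.1f}")
```

At 50% utilization the residence time is 2x the service time, at 90% it is 10x, and at 99% it is 100x: the same buffer that looks harmless at moderate load dominates latency near saturation.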

- Just how big is Netflix? The tale of the tape is told in Another Day in the Life of a Netflix Engineer. Netflix runs way more than 100K EC2 instances and more than 80,000 CPU cores. They use both predictive and reactive autoscaling, aiming for not too much or too little, just the right amount. Of those 100K+ instances, they autoscale up and down 20% of that capacity every day. More than 50 Gbps of ELB traffic per region. More than 25 Gbps is telemetry data from devices sending back customer experience data. At peak, Netflix is responsible for over 37% of Internet traffic. The monthly billing file for Netflix is hundreds of megabytes with over 800 million lines of information. There's a Hadoop cluster at Amazon whose only purpose is to load Netflix's bill. Netflix considers speed of innovation to be a strategic advantage. About 4K code changes are put into production per day. At peak, over 125 million hours of video were streamed in a day. Support for 130 countries was added in one day. That last one is the kicker. Reading about Netflix over all these years, you may have gotten the idea that Netflix was over-engineered, but going global in one day is what it was all about. Try that if you are racking and stacking.

- Oh how I miss stories that began "Once upon a time." The start of so many stories these days is "The attack sequence begins with a simple phishing scheme." This particular cautionary tale is from Technical Analysis of Pegasus Spyware, a very, almost lovingly, detailed account of the total ownage of the "secure" iPhone. The exploit made use of three zero-day vulnerabilities: CVE-2016-4657, memory corruption in WebKit; CVE-2016-4655, a kernel information leak; and CVE-2016-4656, kernel memory corruption that leads to a jailbreak. Do not read if you would like to keep your Security Illusion cherry intact.

- Composing RPC calls gets harder as the graph of calls and dependencies explodes. Here's how Twitter handles it: Simplify Service Dependencies with Nodes. Here's their library on GitHub. It's basically a way to set up a dependency graph in code and have all the RPCs executed according to the plan. It's interesting how parallel this is to setting up distributed services in the first place. They like it: We have saved thousands of lines of code, improved our test coverage and ended up with code that’s more readable and friendly for newcomers. Also see AWS Step Functions.
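Nodes itself is a Java library, and the sketch below is not its API. It is a minimal Python asyncio illustration of the same idea, assuming the graph is acyclic: each node names its dependencies, starts as soon as they resolve, and independent branches run concurrently. The node names and fetch functions in `example()` are hypothetical stand-ins for RPCs:

```python
import asyncio
from typing import Any, Awaitable, Callable, Dict, List, Tuple

# Each node maps to (names of its dependencies, async function taking their results in order).
Graph = Dict[str, Tuple[List[str], Callable[..., Awaitable[Any]]]]


async def run_graph(graph: Graph) -> Dict[str, Any]:
    """Run a DAG of async calls, starting each node as soon as its dependencies resolve."""
    tasks: Dict[str, asyncio.Task] = {}

    def task_for(name: str) -> asyncio.Task:
        # Memoize so a node shared by several dependents is executed only once.
        if name not in tasks:
            tasks[name] = asyncio.ensure_future(run_node(name))
        return tasks[name]

    async def run_node(name: str) -> Any:
        deps, fn = graph[name]
        # Start every dependency, then wait on them together so that
        # independent branches of the graph run concurrently.
        args = await asyncio.gather(*(task_for(d) for d in deps)) if deps else []
        return await fn(*args)

    names = list(graph)
    results = await asyncio.gather(*(task_for(n) for n in names))
    return dict(zip(names, results))


async def example() -> Dict[str, Any]:
    """Hypothetical page build: two RPCs depend on the user, the page on both."""
    calls: List[str] = []

    async def fetch_user():
        calls.append("user")
        await asyncio.sleep(0.01)  # pretend network latency
        return {"id": 7}

    async def fetch_tweets(user):
        await asyncio.sleep(0.01)
        return [f"tweet-{user['id']}"]

    async def fetch_follower_count(user):
        await asyncio.sleep(0.01)
        return 3

    async def render(tweets, followers):
        return f"{tweets[0]} ({followers} followers)"

    results = await run_graph({
        "user": ([], fetch_user),
        "tweets": (["user"], fetch_tweets),
        "followers": (["user"], fetch_follower_count),
        "page": (["tweets", "followers"], render),
    })
    results["user_calls"] = calls.count("user")
    return results
```

Running `asyncio.run(example())` fetches the user once, fetches tweets and follower count in parallel, then renders. The graph declaration replaces hand-written sequencing code, which is where the savings Twitter describes would come from.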

- Love posts like this. Building The Buffer Links Service. An easy-to-understand problem that explores multiple implementations in detail. The problem: track the number of posts containing a URL. They tried three solutions. First, Amazon RDS (Aurora) + Node + Redis; problem: MySQL queries were too slow with a lot of data. Second, Elasticsearch; problem: too expensive at scale. Third, Redis + S3, the winner: even with 100% of production traffic, average response times stayed below one millisecond.

- Videos from SICS Software Week 2016 - Multicore Day are now available. And here's a review from Jakob Engblom. Interesting: each power of two of cores required a re-architecting and tackling a new set of problems. It seems there is a similar effect at play in large systems – maybe each power of 10 you have to rethink radically how you do things.

- Humans so clever in their iniquity. Or why interpreters can never be trusted. For two years, criminals stole sensitive information using malware hidden in individual pixels of ad banners: The javascript sent by the attackers would run through the pixels in the banners, looking for ones with the telltale alterations, then it would turn that tweaked transparency value into a character. By stringing all these characters together, the javascript would assemble a new program, which it would then execute on the target's computer.

- Joe Duffy, whose amazing Midori series is a true geek pleasure, dispenses wisdom in 15 Years of Concurrency: The real big miss, in my opinion, was mobile. This was precisely when the thinking around power curves, density, and heterogeneity should have told us that mobile was imminent, and in a big way. Instead of looking to beefier PCs, we should have been looking to PCs in our pockets. Instead, the natural instinct was to cling to the past and “save” the PC business. This is a classical innovator’s dilemma although it sure didn’t seem like one at the time. And of course PCs didn’t die overnight, so the innovation here was not wasted, it just feels imbalanced against the backdrop of history. Anyway, I digress.

- Relational databases still rule in this DB-Engines Ranking. #1: Oracle. #2: MySQL. #3: Microsoft SQL Server. #4: PostgreSQL. #5: MongoDB. Over the years the rankings have been remarkably stable.

- Microprocessors have so many transistors these days that parts of chips must be turned off to dissipate heat. Not all the transistors can be used at the same time. This is called dark silicon. It turns out the brain uses a similar strategy. Why? We don't know yet. Portions of the brain fall asleep and wake back up all the time: It’s as if tiny portions of the brain are independently falling asleep and waking back up all the time. What’s more, it appears that when the neurons have cycled into the more active, or “on,” state they are better at responding to the world. The neurons also spend more time in the on state when paying attention to a task.

- BMW remotely locks a thief inside a car. What does ownership mean these days? Our things are no longer just our things. They are jointly controlled, which means they are also jointly owned.

- Instance stacking is installing multiple instances of a product on the same server. It can save a lot of money in licensing costs, but it's harder to tune performance. Rudy Panigas has some good advice on how to instance-stack SQL Server: use only the same version of SQL Server; properly allocate memory (MAX and MIN settings) and CPUs per instance; keep them on the same patching level; assign a different disk to each instance.

- Videos from GTAC (Google Test Automation Conference) 2016 are now available.

- Finding similarities between texts is something required for spam detection, for example. How to calculate 17 billion similarities is an exploration of how to determine how similar recipes are to one another. It's a juicy topic. Good discussion on reddit. Here's how Google does it: Detecting Near-Duplicates for Web Crawling. jones1618 explains: The basic idea is that you generate an N-bit "feature hash" for each document (or in your case a recipe). You already have a nice feature set for that. If you numerically sort your recipes by this SimHash, more similar documents are always near each other in the sort order. The only trick is that if you have an N-bit SimHash, you need to repeat the sort for N bitwise rotations of the hash. Still calculating F hashes once for all your recipes and then sorting them N times has got to be much faster than 400M floating point multiplies of your method.
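Here is a minimal Python sketch of the SimHash scheme jones1618 describes, under assumptions of our own: features are whitespace tokens weighted by count, each feature is hashed to 64 bits with BLAKE2b, and near neighbours are found by sorting the fingerprints under each of the N bit rotations and comparing only items within a small window of each other. The `window` and `max_distance` parameters are illustrative choices, not values from Google's paper:

```python
import hashlib
from collections import Counter
from typing import Dict, List, Set, Tuple

BITS = 64


def feature_hash(token: str) -> int:
    """Stable 64-bit hash of a single feature."""
    digest = hashlib.blake2b(token.encode("utf-8"), digest_size=8).digest()
    return int.from_bytes(digest, "big")


def simhash(tokens: List[str], bits: int = BITS) -> int:
    """Each feature votes +weight/-weight on every bit; positive totals become 1s."""
    votes = [0] * bits
    for token, weight in Counter(tokens).items():
        h = feature_hash(token)
        for i in range(bits):
            votes[i] += weight if (h >> i) & 1 else -weight
    return sum(1 << i for i in range(bits) if votes[i] > 0)


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two fingerprints."""
    return bin(a ^ b).count("1")


def rotate(h: int, r: int, bits: int = BITS) -> int:
    """Rotate a fingerprint left by r bits."""
    mask = (1 << bits) - 1
    return ((h << r) | (h >> (bits - r))) & mask


def candidate_pairs(fps: Dict[str, int], max_distance: int = 3,
                    window: int = 2) -> Set[Tuple[str, str]]:
    """Sort under every rotation and compare each item only with its next `window` neighbours."""
    found: Set[Tuple[str, str]] = set()
    for r in range(BITS):
        order = sorted(fps, key=lambda name: rotate(fps[name], r))
        for i, a in enumerate(order):
            for b in order[i + 1 : i + 1 + window]:
                if hamming(fps[a], fps[b]) <= max_distance:
                    found.add(tuple(sorted((a, b))))
    return found
```

Each fingerprint is computed once, and each rotation costs one sort plus a linear scan, instead of comparing every pair of recipes.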

- Some interesting stats on GitHub diffs. Most are small. How we made diff pages three times faster: 81% of viewed diffs contain changes to fewer than ten files; 52% of viewed diffs contain only changes to one or two files; 80% of viewed diffs have fewer than 20KB of patch text; 90% of viewed diffs have fewer than 1000 lines of patch text.

- It turns out async scales better than sync after all. Why My Synchronous API Scaled Better Than My Asynchronous API. A good demonstration of the differences between async and sync approaches to parallelization. One observation is that with a thread pool approach you can limit the number of simultaneous requests so as not to overwhelm a target service. Firing off 200 simultaneous operations might bring a service to its knees, and it could also spike your memory use and cause swapping/paging.
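You can keep the async model and still get the thread pool's protective cap by bounding in-flight requests with a semaphore. A minimal Python asyncio sketch of that pattern: `call_service` is a hypothetical stand-in for the downstream request, and the limit of 10 in the usage below is arbitrary:

```python
import asyncio
from typing import List, Tuple


async def run_bounded(n_requests: int, limit: int) -> Tuple[List[int], int]:
    """Issue n_requests async calls, but never more than `limit` at once.

    Returns the results (in request order) and the peak number in flight.
    """
    semaphore = asyncio.Semaphore(limit)
    in_flight = 0
    peak = 0

    async def call_service(i: int) -> int:
        # Hypothetical downstream call; the sleep stands in for network latency.
        nonlocal in_flight, peak
        in_flight += 1
        peak = max(peak, in_flight)
        try:
            await asyncio.sleep(0.01)
            return i * 2
        finally:
            in_flight -= 1

    async def guarded(i: int) -> int:
        # Only `limit` coroutines can be inside this block at any moment.
        async with semaphore:
            return await call_service(i)

    results = await asyncio.gather(*(guarded(i) for i in range(n_requests)))
    return list(results), peak
```

With `asyncio.run(run_bounded(200, 10))`, all 200 requests are still issued asynchronously, but the downstream service never sees more than 10 at a time, and memory stays proportional to the cap rather than to the burst.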

- Eve Online has its own monthly Economic Report. Intriguing, but there's no dang explanation! The Internet provides. Brendan ‘Nyphur’ Drain in EVE EVOLVED: ANALYSING EVE’S NEW ECONOMIC REPORTS brings the graphs to life, explaining just some of the intricacies of this dynamic economy. And the Reddit group has extensive discussions. Fascinating how real the virtual is. Someday this may be the way a democracy governs.

- Batching requests, giving performance improvements since... forever? 10x speedup utilizing Nagle Algorithm in business application: What this ends up doing is to reduce the network costs. Instead of going to the server once for every request, we’ll go to the server once per 200 ms or every 256 requests. That dramatically reduces network traffic. And the cost of actually sending 256 requests instead of just one isn’t really that different. Note that this gives us a higher latency per request, since we may have to wait longer for the request to go out to the server, but it also gives me much better throughput, because I can process more requests in a shorter amount of time.
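The batching idea fits in a few lines. A minimal, single-threaded Python sketch, assuming the caller invokes `tick()` periodically (a real client would use a timer or background task). The 256-request and 200 ms thresholds come from the post; the `send` callback is a hypothetical stand-in for one network round trip, and `clock` is injectable so the timing logic can be tested:

```python
import time
from typing import Any, Callable, List, Optional


class Batcher:
    """Buffer requests and send them together, Nagle-style (illustrative sketch)."""

    def __init__(self, send: Callable[[List[Any]], None], max_items: int = 256,
                 max_delay: float = 0.2, clock: Callable[[], float] = time.monotonic):
        self.send = send
        self.max_items = max_items
        self.max_delay = max_delay
        self.clock = clock
        self.pending: List[Any] = []
        self.first_enqueued_at: Optional[float] = None

    def submit(self, request: Any) -> None:
        # Remember when the oldest buffered request arrived.
        if not self.pending:
            self.first_enqueued_at = self.clock()
        self.pending.append(request)
        # Size trigger: a full batch goes out immediately.
        if len(self.pending) >= self.max_items:
            self.flush()

    def tick(self) -> None:
        """Time trigger: flush if the oldest request has waited at least max_delay."""
        if self.pending and self.clock() - self.first_enqueued_at >= self.max_delay:
            self.flush()

    def flush(self) -> None:
        if self.pending:
            batch, self.pending = self.pending, []
            self.first_enqueued_at = None
            self.send(batch)  # one round trip for the whole batch
```

Submitting 600 requests produces two round trips of 256, with the remaining 88 going out on the next time-based flush. That extra wait is the latency-for-throughput trade the quote describes.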

- Great summary. Observations from 2016 AWS re:Invent.

- James Hamilton: Vertically-integrated networking equipment, where the ASICs, the hardware, these protocol stacks [were] supplied by single companies, is [like] the way the mainframe used to dominate servers. If you look at where the networking world is, it’s sort of where the server world was 20 or 30 years ago. It started out with, you buy a mainframe. . . and that’s it. And it comes from all one company. The networking world is the same place. And we know what happened in the server world: As soon as you chop up these vertical stacks, you’ve got companies focused on every layer, and they’re innovating together, and they’re all competing. You can get great things happening.

- Just in case you thought we were lacking for distributed queue options. Cherami: Uber Engineering's Durable and Scalable Task Queue in Go: a competing-consumer messaging queue that is durable, fault-tolerant, highly available and scalable. We achieve durability and fault-tolerance by replicating messages across storage hosts, and high availability by leveraging the append-only property of messaging queues and choosing eventual consistency as our basic model. Cherami is also scalable, as the design does not have single bottleneck.

- Very cool story of how AI helped solve a problem that wasn't solvable with earlier approaches. They had been trying since 2001. Deep Learning Reinvents the Hearing Aid. And it doesn't just help the hearing impaired. We'll all have superhero hearing in the bright, bright future.

- Sometimes life just fits together like a jigsaw puzzle. Pieces slide together of their own accord. A while ago I read The Master of Disguise: My Secret Life in the CIA, good book BTW, which talked a lot about the crucial role make-up artistry played in the spy game. They had these amazing-sounding make-up kits and I was just burning to know what they looked like. And here it is: Peter Jackson and Adam Savage Open John Chambers' CIA Make-Up Kit!

- A fun list: 52 things I learned in 2016. I like the one about the Australian musicians who have performed with a synthesiser controlled by a petri dish of live human neurons.

- Here's the database version of "Fast, Good or Cheap. Pick two." The SNOW theorem and latency-optimal read-only transactions. S is for Strict Serializability. N is for Non-blocking. O is for One response per read. W is for Write transactions. No, you can't have all four, but every combination of three out of the four properties is possible. For example, you can have read-only transaction algorithms that satisfy S+O+W, N+O+W, and S+N+O.

- Would you like a better internet? BBR: Congestion-Based Congestion Control: Rethinking congestion control pays big dividends. Rather than using events such as loss or buffer occupancy, which are only weakly correlated with congestion, BBR starts from Kleinrock's formal model of congestion and its associated optimal operating point...BBR is deployed on Google's B4 backbone, improving throughput by orders of magnitude compared with CUBIC. It is also being deployed on Google and YouTube Web servers, substantially reducing latency on all five continents tested to date, most dramatically in developing regions. BBR runs purely on the sender and does not require changes to the protocol, receiver, or network, making it incrementally deployable. It depends only on RTT and packet-delivery acknowledgment, so can be implemented for most Internet transport protocols.
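The heart of BBR's model is two numbers: the bottleneck bandwidth (BtlBw, a windowed max of measured delivery rate) and the round-trip propagation time (RTprop, a windowed min of measured RTT). Their product is the bandwidth-delay product (BDP), the amount of data that fills the pipe without building a queue. Below is a toy Python sketch of those estimators, not Linux's tcp_bbr. Its windows count samples, whereas BBR as published uses roughly ten round trips for BtlBw and ten seconds for RTprop, and the default cwnd gain of 2 should be read as an assumption:

```python
from collections import deque


class BBRModel:
    """Toy estimator of BBR's path model (illustrative only)."""

    def __init__(self, bw_window: int = 10, rtt_window: int = 10):
        self.bw_samples = deque(maxlen=bw_window)    # delivery-rate samples, bytes/s
        self.rtt_samples = deque(maxlen=rtt_window)  # RTT samples, seconds

    def on_ack(self, delivered_bytes: int, interval_s: float, rtt_s: float) -> None:
        """Record one delivery-rate sample and one RTT sample from an ACK."""
        self.bw_samples.append(delivered_bytes / interval_s)
        self.rtt_samples.append(rtt_s)

    @property
    def btl_bw(self) -> float:
        # Windowed max: queues can only make delivery look slower, never faster.
        return max(self.bw_samples)  # assumes at least one ACK has been seen

    @property
    def rt_prop(self) -> float:
        # Windowed min: queues can only make RTT look longer, never shorter.
        return min(self.rtt_samples)

    def bdp_bytes(self) -> float:
        return self.btl_bw * self.rt_prop

    def pacing_rate(self, gain: float = 1.0) -> float:
        """Send at (a multiple of) the estimated bottleneck rate."""
        return gain * self.btl_bw

    def cwnd_bytes(self, cwnd_gain: float = 2.0) -> float:
        """Cap data in flight at a multiple of the estimated BDP."""
        return cwnd_gain * self.bdp_bytes()
```

Unlike loss-based schemes such as CUBIC, nothing here reacts to packet loss. The sender paces at its estimate of the bottleneck rate and keeps roughly one BDP in flight, which is why BBR needs only RTT and delivery acknowledgments from the receiver.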

- An interesting List of Tech Migrations: companies that have switched technologies. Lots of switching away from X to Go. PostgreSQL and MySQL are also common switch targets.

- Wayfair with an extensive analysis of early flushing and switching to HTTP/2: Bottom line, we got a 15-20% decrease in the metrics we care about from early flush, and 5-10% from h2.

- Programmers on hearing this just nod sagely. It's always about powers of two. Brain Computation Is Organized via Power-of-Two-Based Permutation Logic: the origin of intelligence is rooted in a power-of-two-based permutation logic (N = 2^i - 1), producing specific-to-general cell-assembly architecture capable of generating specific perceptions and memories, as well as generalized knowledge and flexible actions. We show that this power-of-two-based permutation logic is widely used in cortical and subcortical circuits across animal species and is conserved for the processing of a variety of cognitive modalities including appetitive, emotional and social information.
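The formula is a counting argument. With i distinct inputs there are 2^i - 1 non-empty combinations of them, running from the i most specific single-input assemblies up to the one general assembly that responds to all of them. A small Python sketch of that counting (the input labels are hypothetical):

```python
from itertools import combinations
from typing import List, Sequence, Tuple


def cell_assemblies(inputs: Sequence[str]) -> List[Tuple[str, ...]]:
    """All non-empty combinations of inputs, ordered from specific (1 input) to general (all)."""
    return [
        combo
        for size in range(1, len(inputs) + 1)
        for combo in combinations(inputs, size)
    ]


if __name__ == "__main__":
    # The number of assemblies matches N = 2^i - 1 for each input count i.
    for i in range(1, 6):
        n = len(cell_assemblies([f"input{k}" for k in range(i)]))
        print(i, n, 2 ** i - 1)
```

For two inputs A and B this yields A, B, and A+B: three assemblies, that is, 2^2 - 1.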

- The devil is in the interaction between the details. How Complex Systems Fail: complex systems are always ridden with faults, and will fail when some of these faults conspire and cluster. In other words, complex systems constantly dwell on the verge of failures/outages/accidents.

- Oh great. Now I'm going to have nightmares. Meet the World’s First Completely Soft Robot.

- You can have your very own open source research processor: OpenPiton - an expandable manycore platform which includes RTL, thousands of tests, and implementation scripts. This work: rethinks the design of microprocessors specifically for data center use along with how microprocessors are affected by the novel economic models that have been popularized by IaaS clouds.

- github/orchestrator: a MySQL replication topology management and visualization tool, allowing for: Discovery, Refactoring, Recovery.

- donnemartin/awesome-aws: A curated list of awesome AWS libraries, open source repos, guides, blogs, and other resources.

- deepmind/lab: a 3D learning environment based on id Software's Quake III Arena via ioquake3 and other open source software.

- OpenHFT: Open Source components originating from the HFT world.

- stevenringo/reinvent-2016-youtube.md: Links to YouTube recordings of AWS re:Invent sessions

- anywhichway/reasondb: A 100% native JavaScript automatically synchronizing object database: SQL like syntax, swappable persistence engines, asynchronous cursors, streaming analytics, 18 built-in plus in-line fat arrow predicates, predicate extensibility, indexed computed values, joins, nested matching, statistical sampling and more.

- Service-Oriented Sharding with Aspen: a sharded blockchain protocol designed to securely scale with increasing number of services. Aspen shares the same trust model as Bitcoin in a peer-to-peer network that is prone to extreme churn containing Byzantine participants. It enables introduction of new services without compromising the security, leveraging the trust assumptions, or flooding users with irrelevant messages.