Stuff The Internet Says On Scalability For July 22nd, 2016

Hey, it's HighScalability time:

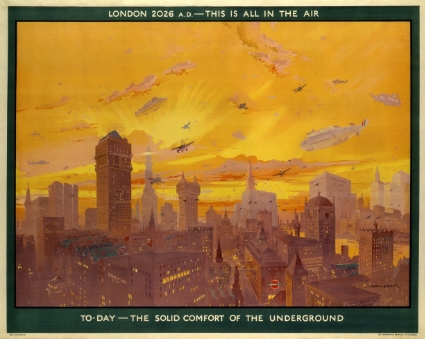

It's not too late London. There's still time to make this happen. If you like this sort of Stuff then please support me on Patreon.

- 40%: energy Google saves in datacenters using machine learning; 2.3: times more energy knights in armor spend than when walking; 1000x: energy efficiency of 3D carbon nanotubes over silicon chips; 176,000: searchable documents from the Founding Fathers of the US; 93 petaflops: China’s Sunway TaihuLight; $800m: Azure's quarterly revenue; 500 Terabits per square inch: density when storing a bit with an atom; 2 billion: Uber rides; 46 months: jail time for accessing a database;

- Quotable Quotes:

  - Lenin: There are decades where nothing happens; and there are weeks where decades happen.

  - Nitsan Wakart: I have it from reliable sources that incorrectly measuring latency can lead to losing ones job, loved ones, will to live and control of bowel movements.

  - Margaret Hamilton~ part of the culture on the Apollo program “was to learn from everyone and everything, including from that which one would least expect.”

  - @DShankar: Basically @elonmusk plans to compete with -all vehicle manufacturers (cars/trucks/buses) -all ridesharing companies -all utility companies

  - @robinpokorny: ‘Number one reason for types is to get idea what the hell is going on.’ @swannodette at #curryon

  - Dan Rayburn: Some have also suggested that the wireless carriers are seeing a ton of traffic because of Pokemon Go, but that’s not the case. Last week, Verizon Wireless said that Pokemon Go makes up less than 1% of its overall network data traffic.

  - @timbaldridge: When people say "the JVM is slow" I wonder to what dynamic, GC'd, runtime JIT'd, fully parallel, VM they are comparing it to.

  - @papa_fire: “Burnout is when long term exhaustion meets diminished interest.” May be the best definition I’ve seen.

  - Sheena Josselyn: Linking two memories was very easy, but trying to separate memories that were normally linked became very difficult

  - @mstine: if your microservices must be deployed as a complete set in a specific order, please put them back in a monolith and save yourself some pain

  - teaearlgraycold: Some people, when confronted with a problem, think “I know, I'll use regular expressions.” Now they have two problems.

  - Erik Duindam: I bake minimum viable scalability principles into my app.

  - Hassabis: It [DeepMind] controls about 120 variables in the data centers. The fans and the cooling systems and so on, and windows and other things. They were pretty astounded.

  - @WhatTheFFacts: In 1989, a new blockbuster store was opening in America every 17 hours.

  - praptak: It [SRE] changes the mindset from "Failure? Just log an error, restore some 'good'-ish state and move on to the next cool feature." towards "New cool feature? What possible failures will it cause? How about improving logging and monitoring on our existing code instead?"

  - plusepsilon: I transitioned from using Bayesian models in academia to using machine learning models in industry. One of the core differences in the two paradigms is the "feel" when constructing models. For a Bayesian model, you feel like you're constructing the model from first principles. You set your conditional probabilities and priors and see if it fits the data. I'm sure probabilistic programming languages facilitated that feeling. For machine learning models, it feels like you're starting from the loss function and working back to get the best configuration

- Isn't it time we admit Dark Energy and Dark Matter are simply optimizations in the algorithms running the sim of our universe? Occam's razor. Even the Eldritch engineers of our creation didn't have enough compute power to simulate an entire universe. So they fudged a bit. What's simpler than making 90 percent of matter in our galaxy invisible?

- Do you have one of these? Google has a Head of Applied AI.

- Uber with a great two article series on their stack. Part uno; Part deux: Our business runs on a hybrid cloud model, using a mix of cloud providers and multiple active data centers...We currently use Schemaless (built in-house on top of MySQL), Riak, and Cassandra...We use Redis for both caching and queuing. Twemproxy provides scalability of the caching layer without sacrificing cache hit rate via its consistent hashing algorithm. Celery workers process async workflow operations using those Redis instances...for logging, we use multiple Kafka clusters...This data is also ingested in real time by various services and indexed into an ELK stack for searching and visualizations...We use Docker containers on Mesos to run our microservices with consistent configurations scalably...Aurora for long-running services and cron jobs...Our service-oriented architecture (SOA) makes service discovery and routing crucial to Uber’s success...we’re moving to a pub-sub pattern (publishing updates to subscribers). HTTP/2 and SPDY more easily enable this push model. Several poll-based features within the Uber app will see a tremendous speedup by moving to push....we’re prioritizing long-term reliability over debuggability...Phabricator powers a lot of internal operations, from code review to documentation to process automation...We search through our code on OpenGrok...We built our own internal deployment system to manage builds. Jenkins does continuous integration. We combined Packer, Vagrant, Boto, and Unison to create tools for building, managing, and developing on virtual machines. We use Clusto for inventory management in development. 
Puppet manages system configuration...We use an in-house documentation site that autobuilds docs from repositories using Sphinx...Most developers run OSX on their laptops, and most of our production instances run Linux with Debian Jessie...At the lower levels, Uber’s engineers primarily write in Python, Node.js, Go, and Java...We rip out and replace older Python code as we break up the original code base into microservices. An asynchronous programming model gives us better throughput. And lots more.
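The consistent hashing mentioned for the caching layer is worth a quick look, since it is why adding a cache node does not wipe out the hit rate. What follows is a generic sketch, not Twemproxy's or Uber's code: the `HashRing` class, the `replicas` count, and the `cache-a` style node names are all made up for illustration.

```python
import bisect
import hashlib


class HashRing:
    """Generic consistent-hash ring (illustrative; not Twemproxy's implementation)."""

    def __init__(self, nodes, replicas=100):
        # Each node is placed on the ring at `replicas` pseudo-random points.
        ring = []
        for node in nodes:
            for i in range(replicas):
                ring.append((self._hash(f"{node}#{i}"), node))
        ring.sort()
        self._points = [h for h, _ in ring]
        self._owners = [n for _, n in ring]

    @staticmethod
    def _hash(key):
        # First 8 bytes of MD5 as an integer; any well-mixed hash would do.
        return int.from_bytes(hashlib.md5(key.encode()).digest()[:8], "big")

    def node_for(self, key):
        # A key belongs to the first ring point clockwise from its hash.
        idx = bisect.bisect(self._points, self._hash(key)) % len(self._points)
        return self._owners[idx]


keys = [f"user:{i}" for i in range(1000)]
before = HashRing(["cache-a", "cache-b", "cache-c"])
after = HashRing(["cache-a", "cache-b", "cache-c", "cache-d"])
moved = [k for k in keys if before.node_for(k) != after.node_for(k)]
print(f"{len(moved)} of {len(keys)} keys changed owner after adding a node")
```

With plain `hash(key) % node_count`, going from three nodes to four would remap most keys. On the ring, only the keys that now fall to the new node move, so the rest of the cache stays warm.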

- Does Google have an Android strategy tax? That worked out for Microsoft. Google had an Oculus competitor in the works — but it nixed the project: different VR project was germinated inside the X research lab with around 50 employees working on it...more critically, that project was creating a separate operating system for the device, unique from Android.

- What is Serverless Computing and Why is it Important. Travis Reeder with a great description of serverless technology, a technology Iron.io helped to create. Some nuggets: The trend is pretty clear, the unit of work is getting smaller and smaller. We’ve gone from monoliths to microservices to functions...PaaS could be considered the first iteration of serverless, where you still have to think a little bit about the servers (how many vms do you need?) but you don’t have to manage them...There will probably always be a place for both microservices and FaaS. Some things you just can’t do with functions, like keep an open websocket connection for a bot for instance...Serverless is not all roses...Complexity increases. The smaller we take things, the more complex the entire system becomes...lack of tooling. There isn’t much out there that can help you manage and monitor your functions.

- Videos for COLT 16 (Conference on Learning Theory) are now available.

- How long does it take to test 25 Billion web pages: I’m not saying that it isn’t possible to test 25,000,000,000 pages. In fact massive companies could perform such a task in no time at all. But I also think doing it is a ridiculous idea. And when I say "ridiculous" I mean it in the strictest sense of the word. No matter how they performed a project such as using 351 instances across a decade or 3,510 instances for less than a year, or something in between, doing so is an ignorant, uninformed, and useless pursuit. It indicates a woeful lack of knowledge and experience in development, accessibility, and statistics.

- Whenever we think something is fixed in place we are almost always wrong. The earth and the sun, of course. But not long ago the idea of plate tectonics was laughed at because the surface of the earth was supposedly fixed. So wrong. Zoologists once assumed animal numbers largely remained steady; now we know they can fluctuate dramatically. People like their cognitive ease.

- Broadband != mobile. Skype is Transitioning to a More Modern, Mobile-Friendly Architecture. Skype began as an innovative peer-to-peer solution that worked wellish over broadband. In the mobile world that model doesn't work as well. Connectivity is transient. CPU is constrained. Battery life is at a premium. So Skype has been transitioning to a cloud-based solution. A benefit looks to be agility. Centralizing control makes it easier to release new features. Clients shrink in size and complexity as functionality moves to the cloud. And some users report it's faster.

- Interesting interview. Peter Bailis on the Data Community’s Identity Crisis. Why work in academia? Solving problems is often not a number of engineers or number of resources thing. It's the number of years spent going deep into a problem. Getting to that aha moment.

- Creating a minimum viable product doesn't mean being blind to scalability. How I built an app with 500,000 users in 5 days on a $100 server. Erik Duindam on what happens if you do: "One person who won’t make this mistake again is Jonathan Zarra, the creator of GoChat for Pokémon GO. The guy who reached 1 million users in 5 days by making a chat app for Pokémon GO fans. Last week, as you can read in the article, he was talking to VCs to see how he could grow and monetize his app. Now, GoChat is gone. At least 1 million users lost and a lot of money spent. A real shame for a genius move." And he tells how he built GoSnaps: "I took a NodeJS boilerplate project for hackathons and used a MongoDB database without any form of caching. No Redis, no Varnish, no fancy Nginx settings, nothing. The actual iOS app was built in native Objective-C code, with some borrowed Apple Maps-related code from Unboxd, our main app. So how did I make it scalable? By not being lazy."

- A clean and clear approach. Handling iOS app states with a state machine.

- It's often the smallest of things. Looking at this regular expression would you think it could bring a site down? ^[\s\u200c]+|[\s\u200c]+$. Not likely. But it did. Stack Exchange Outage Postmortem: The direct cause was a malformed post that caused one of our regular expressions to consume high CPU on our web servers. The post was in the home page list, and that caused the expensive regular expression to be called on each home page view. This caused the home page to stop responding fast enough. Since the home page is what our load balancer uses for the health check, the entire site became unavailable since the load balancer took the servers out of rotation.
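Why can such a small pattern hurt? On a backtracking regex engine, the `[\s\u200c]+$` branch gets retried from every position inside a long run of whitespace that does not reach the end of the string, so the work grows roughly with the square of the run length. Here is a minimal sketch of the same trim done with two index scans instead. The `trim` and `is_trimmable` helpers are hypothetical names, not Stack Exchange's code, and the sketch assumes Python's `str.isspace` is close enough to `\s` for this purpose.

```python
import re

# The pattern from the postmortem, kept here only for comparison.
TRIM_RE = re.compile(r"^[\s\u200c]+|[\s\u200c]+$")


def is_trimmable(ch):
    # Whitespace, or the zero-width non-joiner U+200C the pattern also strips.
    return ch.isspace() or ch == "\u200c"


def trim(text):
    """Strip leading and trailing trimmable characters in one pass from each end."""
    start, end = 0, len(text)
    while start < end and is_trimmable(text[start]):
        start += 1
    while end > start and is_trimmable(text[end - 1]):
        end -= 1
    return text[start:end]


sample = " \u200c\thello   world \u200c\n"
print(repr(trim(sample)))
print(trim(sample) == TRIM_RE.sub("", sample))
```

Each character is looked at a bounded number of times, so a post with tens of thousands of spaces in the middle costs the same as any other post of that length.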

- Are you creative? The Mind of an Architect. All kinds of creative people follow a similar pattern: interesting and arresting personality; non-conforming; high aspirations for self; prefer complexity and ambiguity over simplicity and order; capacity to make unexpected connections and see patterns in daily life; independent; courageous; self-centered. What isn't all that important? Intelligence. Average intelligence will do.

- The End of Microservices. Seems like a picture of the future formed by projecting the past forward. Henry Ford's faster horse instead of the car. That's usually not how it works. Hopefully we can do better.

- A fascinating indepth history that I knew very little about. How Charles Bachman Invented the DBMS, a Foundation of Our Digital World: Fifty-three years ago a small team working to automate the business processes of the General Electric Company built the first database management system. The Integrated Data Store—IDS—was designed by Charles W. Bachman, who won the ACM's 1973 A.M. Turing Award for the accomplishment.

- My god, it's full of tiers! Nginx: we believe it’s crucial to adopt a four-tier (client, delivery, aggregation, services) application architecture in which applications are developed and deployed as sets of microservices. Some best practices from Netflix: Create a Separate Data Store for Each Microservice; Keep Code at a Similar Level of Maturity; Do a Separate Build for Each Microservice; Deploy in Containers; Treat Servers as Stateless.

- Hotels with three or even two stars have a better value for money overall. That's the kind of insight you can learn from Machine Learning over 1M hotel reviews: After scraping more than 1 million of reviews from TripAdvisor...we created a pipeline that combines both classifiers (aspect and sentiment)...We then indexed the results with Elasticsearch and loaded them into Kibana in order to generate beautiful visualizations.

- Good discussion. Is server-side rendering now obsolete? should I only be making "single page applications"? If you expected SPAs to be the winner you might be surprised.

- Building a Rails Notification Queue 3: Queue Processing: Using it [notification solution], we have processed millions of notifications without any loss of code quality or tracking. In this short series, we will look at how to build a great notification queue that you can use in your systems.

- An unusual business model. How we generated $712,076.64 in revenue with two people in a little over two years: We charge $100 per employee, one-time, for life. That’s it. So if you’ve got 20 employees, it’s $2,000. You pay that once and that’s it. No recurring costs, maintenance fees, etc. The only time you ever pay again is if you hire someone new. Then it’s $100 for that new person.

- Rob Ewaschuk with an awesome exploration of My Philosophy on Alerting: Pages should be urgent, important, actionable, and real; They should represent either ongoing or imminent problems with your service; Err on the side of removing noisy alerts; You should almost always be able to classify the problem into one of: availability & basic functionality; latency; correctness (completeness, freshness and durability of data); and feature-specific problems; Symptoms are a better way to capture more problems more comprehensively and robustly with less effort; Include cause-based information in symptom-based pages or on dashboards, but avoid alerting directly on causes; The further up your serving stack you go, the more distinct problems you catch in a single rule; If you want a quiet oncall rotation, it's imperative to have a system for dealing with things that need timely response.

- Videos from SREcon are available. Lots of great content.

- If you would like to compare the cost of Lambda, Azure functions, Google functions, and IBM OpenWhisk, well you can't. Only Lambda currently has pricing. But when you can there's a Serverless Cost Calculator.

- Orleans and Akka Actors: A Comparison: Orleans offers a programming model that integrates seamlessly into non-distributed methodology and programmer skills, it allows scaling out beyond the limits of a single computer without having to deal with the difficulties of writing a distributed application...Akka provides a very simple and effective abstraction for modeling distributed systems—the Actor Model—and offers this low-level tool together with higher abstractions to the user. The philosophy is that the user must understand distributed programming in order to make their own choices regarding implementation trade-offs

- Analyzing Genomics Data at Scale using R, AWS Lambda, and Amazon API Gateway: Calculating survival statistics on every gene is an example of an “embarrassingly parallel” problem. SparkR on Amazon EMR is one possible implementation solution, but Lambda provides significant advantages for GenePool in this use case: as scientists run analyses in GenePool, Lambda is able to scale up dynamically in real time and meet the spike in requests to calculate statistics on tens of thousands of genes, and millions of variants. In addition, you do not have to pay for idle compute time with Lambda and you do not have to manage servers.

- Is natural language processing the one thing missing from your take-over-the-world bot? Google has put all their machine learning to work in a new API: Cloud Natural Language API. Not sure of the pricing. And if you build around this API and Google pulls it you are screwed if it's your only option.

- A primer on particle accelerators: What follows is a primer on three different types of particle accelerators: synchrotrons, cyclotrons and linear accelerators, called linacs. Cyclotrons accelerate particles in a spiral pattern, starting at their center. Synchrotrons typically feature a closed pathway that takes particles around a ring. While circular accelerators may require many turns to accelerate particles to the desired energy, linacs get particles up to speed in short order.

- Preprocessing arrays for fast sorted-subarray queries: The time to preprocess the input is O(n log n), unsurprising since we have to comparison sort somewhere. But the space for the resulting data structure is only O(n), and it allows sorted-subarray queries in time linear in the length of the subarray.

- Voxels for audio? Soon you'll be able to program sound like you program light. Researchers use acoustic voxels to embed sound with data: using a computational approach to inversely design acoustic filters that can fit within an arbitrary 3D shape while achieving target sound filtering properties. Led by Computer Science Professor Changxi Zheng, the team designed acoustic voxels, small, hollow, cube-shaped chambers through which sound enters and exits, as a modular system. Like Legos, the voxels can be connected to form an infinitely adjustable, complex structure.

- Fixing Coordinated Omission in Cassandra Stress: Coordinated Omission...describe a common measurement anti pattern where the measuring system inadvertently coordinates with the system under measurement. This results in greatly skewed measurements...The change is mostly tedious, but importantly it introduces the notion of split response/service/wait histograms...So we got 3 load generating modes, and we've broken up latency into 3 components, the numbers are in histograms...it seems as if the server was coping alright with the load to begin with, but hit a bump (GC? compaction?) after 225 seconds, and an even larger bump slightly later. That's lovely to know.
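The response/service/wait split is easier to see with numbers. Below is a toy model, not the Cassandra Stress code: it assumes a single-connection load generator that intends to send one request every `interval` time units but cannot send the next request until the previous one returns. The `split_latencies` function and the `Sample` tuple are hypothetical names for illustration.

```python
from collections import namedtuple

# wait: delay before the request could be sent; service: time on the server;
# response: what a user on the intended schedule would have experienced.
Sample = namedtuple("Sample", "wait service response")


def split_latencies(service_times, interval):
    """Replay requests on a fixed schedule over one blocking connection."""
    clock = 0  # time at which the connection becomes free
    samples = []
    for i, service in enumerate(service_times):
        intended = i * interval          # when the request should have started
        actual = max(intended, clock)    # when it could actually start
        finished = actual + service
        samples.append(Sample(actual - intended, service, finished - intended))
        clock = finished
    return samples


# One slow request (10 units) in a stream scheduled every 2 units.
for s in split_latencies([1, 1, 10, 1, 1], interval=2):
    print(s)
```

Looking only at service time, one request in five was slow. Measured from the intended start time, the two requests queued behind the stall were slow too, and that is the part a coordinating load generator silently omits.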

- Excellent explanation of How Cassandra’s inner workings relate to performance.

- Riposte: An Anonymous Messaging System Handling Millions of Users: a new system for anonymous broadcast messaging. Riposte is the first such system, to our knowledge, that simultaneously protects against traffic-analysis attacks, prevents anonymous denial-of-service by malicious clients, and scales to million-user anonymity sets. To achieve these properties, Riposte makes novel use of techniques used in systems for private information retrieval and secure multi-party computation. For latency-tolerant workloads with many more readers than writers (e.g. Twitter, Wikileaks), we demonstrate that a three-server Riposte cluster can build an anonymity set of 2,895,216 users in 32 hours.

- A Wait-Free Stack: In this paper, we describe a novel algorithm to create a concurrent wait-free stack. To the best of our knowledge, this is the first wait-free algorithm for a general purpose stack...The crux of our wait-free implementation is a fast pop operation that does not modify the stack top; instead, it walks down the stack till it finds a node that is unmarked. It marks it but does not delete it. Subsequently, it is lazily deleted by a cleanup operation.

- lambci/lambci: a package you can upload to AWS Lambda that gets triggered when you push new code or open pull requests on GitHub and runs your tests (in the Lambda environment itself) – in the same vein as Jenkins, Travis or CircleCI.

- BuntDB: a low-level, in-memory, key/value store in pure Go. It persists to disk, is ACID compliant, and uses locking for multiple readers and a single writer. It supports custom indexes and geospatial data. It's ideal for projects that need a dependable database and favor speed over data size.

- Unlocking Ordered Parallelism with the Swarm Architecture: Swarm is a parallel architecture that exploits ordered parallelism. It executes tasks speculatively and out of order and can scale to large core counts and speculation windows. The authors evaluate Swarm on graph analytics, simulation, and database benchmarks. At 64 cores, Swarm outperforms sequential implementations of these algorithms by 43 to 117 times and state-of-the-art software-only parallel algorithms by 3 to 18 times.