Part 1 of Thinking Serverless: How New Approaches Address Modern Data Processing Needs

This is a guest repost by Ken Fromm, a 3x tech co-founder — Vivid Studios, Loomia, and Iron.io.

First I should mention that of course there are servers involved. I’m just using the term that popularly describes an approach and a set of technologies that abstracts job processing and scheduling from having to manage servers. In a post written for ReadWrite back in 2012 on the future of software and applications, I described “serverless” as the following.

The phrase “serverless” doesn’t mean servers are no longer involved. It simply means that developers no longer have to think that much about them. Computing resources get used as services without having to manage around physical capacities or limits. Service providers increasingly take on the responsibility of managing servers, data stores and other infrastructure resources…Going serverless lets developers shift their focus from the server level to the task level. Serverless solutions let developers focus on what their application or system needs to do by taking away the complexity of the backend infrastructure.

At the time of that post, the term “serverless” was not all that well received, as evidenced by the comments on Hacker News. With the introduction of a number of serverless platforms and a significant groundswell on the wisdom of using microservices and event-driven architectures, that backlash has fortunately subsided.

A Sample Use Case

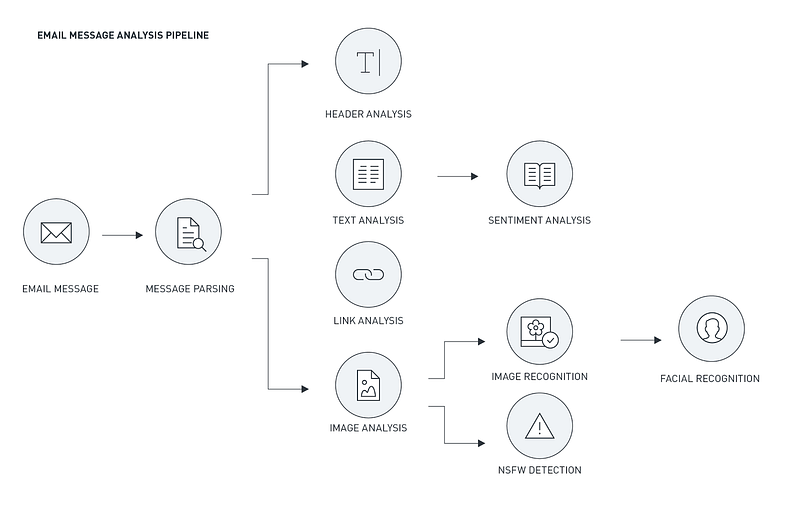

Since it is useful to have an example in mind as I discuss issues and concerns in developing a serverless app, I will use the example of a serverless pipeline for processing email and detecting spam. It is event-driven in that when an email comes in, it will spawn a series of jobs or functions intended to operate specifically on that email.

In this pipeline, you may have tasks that perform parsing of text, images, links, mail attributes, and other items or embedded objects in the email. Each item or element might have different processing requirements which in turn would entail one or more separate tasks as well as even its own processing pipeline or sequence. An image link, for example, might be analyzed across several different processing vectors to determine the content and veracity of the image. Depending on the message scoring and results — spam or not — various courses of actions will then be taken, which would likely, in turn, involve other serverless functions.
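As a rough sketch of the fan-out described above, the following assumes an incoming email is represented as a dictionary and that each kind of element maps to one task. The field names (`id`, `elements`) and task names are hypothetical, chosen only for illustration, and don't correspond to any particular platform.

```python
from typing import Dict, List, Tuple

# Hypothetical mapping from an element type found in an email
# to the name of the task that would process it.
TASKS_BY_ELEMENT: Dict[str, str] = {
    "text": "parse_text",
    "image": "analyze_image",
    "link": "check_link",
    "headers": "inspect_headers",
}


def plan_tasks(email: dict) -> List[Tuple[str, dict]]:
    """Return one (task_name, payload) pair per element in the email."""
    planned = []
    for element_type, items in email.get("elements", {}).items():
        task_name = TASKS_BY_ELEMENT.get(element_type)
        if task_name is None:
            continue  # no task registered for this element type
        for item in items:
            # Each payload carries everything the task needs for this one item.
            payload = {"email_id": email["id"], "item": item}
            planned.append((task_name, payload))
    return planned
```

In a real pipeline, each planned pair would be handed to the platform's queue or invocation API rather than returned as a list.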

Thinking at the Task Level

The unit of scale within a serverless environment is the task or job. It is an instantiation and execution of a finite amount of processing around a particular workload. Task processing has existed since the beginning of programming and so to some, it may seem as if not a lot is new. But given the highly distributed nature and abstracted manner in which workloads are processed, it is useful to have a broad understanding across many levels of the process.

Synchronous vs Asynchronous

While the nature of a task’s processing, whether it’s synchronous or asynchronous, is more of a platform issue, it is an important element to consider at the task level. Traditional worker and job processing systems have largely been asynchronous, meaning that the calling process does not maintain a persistent connection to the entity or component performing the task processing. Jobs are queued up and, as such, may not run instantly. The only specific connection between the calling function and the processor is queuing the task up for running. (Note that certain platforms may allow for introspection of tasks to obtain status, but via API calls rather than direct or persistent connections.)

Many of the new serverless platforms allow for synchronous processing, whereby the connection is maintained and the client waits while the function is processing. The advantage of synchronous processing is that a result may be obtained directly from the processing platform, whereas with asynchronous processing, obtaining results has to be done as an independent effort. I’ll go into more detail in the platform section, but a general rule is that synchronous processing is appropriate for lightweight functions (similar to an API call to obtain weather information). Asynchronous processing is better for longer, more involved processing jobs (audio transcription, or processing a set of events as a small batch job), as well as for cases where the application, component, or function initiating the process is not the one that processes the results.
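To make the distinction concrete, here is a small in-process sketch. It assumes an in-memory queue and dictionary as stand-ins for a platform's task queue and results store. All of the names (`invoke_sync`, `invoke_async`, `run_worker_once`, `get_weather`) are hypothetical and not taken from any real platform API.

```python
import queue
import uuid
from typing import Callable, Dict

task_queue: "queue.Queue[dict]" = queue.Queue()  # stands in for a platform queue
results: Dict[str, str] = {}                     # stands in for a results store


def invoke_sync(func: Callable[[dict], str], payload: dict) -> str:
    """Synchronous: the caller blocks and receives the result directly."""
    return func(payload)


def invoke_async(payload: dict) -> str:
    """Asynchronous: the caller only enqueues the task and gets an id back."""
    task_id = str(uuid.uuid4())
    task_queue.put({"id": task_id, "payload": payload})
    return task_id


def run_worker_once(func: Callable[[dict], str]) -> None:
    """A worker picks up one queued task at some later point and stores its result."""
    task = task_queue.get_nowait()
    results[task["id"]] = func(task["payload"])


def get_weather(payload: dict) -> str:
    # Placeholder for a lightweight function; returns a canned answer.
    return f"sunny in {payload['city']}"
```

With the asynchronous path, the caller holds only a task id. Fetching the result (here, a lookup in `results`) is a separate step, possibly performed by a different component.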

Stateless

One of the core tenets in developing microservices and/or serverless functions, regardless of the processing approach, is that each service or function should be considered stateless. Stateless refers to each task being a separate and distinct processing request containing enough information on its own to fulfill this request. Services and functions should not store any unique software configuration or state within them. Any configuration data should come from outside the function, commonly as part of the task payload or via a config capability within the platform. The function should be used solely for its computational resources, persisting only for the processing of a singular workload.

Additionally, there should be a distinct beginning state and end state, and the service or function should process each payload in the same manner. Borrowing from the principles of clean code, bad code (and likewise bad microservices and serverless functions) tries to do too much. Clean code, and likewise clean microservices and functions, should be focused and largely conform to the Single Responsibility Principle (SRP). A good way of thinking about serverless functions is that each function should have one and only one dimension or vector of change. In other words, if there are multiple ways that a function might be extended (image analysis that would check for multiple characteristics, for example), then it’s likely that there should be two or more distinct functions, one for each vector.

In the use case we’re using, each email is a separate event, and so each would have a separate sequence of tasks. Each task would bear a payload that provides the data for each task or function to process.
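A minimal sketch of a stateless task for the email example might look like the following. It assumes the configuration (a word list and a threshold) travels inside the payload. The payload layout and the `score_text` name are hypothetical, and the scoring rule is deliberately simplistic.

```python
def score_text(payload: dict) -> dict:
    """Score one email body using only what the payload provides."""
    config = payload["config"]             # configuration arrives with the task
    body = str(payload["body"]).lower()
    hits = [word for word in config["spam_words"] if word in body]
    return {
        "email_id": payload["email_id"],
        "score": len(hits),
        "is_spam": len(hits) >= config["threshold"],
    }
```

Because the function reads nothing from module-level state and keeps nothing between calls, running it twice with the same payload yields the same result, and any worker can pick up any task.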

Ephemeral

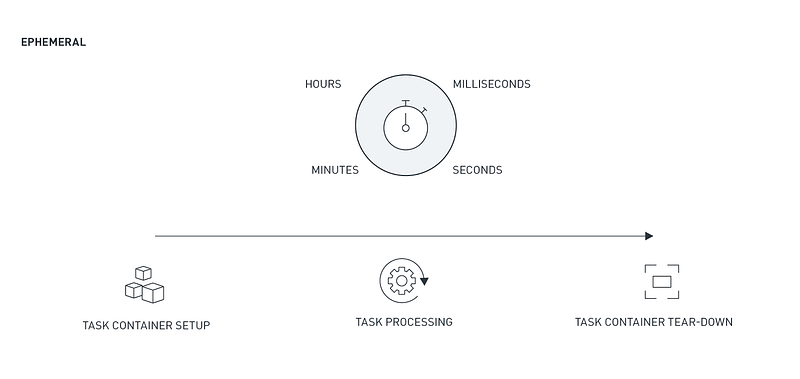

Serverless functions are also ephemeral — meaning that they persist for a limited period of time. The basis of a serverless application is largely around event handling and the task processing that takes place in service of these events. The advent of powerful container technology makes it so that tasks can be processed in a distributed environment with the decisions on where to run made at runtime.

In other words, the task processing essentially becomes container processing with the containers set up and removed on a task by task basis. (For greater insights into containers and how they fit within a serverless platform, you can read several past articles I co-wrote on the topic. They go into great detail on why containers and highly scalable task processing go hand in hand. You can find the posts here and here.) By way of example, each task in the email processing example only persists to perform a particular action for a specific email. After it completes, the task and the container should terminate.

There may be situations where persistent or long-running processes are needed, as in the case of application servers or API servers, but these fall outside of a serverless paradigm. In most cases where you feel you may need long-running tasks, it is more than likely that there are ways to avoid this overhead. For example, serverless platforms, message queues, or other components might be able to accommodate any routing needs. Likewise, scheduled tasks might be able to provide regular status checks or processing cycles. An example here is consolidating and processing streaming data from any number of IoT devices. If data is collected in one or more queues or databases, scheduled jobs can run on a periodic (and frequent) basis, look at the data in a queue or database, and initiate one or more sub-tasks to perform the consolidation and processing of each data slice.
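The IoT example above can be sketched as a short-lived scheduled run that drains whatever has accumulated and plans one sub-task per slice. This assumes the pending readings are available as a plain list standing in for a queue or database. The function names and the `consolidate` task name are hypothetical.

```python
from typing import List


def slice_readings(readings: List[dict], slice_size: int) -> List[List[dict]]:
    """Split collected readings into fixed-size slices, one per sub-task."""
    if slice_size <= 0:
        raise ValueError("slice_size must be positive")
    return [readings[i:i + slice_size] for i in range(0, len(readings), slice_size)]


def scheduled_run(pending: List[dict], slice_size: int = 100) -> List[dict]:
    """One scheduled run: take what is pending and plan the sub-tasks."""
    batch = list(pending)   # copy everything collected so far
    pending.clear()         # the next run starts from an empty backlog
    return [
        {"task": "consolidate", "readings": chunk}
        for chunk in slice_readings(batch, slice_size)
    ]
```

Each run finishes quickly and holds no state of its own, so nothing needs to stay alive between schedule ticks.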

Note that in synchronous and/or real-time serverless processing scenarios, the container may not terminate after each task, largely for performance reasons. The containers may persist from task to task but their state and storage will be erased and reset such that each task or event-processing cycle is isolated and ephemeral.

Idempotent

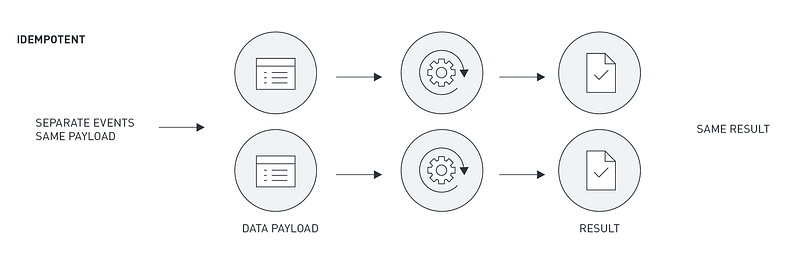

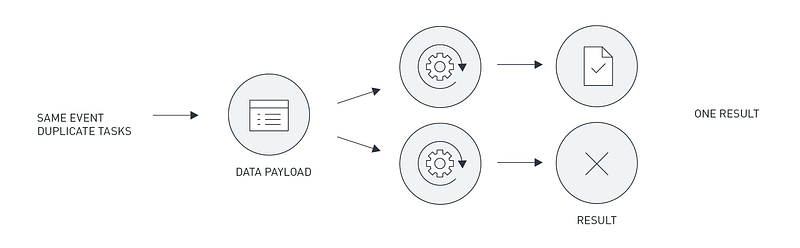

Idempotence is a critical attribute to build into microservices and serverless functions. At the base level, it is the ability to run the same task and get the same result. It is also the property that multiple identical requests have the same effect as a single request. It’s this second definition that is critical to design for when tasks are operating in a highly concurrent and asynchronous manner. In any job processing environment, tasks may not complete for any number of reasons: server crashes, resource limits, third-party service timeouts, task timeouts, and more.

In other cases, a task may complete but a duplicate processing request for the same payload might have been invoked. An example of this is a queue registering a time-out of a message because the task might still be processing a request (and therefore didn’t delete or unreserve the message in time). As a result, the queue might trigger another processing request for that message/payload.

If a task just goes ahead and processes the payload (puts it on a queue or writes it to a database, for instance), it may have bad effects, especially in a transactional situation. For example, two orders may go out. It is paramount then to make sure that only one request gets processed for the same payload. It is for this reason that developers working in a serverless platform (as in most other processing worlds) put effort into performing checks prior to processing and/or prior to writing or outputting the results.

Another way of thinking about this, in the words of one developer friend, is “Think about it as if a server crashed while the task is in mid-processing and the task was retried. Or if it just gets queued or scheduled twice. What do you need to do to make sure you’re not overwriting data, adding a duplicate transaction, or generally mucking with things because it ran again.” There, simple. Check and validate at the appropriate points in the processing cycle to make sure the work hasn’t already been performed.
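One common way to perform such a check is to derive a key from the payload and record it alongside the result. The sketch below assumes an in-memory dictionary as a stand-in for a durable store shared by all workers. The names `processed`, `idempotency_key`, and `place_order` are hypothetical.

```python
import hashlib
import json
from typing import Dict

processed: Dict[str, dict] = {}  # stands in for a durable store shared by workers


def idempotency_key(payload: dict) -> str:
    """Derive a stable key from the payload contents."""
    canonical = json.dumps(payload, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def place_order(payload: dict, orders: list) -> dict:
    """Create an order once, no matter how many times the task runs."""
    key = idempotency_key(payload)
    if key in processed:
        return processed[key]      # work already done: return the earlier result
    orders.append(payload)         # the side effect that must not be repeated
    result = {"order_number": len(orders), "status": "created"}
    processed[key] = result
    return result
```

Note that the check and the write here are not atomic. With truly concurrent workers, the store would need to provide a conditional or atomic write so that two simultaneous duplicates cannot both pass the check.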

Polyglot

Polyglot programming refers to programming in multiple languages. In the case of serverless programming, it refers to the ability to write and execute tasks in multiple languages. Although each function is likely to be written in just one language, the right serverless platform should be able to handle a multitude of languages. This means that it should also provide a significant level of code independence, such that developers can work transparently and not have to concern themselves with OS- and server-level dependencies.

The advantage, of course, is the ability to use the right tool for the right job. Or alternatively, to use the right team for the right job. Too often in development, the adage rings true that if all you have is a hammer, you see everything as a nail. With a single language, an approach to a problem can be constrained. Developers may struggle to adapt code packages in that language to fit their need, when in fact libraries in other languages could better serve the purpose.

For calculating Bayesian statistics or doing machine learning, you may want to make use of packages that are written in C, C++, Python, or Java. Likewise, a development team that you work with may be proficient in a particular web-crawling package that is written for a particular language (Nokogiri written in Ruby for example). Being able to make use of this knowledge and experience can eliminate development cycles as well as reduce the risk of a late or failed project.

Note that a number of the newer serverless platforms support only a handful of languages at present but I expect that to change quickly over the course of this year. (IronWorker, for example, is able to handle most popular languages and executable code.)

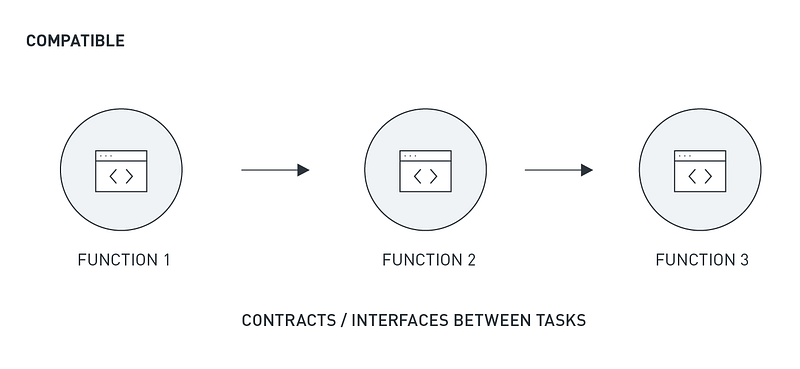

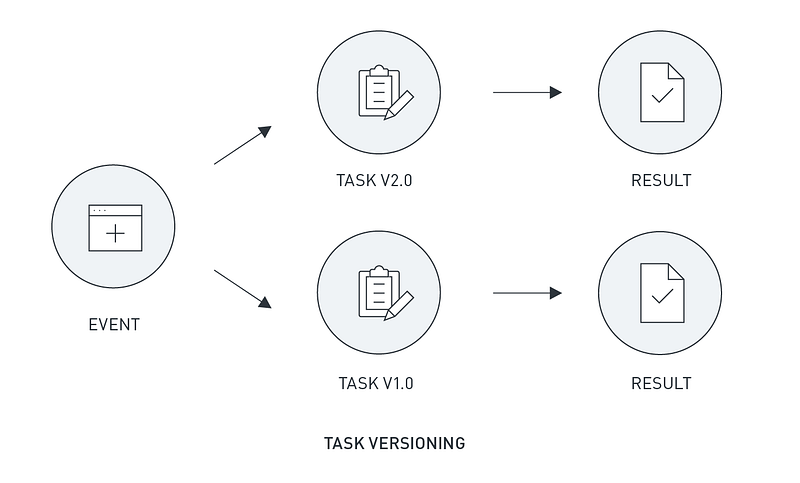

Compatible

Serverless tasks need to be designed with two orders of compatibility in mind. The first is compatibility among tasks and the second is compatibility across versions. It goes without saying that when you split things up, as you do with microservices and serverless functions, you need strong contracts and specifications between components. Solid API formats can solve a part of the issue via common auth and transfer protocols, but with custom functions and services, developers are still tasked with defining input and output schemas that make sense and are easy to understand.
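As an illustration of an explicit input contract, the sketch below checks a payload against a simple field-to-type mapping before a task runs. The schema, its field names, and the `contract_errors` helper are hypothetical. A real project might prefer an established schema format, but the idea is the same.

```python
from typing import Dict, List

# Hypothetical input contract for an image-analysis task: field name -> expected type.
IMAGE_TASK_INPUT: Dict[str, type] = {
    "email_id": str,
    "image_url": str,
    "max_bytes": int,
}


def contract_errors(payload: dict, schema: Dict[str, type]) -> List[str]:
    """Return human-readable problems; an empty list means the payload conforms."""
    errors = []
    for field, expected in schema.items():
        if field not in payload:
            errors.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected):
            errors.append(f"{field} should be {expected.__name__}")
    return errors
```

Rejecting a malformed payload at the boundary, with a message that names the offending field, tends to be easier to debug than a failure deep inside the task.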

In addition to clean interfaces, developers need to address versioning needs. For example, suppose that function X is running within a platform and it invokes function Y. If function Y has been updated without function X knowing about it and function Y is not designed correctly, it could fail when processing or it could produce an incorrect result (or it could cause a downstream failure in a task that is expecting the original result).

As with code packages, changes and updates within a serverless task can ripple through applications. In the case of code packages, these conflicts might be caught early via packaging and compilation tools. With microservices and serverless programming, however, it might only be through running services (preferably in test or staging) that issues become known.

This means that with all this statelessness and task and service independence, teams need to be careful about not only designing well-constructed interfaces but also addressing backwards compatibility and rolling out updates in a mindful manner. One thing that can help is to use semantic versioning when describing and versioning serverless tasks. It’s not a panacea, but with the propagation of this versioning convention, it does provide some grounding for other developers who may be using tasks you create.
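A caller could use semantic version numbers to decide whether a deployed task is safe to invoke. The sketch below assumes plain `MAJOR.MINOR.PATCH` strings with no pre-release or build suffixes, and it ignores the special treatment semantic versioning gives to `0.x` releases. The function names are hypothetical.

```python
from typing import Tuple


def parse_version(version: str) -> Tuple[int, int, int]:
    """Parse a plain MAJOR.MINOR.PATCH string into integers."""
    major, minor, patch = (int(part) for part in version.split("."))
    return major, minor, patch


def is_compatible(required: str, available: str) -> bool:
    """True if `available` can serve a caller built against `required`."""
    req, avail = parse_version(required), parse_version(available)
    # Same major version, and at least the minor/patch level the caller expects.
    return avail[0] == req[0] and avail[1:] >= req[1:]
```

For example, a caller built against `1.2.0` would accept a task at `1.3.1` but not one at `2.0.0`, since a major version bump signals a breaking change.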

This need for compatibility and consistency also means that testing of tasks used in production should be ongoing. Fortunately with serverless processing, that capability is largely baked in. Because serverless tasks are, by nature, executable, they provide a nearly self-contained framework for continuous testing. Setting up regular tests with various inputs is then the only other element that needs to be added to the mix. If a serverless platform provides for scheduled jobs, then boom, that need is solved.
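A scheduled check of this kind could be as simple as replaying known inputs and comparing outputs. The sketch below assumes a task is callable as a function that takes and returns a dictionary. The `run_checks` helper and the shape of its report are hypothetical.

```python
from typing import Callable, List, Tuple


def run_checks(task: Callable[[dict], dict], cases: List[Tuple[dict, dict]]) -> List[dict]:
    """Run the task against known inputs and report any case whose output differs."""
    failures = []
    for payload, expected in cases:
        try:
            actual = task(payload)
        except Exception as exc:   # a crash counts as a failed check
            actual = {"error": repr(exc)}
        if actual != expected:
            failures.append({"payload": payload, "expected": expected, "actual": actual})
    return failures
```

Run from a scheduled job, an empty list means the task still honors its contract, and a non-empty one can be routed to whatever alerting the team already uses.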

To Be Continued…

These are not all the issues that arise when working with serverless platforms, just some as they relate to the task level. Over the course of the next two weeks, I’ll be posting other sections on serverless processing. Here’s the breakdown. Stay tuned.