Stuff The Internet Says On Scalability For October 20th, 2017

Hey, it's HighScalability time:

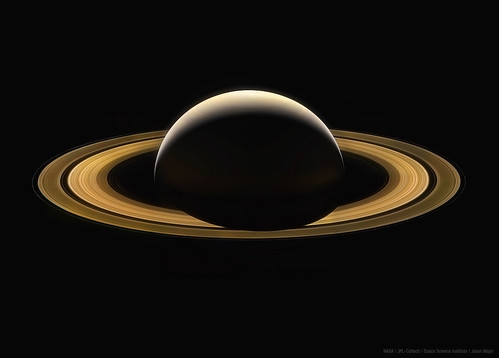

Cassini's last image of Saturn, stitched together from 11 color composites, each a stack of three images taken in red, green, and blue channels. (Jason Major)

If you like this sort of Stuff then please support me on Patreon.

- 21 million: max bitcoins ever; #2: Alibaba's cloud?; 1M MWh: Amazon Wind Farm Texas with 100 Turbines is live; $1000: cost to track someone with mobile ads; 20%: ebook sales of total; 17: qubit chip; 30%: Uber deep learning speedup using RDMA;

- Quoteable Quotes:

-

Tim O'Reilly: So what makes a real unicorn of this amazing kind? 1. It seems unbelievable at first. 2. It changes the way the world works. 3. It results in an ecosystem of new services, jobs, business models, and industries.

-

@rajivpant: AlphaGo has already beaten two of the world's best players. But the new AlphaGo Zero began with a blank Go board and no data apart from the rules, and then played itself. Within 72 hours it was good enough to beat the original AlphaGo by 100 games to zero!

-

@swardley: Containers aka winning battle but losing the war. AMZN joining CNCF is misdirection. Lambda is given a free pass to entire software industry

-

vlucas: Event-Driven architecture sounds like somewhat of a panacea with no worries about hard-coded or circular dependencies, more de-coupling with no hard contracts, etc. but in practice it ends up being your worst debugging nightmare. I would much rather have a hard dependency fail fast and loudly than trigger an event that goes off into the ether silently.

-

@xaprb: Monitoring tells you whether the system works. Observability lets you ask why it's not working.

-

Brenon Daly: Startups are increasingly stuck. The well-worn path to riches – selling to an established tech giant – isn’t providing nearly as many exits as it once did. In fact, based on 451 Research calculations, 2017 will see roughly 100 fewer exits for VC-backed companies than any year over the past half-decade. This current crimp in startup deal flow, which is costing billions of dollars in VC distributions, could have implications well beyond Silicon Valley.

-

@krishnan: The battle lines are IaaS+ with Kubernetes vs Platforms like OpenShift or Pivotal CF Vs Serverless. No one is a winner yet

-

@fredwilson: "We now have 30,000 data scientists .... 100x more than any other hedge fund ... we are not yet two years old"

-

Elon Musk: Our goal is get you there and ensure the basic infrastructure for propellant production and survival is in place. A rough analogy is that we are trying to build the equivalent of the transcontinental railway. A vast amount of industry will need to be built on Mars by many other companies and millions of people.

-

Samburaj Das: ‘Dubai Blockchain Strategy’, which aims to record and process 100% of all documents and transactions on a blockchain by the year 2020. The sweeping blockchain mandate was announced by Hamdan bin Mohammed, the crown prince of Dubai, in October 2016.

-

@danielbryantuk: "Defence in depth can add 15% to dev cost. Some think this is a lot, but does it compare to data being exposed?" @eoinwoodz #OReillySACon

-

@cmeik: It's solving the issues around gossip floods, it's providing a more reliable infrastructure, it's alleviating head of line blocking. These are the problems that when solved *enable* large clusters.

-

Benedict Evans: the four leading tech companies of the current cycle (outside China), Google, Apple, Facebook and Amazon, or ‘GAFA’, have together over three times the revenue of Microsoft and Intel combined (‘Wintel’, the dominant partnership of the previous cycle), and close to six times that of IBM. They have far more employees, and they invest far more.

-

Alan Andersen: At Canopy Tax we have been using dockerized micro-serviced Java containers using vertx with RxJava and have found it to be highly performant and memory efficient.

-

David Gerard: as of June 2017 the Bitcoin network was running 5,500,000,000,000,000,000 (5.5×1018, or 5.5 quintillion) hashes per second, or 3.3×1021 (3.3 sextillion) per ten minutes

-

@CodeWisdom: "One accurate measurement is worth a thousand expert opinions." - Grace Hopper

-

Hiten Shah: if you wan’t to build the next $1B+ SaaS app, you have to remember to constantly evolve on top of your product. Don’t build around any one single feature that the competition can rip out. Alternatively, make that one single feature so deeply integrated into everyone else’s product that it’s pointless for anyone else to copycat it.

-

@fmanjoo: Every number in Netflix’s earnings report is just extraordinary. $8 billion on content, 80 movies by next year!

-

Bloomberg: self-driving technology is a huge power drain. Some of today’s prototypes for fully autonomous systems consume two to four kilowatts of electricity -- the equivalent of having 50 to 100 laptops continuously running in the trunk

-

@swardley: I view that once again, Amazon has been given too long to freely invade a space .. and Lambda is gunning for the entire software industry.

-

Greg Ferro: First, Just about everything in this submission [from Oracle] sounds self serving and protecting their own revenue. Its hard to see this as a genuine attempt to add value to the process. Second, the author of the letter has adopted a tone that is approximately like a father berating a child for uppity behaviour.

-

Daniel Lemire: What I am really looking forward, as the next step, is not human-level intelligence but bee-level intelligence. We are not there yet, I think.

-

Richard Branson: People used to raid banks and trains for smaller amounts - it’s frightening to think how easy it is becoming to pull off these crimes for larger amounts.

-

tbarbugli: Disclaimer: CTO of Stream here. We experimented writing Cython code to remove bottlenecks, it worked for some (eg. make UUID generation and parsing faster) and think that’s indeed good advice to try that before moving to a different language. We still decided to drop Python and use Go for some parts of our infrastructure

-

Paul Johnston: The Serverless conversation is shifting from Functions to Analytics and Monitoring.

-

Gui Cavalcanti: Eagle Prime has modular weapons systems. The chainsaw is absolutely my favorite. I had no idea it could do as much damage as it does

-

@sheeshee: I have a web app with a postgres database running in a kubernetes cluster. I am very hip now.

-

otakucode: Why stop with microwaves? Why not make platters out of pure gold and the read/write heads out of synthetic diamond? Maybe integrate a cryogenic cooling system and store the data in a Bose-Einstein condensate? At this point it seems people will believe even that is cheaper than some NAND chips run off a line like printouts.

-

@adrianhosey: Swagger/WSDL/CORBA IDL/ONC RPC ... They all come out the same color in the end.

-

@sustrik: do we have an equivalent of embryonic development in software, something that could serve as a window to software product's history?

-

hosh: Someone said it. ... K8S, not Docker, is the Linux of the cloud native platform.

-

Natalie Wolchover: The scientists found that, layer by layer, the networks converged to the information bottleneck theoretical bound: a theoretical limit derived in Tishby, Pereira and Bialek’s original paper that represents the absolute best the system can do at extracting relevant information. At the bound, the network has compressed the input as much as possible without sacrificing the ability to accurately predict its label.

-

Narew: Unlike Keras and Tensorflow, Gluon is Define-by-run Deeplearning framework like Pytorch, Chainer. Network definition/debugging/flexibility are really better with dynamic network (define-by-run). That's why Facebook seem to use Pytorch for research and caffe2 for deployment. Gluon/Mxnet can do both define-by-run with Gluon API and "standard" define-and-run with it's Module API.

-

Russell O’Connor: By dividing data structures up into layers of functors, one can create a separation of concerns that does not occur in traditional functional programming. With functor oriented programming, polymorphism is not necessarily about using functions polymorphically. Instead, polymorphism provides correctness guarantees by ensuring that a particular function can only touch the specific layers of functors in its type signature and is independent of the rest of the data structure. One benefits from polymorphism even when a function is only ever invoked at a single type.

-

Gotebe: attention: unsubstantiated, poorly thought-out babbling ahead. The need for standards in microservices... And here goes another standardization cycle... Yes, we need the way to describe interfaces (IDL of some sort, with a proxy (client) generation tooling for various languages). Yes, we need a way to discover services (WSDL of some sort). Yes, we need a way to handle authentication and authorization (OAuth something something). Some people will want to secure the conversation. Some will want transactions. Some will want... Nineties called, they want their CORBA back!

-

tw1010: Man, software engineering at the cutting edge is getting harder and harder by the day. Not only are you expected to master coding but also math heavy ML and economics. I guess a consequence of software eating the world is that more and more fields of human knowledge is folded into the engineering world. The consequence being that engineers really aught to be very broad in their reading if they want to take advantage of all the low hanging fruit the octopus arms stumbles into.

-

David Gerard: The ensuing evolutionary arms race, as miners desperately try for enough of an edge to turn a profit, is such that Bitcoin’s power usage is on the order of the entire power consumption of Ireland. This electricity is literally wasted for the sake of decentralisation; the power cost to confirm the transactions and add them to the blockchain is around $10-20 per transaction. That’s not imaginary money – those are actual dollars, or these days mostly Chinese yuan, coming from people buying the new coins and going to pay for the electricity. An ordinary centralised database could calculate an equally tamper-evident block of transactions on a 2007 smartphone running off USB power. Even if Bitcoin could replace conventional currencies, it would be an ecological disaster.

-

David Rosenthal: I've been writing abut the slowing of Kryder's Law from 30-40%/yr to 10-20%/yr since at least 2014, and that projection has been verified. Remembering that industry projections have a history of optimism, WDC's projection of a 15% Kryder rate going forward on the assumption that they can get a new recording technology into the market in 2020 should be treated skeptically. I would expect that the future Kryder rate will be more like 10% than 15%. I would also expect that the cost factor between disk and flash in the 2020s would be less than 10x rather than greater than 10x, but still more than enough to maintain hard disk's dominance of the bulk storage market.

-

Don Chamberlin: It’s very gratifying to me that, 43 years after Ray Boyce and I published the first SEQUEL paper, SQL is still the world’s most widely used database query language. I’m also very gratified to see that SQL is being used in a vast variety of exciting applications, far more than Ray and I ever anticipated. When we started work on SQL, the internet did not exist, but nevertheless, SQL has turned out to be an essential tool for commerce on the internet. Several open-source SQL systems are available, so anyone who wants to can use SQL for free.

-

richie5um: I liken software methodologies to cooking recipes. On one end of the scale you have a highly skilled and experienced chef, who can craft a great (requested) dish with a variety of ingredients and equipment. Knowing how to adapt and what to add and when. This works well, but requires a heavy cost (experience) and is, from the outside, difficult to follow and control. On the other end of the scale you have fast food. A strict process and fixed ingredients that ‘just works’. Those involved in cooking have very little knowledge of why, and are almost certainly not able to adapt. This works well for scenarios where you exactly know and control both the input and output.

-

whipoodle: I work for one of those fancy startups. Everything is chaos all the time, you have all these extremely smart people in the small doing actually kinda dumb things in the large. This worked better when the company was small and is causing more and more problems as we grow. Anyway, the point being that there’s lots of smart individuals and little process. I mentioned this during a meeting with my team, and so a process was devised for the next project we embarked upon. The process was waterfall, except nobody referred to it that way (most of the people are like 25, and weren’t working back when it was the norm). Everything old is new again. It wasn’t important anyway; we didn’t follow the process.

-

jhgg: It's worth noting that the instance migration basically null-routed the redis VM for a good 30 minutes, until we manually intervened and restarted it. The instance was completely disconnected from the internal network immediately following the migration. From what we could gather from instance logs, the routing table on the VM was completely dropped and it could not even connect to the magic metadata service (metadata.internal - we saw "no route to host" errors for that). This is a pretty serious bug within GCP and we've already opened a case with them hoping they can get a fix. I think this is the 4th or 5th major bug we've encountered with their live migration system that could have, or has led to an outage or internal service degradation. GCP team has seriously investigated and fixed every bug we've reported to them so far, so props to them for that! Live migration is incredibly difficult to get right.

-

- Seeing some creative internet style thinking in the movie business. Movie Pass let's you see one movie a day, at a theatre, for a flat fee of $10 a month. Theatres have tons of unused inventory in empty seats. They make money selling crappy popcorn and sugary treats. Packing theatres should be the goal, shouldn't it? Maybe put theatre seats on Airbnb?

- Here's everything that happened at Facebook's Mobile @Scale 2017. Topics include: The future of multi-modal interfaces; Evolution of WhatsApp within Facebook's data centers; Modern server-side rendering with React; Runkeeper: Scaling to get the whole world running.

- Microservices in Java? Never. && Microservices in Java — A Second Look. The idea is if you run a lot of microservices, which one tends to do, if they use a lot of RAM you're wasting money. In this example, the Java 8 + SpringBoot version cost $5K more per year to run than the go version. Pushback included SpringBoot is a kitchen sink framework so the comparison is unfair. Use Java 9 and include only what you need. $5K is nothing, what are you worried about? Will go really save RAM in the full application? SpringBoot isn't your only option.

- So, you think formally verified means bug free? Nah. Falling through the KRACKs: how did this attack slip through, despite the fact that the 802.11i handshake was formally proven secure?...One of the problems with IEEE is that the standards are highly complex and get made via a closed-door process of private meetings...The second problem is that the IEEE standards are poorly specified. As the KRACK paper points out, there is no formal description of the 802.11i handshake state machine...The critical problem is that while people looked closely at the two components — handshake and encryption protocol — in isolation, apparently nobody looked closely at the two components as they were connected together. I’m pretty sure there’s an entire geek meme about this.

- Dear Postgres. A love letter.

- Insightful interview with Chuck McManis on Containers and Distributed Systems: Where They Came From and Where They’re Going: Early on there was a war between multiprocessing and distributing processing, supercomputing versus network computing, and this has been an ongoing battle even to today. A distributed system requires a network fabric between nodes and this is always much, much less bandwidth than what’s internal to a single node...We ran into a lot of problems that plague distributed systems even today, about naming, time synchronization, logging, and making new transactions...battle between parallel processing systems and distributed systems. Within Sun Microsystems there were two efforts: Beowulf clusters and typical multiprocessing machines...The key needs were better resource utilization, process isolation, and the need to prevent information leakage. It turned out that about twenty years earlier IBM did sort of solve that problem with partition isolation, but they did that on custom mainframe hardware and architecture. It turned out that everyone wanted that too, but on low-cost commodity hardware. And it was at that particular insight that Google had internalized in early 2000 and gotten really good at...Google was very famous for having a policy where every node was uniformly the same, so that you could just schedule things on top of them. I brought in a somewhat heretical notion that perhaps storage can be a first-class service running on equipment that did nothing but provided a storage API to the network...Computers are so fast today, disks are so dense, memory is so cheap, that it takes a fairly big problem for you to actually want to partition it...Much of this revolves around how cheap processors have gotten. We can build clouds of cheap processors connected to very inexpensive sensors and readers at the edge. They’ll be a self-contained cloud. You could have tens of thousands of these in a single location. They may not even need to be connected to the Internet as they can function on their own. They’re their own cloud. It’s similar to the technology we were building at the very beginning of distributed computing.

- It's as cool as you think it would be. Watch the World’s First Giant Robot Fight: The only way this project was possible was by using as many COTS [commercial off-the-shelf] parts as possible, and by verifying each part’s functionality as it came in. As best we could, we tested each part as it arrived, made sure it worked, and tested each subsystem before it was assembled into the full system. This let us catch errors like suppliers sending us the wrong part, suppliers sending us parts that didn’t work as advertised (for example, we lost a month of controls work by debugging a firmware issue with one of our sensors), and manufacturing errors. If I had to pass on a piece of advice, it would be to assemble in small pieces and test early and often.

- Baidu: Supporting 50M Inserts a Day with CockroachDB in Production: Baidu’s DBA team is now running CockroachDB in production to support two new applications that would have previously used MySQL. These applications access 2TB of data with 50M inserts a day, taking advantage of SQL features like secondary indexes and distributed SQL queries. Baidu’s deployment of CockroachDB is simple with ten nodes installed on bare metal servers. A load balancer sits above the ten nodes to distribute traffic.

- Why PostgreSQL is better than MySQL. Reason: a MySQL bug took 14.5 years to fix. In the highly unlikely event you haven't already developed a position on this issue that you'll defend to the boredom of anyone who will listen, some good comments on HackerNews.

- 5 things we learned from Waymo’s big self-driving car report. Interesting that they are pitching safety. What does that mean for software? Waymo is sandboxing safety-critical systems and building in a lot of redundancy...Waymo says it has done extensive work to make sure that computer crashes don't lead to car crashes. All of the key systems on its cars—the computer, brakes, steering systems, and batteries—have backups ready to take over if the main system fails...A secondary computer in the vehicle is always running in the background and is designed to bring the vehicle to a safe stop if it detects a failure of the primary system...all of these systems have separate power sources, so the failure of power to the main computer won't knock out the backup computer or other critical systems...Safety-critical aspects of Waymo's vehicles—e.g. steering, braking, controllers—are isolated from outside communication...both the safety-critical computing that determines vehicle movements and the onboard 3D maps are shielded from, and inaccessible from, the vehicle's wireless connections and systems.

- Adding a dash of parallelism and a pinch of math makes everything faster. Wundawuzi: Amabrush can brush your teeth in just 10 seconds, because all your teeth are cleaned simultaneously. Even in this 10 seconds, every tooth surface is cleaned longer compared with common toothbrushes. If you brush your teeth for the recommended 120 seconds with a regular toothbrush (manual or electric), every surface gets brushed for just 1.25 seconds (given the fact that you have 32 teeth and every teeth has three surfaces). Amabrush brushes all your surfaces for whole 10 seconds. This means: every tooth surface gets brushed 8x longer and the total toothbrushing duration is 12x quicker.

- When 500% Faster is Garbage: On my local machine, hapi v16 handled 3,500 requests/second when using the Fastify benchmark scripts. A recent build of v17 handled 17,800 requests/second. That’s a 508% improvement. Almost a tie with a bare Express benchmark at 18,500 requests/second. Garbage...It might be a nice number to brag about but it still means nothing.

- Adding Kubernetes support in the Docker platform: The Docker platform is getting support for Kubernetes. This means that developers and operators can build apps with Docker and seamlessly test and deploy them using both Docker Swarm and Kubernetes. amouat: This really helps with the dev-to-production story for containers. hosh: I see the same thing too: a struggle for relevance. The center of gravity has already moved onto k8s, even if it will takw a while for the rest of the developer community to catch up. InTheArena: Great news for everyone except for VMWare (this is a simple compelling operating system for data centers that spans both windows and mac) and Openshift (which was one of the few viable ways of actually purchasing Kubernetes support). A lot of egos on both sides had to be suppressed to make this happen. grabcocque: This seems a lot to me like Docker Inc. caving in to what has been painfully obvious for a while: K8s won and Swarm/Mesos lost the battle for hearts and minds in container orchestration. We can argue about why it happened, but I got the impression Docker Inc. were desperately trying to wish it away. ealexhudson: Have to say, I don't see the value in being able to have Swarm and k8s in the same cluster. erikb: Kubernetes currently has a lot of traction in Enterprise world. Even the CEOs of the big corps currently know what Kubernetes is. That's why you need to support it in some way if you want to continue to compete. At least for the time being.

- Actually thoughtful and balanced. Why we switched from Python to Go: Reason 1 – Performance: Go is typically 30 times faster than Python. Reason 2 – Language Performance Matters: Python is a great language but its performance is pretty sluggish for use cases such as serialization/deserialization, ranking and aggregation. We frequently ran into performance issues where Cassandra would take 1ms to retrieve the data and Python would spend the next 10ms turning it into objects. Reason 3 – Developer Productivity & Not Getting Too Creative: Go forces you to stick to the basics. This makes it very easy to read anyone’s code and immediately understand what’s going on. Reason 4 – Concurrency & Channels: Go’s approach to concurrency is very easy to work with. Reason 5 – Fast Compile Time: Our largest micro service written in Go currently takes 6 seconds to compile. Reason 6 – The Ability to Build a Team: We’ve found it easier to build a team of Go developers compared to many other languages. Reason 7 – Strong Ecosystem: Go has great support for the tools we use. Solid libraries were already available for Redis, RabbitMQ, PostgreSQL, Template parsing, Task scheduling, Expression parsing and RocksDB. Reason 8 – Gofmt, Enforced Code Formatting: Gofmt avoids all of this discussion by having one official way to format your code. Reason 9 – gRPC and Protocol Buffers: Go has first-class support for protocol buffers and gRPC. So, no more ambiguous REST endpoints for internal traffic, that you have to write almost the same client and server code for every time. Lots of good comments on Lobsters and on reddit. Needless to say, there's lots of disagreement.

- There's always a push to move computation down to be as close as possible to the data. We've seen this same proposal for disk drives, NICs, ASICs, etc. Making the Case for Feature-Rich Memory Systems: this article has made the argument that memory devices can be much more than “dumb” units that store rows of bits. They can be augmented to perform parts of the application or other auxiliary system-level features. This can directly impact overall system performance and energy. While this approach will no doubt increase the cost per bit for memory devices, it offers an opportunity for value addition in an era where customers will likely pay more for specialized systems.

- Nice code example. Engaging your users with AWS Step Functions.

- How does Apple recognize "Hey, Siri?" They have millions of people listening to every word you say, waiting for the right key word phrase. Nah, it's ML of course. Hey Siri: An On-device DNN-powered Voice Trigger for Apple’s Personal Assistant: The “Hey Siri” detector uses a Deep Neural Network (DNN) to convert the acoustic pattern of your voice at each instant into a probability distribution over speech sounds. It then uses a temporal integration process to compute a confidence score that the phrase you uttered was “Hey Siri”. If the score is high enough, Siri wakes up...Most of the implementation of Siri is “in the Cloud”, including the main automatic speech recognition...The microphone in an iPhone or Apple Watch turns your voice into a stream of instantaneous waveform samples, at a rate of 16000 per second. A spectrum analysis stage converts the waveform sample stream to a sequence of frames, each describing the sound spectrum of approximately 0.01 sec. About twenty of these frames at a time (0.2 sec of audio) are fed to the acoustic model, a Deep Neural Network (DNN) which converts each of these acoustic patterns into a probability distribution over a set of speech sound classes: those used in the “Hey Siri” phrase, plus silence and other speech, for a total of about 20 sound classes...On iPhone we use two networks—one for initial detection and another as a secondary checker. The initial detector uses fewer units than the secondary checker...To avoid running the main processor all day just to listen for the trigger phrase, the iPhone’s Always On Processor (AOP) (a small, low-power auxiliary processor, that is, the embedded Motion Coprocessor) has access to the microphone signal (on 6S and later). We use a small proportion of the AOP’s limited processing power to run a detector with a small version of the acoustic model (DNN). When the score exceeds a threshold the motion coprocessor wakes up the main processor, which analyzes the signal using a larger DNN. In the first versions with AOP support, the first detector used a DNN with 5 layers of 32 hidden units and the second detector had 5 layers of 192 hidden units.

- Good Breaking down ServerlessConf NYC 2017.

- Lots of great insight on syncing time in a game. Managing time always sucks. Determinism in League of Legends: Unified Clock. But that's not the hard part. Rick Hoskinson: Probably the scariest part of the process is actually rolling out the changes themselves. There were many subtleties and edge cases to deal with. The full roll-out happened over the course of months, gradually adding in more environments/regions and adjusting or fixing bugs as necessary. We had a really excellent team of Quality Assurance experts working with us though, which definitely was the secret ingredient for mitigating player pain.

- Free eBook: Becoming a Cloud Native Organization. 25 pages. Joe Beda knows his stuff. Requires sign-up, but it's a good overview, so it's worth it.

- An unusual combination. Scalable and serverless media processing using BuckleScript/OCaml and AWS Lambda/API Gateway: In order to make Javascript more suitable for large-scale industrial applications, the BuckleScript framework has recently emerged, originally supported by Bloomberg. BuckleScript interfaces Javascript native code with OCaml. You start by defining bindings from Javascript to OCaml, then write OCaml code using those bindings. Finally, the BuckleScript compiler creates Javascript code from your OCaml code that you can deploy as native Javascript.

- Not a lot of details. Evolution of GitHub's data centers: We’ve got four facilities, two of which are transit hotels which we call points of presence (POPs) and two of which are data centers. To give an idea of our scale, we’ve got petabytes of Git data stored for users of GitHub.com and do around 100Gb/s across transit, internet exchanges, and private network interfaces in order to serve thousands of requests per second. Our network and facilities are built using a hub and spoke design. We operate our own backbone between Seattle and northern Virginia POPs which provides more consistent latencies and throughput via protected fiber.

- Wisdom from Guerrilla Capacity Planning's @DrQz: By "trade-off," I'm referring to the notion that proper performance analysis is about optimization of one kind or another. Basically, you are varying one performance metric against another, or several others, to achieve an optimal goal. Identifying the appropriate performance metrics to vary is one thing, but not the most important thing. The more important point is that all performance metrics are related ... and related nonlinearly. That's what makes performance analysis both interesting and difficult. Queueing theory provides an appropriate rigorous framework by which to characterize those nonlinearities: including Little's law and the USL. By way of a very simple example, if you are more concerned about user impatience when they're interacting with an application, you might tend to focus on optimizing the user waiting-time metric as a (nonlinear) function of message queue-lengths or resource utilization or whatever other metrics. Conversely, if you were a weather forecaster and needed to crunch a lot of simulation data on a supercomputer, you might tend to focus on optimizing the throughput metric as a function of the number of cores or memory size or whatever other relevant metrics. All the metric relationships are nonlinear. Those two performance goals, represented respectively by the waiting time and throughput, can be thought of as being at opposite ends of the performance spectrum. And then there's the matter of the cost of your selected performance solution. The "best" (optimal) choice mathematically might not be affordable so, you have to fall back to a goal that may be technically sub-optimal but less expensive. In general, there is no single "right way" or one size fits all. It depends on your performance goals.

- Don't be caught with your S3 pants down. Here's your belt and suspenders. S3 Bucket Security: More Than ACLs and Policies.

- How Wallaroo Scales Distributed State: Wallaroo provides in-memory application state. That means that in our word count example, you don’t need to make calls out to an external system...Wallaroo breaks down application state into discrete state entities that act as boundaries for atomic transactions (see Pat Helland’s paper, which inspired our work in this area)...When a Wallaroo application starts up, state entities are distributed over the workers in the cluster. Each worker has routing information that allows us to route data to the correct state, whether that state exists locally or on another worker.

- A great role for AI would be in maintenance. All the glory and easy stuff for humans. Kurt Vonnegut: Another flaw in human character is that everybody wants to build and nobody wants to do maintenance.

- Excellent overview of Event-Driven Architecture and From CQS to CQRS. There are three cases in which to use events: To decouple components. To perform async tasks. To keep track of state changes (audit log). Command Query Separation: asking a question should not change the answer and doing something should not give back an answer. Command Pattern: instead of having a user execute a “CreateUser” action, followed by an “ActivateUser” action, and a “SendUserCreatedEmail” action, we would have the user simply execute a “RegisterUser” command which would execute the previously mentioned three actions as an encapsulated business process. Command Bus: evolve the Command Invoker into something capable of receiving a Command and figure out what handler can handle it. Command Query Responsibility Segregation: By putting together the concepts of CQS, Command, Query and CommandBus we finally reach CQRS. By using CQRS we can completely separate the read model from the write model, allowing us to have optimised read and write operations. This adds to performance, but also to the clarity and simplicity of the codebase, to the ability of the codebase to reflect the domain, and to the maintainability of the codebase. Again, it’s all about encapsulation, low coupling, high cohesion, and the Single Responsibility Principle.

- makerbot/labs: Documentation and utilities for experimental usage of MakerBot 3D printing solutions

- github.com/Grafeas: A Component Metadata API. Also, How Shopify Governs Containers at Scale with Grafeas and Kritis, Introducing Grafeas: An open-source API to audit and govern your software supply chain.

- haydenjameslee/networked-aframe: A web framework for building multi-user virtual reality experiences. Write full-featured multi-user VR experiences entirely in HTML.

- twitter/torch-decisiontree: This project implements random forests and gradient boosted decision trees (GBDT). The latter uses gradient tree boosting. Both use ensemble learning to produce ensembles of decision trees (that is, forests).

- Facebook's Infer : RacerD (article): RacerD statically analyzes Java code to detect potential concurrency bugs. This analysis does not attempt to prove the absence of concurrency issues, rather, it searches for a high-confidence class of data races.

- Efficient Methods and Hardware for Deep Learning: to efficiently implement "Deep Compression" in hardware, we developed EIE, the "Efficient Inference Engine", a domain-specific hardware accelerator that performs inference directly on the compressed model which significantly saves memory bandwidth. Taking advantage of the compressed model, and being able to deal with the irregular computation pattern efficiently, EIE improves the speed by 13x and energy efficiency by 3,400x over GPU.

- DyNet: The Dynamic Neural Network Toolkit: a toolkit for implementing neural network models based on dynamic declaration of network structure. In the static declaration strategy that is used in toolkits like Theano, CNTK, and TensorFlow, the user first defines a computation graph (a symbolic representation of the computation), and then examples are fed into an engine that executes this computation and computes its derivatives. In DyNet’s dynamic declaration strategy, computation graph construction is mostly transparent, being implicitly constructed by executing procedural code that computes the network outputs, and the user is free to use different network structures for each input. Dynamic declaration thus facilitates the implementation of more complicated network architectures

- Is Parallel Programming Hard, And, If So, What Can You Do About It?: The purpose of this book is to help you program sharedmemory parallel machines without risking your sanity. We hope that this book’s design principles will help you avoid at least some parallel-programming pitfalls. That said, you should think of this book as a foundation on which to build, rather than as a completed cathedral. Your mission, if you choose to accept, is to help make further progress in the exciting field of parallel programming—progress that will in time render this book obsolete

- Growing a Language: A language design can no longer be a thing. It must be a pattern—a pattern for growth—a pattern for growing the pattern for defining the patterns that programmers can use for their real work and their main goal. My point is that a good programmer in these times does not just write programs. A good programmer builds a working vocabulary. In other words, a good programmer does language design, though not from scratch, but by building on the frame of a base language.

- Serverless computing: economic and architectural impact: It’s a case study on how serverless computing changes the shape of the systems that we build, and the (dramatic) impact it can have on the cost of running those systems. There’s also a nice section at the end of the paper summarising some of the current limitations of the serverless approach.

Hey, just letting you know I've written a new book: Explain the Cloud Like I'm 10. It's pretty much exactly what the title says it is. If you've ever tried to explain the cloud to someone, but had no idea what to say, send them this book.

I've also written a novella: The Strange Trial of Ciri: The First Sentient AI. It explores the idea of how a sentient AI might arise as ripped from the headlines deep learning techniques are applied to large social networks. Anyway, I like the story. If you do too please consider giving it a review on Amazon.

Thanks for your support!