Stuff The Internet Says On Scalability For March 3rd, 2017

Hey, it's HighScalability time:

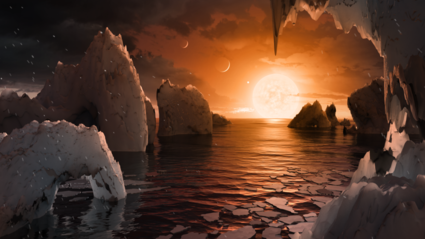

Only 235 trillion miles away. Engage. (NASA)

If you like this sort of Stuff then please support me on Patreon.

- $5 billion: Netflix spend on new content; $1 billion: Netflix spend on tech; 10%: bounced BBC users for every additional second page load; $3.5 billion: Priceline Group ad spend; 12.6 million: hours streamed by Pornhub per day; 1 billion: hours streamed by YouTube per day; 38,000 BC: auroch carving; 5%: decrease in US TV sets;

- Quotable Quotes:

- Fahim ul Haq: Rule 1: Reading High Scalability a night before your interview does not make you an expert in Distributed Systems.

- @Pinboard: Root cause of outage: S3 is actually hosted on Google Cloud Storage, and today Google Cloud Storage migrated to AWS

- Matthew Green: ransomware currently is using only a tiny fraction of the capabilities available to it. Secure execution technologies in particular represent a giant footgun just waiting to go off if manufacturers get things only a little bit wrong.

- dsr_: This [S3 outage] is analogous to "we needed to fsck, and nobody realized how long that would take".

- @elkmovie: Quarterly iPad revenue - used to be huge (> Android in fact), harder and harder these days to justify putting effort into iPad optimization.

- tptacek: Uber isn't the driver's employer. Uber is a vendor to the driver. The driver is complaining that its vendor made commitments, on which the driver depended, and then reneged. The driver might be right or might be wrong, but in no discussion with a vendor in the history of the Fortune 500 has it ever been OK for the vendor to accuse their customer of "not taking responsibility for their own shit".

- @felixsalmon: Hours of video served per day: Facebook: 100 million Netflix: 116 million YouTube: 1 billion

- @Geek_Manager: "Everybody wants to write reusable code. Nobody wants to reuse anyone else's code." @eryno #leaddev

- @ellenhuet: a private South Bay high school 1) having a growth fund and 2) being early in Snap is the most silicon valley thing ever

- @_ginger_kid: I speak from experience as a cash strapped startup CTO. Would love to be multi region, just cannot justify it. V hard.

- @Objective_Neo: SpaceX, $12 billion valuation: Launches 70m rockets into space and lands them safely. Snapchat, $20 billion valuation: Rainbow Filters.

- @neil_conway: (2/4): MTTR (repair time) is AT LEAST as important as MTBF in determining service uptime and often easier to improve.

- John Hagel: we’re likely to see a new category of gig work emerge – let’s call it “creative opportunity targeting.”...we anticipate that more and more of the workforce will be pulled into this arena of creative gig workgroups

- Seyi Fabode: The constraint is that the broker model, even with new technology, is not value additive.

- Robert Kolker: From his experience with the Gary police, Hargrove learned the first big lesson of data: If it’s bad news, not everyone wants to see the numbers

- gamache: A piece of hard-earned advice: us-east-1 is the worst place to set up AWS services. You're signing up for the oldest hardware and the most frequent outages.

- Dan Sperber: we each have a great many mental devices that contribute to our cognition. There are many subsystems. Not two, but dozens or hundreds or thousands of little mechanisms that are highly specialized and interact in our brain. Nobody doubts that something like this is the case with visual perception. I want to argue that it’s also the case for the so-called central systems, for reasoning, for inference in general.

- Joaquin Quiñonero Candela: Facebook today cannot exist without AI. Every time you use Facebook or Instagram or Messenger, you may not realize it, but your experiences are being powered by AI.

- alicebob: Sometimes keeping things simple is worth more than keeping things globally available.

- Sveta Smirnova: Both MySQL and PostgreSQL on a machine with a large number of CPU cores hit CPU resources limits before disk speed can start affecting performance.

- @jamesiry: Using many $100,000’s of compute, Google collided a known weak hash. Meanwhile one botched memcpy leaked the Internet’s passwords.

- @david4096: teaching engineers to say no is cheaper than Haskell

- @cgvendrell: #AI will be dictated by Google. They're 1 order of magnitude ahead, they understood key = chip level of stack (TPU) + training data @chamath

- @antirez: There are tons of more tests to do, but the radix trees could replace most hash tables inside Redis in the future: faster & smaller.

- DHH: So it remains mostly our fault. Our choice, our dollars. Every purchase a vote for an ever more dysfunctional future. We will spend our way into the abyss.

- @jamesurquhart: This is why I write about data stream processing and serverless—lessons I learned at @SOASTAInc about the value of real time and BizOps.

- twakefield: The brilliance of open sourcing Borg (aka Kubernetes) is evident in times like these. We[0] are seeing more and more SaaS companies abstract away their dependencies on AWS or any particular cloud provider with Kubernetes.

- flak: password hashes aren’t broken by cryptanalysis. They’re rendered irrelevant by time (hardware advancements). What was once expensive is now cheap, what was once slow is now fast. The amount of work hasn’t been reduced, but the difficulty of performing it has.

- @darkuncle: biz decisions again ... gotta weigh cost/frequency of AWS single-region downtime vs. cost/complexity of multi-region & GSLB.

- @nantonius: Reducing network latencies are a key enabler for moving away from monolith towards serverless. @adrianco:

- tbrowbdidnso: These companies that all run their own hardware exclusively are telling everyone that it's stupid to run your own hardware... Why are we listening?

- jasonhoyt: "People make mistakes all the time...the problem was that our systems that were designed to recognize and correct human error failed us."

- @chuhnk: Bob: Service Discovery is a SPOF. You should build async services. Me: How do you receive messages? Bob: A message bus Me: ...

- @JoeEmison: These articles on serverless remind me of articles on NoSQL from a few years ago. FaaS may have low adoption b/c of the req'd architectures.

- @Jason: We have 30-60% open rates for http://inside.com emails vs 1% for app downloads!

- @adrianco: Split brain syndrome: half your brain thinks message buses are reliable. Other half is wondering how to recover from split brain syndrome.

- @dbrady: The older I get, the less I care about making tech decisions right and the more I care about retaining the ability to change a wrong one.

- @littleidea: "Automation code, like unit test code, dies when the maintaining team isn’t obsessive about keeping the code in sync with the codebase."

- @adulau: I don't ask for bug bounties, fame, cash or even tshirt. I just want a good security point of contact to fix the issues.

- StorageMojo: most of the SSD vendors don’t make AFAs [all flash arrays]. They have little to lose by pushing NVMe/PCIe SSDs for broad adoption.

- cookiecaper: I mean, that's not really AWS's problem, is it? Outages happen. If you have a mission-critical service like health care, you really shouldn't write systems with single points of failure like this, especially not systems that depend on something consumer-grade like S3.

- plgeek: To me his main point is there is a spectrum of what you might consider evidence/proof. However, in Software Engineering there have been low standards set, and it's really not acceptable to continue with low standards. He is not saying the only sort of acceptable evidence is a double blind study.

- n00b101: I asked an Intel chip designer about this and his opinion was that asynchronous processors are a "fantasy." His reasoning was that an asynchronous chip would still need to synchronize data communication within the chip. Apparently global clock synchronization accounts for about 20% of the power usage of a synchronous chip. In the asynchronous case, if you had to synchronize every communication, then the cost of communication is doubled.

- Anti-virus software uses fingerprinting as a detection technique. Surprise, nature got there first. Update: CRISPR. Bacteria grab pieces of DNA from viruses and store them. This lets them recognize a virus later. When a virus enters a bacteria the bacteria will send out enzymes to combat the invader. Usually the bacteria dies. Sometimes the bacteria wins. The bacteria sends out enzymes to find stray viruses and cut the enemy DNA into small pieces. Those enzymes take the little bits of DNA and splice them into the bacteria's own DNA. DNA is used as a memory device. Next time the virus shows up the bacteria creates molecular assassins that contain a copy of the virus DNA. If there's a pattern match then kill it. The protein looks something like a clam shell. It has a copy of the virus DNA. Whenever it bumps into some virus DNA it pulls apart the DNA, unzips it, reads it, if it's not the right one it moves on. If the RNA has the same sequence then molecular blades come out and chop. Like smart scissors. This is CRISPR.

- Videos from microXchg 2017 are now available.

- A natural disaster occurred. S3 went down. Were you happy with how your infrastructure responded? @spire was. Mitigating an AWS Instance Failure with the Magic of Kubernetes: "Kubernetes immediately detected what was happening. It created replacement pods on other instances we had running in different availability zones, bringing them back into service as they became available. All of this happened automatically and without any service disruption, there was zero downtime. If we hadn’t been paying attention, we likely wouldn’t have noticed everything Kubernetes did behind the scenes to keep our systems up and running." How do you make this happen?: Distribute nodes across multiple AZs; Nodes should have capacity to handle at least one node failure; Use at least 2 pods per deployment; Use readiness and liveness probes.

- The fickle fat finger of fate struck S3. Note how a person is not blamed. The tools are to blame. Nice. Summary of the Amazon S3 Service Disruption in the Northern Virginia (US-EAST-1) Region: an authorized S3 team member using an established playbook executed a command which was intended to remove a small number of servers for one of the S3 subsystems that is used by the S3 billing process. Unfortunately, one of the inputs to the command was entered incorrectly and a larger set of servers was removed than intended...Removing a significant portion of the capacity caused each of these systems to require a full restart. While these subsystems were being restarted, S3 was unable to service requests...We have modified this tool to remove capacity more slowly and added safeguards to prevent capacity from being removed when it will take any subsystem below its minimum required capacity level.
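The two safeguards Amazon describes (remove capacity slowly, and refuse a removal that would take a subsystem below its minimum) can be sketched in a few lines. This is a minimal illustration, not Amazon's tool: `Subsystem`, `CapacityError`, `plan_removal`, and the `max_per_step` parameter are all hypothetical names invented for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Subsystem:
    name: str
    active_servers: int
    min_required: int  # hypothetical capacity floor for this subsystem


class CapacityError(Exception):
    """Raised when a removal request would breach the capacity floor."""


def plan_removal(subsystem: Subsystem, requested: int, max_per_step: int = 2) -> List[int]:
    """Return the batch sizes to remove, or raise if the floor would be breached."""
    if requested < 0:
        raise ValueError("requested must be non-negative")
    # Safeguard 1: never go below the minimum required capacity.
    if subsystem.active_servers - requested < subsystem.min_required:
        raise CapacityError(
            f"removing {requested} servers would take {subsystem.name} "
            f"below its minimum of {subsystem.min_required}"
        )
    # Safeguard 2: remove capacity slowly, in small batches.
    batches: List[int] = []
    remaining = requested
    while remaining > 0:
        step = min(max_per_step, remaining)
        batches.append(step)
        remaining -= step
    return batches
```

With a check like this, a mistyped input fails fast instead of taking out a large set of servers, and a valid request is spread over several small steps that an operator can stop midway.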

- Maybe Skynet could have been prevented if there was some sort of phone support? With all the ferocious weather in the Bay Area many roads close in an instant. Drivers desperate to find a way home are blocked at every turn. It's raining, the territory is unfamiliar, Google Maps has found a route! Unfortunately Google Maps decided to redirect a full commute of cars down a local person's driveway, much to the dismay of all involved. No problem, all you have to do is call Google...

- Turn about is fair play. Seems pros are dropping Macs. QuickBooks Desktop for Mac is being terminated. Mac 2016 will be the last version available. No link because this was from an email. Is this part of a general move to online everything? Will Windows be dropped soon? Or is this just a Mac thing?

- Modern tech interviews suck by design. I haven't seen the content for this course, because it costs $$$, but it looks like it might help. Grokking the System Design Interview

- blantonl: It took me about 15 minutes to spin up the instances on Google Cloud that archive these objects and upload them to Google Storage. While we didn't have access to any of our existing uploaded objects on S3 during the outage, I was able to mitigate not having the ability to store any future ongoing objects. (our workload is much more geared towards being very very write heavy for these objects). It turns out this cost leveraging architecture works quite well as a disaster recovery architecture.

- How do you create a site that handles Olympic level loads? Here are seven techniques the BBC uses to create sites and apps that can handle millions of users simultaneously: Caches are your best friend; Use a CDN; Add more servers; Optimise page generation; Split the work; Find your limit; Compromise, but not on speed. Nothing revolutionary, but perhaps that's the point.

- Riot Games Messaging Service: Once we have the initial state, how do we receive updates?... Riot Messaging Service (RMS for short)...a backend service that allows other services to publish messages and enables clients to receive them...similar to a mobile push notification service...RMS consists of 2 main tiers: RMS Edge and RMS Routing...RMS Edge (RMSE) tier is a collection of independent servers responsible for hosting player client connections...League clients connect to an RMSE node sitting behind a load balancer using an encrypted WebSocket connection. The connection is established after successful authentication, persists throughout the player’s session, and is terminated on logout...Because RMSE servers don't know about each other, they are 100% linearly scalable...RMS Routing (RMSR) tier in turn is a layer of clustered servers responsible for a global view of all client sessions across all RMSE servers...hold a global, distributed table mapping player identifiers to RMSE nodes that keep their sessions...also processes incoming published messages from other services and routes them to proper RMSE nodes...keep track of the health of RMSE tier nodes and perform necessary cleanups whenever something bad happens to one of them...we haven't had a single failure or dropped message.
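The routing tier's job, as described, boils down to a table from player identifiers to the edge node holding each session, plus cleanup when an edge node dies. Here is a toy, single-process sketch of that idea. It is not Riot's implementation (theirs is a clustered, distributed table), and `RoutingTable` and its method names are made up for illustration.

```python
from typing import Dict, List, Optional, Tuple


class RoutingTable:
    """Toy stand-in for a routing tier's player -> edge-node table."""

    def __init__(self) -> None:
        self._player_to_edge: Dict[str, str] = {}

    def register(self, player_id: str, edge_node: str) -> None:
        # Called when a player's session is established on an edge node.
        self._player_to_edge[player_id] = edge_node

    def unregister(self, player_id: str) -> None:
        # Called on logout, when the session is terminated.
        self._player_to_edge.pop(player_id, None)

    def route(self, player_id: str, message: str) -> Optional[Tuple[str, str]]:
        # Find the edge node that should deliver this published message.
        edge = self._player_to_edge.get(player_id)
        if edge is None:
            return None  # player is offline; nothing to deliver
        return (edge, message)

    def drop_edge(self, edge_node: str) -> List[str]:
        # Cleanup after an edge node failure: forget every session it held.
        lost = sorted(p for p, e in self._player_to_edge.items() if e == edge_node)
        for player_id in lost:
            del self._player_to_edge[player_id]
        return lost
```

Because the edge nodes never appear as anything but opaque names in the table, adding another edge node needs no coordination between edges, which is the property the post credits for linear scalability.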

- For your consideration, a new database with a different approach. 120,000 distributed consistent writes per second with Calvin: Unlike other distributed databases that rely on hardware clocks or multi-phase commits, FaunaDB’s transaction consistency algorithm is based on Calvin. Calvin is designed for high throughput regardless of network latency, and was the work of Alexander Thomson and others from Daniel Abadi’s lab at Yale. Calvin’s primary trade-off is that it doesn’t support session transactions, so it’s not well suited for SQL. Instead, transactions must be submitted atomically. Session transactions in SQL were designed for analytics, specifically human beings sitting at a workstation. They are pure overhead in a high-throughput operational context.

- The Real Difference Between Google and Apple: The most notable difference we see is the presence of the group of highly connected, experienced ‘super inventors’ at the core of Apple compared to the more evenly dispersed innovation structure in Google. This seems to indicate a top-down, more centrally controlled system in Apple vs. potentially more independence and empowerment in Google.

- Challenges of Serverless 2017: 1. Problem, not Service focused documentation and content; 2. Better low level tooling without additional abstractions; 3. Make it easier to include new features and services into your infrastructure; 4. Testing, Monitoring and Operations; 5. Ignore Multi Provider Compatibility. So start breaking old habits. Realize one tool does not fit all for infrastructure and deployment. And we need a better onboarding and deployment experience.

- Meetup with an early positive experience report of using Google Cloud Functions. Securing container borders with cloud functions: Google Cloud Functions provided a great way to implement a sustainable, reliable, and managed solution to secure our container borders. We care as much about development velocity as we do security.

- New Studies Illustrate How Gamers Get Good: people who played the most matches per week (more than 64) had the greatest increase in skill over time. With "StarCraft 2," the researchers studied hundreds of matches to see what elite players did different from less-skilled players; they learned elite players used hotkeys, or custom key shortcuts, to save time. Skilled gamers also warmed up their reflexes before gameplay by scrolling rapidly through their hotkeys. The results of both studies indicate skill acquisition derives from frequent but not excessive practice and unique, consistent rituals.

- Yes, polling works, but the service you are polling still needs a queue. Then you'll find you need faster notification so you'll end up fat pushing events. Then events can be dropped, out of order, etc, so you end up with a complex syncing service. Another reason why Messaging Queues Suck: A better way is for the customer service to poll the Order service periodically. Every 1/2 hour, a REST GET of ‘Give me a list of customers who have made orders since xxxx’ can be issued to the Order service.
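To make the "orders since xxxx" idea concrete, here is a minimal sketch of a poller that remembers a cursor between polls. It uses an in-memory stand-in instead of a real REST call, and `OrderService`, `customers_since`, and `CustomerPoller` are hypothetical names, not anything from the linked article.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class OrderService:
    """In-memory stand-in for the Order service (hypothetical)."""
    orders: List[Tuple[int, str]] = field(default_factory=list)  # (timestamp, customer)

    def customers_since(self, since: int) -> List[Tuple[int, str]]:
        # Stands in for: GET "customers who have made orders since <since>".
        return [(ts, customer) for ts, customer in self.orders if ts > since]


@dataclass
class CustomerPoller:
    service: OrderService
    cursor: int = 0  # timestamp of the newest order seen so far

    def poll(self) -> List[str]:
        rows = self.service.customers_since(self.cursor)
        if rows:
            # Advance the cursor so the next poll only sees newer orders.
            self.cursor = max(ts for ts, _ in rows)
        return [customer for _, customer in rows]
```

Even this toy shows where the trouble starts: an order that is recorded late with a timestamp at or before the cursor is silently skipped, which is the kind of gap that pushes a polling design toward a more complex syncing service.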

- This simple algorithm has been found 5 times and forgotten and has formed the basis of algorithms in machine learning, optimization, game theory, economics, biology, and more. The Reasonable Effectiveness of the Multiplicative Weights Update Algorithm: Set all the object weights to be 1. For some large number of rounds: { Pick an object at random proportionally to the weights; Some event happens; Increase the weight of the chosen object if it does well in the event; Otherwise decrease the weight }
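The loop quoted above translates almost line for line into code. The sketch below is one possible reading, not the article's own implementation: the update factors `(1 + eta)` and `(1 - eta)`, the `did_well` callback, and the fixed `seed` are assumptions chosen for the example.

```python
import random
from typing import Callable, List


def multiplicative_weights(
    num_objects: int,
    rounds: int,
    did_well: Callable[[int, int], bool],
    eta: float = 0.1,
    seed: int = 0,
) -> List[float]:
    """Run the multiplicative weights loop and return the final weights."""
    rng = random.Random(seed)
    weights = [1.0] * num_objects  # set all the object weights to be 1
    for t in range(rounds):
        # Pick an object at random proportionally to the weights.
        chosen = rng.choices(range(num_objects), weights=weights, k=1)[0]
        # Some event happens; did_well reports how the chosen object fared.
        if did_well(chosen, t):
            weights[chosen] *= 1 + eta  # reward
        else:
            weights[chosen] *= 1 - eta  # penalty
    return weights
```

For example, `multiplicative_weights(3, 200, lambda obj, t: obj == 2)` rewards only object 2, so its weight can only grow while the others can only shrink, and later rounds pick it more and more often.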

- Maybe green goo can take over the world? Scientists reveal new super-fast form of computer that ‘grows as it computes’: But our new computer doesn’t need to choose, for it can replicate itself and follow both paths at the same time, thus finding the answer faster. This ‘magical’ property is possible because the computer’s processors are made of DNA rather than silicon chips. All electronic computers have a fixed number of chips.

- upspin/upspin: A framework for naming everyone's everything. nzhenry provides some context: IPFS is a distributed file system while Upspin is a decentralized one. My understanding is the main benefit of IPFS is reducing load on servers and allowing content to live on after the server dies (assuming the content is sufficiently interesting) in a similar way to BitTorrent. It does not provide access control. Upspin doesn't aim to solve the problem of performance. It is aimed at individuals and organisations who want to share content while retaining full control of their content and who has access.

- MySQL Ransomware: Open Source Database Security Part 3: Don’t use a publicly accessible IP address with no firewall configured; Don’t use a root@% account, or other equally privileged access account, with poor MySQL isolation; Don’t configure those privileged users with a weak password, allowing for brute force attacks against the MySQL service

- Nice deep dive. Lots of colorful diagrams. Redis Pub/Sub under the hood: This system may surprise you: multiple clients subscribed to the same pattern do not get grouped together! If 10,000 clients subscribe to food.*, you will get a linked list of 10,000 patterns, each of which is tested on every publish! This design assumes that the set of pattern subscriptions will be small and distinct.
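The cost described in that excerpt is easy to see in a toy model. The sketch below is not Redis code: it keeps one entry per (pattern, client) subscription in a Python list rather than a linked list, and it uses `fnmatch.fnmatchcase` as a rough stand-in for Redis's glob-style matching. `PatternPubSub` is a hypothetical name.

```python
from fnmatch import fnmatchcase
from typing import List, Tuple


class PatternPubSub:
    """Toy model: one ungrouped entry per pattern subscription."""

    def __init__(self) -> None:
        self.patterns: List[Tuple[str, str]] = []  # (pattern, client_id)

    def psubscribe(self, client_id: str, pattern: str) -> None:
        # Identical patterns from different clients are NOT merged.
        self.patterns.append((pattern, client_id))

    def publish(self, channel: str, message: str) -> Tuple[List[str], int]:
        """Return (clients that receive the message, number of pattern tests)."""
        receivers: List[str] = []
        tests = 0
        for pattern, client_id in self.patterns:
            tests += 1  # every stored pattern is tested on every publish
            if fnmatchcase(channel, pattern):
                receivers.append(client_id)
        return receivers, tests
```

Subscribing 10,000 clients to `food.*` in this model means every publish performs 10,000 pattern tests, even for a channel such as `drink.tea` that matches none of them.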

- It's good to know when humans have shuffled off this mortal coil our solar powered, self-repairing proxies will continue the fight. Even good bots fight: The case of Wikipedia: We find that, although Wikipedia bots are intended to support the encyclopedia, they often undo each other’s edits and these sterile “fights” may sometimes continue for years. Unlike humans on Wikipedia, bots’ interactions tend to occur over longer periods of time and to be more reciprocated. Yet, just like humans, bots in different cultural environments may behave differently. Our research suggests that even relatively “dumb” bots may give rise to complex interactions, and this carries important implications for Artificial Intelligence research

- facebookincubator/prophet: a procedure for forecasting time series data. It is based on an additive model where non-linear trends are fit with yearly and weekly seasonality, plus holidays. It works best with daily periodicity data with at least one year of historical data. Prophet is robust to missing data, shifts in the trend, and large outliers.

- skarupke/flat_hash_map: I think I wrote the fastest hash table there is. Details in I Wrote The Fastest Hashtable.

- INTEGER DIVISION BY CONSTANTS: In this section we consider some methods for dividing by constants that do not use the multiply high instruction, or a multiplication instruction that gives a doubleword result. We show how to change division by a constant into a sequence of shift and add instructions, or shift, add, and multiply for more compact code.
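As a concrete instance of turning division by a constant into shifts and adds, here is a sketch of unsigned division by 3 for 32-bit values, written in the style of the shift-and-add routines that section covers. The function name `divide_by_3` is made up, and the code assumes `0 <= n < 2**32`. It builds an estimate of n/3 from the binary expansion 1/3 = 0.010101..., then fixes up the small remaining error.

```python
def divide_by_3(n: int) -> int:
    """Unsigned n // 3 using shifts and adds; assumes 0 <= n < 2**32."""
    if not 0 <= n < 2**32:
        raise ValueError("n must fit in an unsigned 32-bit integer")
    # 1/3 = 0.01010101... in binary, so sum shifted copies of n.
    q = (n >> 2) + (n >> 4)  # q ~ n * 0.0101 (binary)
    q = q + (q >> 4)         # q ~ n * 0.01010101
    q = q + (q >> 8)
    q = q + (q >> 16)
    # The estimate never overshoots; the remainder is small and non-negative.
    r = n - q * 3
    # (11 * r) >> 5 equals r // 3 for the small remainders that occur here.
    return q + ((11 * r) >> 5)
```

The multiplications by 3 and 11 are themselves cheap shift-and-add operations (`(q << 1) + q` and `(r << 3) + (r << 1) + r`), so no division or multiply-high instruction is needed.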

- Calvin: Fast Distributed Transactions for Partitioned Database Systems: Calvin is a practical transaction scheduling and data replication layer that uses a deterministic ordering guarantee to significantly reduce the normally prohibitive contention costs associated with distributed transactions. Unlike previous deterministic database system prototypes, Calvin supports disk-based storage, scales near-linearly on a cluster of commodity machines, and has no single point of failure. By replicating transaction inputs rather than effects, Calvin is also able to support multiple consistency levels—including Paxos-based strong consistency across geographically distant replicas—at no cost to transactional throughput.
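The phrase "replicating transaction inputs rather than effects" is easier to picture with a toy example. The sketch below is not Calvin's scheduler or replication protocol. It only illustrates the underlying idea: if every replica receives the same ordered log of transaction inputs and applies it deterministically, the replicas end in the same state without shipping results to each other. The `Transaction` shape and `apply_in_order` are hypothetical.

```python
from typing import Dict, List, Tuple

# (source account, destination account, amount): a transaction *input*.
Transaction = Tuple[str, str, int]


def apply_in_order(initial: Dict[str, int], log: List[Transaction]) -> Dict[str, int]:
    """Deterministically apply an agreed-upon, ordered log of transfers."""
    state = dict(initial)
    for src, dst, amount in log:
        # Deterministic rule: a transfer with insufficient funds is a no-op.
        if state.get(src, 0) >= amount:
            state[src] -= amount
            state[dst] = state.get(dst, 0) + amount
    return state


# Every replica gets the same inputs in the same order.
log: List[Transaction] = [("a", "b", 7), ("a", "b", 5), ("b", "a", 2)]
replica_1 = apply_in_order({"a": 10, "b": 0}, log)
replica_2 = apply_in_order({"a": 10, "b": 0}, log)
```

Both replicas reach the same balances here because the outcome depends only on the starting state and the order of the log, which is why agreeing on the order up front can replace coordination at commit time.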

- Algorithms and Data Structures for Efficient Free Space Reclamation in WAFL: We analyze how modern distributed storage systems behave in the presence of file-system faults such as data corruption and read and write errors. We characterize eight popular distributed storage systems and uncover numerous bugs related to file-system fault tolerance. We find that modern distributed systems do not consistently use redundancy to recover from file-system faults: a single file-system fault can cause catastrophic outcomes such as data loss, corruption, and unavailability.

- More quick links from Greg Linden.