Stuff The Internet Says On Scalability For November 23rd, 2018

Wake up! It's HighScalability time:

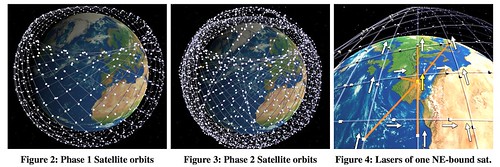

Curious how SpaceX's satellite constellation works? Here's some fancy FCC reverse engineering magic. (Delay is Not an Option: Low Latency Routing in Space, Murat)

Do you like this sort of Stuff? Please support me on Patreon. I'd really appreciate it. Know anyone looking for a simple book explaining the cloud? Then please recommend my well reviewed (30 reviews on Amazon and 72 on Goodreads!) book: Explain the Cloud Like I'm 10. They'll love it and you'll be their hero forever.

- 5: iOS 12 jailbreaks; ~43 million: dataset of atomic Wikipedia edits; 1.88 exaops: peak throughput of the Summit supercomputer; 2 million: ESPN subscribers lost to cord cutters; 302: neurons that show simple learning and memory behavior; 224: seconds to train ResNet-50 on ImageNet to approximately 75% accuracy; 120 milliseconds: space shuttle tolerances; $1 billion: Airbnb revenue; 900+ mph: tip of a whip;

- Quotable Quotes:

- @JeffDean: This thread is important. Bringing the best and brightest students from around the world to further their studies in the U.S. has been a key to success in many technological endeavors and to lead in many scientific domains.

- @davidgerard: "crypto has a provably lower carbon footprint than fiat" Actually, this is trivially false. Bitcoin: 0.1% of electricity, for 7 tps. THE WHOLE REST OF CIVILISATION: 99.9% of electricity, for more than 7000 tps.

- Paris Martineau: Lotti recalls the investor saying that if she wanted Lashify to succeed, quality didn’t matter, nor did customer satisfaction—only influencers. And they didn’t come cheap. She was told to expect to shell out $50,000 to $70,000 per influencer just to make her company’s name known, an insane amount for a new startup. There was no way around it; that’s just how things worked.

- @KevinBankston: Ford's CEO just said on NPR that the future of profitability for the company is all the data from its 100 million vehicles (and the people in them) they'll be able to monetize. Capitalism & surveillance capitalism are becoming increasingly indistinguishable (and frightening).

- Waqas Dhillon: The goal of in-database machine learning is to bring popular machine learning algorithms and advanced analytical functions directly to the data, where it most commonly resides – either in a data warehouse or a data lake. While machine learning is a common mechanism used to develop insights across a variety of use cases, the growing volume of data has increased the complexity of building predictive models, since few tools are capable of processing these massive datasets. As a result, most organizations are down-sampling, which can impact the accuracy of machine learning models and create unnecessary steps in the predictive analytics process.

- combatentropy: Some day I would like a powwow with all you hackers about whether 99% of apps need more than a $5 droplet from Digital Ocean, set up the old-fashioned way, LAMP --- though feel free to switch out the letters: BSD instead of Linux, Nginx instead of Apache, PostgreSQL instead of MySQL, Ruby or Python instead of PHP.

- Adam Cheyer~ AI breaks down to knowing and doing. Knowing companies want to aggregate all the information. For doing companies, the data used to accomplish a task is accessed over web services and APIs. The actual live data resides behind firewalls, and I want the assistant to mediate access to all those distributed data sources.

- @anchor: Spotify now accounts for 19% of podcast listening.

- @codinghorror: “when a travel company surveyed 1,000 kids between the ages of six and 17 about what they wanted to be when they grew up, 75 percent said they’d like to be a YouTuber or vlogger”

- Geoffrey A. Fowler: “Batteries improve at a very slow pace, about 5 percent per year,” says Nadim Maluf, the CEO of a Silicon Valley firm called Qnovo that helps optimize batteries. “But phone power consumption is growing up faster than 5 percent.” Blame it on the demands of high-resolution screens, more complicated apps and, most of all, our seeming inability to put the darn phone down.

- kjw: A move that was necessary. Diane wasn't able to shift the existing Google culture to one where the enterprise customer's needs came first. (Observable as reflected in their pricing, sales, and support challenges.) Thomas now has a similarly tough job navigating the organ rejection risk of transplanting anything that looks like Oracle culture and practices.

- manigandham: It's not just about scaling. That seems to be the only thing people talk about because it sounds sexy but the reality is about operations. Kubernetes makes deployments, rolling upgrades, monitoring, load balancing, logging, restarts, and other ops very easy. It can be as simple as a 1-line command to run a container or several YAML files to run complex applications and even databases. Once you become familiar with the options, you tend to think of running all software that way and it becomes really easy to deploy and test anything.

- Ed Sperling: Multiple reports and analyses point to 3nm design costs topping $1 billion, and while the math is still speculative, there is no doubt that the cost per transistor or per watt is going up at each new node. That makes it hard for fabless chip companies to compete, but it’s less of an issue for systems companies such as Apple, Google and Amazon, all of which are now developing their own chips. So rather than amortizing cost across billions of units, which chip companies do, these companies can bury the development costs in the price of a system.

- @GossiTheDog: This is amazing - a dude in Norway unplugging the internet in 1988 to stop spread of the Morris network worm. At the time it all routed through one building.

- AWS: Today we are making Auto Scaling even more powerful with the addition of predictive scaling. Using data collected from your actual EC2 usage and further informed by billions of data points drawn from our own observations, we use well-trained Machine Learning models to predict your expected traffic (and EC2 usage) including daily and weekly patterns. The model needs at least one day’s worth of historical data to start making predictions; it is re-evaluated every 24 hours to create a forecast for the next 48 hours.
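AWS doesn't publish the model behind predictive scaling, so as a rough intuition for how one day of history can seed a 48-hour forecast, here is a seasonal-average baseline in Python. It is a toy under stated assumptions, not AWS's algorithm: it assumes hourly samples and a purely daily pattern, and `seasonal_forecast` is a hypothetical helper.

```python
from typing import List, Sequence


def seasonal_forecast(history: Sequence[float], period: int = 24, horizon: int = 48) -> List[float]:
    """Forecast `horizon` future points from hourly `history`.

    Builds an average daily profile from every complete period in the
    history (for hourly samples, period=24 means one day) and repeats it.
    """
    days = len(history) // period
    if days < 1:
        raise ValueError("need at least one full period (e.g. one day) of history")
    # Keep only the most recent complete periods.
    recent = history[len(history) - days * period:]
    # Average the same hour-of-day across all complete days.
    profile = [
        sum(recent[d * period + h] for d in range(days)) / days
        for h in range(period)
    ]
    # Repeat the daily profile forward over the forecast horizon.
    return [profile[i % period] for i in range(horizon)]
```

Re-running a function like this every 24 hours over a growing history mirrors the cadence AWS describes; the real service also draws on patterns observed across its fleet and weekly seasonality, which this sketch ignores.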

- @ben11kehoe: This is HUGE: you can now execute queries against Aurora through HTTP, basically eliminating the friction of using it with Lambda. This opens up a whole new world of serverless data modeling on AWS

- Geoff Tate~ 10% of FPGA sales are in aerospace. 30-100x faster using an FPGA. They are reconfigurable, so not hardwired like ASICs. Cutting weight is critical. Size and power are related to weight. A bigger circuit board requires more of both. Integration of components lets you decrease size, power and weight. You can embed an FPGA on an ASIC, which cuts out SerDes and reduces power.

- Matthew Hutson: In the merge scenarios, replacing 10% of the regular cars with self-driving cars also increased overall traffic flow, in some cases doubling the average car speed. The self-driving cars sped up traffic in part by keeping a buffer between themselves and the cars in front of them, forcing them to brake less often. Giving the algorithm control over traffic lights in a Manhattan-style traffic grid increased the number of cars passing through by 7%.

- @fogus: You want to stay relevant as a software developer for the next 10 years? These are 3 major things you should focus on: - ActiveX - OLE - ATL (c) 1998

- @jeremy_daly: 11 KB packet size to return 5 rows of data with only 5 columns (an id, varchar, and three timestamps) from #Aurora #Serverless with the HTTP API. Yeesh. 😬 And it took 228ms. 🤦‍♂️ #stillinbeta

- danijelb: So at this point it seems we are going back to how software was developed before web. Clients interact directly with database through a query language. In this case GraphQL is an equivalent of SQL and "custom GraphQL resolvers" are an equivalent of stored procedures, triggers and other business logic. I wonder if we will see databases expose GraphQL access layers directly.

- meritt: As someone who runs a very successful data business on a simple stack (php, cron, redis, mariadb), I definitely agree. We've avoided the latest trends/tools and just keep humming along while outperforming and outdelivering our competitors. We're also revenue-funded so no outside VC pushing us to be flashy, but I will definitely admit it makes hiring difficult. Candidates see our stack as boring and bland, which we make up for in comp, but for a lot of people that's not enough. If you want to run a reliable, simple, and profitable business, keep your tech stack equally simple. If you want to appeal to VCs and have an easy time recruiting, you need to be cargo cult, even if it's not technically necessary.

- @jasongorman: 1998: "You should build systems out of single-purpose loosely-coupled components" 2008: "You should build systems out of single-purpose loosely-coupled components" 2018: "You should build systems out of single-purpose loosely-coupled components" Technology changes so fast!

- @yoshihirok: Azure Cosmos DB by Mark Russinovich - Multi-master - 99.999 SLA < 10 ms at 99th percentile ... #Azure #MSIgnite Great, Great, and Great

- @DanielJonesEB: AWS instance type availability is not uniform across AZs. AWS assign AZs randomly: your zone A is not the same as mine. AWS do not provide an API to discover which instance types are available in an AZ. Only way to find out is to create an instance and have it fail. *facepalm*

- @JohnTreadway: When people tell me their private cloud is cheaper than AWS my response is IDGAF. It's never been about cost. Never. Did you hear me? Never! Does your cloud have 1/10th of the services of AWS, Azure, or Google? No? Private cloud is fine - but it's not the apples-to-apples.

- mistralol: The New Linus: "I really like you as a person and all. But that code will only get committed over my dead body"

- APS: The researchers found two types of waves affect the structure of a peloton. First, the researchers found a wave that moves back and forth along the peloton, usually due to a rider suddenly hitting the brakes and others slowing to avoid a collision. The other type of wave is a transverse wave caused when riders move to the left or right to avoid an obstacle or to gain an advantageous position.

- Computing History at Bell Labs: My favorite parts of the talk are the description of the bi-quinary decimal relay calculator and the description of a team that spent over a year tracking down a race condition bug in a missile detector (reliability was king: today you'd just stamp “cannot reproduce” and send the report back). But the whole thing contains many fantastic stories. It's well worth the read or listen. I also like his recollection of programming using cards: “It's the kind of thing you can be nostalgic about, but it wasn't actually fun.”

- nic: Basically the high performance exhibited by Julia is made possible by the LLVM project. LLVM is a technology suite that can be used for building optimizing compilers, and is notably used in Clang, a C and C++ compiler.

- Tim Bray: It seems inevitable to me that, particularly in the world of high-throughput high-elasticity cloud-native apps, we’re going to see a steady increase in reliance on persistent connections, orchestration, and message/event-based logic. If you’re not using that stuff already, now would be a good time to start learning. But I bet that for the foreseeable future, a high proportion of all requests to services are going to have (approximately) HTTP semantics, and that for most control planes and quite a few data planes, REST still provides a good clean way to decompose complicated problems, and its extreme simplicity and resilience will mean that if you want to design networked apps, you’re still going to have to learn that way of thinking about things.

- @allspaw: A signal of this time in the industry is to disregard *what* people are building in favor of assuming they’re building it too slowly. Related: I worked at Friendster.

- Benedict Evans: Ecommerce is still only a small fraction of retail spending, and many other areas that will be transformed by software and the internet in the next decade or two have barely been touched. Global retail is perhaps $25 trillion dollars, after all.

- Netflix: We conducted experiments to determine when to load images and how many to load. We found the best performance by simply rendering only the first few rows of DOM and lazy loading the rest as the member scrolled. This resulted in a decreased load time for members who don’t scroll as far, with a tradeoff of slightly increased rendering time for those who do scroll. The overall result was faster start times for our video previews and full-screen playback.

- Murat: Since LEO satellites are close to Earth, their communication latency is low. Furthermore, since the speed of light in vacuum is about 1.5 times faster than in fiber/glass, communicating over LEO satellites becomes a viable alternative to communicating over fiber, especially for reducing latency in WAN deployments. When this gets built, it will change the Internet: by some accounts, up to 50% of traffic may take this route in the future.

- @techmilind: Great old episode of @adrianco talking scheduling on Software Engineering Daily, reviewing the past of scheduling. Past has been with demand-side scheduling. Fixed resources, variable demand. The world today is variable demand, variable resources. 1/n

- @patio11: There are some really weird folk beliefs in programmer culture about which bits of infrastructure count as hard, like e.g. compilers (which are routinely scratchwritten by undergrads) and scheduling systems (again, undergrads) versus e.g. rendering engines.

- Unknown: There’s only one hard problem in computer science: recognising that cache invalidation errors are misnamed. They’re just off-by-one errors in the time domain.

- Murat: Routing multihop over satellites via laser, 90ms latency is achievable, compared to 160ms over fiber. This is a big improvement, one that financial markets would pay good money for.
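The latency argument in the Murat quotes is just propagation arithmetic, so here is a back-of-the-envelope sketch in Python. The 1.5x fiber slowdown comes from the quote (real silica fiber has a refractive index of roughly 1.47); the 10,000 km route and the 550 km orbital altitude are hypothetical, and the model ignores switching, queuing, and the fact that real fiber routes are not straight lines.

```python
C_VACUUM_KM_PER_S = 299_792.458  # speed of light in vacuum
FIBER_SLOWDOWN = 1.5             # per the quote; light in fiber travels at ~c / 1.5


def one_way_delay_ms(path_km: float, slowdown: float = 1.0) -> float:
    """Propagation delay in milliseconds over `path_km` at c / slowdown."""
    return path_km * slowdown / C_VACUUM_KM_PER_S * 1000.0


def satellite_path_km(ground_km: float, altitude_km: float) -> float:
    """Crude path: straight up to the constellation, across, and back down."""
    return ground_km + 2 * altitude_km


if __name__ == "__main__":
    ground = 10_000.0  # hypothetical route length
    altitude = 550.0   # hypothetical LEO altitude
    fiber = one_way_delay_ms(ground, FIBER_SLOWDOWN)
    space = one_way_delay_ms(satellite_path_km(ground, altitude))
    print(f"fiber: {fiber:.1f} ms, satellite: {space:.1f} ms")
```

Running it prints `fiber: 50.0 ms, satellite: 37.0 ms`: even with the extra climb to orbit and back, the vacuum path wins once the route is long enough.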

- Steven Johnson: A growing number of scholars, drawn from a wide swath of disciplines — neuroscience, philosophy, computer science — now argue that this aptitude for cognitive time travel, revealed by the discovery of the default network, may be the defining property of human intelligence.

- Scott Manley: Apollo 14 almost never made it to the lunar surface thanks to a hardware failure which caused a short circuit in the abort switch. With the computer seeing the abort switch enabled the software team back on earth had a limited amount of time to figure out how to make the computer ignore the erroneous signal while still performing the landing. This required tweaking program state in memory while the program was running, a delicate operation with dire consequences for failure. No pressure guys.

- Ann Steffora Mutschler: researchers imagine that eventually, thermal transistors could be arranged in circuits to compute using heat logic, much as semiconductor transistors compute using electricity. But while excited by the potential to control heat at the nanoscale, the team said this technology is comparable to where the first electronic transistors were some 70 years ago, when even the inventors couldn’t fully envision what they had made possible.

- Mark LaPedus: For NAND, “we do expect prices to flatten and decline in 2019, but haven’t seen a price collapse yet,” Feldhan said. “Semico believes that memory will see significant price declines in 2019, especially in the second half of 2019.” Handy, meanwhile, said: “Our outlook for 2019 is the same as it has been for a few years–we anticipate a complete absence of gross profits for NAND flash and DRAM for the entire year. These chips will sell at cost.”

- Werner Vogels: Each week, the team's job is to find something that shifts the durations left and aggregate time down by looking at query shapes to find the largest opportunities for improvement. Doing so has yielded impressive results over the past year. On a fleet-wide basis, repetitive queries are 17x faster, deletes are 10x faster, single-row inserts are 3x faster, and commits are 2x faster. I picked these examples because they aren't operations that show up in standard data warehousing benchmarks, yet are meaningful parts of customer workloads.

- The original Siri app was far more powerful than what Apple later released. Why? Scaling a service to hundreds of millions of users requires compromise. Adam Cheyer, Co-founder of Siri and Viv and Engineering Lead Behind Bixby 2.0.

- It took 18 months to commercialize the Siri app after acquisition. It had to be made to work in multiple languages. 15 new domains were built to represent all the apps on the phone, like calendar. It used to use cloud services like OpenTable, Yelp, Movie Tickets, etc. Now the endpoints are running on the phone. A new command system was needed in the cloud to operate and query data on the phone. Siri wasn't perfect, but it stimulated others to work on assistants. It showed people wanted this. It also shows the weakness of Apple's device-centric approach. Imagine how much farther along we'd be if Apple had stuck with an open services approach.

- You don't have to imagine. That would be Viv which is now the new Bixby on Samsung phones. Bixby acts as the glue orchestrating between different services. You don't have to remember how each skill works. Bixby's AI will dynamically autogenerate code to link services into a pipeline based on a catalogue of your concepts and actions. This adaptation code is written anew for each interaction.

- Bixby is a cloud service, so it's the same service regardless of how you access it. Developers get the exact same platform API as they use. Bixby turns natural language input into a structured intent. Bixby's planner takes the intent and builds an execution graph out of your models. It learns from your interactions. You like aisle seats? It learns your preferences.
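Bixby's actual planner isn't described in this detail, so here is a hypothetical Python sketch of the general idea of building a plan out of typed models: each action declares the concepts it consumes and the concept it produces, and the planner backward-chains from the goal to what the user already supplied. The `Action` type, the action names, and the linear (rather than graph-shaped) plan are all assumptions for illustration, not Bixby's API.

```python
from dataclasses import dataclass
from typing import List, Optional, Set, Tuple


@dataclass(frozen=True)
class Action:
    name: str                # hypothetical action identifier
    inputs: Tuple[str, ...]  # concepts the action consumes
    output: str              # concept the action produces


def plan(goal: str, known: Set[str], catalogue: List[Action]) -> Optional[List[str]]:
    """Backward-chain from `goal` to concepts already `known`.

    Returns action names in execution order, or None if no chain of
    catalogue actions can produce the goal.
    """
    steps: List[str] = []
    have = set(known)

    def produce(concept: str, visiting: Set[str]) -> bool:
        if concept in have:
            return True
        if concept in visiting:  # guard against cyclic action definitions
            return False
        for action in catalogue:
            if action.output != concept:
                continue
            mark, snapshot = len(steps), set(have)
            if all(produce(i, visiting | {concept}) for i in action.inputs):
                steps.append(action.name)
                have.add(concept)
                return True
            # This action could not be satisfied: undo any partial work.
            del steps[mark:]
            have.clear()
            have.update(snapshot)
        return False

    return steps if produce(goal, set()) else None


catalogue = [
    Action("GetLocation", (), "Location"),
    Action("FindRestaurant", ("Location", "Cuisine"), "Restaurant"),
    Action("BookTable", ("Restaurant", "Time"), "Reservation"),
]
print(plan("Reservation", {"Cuisine", "Time"}, catalogue))
```

This prints `['GetLocation', 'FindRestaurant', 'BookTable']`. The point is that nobody wrote that pipeline by hand; it falls out of the declared inputs and outputs, which is the property the talk attributes to Bixby's autogenerated glue.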

- Surprisingly, it was mentioned that Bixby can and will expand beyond Samsung devices. Clearly this is to entice developers to take the risk.

- Since Bixby uses other service providers (called Capsules), how do those services make money? What's the ecosystem play here for map providers, weather providers, etc?

- Trail of Bits @ Devcon IV Recap. Topics include: Using Manticore and Symbolic Execution to Find Smart Contract Bugs; Jay Little recovered and analyzed 30,000 self-destructed contracts, and identified possible attacks hidden among them.

- So Amazon now has 3 HQs. Does the number 3 ring a bell? The magic number when building fault tolerant systems is usually 3, based on the idea of Triple modular redundancy (TMR). You need three datacenters. Highly available storage systems keep three copies of a file in three different locations. Three nodes in a cluster. The minimum number of drives for RAID 4 is three. SpaceX uses a triple-redundant design in the Merlin flight computers. And don't forget The Three Musketeers or the Charmed Ones with their power of three. What does it mean? Amazon built a fault tolerant HQ architecture.
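Why three? In TMR, three replicas compute the same result and a voter takes the majority, so any single faulty replica is outvoted. Here is a minimal Python sketch of that voter; `tmr_vote` is a hypothetical name, and a real system would also have to worry about the voter itself failing.

```python
from typing import TypeVar

T = TypeVar("T")


def tmr_vote(a: T, b: T, c: T) -> T:
    """Return the majority value of three replica outputs.

    Masks one faulty replica; raises if all three disagree, since two
    simultaneous faults cannot be resolved by voting.
    """
    if a == b or a == c:
        return a
    if b == c:
        return b
    raise RuntimeError("no majority among the three replicas")
```

With two replicas you can detect a disagreement but not decide who is right; the third vote is what turns detection into correction.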

- LISA videos are now available. Topics include: Meltdown and Spectre; The Beginning, Present, and Future of Sysadmins; Introducing Reliability Toolkit: Easy-to-Use Monitoring and Alerting; Incident Management at Netflix Velocity; Designing for Failure: How to Manage Thousands of Hosts Through Automation.

- Performance or security? Choose one. Linus Torvalds: STIBP by default.. Revert?: When performance goes down by 50% on some loads, people need to start asking themselves whether it was worth it. It's apparently better to just disable SMT entirely, which is what security-conscious people do anyway. So why do that STIBP slow-down by default when the people who *really* care already disabled SMT? I think we should use the same logic as for L1TF: we default to something that doesn't kill performance. Warn once about it, and let the crazy people say "I'd rather take a 50% performance hit than worry about a theoretical issue".

- These two go well together. Know anyone who needs to grok AWS fast? Send them to My mental model of AWS. And Amazon has updated their AWS Well-Architected framework document: The AWS Well-Architected Framework provides architectural best practices across the five pillars for designing and operating reliable, secure, efficient, and cost-effective systems in the cloud. The Framework provides a set of questions that allows you to review an existing or proposed architecture. It also provides a set of AWS best practices for each pillar. Using the Framework in your architecture will help you produce stable and efficient systems, which allow you to focus on your functional requirements.

- Amazon's mis-ad-ventures have turned it from a superior content discovery engine into a pay-to-play, rent-seeking ad platform. You may not have noticed that Amazon has replaced also-boughts with paid ads. Authors report up to a 40% reduction in book sales. That's because also-boughts have been a great way for customers to find new content and for sellers to generate sales. Amazon wants a taste. What are authors supposed to do? Buy more ads, says Amazon. So not only does Amazon want its 30% off the top when you sell a book, it wants you to pay even more in advertising fees. The harder Amazon makes it to find content, the more pressure there is on authors (and other sellers) to advertise just to stay even. Amazon is not alone in this. This is what Facebook did. This is also what Apple is doing with its app ad program. Both Amazon and Apple want to increase revenue from services. The problem is that when the service is ads, the incentives are misaligned for both sides of the market. Customers are exposed to inferior signals in the form of ads, and sellers are strong-armed into reducing revenues to pay for ads. The deeper problem is that Amazon's gluttony makes it even harder for independent content producers to stay independent. Once again, when an aggregator achieves dominance it gets greedy and turns a once vibrant ecosystem into a winner-take-all killing field.

- Curious about HTTP/3? Errata Security with a great breakdown. There's some subtlety here that may not be readily apparent: The problem with TCP, especially on the server, is that TCP connections are handled by the operating system kernel, while the service itself runs in usermode. Moving things across the kernel/usermode boundary causes performance issues...In my own tests, you are limited to about 500,000 UDP packets/second using the typical recv() function, but with recvmmsg() and some other optimizations (multicore using RSS), you can get to 5,000,000 UDP packets/second on a low-end quad-core server...Another cool solution in QUIC is mobile support. As you move around with your notebook computer to different WiFi networks, or move around with your mobile phone, your IP address can change. The operating system and protocols don't gracefully close the old connections and open new ones. With QUIC, however, the identifier for a connection is not the traditional concept of a "socket" (the source/destination port/address protocol combination), but a 64-bit identifier assigned to the connection...With QUIC/HTTP/3, we no longer have an operating-system transport-layer API... It's actually surprising TCP has lasted this long, and this well, without an upgrade.
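To make the connection-ID point concrete, here is a toy demultiplexer in Python. It is not a QUIC implementation: `Demux`, `Connection`, and the migration rule are hypothetical; the 64-bit ID size follows the quote (the final QUIC spec, RFC 9000, allows variable-length connection IDs); and real QUIC validates a new path before migrating to it, which this sketch omits.

```python
import secrets
from dataclasses import dataclass
from typing import Dict, Tuple

Addr = Tuple[str, int]  # (IP address, UDP port)


@dataclass
class Connection:
    conn_id: int
    peer: Addr
    packets: int = 0


class Demux:
    """Route packets by connection ID instead of by the address/port 4-tuple."""

    def __init__(self) -> None:
        self.by_id: Dict[int, Connection] = {}

    def accept(self, peer: Addr) -> Connection:
        conn_id = secrets.randbits(64)  # random 64-bit identifier
        conn = Connection(conn_id, peer)
        self.by_id[conn_id] = conn
        return conn

    def on_packet(self, conn_id: int, src: Addr) -> Connection:
        conn = self.by_id[conn_id]  # lookup ignores the source address entirely
        if src != conn.peer:
            conn.peer = src  # client moved networks; the connection survives
        conn.packets += 1
        return conn
```

With a TCP-style lookup keyed on the source address, a packet arriving from a new WiFi network would match nothing; here it lands on the same `Connection` object.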

- What would a message-oriented programming language look like? Erlang is Kaminski's answer, but Serverless seems like a better answer these days. A service consuming, language agnostic, event distribution layer, running on an infinite highly available resource pool, more accurately reflects the modern definition of message oriented.

- Humans built machines long before mastering metal or electrons. For example, did you know those quaint villages dotting the English countryside were purpose built as panopticons so thanes could control workers and as machines to produce more agriculture for trade? The British History Podcast - 298 – Uptown Ceorl. Also, Surveillance Kills Freedom by Killing Experimentation.

- This is counter-intuitive. Scientists improve smartphone battery life by up to 60 percent using a code-offloading technique: the ‘power hungry’ parts of a mobile-cloud hybrid application are first identified, then offloaded to the cloud and executed there instead of on the device itself. Because those parts execute in the cloud, the device’s own components are not used, so power is saved and battery life is prolonged. “On one, our results showed that battery consumption could be reduced by over 60%, at an additional cost of just over 1 MB of network usage. On the second app, the app used 35% less power, at a cost of less than 4 KB additional data”.
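Why can sending work over the network save power? A commonly used simplified energy model compares the energy to compute locally against the energy to ship the data plus the energy spent idling while the cloud works. The Python sketch below uses that textbook model, not the researchers' actual method, and every parameter value in it is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class OffloadEstimate:
    local_j: float   # joules to run the task on the phone
    remote_j: float  # joules to offload it and wait for the answer

    @property
    def offload(self) -> bool:
        return self.remote_j < self.local_j


def estimate(cycles: float, cpu_hz: float, p_compute_w: float,
             data_bytes: float, bandwidth_bps: float, p_radio_w: float,
             remote_s: float, p_idle_w: float) -> OffloadEstimate:
    local_j = p_compute_w * (cycles / cpu_hz)          # CPU busy for cycles / clock rate
    tx_s = data_bytes * 8 / bandwidth_bps              # seconds the radio spends sending
    remote_j = p_radio_w * tx_s + p_idle_w * remote_s  # radio energy + idle wait for result
    return OffloadEstimate(local_j, remote_j)


# Hypothetical task: 2 billion cycles locally vs. shipping 1 MB over an 8 Mbit/s link.
e = estimate(cycles=2e9, cpu_hz=1e9, p_compute_w=2.0,
             data_bytes=1e6, bandwidth_bps=8e6, p_radio_w=1.0,
             remote_s=0.5, p_idle_w=0.2)
print(e.local_j, e.remote_j, e.offload)
```

The trade only pays off when the compute is heavy relative to the data moved, which is consistent with the modest 1 MB and 4 KB network costs reported in the quote.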

- There are already 1.19 cars or light trucks for every licensed driver in the US, and 1.9 vehicles per household. Which explains why Ford isn't so much a car company as a finance company and future data broker. Transportation is just a mechanism. Data could be what Ford sells next as it looks for new revenue: “We have 100 million people in vehicles today that are sitting in Ford blue-oval vehicles. That’s the case for monetizing opportunity versus an upstart who maybe has, I don’t know, what, they got 120, or 200,000 vehicles in place now. And so just compare the two stacks: Which one would you like to have the data from?”

- Buy or build? Cloud or on-prem? Auto Trader chose cloud. They found that the overall benefits of freeing up developer and operational resources from managing maintenance and hardware development, and (above all) elastic provisioning, vastly outweigh any additional costs. Lessons from the data lake, part 1: Architectural decisions. Cluster computing on-premises is hard and expensive. The cloud is easier. They upgraded their old data analytics platform, based on a warehouse model, into something more agile and responsive to business needs by building a data lake on S3 storage, with distributed computation from Apache Spark delivered on Amazon’s EMR, which is elastic, configurable and flexible. Infrastructure is configured as code using Terraform, giving the added benefit of version control. How did they impose some structure on their S3 lake? Data is confined to five ‘zones’ (in practice, five S3 buckets) named transient, raw, refined, user and trusted. All data enters the lake via the transient zone. It arrives by an automated ingestion process (principally via Kafka sinks, or Apache Sqoop for ingestion from relational databases) in a variety of data formats and at irregular intervals. An automated ETL process (written in Spark) then moves the data to the raw zone. Crucially, there is no human interaction with the transient zone.

- Gmail slow for you too? Here's why: Peeking under the hood of redesigned Gmail. In short: ontogeny recapitulates phylogeny.

- There's a language for programming sound called SOUL. It's explained in ADC'18 Day 1 - Track 1. SOUL is not a general purpose language. It's meant to augment existing languages, not replace them. It's the audio equivalent of the OpenGL Shading Language (GLSL), used just for the little real-time bit of an app.

- What’s a GPU? A heterogeneous computing chip, highly tuned for graphics. How does a GPU shader core work? Lots of juicy details. Inside every GPU there are (many) small CPUs. They can do instructions (math, branches, load/store, ...). They have registers to hold values/variables. Tons of non-CPU-like hardware for graphics.

- There's life beyond distributed transactions. Nice overview. An Uncoordinated Approach To Scaling (Almost) Infinitely. Reduce the coordination between systems so computers can run independently and in parallel.
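One well-known way to let replicas run independently is a CRDT (conflict-free replicated data type). The linked overview may or may not use CRDTs; this is our own illustrative pick. Below is a minimal grow-only counter (G-Counter) in Python: each node only increments its own slot, and merging takes the per-node maximum, so replicas converge in any merge order without coordinating on writes.

```python
from typing import Dict


class GCounter:
    """Grow-only counter CRDT keyed by node ID."""

    def __init__(self, node_id: str) -> None:
        self.node_id = node_id
        self.counts: Dict[str, int] = {}

    def increment(self, n: int = 1) -> None:
        if n < 0:
            raise ValueError("a G-Counter can only grow")
        # Each node writes only to its own slot, so writes never conflict.
        self.counts[self.node_id] = self.counts.get(self.node_id, 0) + n

    def merge(self, other: "GCounter") -> None:
        # Per-node max is commutative, associative and idempotent.
        for node, count in other.counts.items():
            self.counts[node] = max(self.counts.get(node, 0), count)

    def value(self) -> int:
        return sum(self.counts.values())


a, b = GCounter("a"), GCounter("b")
a.increment(3)
b.increment(2)
a.merge(b)
b.merge(a)
print(a.value(), b.value())
```

Both replicas print 5. Because merge is idempotent, replaying the same state twice (say, after a network retry) is harmless.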

- Querying 8.66 Billion Records - a Performance and Cost Comparison between Starburst Presto and Redshift: Performance between Redshift and Starburst Presto is comparable. Both Starburst Presto and Redshift (with the local SSD storage) outperform Redshift Spectrum significantly.

- Does load testing happen before new code is put into production anymore? For Azure, the downtime problems came in a cascade of threes: The first root cause manifested as a latency issue in the MFA frontend’s communication to its cache services. This issue began under high load once a certain traffic threshold was reached. Once the MFA services experienced this first issue, they became more likely to trigger the second root cause; the second root cause is a race condition in processing responses from the MFA backend server that led to recycles of the MFA frontend server processes, which can trigger additional latency and the third root cause (below) on the MFA backend; the third identified root cause was a previously undetected issue in the backend MFA server that was triggered by the second root cause. This issue causes accumulation of processes on the MFA backend, leading to resource exhaustion on the backend, at which point it was unable to process any further requests from the MFA frontend while otherwise appearing healthy in our monitoring.

- Time isn't just one damn thing after another. Time is Partial, or: why do distributed consistency models and weak memory models look so similar, anyway?: Even though time naturally feels like a total order, studying distributed systems or weak memory exposes you, head on, to how it isn’t. And that’s precisely because these are both cases where our standard over-approximation of time being total limits performance—which we obviously can’t have. Then, after we accept that time is partial, there are many small but important distinctions between the various ways it can be partial. Even these two fields, which look so much alike at first glance, have careful, subtle differences in what kinds of events they treat as impacting each other. We needed to dig into the technical definitions of various properties, after someone else has already done the work of translating one field into another’s language already. Time is partial. Maybe it’s time we got used to it.
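A standard way to make "time is partial" concrete in distributed systems is the vector clock: two events are ordered only if one clock is entry-wise no greater than the other, and otherwise they are concurrent. The linked post works with formal consistency and memory models rather than this exact structure, so treat the Python below as an illustration of the partial order, with `tick`, `receive` and `compare` as hypothetical helper names.

```python
from typing import Dict

Clock = Dict[str, int]  # node ID -> count of events seen from that node


def tick(clock: Clock, node: str) -> Clock:
    """Local event on `node`: bump its own entry."""
    updated = dict(clock)
    updated[node] = updated.get(node, 0) + 1
    return updated


def receive(local: Clock, remote: Clock, node: str) -> Clock:
    """Message receipt on `node`: entry-wise max of both clocks, then tick."""
    merged = {n: max(local.get(n, 0), remote.get(n, 0)) for n in set(local) | set(remote)}
    return tick(merged, node)


def compare(a: Clock, b: Clock) -> str:
    """Return 'before', 'after', 'equal' or 'concurrent'."""
    nodes = set(a) | set(b)
    a_le_b = all(a.get(n, 0) <= b.get(n, 0) for n in nodes)
    b_le_a = all(b.get(n, 0) <= a.get(n, 0) for n in nodes)
    if a_le_b and b_le_a:
        return "equal"
    if a_le_b:
        return "before"
    if b_le_a:
        return "after"
    return "concurrent"  # neither happened-before the other


p = tick({}, "p")        # event on node p
q = tick({}, "q")        # independent event on node q
r = receive(q, p, "q")   # q receives a message carrying p's clock
print(compare(p, q), compare(p, r))
```

This prints `concurrent before`: the two independent events have no order at all until a message links them, which is exactly the sense in which time stops being a total order.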

- Amazing technical chops: Reverse Engineering Pokémon GO Plus and Electroluminescent paint and multi-channel control circuit.