Stuff The Internet Says On Scalability For July 6th, 2018

Hey, it's HighScalability time:

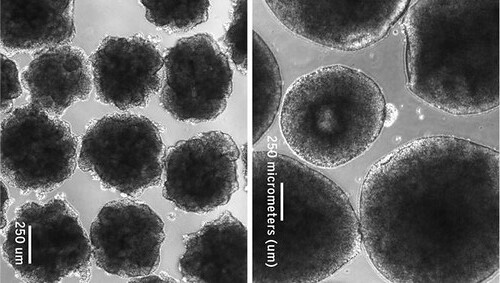

Could RAINB (Redundant Array of Independent Neanderthal ‘minibrains’) replace TPUs as the future AI core?

Do you like this sort of Stuff? Please lend me your support on Patreon. It would mean a great deal to me. And if you know anyone looking for a simple book that uses lots of pictures and lots of examples to explain the cloud, then please recommend my new book: Explain the Cloud Like I'm 10. They'll love you even more.

- $100m: Fortnite iOS revenue in 90 days; $2.5 Billion: SUSE Linux acquisition; 500,000: different orgs on Slack; 6.1%: chance of breaking change in each library; 10: years of the Apple app store; 2021: Japan goes exascale;

- Quotable Quotes:

- jedberg: "Yes, that's how Amazon creates lock-in." That is the cynical way to look at it. It also creates value because it lets you do more with what you already have.

- Paul Ingles: We didn’t change our organisation because we wanted to use Kubernetes, we used Kubernetes because we wanted to change our organisation.

- ThousandEyes: The Internet is made up of thousands of autonomous networks that are interdependent on one another to deliver traffic from point to point across the globe. The lesson to be learned from this outage is that the Internet is made up of many hidden dependencies, any of which can impact your ability to connect to sites and services—even if you don’t have a direct relationship with an affected ISP.

- tyingq: This is why AWS and Azure continue to gain market share in cloud, while Google remains relatively stagnant, despite (in many cases) superior technology. Their sales staff is arrogant and has no idea how to sell into F500 type companies. Source: 10+ meetings, with different clients, I attended where the Google sales pitch was basically "we are smarter than you, and you will succumb". The Borg approach. Someone needs to revamp the G sales and support approach if they want to grow in the cloud space.

- Google: We introduce adversarial attacks that instead reprogram the target model to perform a task chosen by the attacker—without the attacker needing to specify or compute the desired output for each test-time input. This attack is accomplished by optimizing for a single adversarial perturbation, of unrestricted magnitude, that can be added to all test-time inputs to a machine learning model in order to cause the model to perform a task chosen by the adversary when processing these inputs—even if the model was not trained to do this task. These perturbations can be thus considered a program for the new task.

- Where Wizards Stay Up Late: There is no small irony in the fact that the first program used over the network was one that made the distant computer masquerade as a terminal. All that work to get two computers talking to each other and they ended up in the very same master-slave situation the network was supposed to eliminate. Then again, technological advances often begin with attempts to do something familiar.

- voidmain: No, "exponentially faster" means exponentially faster with exponentially more hardware. Specifically, N processors can solve a problem of size N in O(log N) wall clock time, where the previous (serial) algorithm used O(N) wall clock time. Most programmers would say "more scalable" or "more parallel" rather than "faster", but the terminology makes sense and is standard in the context of the PRAM model of parallel computation.
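To make voidmain's point concrete, here is a minimal sketch of a tree reduction that counts "parallel rounds" instead of actually running on N processors. The function name `parallel_sum_rounds` is made up for illustration; each loop iteration stands in for one step in which every pair is added at the same time.

```python
def parallel_sum_rounds(values):
    """Simulate a PRAM-style tree reduction; return (total, rounds)."""
    data = list(values)
    rounds = 0
    while len(data) > 1:
        # One simulated parallel round: each adjacent pair is summed
        # by its own (imaginary) processor.
        paired = [data[i] + data[i + 1] for i in range(0, len(data) - 1, 2)]
        if len(data) % 2:
            paired.append(data[-1])  # odd element carries over unchanged
        data = paired
        rounds += 1
    return (data[0] if data else 0), rounds


total, rounds = parallel_sum_rounds(range(1024))
print(total, rounds)  # 523776 10
```

A serial sum over 1024 values takes 1023 additions one after another; the tree version needs the same number of additions in total, but only 10 rounds of wall clock time if enough processors are available.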

- Josh Marshall: In January 2017 Slate had 28.33 million referrals from Facebook to Slate. By last month that number had dropped to 3.63 million. In other words, a near total collapse. The first should be obvious: you can’t build businesses around a company as unreliable and poorly run as Facebook.

- Tim Berners-Lee: I was devastated. Actually, physically—my mind and body were in a different state.

- Daniel Lemire: To put it otherwise, I would say that while it is possible to design functions that stream through data in such a way that they are memory-bound over large inputs (e.g., copying data), these functions need to be able to eat through several bytes of data per cycle. Because our processors are vectorized and superscalar, it is certainly in the realm of possibilities, but harder than you might think.

- @copyconstruct: Golang fans: Go is a great language for writing distributed systems. Me: A number of outages in some very popular distributed systems (or worse, in their clients) can be root caused to goroutine leaks. The Go community could benefit from a leak detector.

- Pier Bover: This trend of using chats for questions is plaguing open source projects and I think it needs to end. There is no collective learning anymore.

- Brian Hare: The answer is that there’s selection for friendliness late in human evolution. Just like dogs, bonobos, the selection for friendliness led to expanded windows of development and led to changes in morphology, physiology, and psychology. It’s the answer for our species, too.

- Matthew Hutson: My guess is that we will go toward greater diversity and yet greater interoperability. I think that’s kind of the tendency. We want all of our systems to interoperate. If you look into big cities, you’re getting more and more ability to bridge languages, to bridge cultures. I think that will also be true for species.

- Anush Mohandass: the next generation of high-performance FPGAs is expected to contain hard NoCs built into the chip because they are getting to the point where the data flow is at such a high rate, especially when you have 100-gigabit SerDes and HBM2, where trying to pipe a terabit or two per channel through soft logic essentially uses all the soft logic and you’ve got nothing left to be processing with.

- bitwize: Sorry old timer, servers are cattle now. Actually more like Shmoos: so abundant as to be almost free. And if a server is 90% reliable, each additional server adds an additional 9 of reliability. So it always makes sense to plan for more than one, or even two, servers.
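The "each server adds a 9" arithmetic can be checked with a short sketch. It assumes servers fail independently, which is the optimistic case and rarely holds exactly in practice; the function names are made up for illustration.

```python
import math


def combined_availability(per_server, servers):
    """Probability that at least one of `servers` independent servers is up."""
    return 1 - (1 - per_server) ** servers


def nines(availability):
    """Express availability as a count of nines (0.999 is about 3 nines)."""
    return -math.log10(1 - availability)


for n in (1, 2, 3):
    a = combined_availability(0.9, n)
    print(n, f"{a:.4f}", f"{nines(a):.2f}")
```

With 90% reliable servers the unavailability shrinks by a factor of ten per added server, so the count of nines grows by one each time, as long as the independence assumption holds.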

- David Brooks: the semiconductor industry has become an unreliable partner for the broader tech industry. This means that domain specific accelerators become necessary and this will cause a breakdown of computing abstractions. The end of Moore’s Law will create a democratization of technology and lead to more innovation.

- @swardley: The original purpose was to create a competitive market of public providers to compete against AWS ... not to end up selling private clouds (a space with little future) on differentiated products. It's such a shame.

- Walton: If you look at something and think, ‘that seems interesting, that could be an area I could make a contribution in,’ you then invest yourself in it. You take some time to do it, you encounter challenges, over time you build that commitment.

- Krste Asanovic: With chips costing $300M, you need 30M units before manufacturing equals NRE. The only path forward is through specialization, which adds value. We need a new law where the NRE/transistor drops by a factor of 2 every 12 months. This means we have to reduce choice and stop over-optimization.

- Memory Guy: LAYERS OF LAYERS OF LAYERS: Bringing this around to the title of the post, we can see that all three of these technologies can be used together. If you start with a chip that has 64 billion bit cells, this can be built either on a planar NAND flash process or on a 3D NAND process. If it’s built as 3D NAND it will have a smaller die size and cost a lot less. The more layers of 3D NAND you use, the smaller the chip, and the cheaper the cost. Now you can decide to put more than one bit on each of those 64 billion bit cells. With MLC it will contain twice as many bits – 128 billion – and with TLC it contains three times as many, or 192 billion. With QLC the number reaches 256 billion bits, but the cost of the chip is the same no matter which you use: one bit, or two, or three, or four, so it’s more economical to use as many bits per cell as you can manage.

- You might be surprised who runs Kubernetes at the Edge on 6000 devices in 2000 stores at a cost of $900 per cluster. It's Chick-fil-A! Bare Metal K8s Clustering at Chick-fil-A Scale. Why? zbentley: It's important to understand their use case: they needed to basically ship something with the reliability equivalent of a Comcast modem (totally nontechnical users unboxed it, plugged it in, turned it on, and their restaurant worked) to extremely poorly-provisioned spaces (not server rooms) in very unreliable network environments. For them, k8s is an (important) implementation detail. It lets them get close to the substrate-level reliability of a much more expensive industrial control system in their sites (with clustering/reset/making sure everything is containerized and therefore less likely to totally break a host), while also letting them deploy/iterate/manage/experiment with much more confidence and flexibility than such systems provide.

- Biology handles feature flags differently than software. Radiolab X&Y. Primordial germ cells are in an embryo, waiting to become something. If the SRY gene turns on, it starts a cascade of gene activity that all says you're male (remember, by default we're all female). SRY stays on for about a day. What the flipped-on genes do is create chemicals that send out signals that start forming and shaping the testes. As soon as the testes develop, DMRT1 turns on and doesn't go away; it stays active forever. Turn DMRT1 off and the cell will change sex. So cells are always trying to go back to their default configuration. Only by the eternal vigilance of DMRT1 do males stay male. The pathways for becoming female are always there. That pathway is being repressed your entire life. Ovaries have a similar set of genes. Humans can't revert sex, but the bluehead wrasse can. Blueheads swim in small groups with one male and a harem of females. If the male dies, within minutes to hours a quorum decides who will become the new male. The chosen one transforms into the new male for the group. Now that's high availability! It's not just blueheads. It's many other kinds of fish, shrimp, lobsters, turtles, alligators, and so on. Software has a ways to go.

- Old school: every once in a while, manually tune a system by setting parameters in configuration files. New school: tune parameters in real-time using machine learning. Google pioneered this space. Facebook is new school. Spiral: Self-tuning services via real-time machine learning. The driver? The pace of change. When you are continually releasing software there's no time for a human to manually tune a system. Tuning must be automated. For example: Unlike hard-coded heuristics, Spiral-based heuristics can adapt to changing conditions. In the case of a cache admission policy, for example, if certain types of items are requested less frequently, the feedback will retrain the classifier to reduce the likelihood of admitting such items without any need for human intervention.
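The cache admission example can be sketched as a feedback loop. This is not Spiral's API, and it swaps the classifier for something much simpler: an exponential moving average of "was this item type requested again?". The class name, threshold and decay values are all assumptions chosen for illustration.

```python
from collections import defaultdict


class LearnedAdmission:
    """Toy self-tuning cache admission policy driven by feedback."""

    def __init__(self, threshold=0.5, decay=0.9):
        self.threshold = threshold
        self.decay = decay
        # Optimistic start: unknown item types are admitted until
        # feedback says otherwise.
        self.score = defaultdict(lambda: 1.0)

    def should_admit(self, item_type):
        return self.score[item_type] >= self.threshold

    def feedback(self, item_type, was_reused):
        # Move the running estimate toward 1.0 (reused) or 0.0 (not reused).
        observed = 1.0 if was_reused else 0.0
        old = self.score[item_type]
        self.score[item_type] = self.decay * old + (1 - self.decay) * observed


policy = LearnedAdmission()
for _ in range(10):
    policy.feedback("thumbnail", was_reused=False)
print(policy.should_admit("thumbnail"))  # False
```

Nobody edits a config file here: once thumbnails stop being reused the policy stops admitting them, and it starts admitting them again if the feedback changes.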

- Here's a short series of videos on the early days of Reddit's Architecture. For the first 6 months it ran on one rented machine. Everything, including the PostgreSQL database, ran on that one machine. It was a simple program written in Lisp. Reddit didn't even have comments. No fancy caching. Lots of joins. The database was moved to a second machine, which increased speed 4x. Now they have an App Server and Database Server. Switched from Lisp to Python for performance reasons. They added a second Python process and load balanced between them. To mask software failures, processes were automatically restarted when they died. Next, they added another database server. Replication lag became a problem, so they added a cache using spread to keep them in sync. A second app server was added that also used spread to sync the app server cache. Spread didn't scale well as more app servers were added. Eventually moved to memcache. They added comments and moved to a more flexible database structure to simplify schema changes. Also, The Evolution of Reddit.com's Architecture and Reddit: Lessons Learned From Mistakes Made Scaling To 1 Billion Pageviews A Month.

- Who said bitching on the internet is like pissing into the wind? The article Why you should not use Google Cloud caused a big kerfuffle. Google being Google, without notice, automatically shut down someone's account AND deleted all their data. A lot of heated discussion on Hacker News and on Reddit. General consensus was Google support sucks because they replace common sense with machine driven terminators. There's no justification to have this policy for a customer who has any kind of history of good behaviour. Apparently Google agrees. They changed their policy...we'll see.

- QCon New York 2018 talk summaries are becoming available.

- So here's the hard facts - I'm dipping into my pocket every week to the tune of... $7.40 for you guys to do 54M searches against a repository of half a billion passwords. Troy Hunt: Crunch time: Pwned Passwords is getting big so I have to look at costs. Over the last week, I've served over 54M requests to the service from a rapidly growing number of consumers. However, @Cloudflare has fielded 92% of those for me and @AzureFunctions has only had to process just over 4M of them. It's done that in an average time under 30ms and a 50th percentile of 22ms. There have been no failures. Over that week, the service consumed 84B function execution units measured in MB/ms which is about 82K GB/s. There's also the 4M executions count. Then there's the 67GB worth of blob storage (which I could reduce by cleaning up some of the migration data), and I'm reading it on each function call which is 4M times per week (you pay for blob reads). Plus, there's the bandwidth - I need to pay for everything egress from @Azure to @Cloudflare and with the average response size being 14KB, that mounts up when it's called 4M times a week.
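The numbers in Troy Hunt's post hang together, which a few lines of arithmetic can confirm. This sketch only uses figures quoted above and assumes binary units (1 GB = 1024 MB, 1 MB = 1024 KB); it reads the execution units as megabyte-milliseconds and gigabyte-seconds. No prices are included because the quote does not give per-unit rates.

```python
total_requests = 54_000_000
cloudflare_share = 0.92

# Requests that miss the Cloudflare cache and reach Azure Functions.
origin_requests = total_requests * (1 - cloudflare_share)

# 84B execution units in MB-ms, converted to GB-s.
mb_ms = 84_000_000_000
gb_seconds = mb_ms / 1024 / 1000

# Egress from Azure to Cloudflare: 4M calls at an average of 14KB each.
egress_gb = 4_000_000 * 14 / 1024 / 1024

print(round(origin_requests))  # 4320000
print(round(gb_seconds))       # 82031
print(round(egress_gb, 1))     # 53.4
```

So "just over 4M" origin requests and "about 82K" GB-seconds both follow directly from the headline figures, and the weekly egress works out to roughly 53 GB.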

- Native language speakers don't like Esperanto. React Native: A retrospective from the mobile-engineering team at Udacity. Why did we stop investing in React Native for new features? A decrease in the number of features being built on both platforms at the same time; An increase in Android-specific product requests; Frustration over long-term maintenance costs; The Android team’s reluctance to continue using React Native.

- Lambda is not the only game in town. Serverless Performance: Cloudflare Workers, Lambda and Lambda@Edge.

- At the 95th percentile, Workers is 441% faster than a Lambda function, and 192% faster than Lambda@Edge. Workers is built on V8 isolates, which are significantly faster to spin up (under 5ms) than a full NodeJS process and have a tenth the memory overhead. Workers has also been carefully architected to avoid moving memory and blocking when it could be avoided, complete with our own optimized implementations of the Javascript APIs. Finally, Workers runs on the same thousands of machines which serve Cloudflare traffic around the world.

- jhgg: For what it's worth at work (Discord) we serve our marketing page/app/etc... entirely from the edge using Cloudflare workers. We also have a non-trivial amount of logic that runs on the edge, such as locale detection to serve the correct pre-rendered sites, and more powerful developer only features, like "build overrides" that let us override the build artifacts served to the browser on a per-client basis. This is really useful to test new features before they land in master and are deployed out - we actually have tooling that lets you specify a branch you want to try out, and you can quickly have your client pull built assets from that branch - and even share a link that someone can click on to test out their client on a certain build. Just the other week, we shipped some non-obvious bug, and I was able to bisect the troubled commit using our build override stuff. The worker stuff is completely integrated into our CI pipeline, and on a usual day, we're pushing a bunch of worker updates to our different release channels. The backing-store for all assets and pre-rendered content sits in a multi-regional GCS bucket that the worker signs + proxies + caches requests to. We build the worker's JS using webpack, and our CI toolchain snapshots each worker deploy, and allows us to roll back the worker to any point in time with ease.

- Cut some fiber and we all bleed. Good insight into how the internet sausage is made. Comcast Fiber Outage Rips the Internet. Comcast suffered a three-hour network outage on its backbone. The complex interconnected nature of all the peering relationships that make up the internet meant a lot of other networks were taken down with them. Doesn't the internet route around failure? Yes, but that's not as great as you might think: "It’s generally a good idea to announce fewer aggregated IP prefixes to the Internet, in order to keep the size of the global routing table manageable. In the case of Comcast’s Xfinity service, the data centers were reachable via two /13 subnets, 68.80.0.0/13 and 96.112.0.0/13, which are originated by AS7922, and widely announced to all of Comcast’s peers. While this helps with controlling the route table size, the challenge with large subnet blocks is that it limits an ISP's ability to steer specific traffic flows. Any routing policy changes will affect all of the traffic to and from the ISP. Another approach to rerouting traffic is to break down address blocks into smaller subnets, which can be individually steered toward specific regions of the backbone. This is the approach Xfinity took when at approximately 11:00 AM PT they announced a more specific subnet (68.87.32.0/20) originating from AS36733, which is also owned by Comcast, and the service was restored."
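The fix described in the quote works because routers prefer the most specific prefix that covers a destination (longest-prefix match). Here is a minimal sketch using Python's standard `ipaddress` module with the prefixes and AS numbers from the quote; the `best_route` helper and the two destination addresses are made up for illustration.

```python
import ipaddress


def best_route(destination, routes):
    """Return the (network, origin) pair with the longest matching prefix."""
    addr = ipaddress.ip_address(destination)
    matches = [(net, origin) for net, origin in routes if addr in net]
    return max(matches, key=lambda m: m[0].prefixlen, default=None)


routes = [
    (ipaddress.ip_network("68.80.0.0/13"), "AS7922"),
    (ipaddress.ip_network("96.112.0.0/13"), "AS7922"),
    (ipaddress.ip_network("68.87.32.0/20"), "AS36733"),  # more specific
]

print(best_route("68.87.40.1", routes)[1])  # AS36733
print(best_route("68.81.0.1", routes)[1])   # AS7922
```

Only traffic for addresses inside the /20 moves to the new path; everything else in the /13 keeps following the original announcement, which is exactly the selective steering the large aggregate could not provide.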

- QCon NY: Matt Klein on Lyft Embracing Service Mesh Architecture: Lyft's current application architecture is based on every service communicating with other services through Envoy. The idea behind a service mesh architecture is that the network is fully abstracted from the services. It is also abstracted from the developers; a sidecar proxy is colocated with each service running on localhost. A service will talk to its sidecar, which in turn talks to the sidecar of another service (instead of directly calling the second service) in order to perform service discovery, fault tolerance and implement tracing. Envoy is an out of process architecture supporting the following capabilities: L3/L4 filter architecture like a TCP/IP proxy; HTTP/2 based L7 filter architecture; Service discovery and active/passive health checking; Load balancing; Authentication and authorization; Observability.

- How do you create an 8-sided dice with quantum bits? dice = Program(H(0), H(1), H(2)). Here we are using the H gate, or Hadamard gate. First, we pass in a single qubit: H(0). We repeat this for two more qubits: H(1), H(2). Now we have three qubits, each in superposition, and each with a random probability to return either 0 or 1 when they are measured. This gives us 8 total possible outcomes (2 * 2 * 2 = 2³), which will represent each side for our quantum dice. Quantum computing power scales exponentially with qubits (i.e. N qubits = 2^N bits). We only needed three qubits to generate 8 potential outcomes. Also, Quantum Computing Expert Explains One Concept in 5 Levels of Difficulty.
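For readers without a quantum SDK installed, the effect of the three Hadamard gates can be simulated classically. This is a plain-Python state vector sketch, not the quantum library used in the linked article; the function names are made up, and it only handles real amplitudes, which is all the H gate needs here.

```python
import math
import random


def hadamard(state, qubit):
    """Apply a Hadamard gate to one qubit of a real-valued state vector."""
    out = state[:]
    bit = 1 << qubit
    s = 1 / math.sqrt(2)
    for i in range(len(state)):
        if not i & bit:
            a, b = state[i], state[i | bit]
            out[i] = s * (a + b)
            out[i | bit] = s * (a - b)
    return out


def dice_probabilities(n_qubits=3):
    """Start in |00...0>, apply H to every qubit, return outcome probabilities."""
    state = [0.0] * (2 ** n_qubits)
    state[0] = 1.0
    for q in range(n_qubits):
        state = hadamard(state, q)
    return [amp * amp for amp in state]


def roll(rng=random):
    """Measure once: pick a side 1..8 according to the probabilities."""
    return rng.choices(range(1, 9), weights=dice_probabilities())[0]


print([round(p, 3) for p in dice_probabilities()])  # eight values of 0.125
```

Each of the 8 basis states ends up with amplitude 1/√8, so every side of the die comes up with probability 1/8.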

- Growing 100x may be a good problem to have, but when you hit your limits, making a change is not easy. From Zero to 40 Billion Links: Our Journey Migrating from Aerospike to DynamoDB: Our Aerospike cluster was reaching the maximum node count, and we had already gone through an iteration of upsizing our EC2 instances. Upsizing involved swapping out one node at a time, and letting the data rebalance, which had both a heavy operational cost and some risk...One area where we could make some trade-offs was in latency. Aerospike was providing consistent sub-millisecond latency, which was great, but faster than we actually needed...After we had migrated all of the data, and checked and double checked our processes and our data, we were ready for the point of no return: stopping the dual writes...We need to be more careful about read/write capacity in DynamoDB, as we don’t have nearly as much headroom as we did with Aerospike...One clear mistake was our initial attempt to run the entire migration through DAX...We ended up moving from a Global Secondary Index to a separate lookup table to enforce a unique constraint. Result: Costs about ⅓ as much as the legacy system; Has 3x replication in multiple Availability Zones; Can (and has) scaled I/O throughput up 40x+ when necessary in a matter of minutes; Takes advantage of DynamoDB’s auto-scaling feature; Gives us a very predictable runway for at least another 10x growth cycle; Runs as a managed service, freeing up many cycles for our operations team; Provides consistent ~2-3ms read latency, with sub-millisecond latency for new and popular items in the DAX cache.

- On the other hand, I'm still shocked that we were so reckless in the launch itself. Digg's v4 launch: an optimism born of necessity: the path to Digg v4 had been clearly established several years earlier, when Digg had been devastated by Google's Panda algorithm update. As that search update took a leisurely month to soak into effect, our fortunes reversed like we'd spat on the gods: we fell from our first--and only--profitable month, and kept falling until our monthly traffic was severed in half. One month, a company culminating a five year path to profitability, the next a company in freefall and about to fundraise from a position of weakness...The operations team rushed out a maintenance page and we collected ourselves around our handsome wooden table, expensive chairs and gnawing sense of dread. This was not going well. We didn't have a rollback plan...We had successfully provisioned the new site, but it was still staggering under load, with most pages failing to load. The primary bottleneck was our Cassandra cluster. Rich and I broke off to a conference room and expanded our use of memcache as a write-through-cache shielding Cassandra; a few hours later much of the site started to load for logged out users...Once again, Rich and I sequestered ourselves in a conference room, this time with the goal of rewriting our MyNews implementation from scratch. The current version wrote into Cassandra, and its load was crushing the clusters, breaking the social functionality, and degrading all other functionality around it. We decided to rewrite to store the data in Redis, but there was too much data to store in any server, so we would need to roll out a new implementation, a new sharding strategy, and the tooling to manage that tooling...Over the next two days, we implemented a sharded Redis cluster and migrated over to it successfully.

- Software Design for Persistent Memory Systems: Persistent RAM is approaching price parity with regular DRAM, will be more common soon; Current OS support is primitive and needs further improvement; If you enjoy low level programming, the design constraints of writing an always-consistent data structure may be interesting to explore; Otherwise, just use LMDB and don't worry about it.

- What's the big difference now that Lambda can be triggered by SQS instead of SNS? Jeremy Daly with a great answer: The difference between SQS and SNS comes down to retry behavior (more info here). SNS events are not stream-based and are invoked asynchronously. This means that after 3 attempts, the message will fail and either be discarded or sent to a Dead Letter Queue. If you are setting concurrency limits on Lambdas that are processing SNS messages, it is possible that high volumes could cause messages to fail 3 times and therefore be discarded. SQS, on the other hand, is a poll-based event source that is not stream-based. This means it will continue to poll the SQS queue with the resources (number of functions) available to it. Even if you set a concurrency limit to your functions, the messages will simply remain in the queue until there is a function available to process them. This is different from stream-based events, since those are BLOCKING, whereas SQS polling is not. According to the updated documentation: “If you don’t require ordered processing of events, the advantage of using Amazon SQS queues is that AWS Lambda will continue to process new messages, regardless of a failed invocation of a previous message. In other words, processing of new messages will not be blocked.” Another important factor is the retry intervals. If an SNS message fails due to concurrency issues, the message is tried again “with delays between retries”. This means that your throttling could significantly delay an SNS message getting processed by your function. SQS would just keep on chugging through the queue as soon as it had capacity to handle more messages.
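The practical difference shows up under a burst that exceeds your concurrency limit. The toy simulation below is an assumption-laden model, not AWS behavior in detail: time advances in ticks, `capacity_per_tick` stands in for the concurrency limit, retry delays are ignored, and both function names are made up.

```python
from collections import deque


def push_with_retries(messages, capacity_per_tick, max_attempts=3):
    """Push-style delivery: a message is dropped after `max_attempts` failures."""
    pending = [(m, 0) for m in messages]
    processed, dropped = [], []
    while pending:
        next_pending = []
        for slot, (msg, attempts) in enumerate(pending):
            if slot < capacity_per_tick:
                processed.append(msg)           # a function was available
            elif attempts + 1 >= max_attempts:
                dropped.append(msg)             # throttled too many times
            else:
                next_pending.append((msg, attempts + 1))
        pending = next_pending
    return processed, dropped


def poll_queue(messages, capacity_per_tick):
    """Poll-style delivery: messages wait in the queue until capacity frees up."""
    queue = deque(messages)
    processed, ticks = [], 0
    while queue:
        for _ in range(min(capacity_per_tick, len(queue))):
            processed.append(queue.popleft())
        ticks += 1
    return processed, ticks


done, lost = push_with_retries(range(10), capacity_per_tick=2)
print(len(done), len(lost))  # 6 4
done, ticks = poll_queue(range(10), capacity_per_tick=2)
print(len(done), ticks)      # 10 5
```

In the push model four of the ten messages are lost (or would go to a Dead Letter Queue); in the poll model the burst simply takes longer to drain.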

- Gives a whole new meaning to data lake. NIST Researchers Simulate Simple Logic for Nanofluidic Computing: Invigorating the idea of computers based on fluids instead of silicon, researchers at the National Institute of Standards and Technology (NIST) have shown how computational logic operations could be performed in a liquid medium by simulating the trapping of ions (charged atoms) in graphene (a sheet of carbon atoms) floating in saline solution. The scheme might also be used in applications such as water filtration, energy storage or sensor technology.

- It's counterintuitive, but Apple has a plan for maintaining privacy while still crowdsourcing map data. Apple is rebuilding Maps from the ground up: “We specifically don’t collect data, even from point A to point B,” notes Cue. “We collect data, when we do it, in an anonymous fashion, in subsections of the whole, so we couldn’t even say that there is a person that went from point A to point B.” The segments that he is referring to are sliced out of any given person’s navigation session. Neither the beginning nor the end of any trip is ever transmitted to Apple. Rotating identifiers, not personal information, are assigned to any data or requests sent to Apple and it augments the “ground truth” data provided by its own mapping vehicles with this “probe data” sent back from iPhones. Because only random segments of any person’s drive are ever sent and that data is completely anonymized, there is never a way to tell if any trip was ever a single individual. Any personalization or Siri requests are all handled on-board by the iOS device’s processor. So if you get a drive notification that tells you it’s time to leave for your commute, that’s learned, remembered and delivered locally, not from Apple’s servers.

- Are GPU accelerated databases any good? Depends on the type of data. GPU-Accelerated Databases: Without doubt, the GPU databases are lightning quick in some scenarios. Queries 1, 2 and 6 are benchmark queries which focus on the performance of arithmetic functions and very simple joins. This reveals a sweet-spot for the GPU databases where the massively parallel architecture with these solutions helps them produce significant results, and shows that these database type operations can be easily optimised for GPUs. MapD can be up to 30x faster than the competition, and typically 5x faster. Many of the GPU benchmarks available on the web use this type of query structure, and this is where we see the performance gain over more traditional technologies.

- Good explanation of the differences. Dynamic Programming vs Divide-and-Conquer: We’ve found out that dynamic programming is based on the divide-and-conquer principle and may be applied only if the problem has overlapping sub-problems and optimal substructure (like in the Levenshtein distance case). Dynamic programming then uses a memoization or tabulation technique to store solutions of overlapping sub-problems for later usage.
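The Levenshtein case mentioned above makes a compact illustration. This sketch uses the memoization flavor of dynamic programming via `functools.lru_cache`; the recursion is plain divide and conquer, and the cache is what stops the overlapping sub-problems from being recomputed. It is meant for short strings only, since deep recursion would hit Python's recursion limit.

```python
from functools import lru_cache


def levenshtein(a, b):
    """Edit distance between strings a and b using memoized recursion."""

    @lru_cache(maxsize=None)
    def dist(i, j):
        # dist(i, j) is the distance between a[:i] and b[:j].
        if i == 0:
            return j  # insert the remaining j characters
        if j == 0:
            return i  # delete the remaining i characters
        cost = 0 if a[i - 1] == b[j - 1] else 1
        return min(
            dist(i - 1, j) + 1,         # deletion
            dist(i, j - 1) + 1,         # insertion
            dist(i - 1, j - 1) + cost,  # substitution or match
        )

    return dist(len(a), len(b))


print(levenshtein("kitten", "sitting"))  # 3
```

Without the cache the same `(i, j)` pairs are solved over and over and the running time grows exponentially; with it, each pair is solved once.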

- Herb Sutter with a trip report on the Summer ISO C++ standards meeting. Looks like C++20 will add contracts. Nice. Better than macros, for sure.

- MySQL Setup at Hostinger Explained: One of our best friends is BGP and BGP protocol, which is aged enough to buy its own beer, hence we use it a lot. This implementation also uses BGP as the underlying protocol and helps to point to the real master node. To run BGP protocol we use the ExaBGP service and announce VIP address as anycast from both master nodes. You should be asking: but how are you sure MySQL queries go to the one and the same instance instead of hitting both? We use Zookeeper’s ephemeral nodes to acquire the lock as mutually exclusive. Zookeeper acts like a circuit breaker between BGP speakers and the MySQL clients. If the lock is acquired we announce the VIP from the master node and applications send the queries toward this path. If the lock is released, another node can take it over and announce the VIP, so the application will send the queries without any efforts.

- amark/gun: GUN is a realtime, distributed, offline-first, graph database engine. Doing 20M+ ops/sec in just ~9KB gzipped.

- Solid: Solid is an exciting new project led by Prof. Tim Berners-Lee, inventor of the World Wide Web, taking place at MIT. Solid (derived from "social linked data") is a proposed set of conventions and tools for building decentralized social applications based on Linked Data principles. Solid is modular and extensible and it relies as much as possible on existing W3C standards and protocols.

- Exploiting a Natural Network Effect for Scalable, Fine-grained Clock Synchronization: In this paper, we present HUYGENS, a software clock synchronization system that uses a synchronization network and leverages three key ideas. First, coded probes identify and reject impure probe data—data captured by probes which suffer queuing delays, random jitter, and NIC timestamp noise. Next, HUYGENS processes the purified data with Support Vector Machines, a widely-used and powerful classifier, to accurately estimate one-way propagation times and achieve clock synchronization to within 100 nanoseconds. Finally, HUYGENS exploits a natural network effect—the idea that a group of pair-wise synchronized clocks must be transitively synchronized—to detect and correct synchronization errors even further

- Scaling up molecular pattern recognition with DNA-based winner-take-all neural networks (description): We show that with this extended seesaw motif DNA-based neural networks can classify patterns into up to nine categories. Each of these patterns consists of 20 distinct DNA molecules chosen from the set of 100 that represents the 100 bits in 10 × 10 patterns, with the 20 DNA molecules selected tracing one of the handwritten digits ‘1’ to ‘9’. The network successfully classified test patterns with up to 30 of the 100 bits flipped relative to the digit patterns ‘remembered’ during training, suggesting that molecular circuits can robustly accomplish the sophisticated task of classifying highly complex and noisy information on the basis of similarity to a memory.
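The winner-take-all idea can be sketched in software as "score the input against each remembered pattern and keep only the best match". This is a loose analogy with toy 3 × 3 patterns, not the paper's DNA circuit or its 10 × 10 digit data; the `classify` function and the two patterns are made up for illustration.

```python
def classify(pattern, memories):
    """Return the label whose remembered pattern overlaps `pattern` the most."""
    scores = {label: len(pattern & bits) for label, bits in memories.items()}
    return max(scores, key=scores.get)


# Patterns are sets of "on" cell indices in a 3x3 grid (0..8, row by row).
memories = {
    "L": {0, 3, 6, 7, 8},  # left column plus bottom row
    "T": {0, 1, 2, 4, 7},  # top row plus middle column
}

noisy_t = {0, 1, 2, 4, 8}  # a "T" with one cell in the wrong place
print(classify(noisy_t, memories))  # T
```

Even with a flipped cell the input still overlaps the remembered "T" more than the "L", which is the kind of similarity-based robustness the paper reports at a much larger scale.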