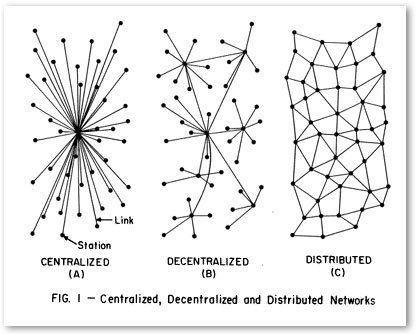

What do you believe now that you didn't five years ago? Centralized wins. Decentralized loses.

Decentralized systems will continue to lose to centralized systems until there's a driver requiring decentralization to deliver a clearly superior consumer experience. Unfortunately, that may not happen for quite some time.

I say unfortunately because ten years ago, even five years ago, I still believed decentralization would win. Why? For all the idealistic technical reasons I laid out long ago in Building Super Scalable Systems: Blade Runner Meets Autonomic Computing In The Ambient Cloud.

While the internet and the web are inherently decentralized, mainstream applications built on top do not have to be. Typically, applications today—Facebook, Salesforce, Google, Spotify, etc.—are all centralized.

That wasn't always the case. In its early days the internet was protocol driven, decentralized, and often distributed: FTP (1971), Telnet (<1973), FINGER (1971/1977), TCP/IP (1974), UUCP (late 1970s), NNTP (1986), DNS (1983), SMTP (1982), IRC (1988), HTTP (1990), Tor (mid-1990s), Napster (1999), XMPP (1999), and SETI@home (1999).

We do have new decentralized services: Bitcoin (2009), Minecraft (2009), Ethereum (2014), IPFS (2015), Mastodon (2016), Dat (2018), and PeerTube (2018). We're still waiting on Pied Piper to deliver the decentralized internet.

On an evolutionary timeline decentralized systems are neanderthals; centralized systems are the humans. Neanderthals came first. Humans may have interbred with neanderthals, humans may have even killed off the neanderthals, but there's no doubt humans outlasted the neanderthals.

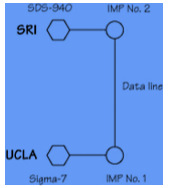

The reason why decentralization came first is clear from a picture of the very first ARPA (Advanced Research Projects Agency) network, which later evolved into the internet we know and sometimes love today:

Where Wizards Stay Up Late

Everyone had a vision of the potential for intercomputer communication, but no one had ever sat down to construct protocols that could actually be used. It wasn’t BBN’s job to worry about that problem. The only promise anyone from BBN had made about the planned-for subnetwork of IMPs was that it would move packets back and forth, and make sure they got to their destination. It was entirely up to the host computer to figure out how to communicate with another host computer or what to do with the messages once it received them. This was called the “host-to-host” protocol.

-- Where Wizards Stay Up Late

All that existed were hosts talking directly to each other over a primitive network. Centralization didn't exist. TCP/IP didn't exist. Nothing we take for granted today existed.

This fit the design goals. The early internet was all about sharing data:

Taylor had been the young director of the office within the Defense Department’s Advanced Research Projects Agency overseeing computer research, and he was the one who had started the ARPANET. The project had embodied the most peaceful intentions—to link computers at scientific laboratories across the country so that researchers might share computer resources.

...

Building a network as an end in itself wasn’t Taylor’s principal objective. He was trying to solve a problem he had seen grow worse with each round of funding. Researchers were duplicating, and isolating, costly computing resources. Not only were the scientists at each site engaging in more, and more diverse, computer research, but their demands for computer resources were growing faster than Taylor’s budget. Every new project required setting up a new and costly computing operation.

...

And none of the resources or results was easily shared. If the scientists doing graphics in Salt Lake City wanted to use the programs developed by the people at Lincoln Lab, they had to fly to Boston.

-- Where Wizards Stay Up Late

Back in those days of high adventure hosts were far more than mere pets, they were golden temples where crusaders came to worship speaking prayers of code.

Today, servers aren't even cattle; servers are insects connected over fast networks. Centralization is not only possible now, it's economical, it's practical, it's controllable, it's governable, it's economies of scalable, it's reliable, it's walled gardenable, it's monetizable, it's affordable, it's performance tunable, it's scalable, it's cacheable, it's securable, it's defensible, it's brandable, it's ownable, it's right to be forgettable, it's fast releasable, it's debuggable, it's auditable, it's iterable, it's easier to usable, it's easier to onboardable, it's copyright checkable, it's GDPRable, it's safe for China searchable, it's machine learnable, it's monitorable, it's spam filterable, it's value addable.

Depending on your point of view, decentralization is few of those things. And lacking many of those "features" is exactly why we like decentralization in the first place.

What's more, consumers simply do not care. Users use. Only a small percentage have the technical sophistication to understand why they might prefer decentralized applications for technical reasons. Saying "It's like X, but decentralized" does not resonate, especially when the services are not as good. We had decentralized Slack way before Slack...yet there's Slack. You know it's bad when GitHub managed to recentralize an inherently distributed system like git.

There are certainly niche reasons to use decentralized systems, permissionless anonymity being the primary use case. Can you trust the likes of Facebook or Google? History says absolutely not. But most people don't care.

What might constitute a turning point back to decentralization? I can think of several:

1. Complete deterioration of trust such that avoiding the centralization of power becomes a necessity.

2. Radically cheaper cost basis.

3. It becomes fashionable.

4. The decentralization community manages to create clearly superior applications as convenient and reliable as centralized providers.

5. Geographical isolation.

6. Neanderthals live alongside humans. Parallel, separate, not worrying about who's equal.

(1) Seems more possible than I'd like to admit. See China's Digital Dystopia.

(2) Still on the horizon. Cloud computing will follow the same downward cost curves as everything else.

(3) We'll have to get the Kardashians on that.

(4) Will be difficult. By its very nature, iteratively improving decentralized applications is like herding cats. It's much easier to add features to centralized applications. Sure, Napster was a great way to share music, but isn't Spotify simply better? Yes, I know, Spotify can shut down tomorrow and then where are we? I'm with you. But most aren't.

(5) The problem is the earth is too small. Global centralized applications are buildable today. Something I missed on completely. When we go to space that won't be the case. Applications in the space age will have to redecentralize...at least until the ansible is invented.

(6) We have genetic material from the neanderthals. We can rebuild them. We have the technology. This time maybe it's enough that neanderthals survive alongside humans, not going extinct, ready for the time when humans need a fresh infusion of genetic material...or when neanderthals become alpha.

So that's what I believe now that I didn't five years ago. How about you?

Related Articles

- On Hacker News. It wasn't my intention to spark a decentralization vs. centralization debate. I was actually interested in what people no longer believe. Anyone?

- Centralized Vs Distributed Systems Publishing And Owning Our Own Content.

- ssbc/patchwork

- Google Finds: Centralized Control, Distributed Data Architectures Work Better Than Fully Decentralized Architectures

- Definitions of centralized, decentralized, distributed, federated are here.