Stuff The Internet Says On Scalability For March 29th, 2019

Wake up! It's HighScalability time:

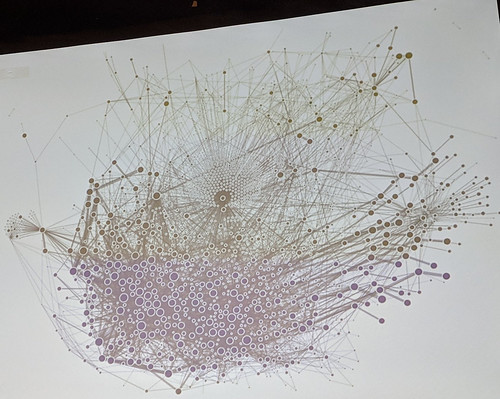

Uber's microservice Graph. Thousands of microservices. Crazy like a fox? Or just crazy? (@msuriar)

Do you like this sort of Stuff? I'd greatly appreciate your support on Patreon. I wrote Explain the Cloud Like I'm 10 for people who need to understand the cloud. And who doesn't these days? On Amazon it has 42 mostly 5 star reviews (100 on Goodreads). They'll learn a lot and love you for the hookup.

- 1.5 billion: monthly WhatsApp users; 80 billion: Docker downloads in 6 years; 1 billion: players on the App Store. 300,000 games; 13.5 billion: Voyager 1 miles from earth; 11 years: Teeny-Tiny Bluetooth Transmitter; 500 million: Airbnb guests; 12.5 million bits: information learned by average adult; 7.7%: Amazon's share of US retail sales; 100 million: Stack Overflow monthly visitors; $156B: Consumer spending in apps across iOS and Google Play by 2023;

- Quotable Quotes:

- John C. Lilly: When I say we may be our programs, nothing more, nothing less, I mean the substrate, the basic substratum under all else of our metaprograms is our programs. All we are as humans is what is built-in, what has been acquired, and what we make of both of these. So we are the result of the program substrate—the self-metaprogrammer.

- @andrewhurstdog: I caused a Gmail outage so big it made the national news, by forgetting to dereference a pointer...Right, this comes from blameless postmortems. Firing a person doesn't fix the problem, it removes a person that understands the problem.

- Sean Michael Kerner: At the 2018 Dockercon conference in San Francisco, NASA engineers discussed the Double Asteroid Redirection Test (DART) mission. DART is a spacecraft that will deploy a kinetic-impact technique to deflect an asteroid that could potentially end all life on Earth. At the core of DART is a software stack that is built using Docker.

- @bwest: Apple's new credit card should've been called Hypercard and anyone that disagrees is wrong

- Maxim Fedorov: Testing never replaces trouble shooting.

- William R. Kerr: My analysis of employee-level U.S. Census Bureau data and qualitative interviews show that U.S. tech workers over age 40 have good reasons to be concerned about how globalization affects their career longevity. In addition to competing with greater numbers of skilled foreign workers, older tech workers are now also more likely than younger workers to lose their jobs when technical work moves overseas.

- @funcOfJoe: 25yrs ago: COM (focus on your biz logic) 20yrs ago: Java (focus on your biz logic) 15yrs ago: .NET (focus on your biz logic) 10yrs ago: Dynamic langs (focus on your biz logic) 5yrs ago: Microservices (focus on your biz logic) 0yrs ago: Serverless (focus on your biz logic)

- @devfacet: Agree. We built managed database services top of Kubernetes using statefulsets. That being said I wouldn’t recommend to anyone. Operational cost is high, versioning/upgrades are painful, multi-region is hard, backups are tricky. And it’s a distraction against your real focus.

- realusername: Android is becoming more and more useless every year, we could have a powerful computer in our pocket to do everything we want and instead we have a dumb device with a clunky system which is just good for running chat apps and small games.

- Shoshana Zuboff: Surveillance capitalism operates through unprecedented asymmetries in knowledge and the power that accrues to knowledge. Surveillance capitalists know everything about us, whereas their operations are designed to be unknowable to us. They accumulate vast domains of new knowledge from us, but not for us. They predict our futures for the sake of others’ gain, not ours.

- @mikeal: I always feel like Kubernetes wants me to be a cloud provider, and I'm like "Hey, aren't I paying someone else to be the cloud provider?"

- Lana Chan: What will be interesting to see in the upcoming months is how NVMe-TCP and computational storage will play out. NVMe-TCP is the latest transport added to NVMe; PCIe, RDMA and FC. NVMe-TCP promises to allow data centers to use their existing Ethernet infrastructure. Overhauling existing infrastructure has been cited as potential impediments for other NVMe-oF options. This may be the key to achieving wide adoption in the enterprise space. Finally, with computational storage in its infancy, there is a promise to bring computation to the data. The conversation about real-time big data analytics will change dramatically if this gets off the ground.

- @caitie: Scaling any kind of cluster membership protocol beyond single digit thousands is currently a hard problem with Cluster Membership protocols we have today. Even in the single digit thousands 1k-5k you are going to have to have a team of folks that meticulously attend to your ETCD/ZK/cluster membership service. Finally discussions of wanting to go beyond this size, rarely talk about failure domains. Do you really want failure domains of 50k nodes, probably not.

- @MarcJBrooker: This is a good thread. My experience has been that autonomous (i.e. hosts joining themselves) approaches stop scaling in the low thousands. Above that, having a dedicate stateful host management plane is the only successful approach I’ve seen over the long term. I’ve also found it important to separate discovery from failure detection. Discovery is a relatively slow moving property that changes intentionally (and scales O(dN/dt)). Failure detection can change results really fast, especially in the face of partitions. 2/

- @ianmiell: My original thesis was that AWS is the new Windows to Kubernetes’ Linux. If that’s the case, the industry better hurry up with its distro management if it’s not going to go the way of OpenStack. Or to put it another way: where is the data centre’s Debian? Ubuntu?

- Alex Guarnaschelli: This is about choices, not ability.

- @asymco: Taiwan’s two largest bike makers report doubling ebike shipments. Giant shipped about 385,000 e-bikes in 2018; close to doubling the number recorded a year earlier. Merida more than doubled its e-bike shipments to 143,000.

- Doc Searls: —yet things are worse. Yet I remain optimistic. Because Cluetrain was early by (it turns out) at least two decades. And mainstream media are starting to get the clues. I know that because last week I heard from The New York Times, The Wall Street Journal, AP and an HBO show. I normally hear from none of those (or maybe one, a time or two per year).

- Something is in the water. It’s us, and the water is still the Internet.

- @hawkinjs: I love graphql but this is the problem - you probably don’t need it. And if you do, you probably already know it and don’t need Twitter hype to tell you. Keep it simple, until you can’t. Then think hard.

- maxxxxx: that's how I feel. Since working in a cube farm I am totally shot when I come home. The noise, lack of daylight and visual distractions suck all energy out of me. There is no escape from the stress while I am at work.

- Marek Majkowski: If you are piping data between TCP sockets, you should definitely take a look at SOCKMAP. While our benchmarks show it's not ready for prime time yet, with poor performance, high jitter and a couple of bugs, it's very promising. We are very excited about it. It's the first technology on Linux that truly allows the user-space process to offload TCP splicing to the kernel. It also has potential for much better performance than other approaches, ticking all the boxes of being async, kernel-only and totally avoiding needless copying of data.

- Joel Hruska: Samsung’s memory business is under heavy pressure from declines in the DRAM and NAND market. After a burst of data center building last year, a number of semiconductor firms have predicted a relatively weak first half. But Samsung is hit coming and going by these kinds of problems. It counts companies like Apple among its major display customers, which means any slowdown in iPhone demand will hit that business. Its memory business is similarly exposed. DRAM prices are in freefall and NAND prices have dropped significantly.

- John Hagel: in a rapidly changing world filled with uncertainty, I question whether routine tasks are even feasible, much less helpful. To the extent that routine tasks are necessary, I've made the case that they will be quickly taken by robots and AI – they shouldn’t be done by humans. My belief is that all workers should be focused on addressing unseen problems and opportunities to create more value.

- @ben11kehoe: Met someone who has a rule: every container image gets rebuilt every 24 hours even if source is unchanged, and every running container gets recycled in at most 24 hours. Brilliant to link those together. Because it keeps all your dependencies fresh. Think about all the things that Lambda updates continually under your zip file. You don’t normally get that with containers. infosec is the primary reason here!

- @Vaelec: I would say I don't get why anybody would have disagreed with you here but I've had the same arguments as well on other projects and was told I simply didn't understand things. Surprise, when we implemented multi-threading we saw an increase of 20x or more in some cases.

- @troutman: Waiting outside a data center building in Portland, ME for the last 2.5 hours. Customer’s servers are down. Access code for door doesn’t work. Customer escalated to DC owner engineers, tried multiple door codes. Dispatching a tech to drive an hour to let us in. Sigh.

- Pascal Luban: The Games-on-Demand business model is interesting mainly for publishers that already own a large catalog of titles, including older ones that nobody buys anymore. Each title generates little revenue but it is their number that make it worth. And this model features another handicap: Studios cannot complement their revenue with in-app purchases or ads; Apple Arcade forbids them.

- B-Barrington: I consider PayPal the best of the worst choices.

- @rossalexwilson: I thought I wanted infrastructure as code, but maybe I don’t actually care about my infrastructure. I just have some code, that serves some business value, and I just want it to run somewhere. Along with being scalable and resilient to underlying failure.

- @halvarflake: Strange question: Almost everybody we talk to has plans to move to k8s, but I have yet to personally meet someone from a company that runs a 1000+ node cluster for production. Are there any blogs/talks by people that do? The discrepancy is striking.

- @tmclaughbos: I just had one of those "I get serverless" moments: Don't code if you don't have to. I had a simple task, check the state of a resource and send an alert if the value changed. We've all done this before. I started building a Lambda function to check the resource state and then alert Pagerduty if the state changed. For the pagerduty part, I immediately went to the API documentation and then looked at the Python module. I was all set to write some code. When I looked at the integration setup, I noticed I could just use AWS cloudwatch. I read some more documentation and realized I didn't need to write code to alert pagerduty. All I needed to do was create a cloudwatch alarm or cloudwatch event rule, and send events to an SNS topic with an endpoint, given to me by pagerduty, subscribed to it. My code is so simple now, it just records a state change to cloudwatch. And the event generated by that just travels through resources I set up in cloudformation, all the way to pagerduty where it alerts me.

- Tired: webhooks. Wired: all-in.

- @tmclaughbos: I also wonder how many companies should be offering more than just a webhook URL and also an SNS topic subscription endpoint. What I’m realizing today is I’ve still been in the regular mindset of building serverless apps similarly to how I would have built microservices. I 1:1 map old patterns to new services.

- What do you give up? Any idea of abstraction, that layer of indirection that allows you to transparently change implementations. You're all in, and so must be every tool you want to use.

- ranman/awesome-sns: A curated list of useful SNS topics.

- Tired: REST. Wired: GraphQL

- @JoeEmison: if we use GraphQL instead of REST (or SOAP), we can represent our API calls in the exact same way we handle state/data/objects in code. Adding fields, subobjects, etc can be done in one place and everything else just works....except in the RDBM. Once we shift to a data store that similarly stores data in the way it exists in code and also in our GraphQL calls, we truly can define data structure in one place (the GraphQL schema) and everywhere in our application/systems will always reference it the same way. It massively reduces complexity, cost of changes, interdependencies, regressions, etc.

- What do you give up? Simplicity. GraphQL cuts a complex vertical slice through your system. New APIs, new descriptors, new tooling, new ways of thinking, lots of new things.

- Google is once again on the right side of a growth curve. This time it's bandwidth instead of web pages. Stadia is Google's new streaming video service. It will consume a hefty 25 Mbps of bandwidth to deliver 1080p at 60 FPS. Impressive, but average global bandwidth is still under 20 Mbps. Remember when Netflix jumped from delivering DVDs to streaming video? People said it wouldn't work. The network couldn't handle it. In short order bandwidth increased and Netflix rode the bandwidth curve to success. Sound familiar?

- Build platform advantage. Bundle. Unbundle. Bundle. Unbundle. Extend platform advantage. That's the new cycle of content in the age of platforms. All the New Services Apple Announced.

- Tired of the simplistic OOP vs functional way of looking at the world? You'll love Overwatch Gameplay Architecture and Netcode. It does a deep dive on a sophisticated modeling concept that takes years of experience to develop and truly appreciate. Overwatch is organized around an ECS architecture: Entity, Component, System. A world is a collection of systems and entities. An entity is an ID that corresponds to a collection of components. Components store game state and have no behaviours. Systems have behaviours and store no game state. The breakthrough is realizing identity is primary and is fundamentally relational, separate from both state and behaviour. State and behaviour serve identity, not the other way around. In a game this separation becomes clear whereas in typical software it's hard to disentangle. For example, imagine a cherry tree in your front yard. A tree means something subjectively different to you as the owner, to a bird, a gardener, a property assessor, or a termite. Each observer sees different behaviour in the state that describes the tree. The tree is a subject that is dealt with differently by various observers. Boom. That's all of software. You model behaviours through subjective experiences, yet still relate them all together by the concept of identity.
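To make the Entity/Component/System split concrete, here is a minimal sketch in Python. It is not Overwatch's code: the `World` class, the `Position` and `Velocity` components, and `movement_system` are all hypothetical names invented for illustration. Entities are bare integer IDs, components are plain data, and the system is a function that holds no state of its own.

```python
from dataclasses import dataclass
from typing import Dict, Type


# Components: plain state, no behaviour. (Hypothetical examples.)
@dataclass
class Position:
    x: float
    y: float


@dataclass
class Velocity:
    dx: float
    dy: float


class World:
    """Holds entities (integer IDs) and the components attached to them."""

    def __init__(self) -> None:
        self._next_id = 0
        # One store per component type: component type -> {entity id -> component}
        self._components: Dict[Type, Dict[int, object]] = {}

    def create_entity(self, *components: object) -> int:
        entity_id = self._next_id
        self._next_id += 1
        for component in components:
            self._components.setdefault(type(component), {})[entity_id] = component
        return entity_id

    def get(self, entity_id: int, component_type: Type):
        return self._components.get(component_type, {}).get(entity_id)

    def entities_with(self, *component_types: Type):
        """Yield the IDs of entities that have every requested component type."""
        stores = [self._components.get(t, {}) for t in component_types]
        if not stores:
            return
        for entity_id in list(stores[0]):
            if all(entity_id in store for store in stores[1:]):
                yield entity_id


# System: behaviour only. All the state it touches lives in components.
def movement_system(world: World, dt: float) -> None:
    for entity_id in world.entities_with(Position, Velocity):
        pos = world.get(entity_id, Position)
        vel = world.get(entity_id, Velocity)
        pos.x += vel.dx * dt
        pos.y += vel.dy * dt


world = World()
runner = world.create_entity(Position(0.0, 0.0), Velocity(2.0, 1.0))
statue = world.create_entity(Position(5.0, 5.0))  # no Velocity, so it never moves
movement_system(world, dt=0.5)
```

The only thing tying `runner`'s position to its velocity is the shared ID, which is the "identity is primary" point. A different system, say a renderer, could look at the same entity through a different set of components.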

- If a picture is worth a thousand words then Jerry Hargrove's awesome diagram of AWS App Mesh will save a lot of reading. Need more? Read Werner Vogels' classic new service style post: Redefining application communications with AWS App Mesh. There aren't a lot of comments on this service yet. It appeals to organizations far up the microservices adoption path, so it may take a while. shubha-aws: we built App Mesh to enable customers to use microservices in any compute service in AWS - be it ECS, Fargate, EKS or even directly on EC2. You configure capabilities using APIs and App Mesh configures Envoy proxies deployed with your pods. @nathankpeck: ECS Service Discovery + Cloud Map is basically the underlying foundation. It gives you the list of other container to connect to but it stops there, and you have to implement the rest of the client side load balancing, retries, SSL in transit, etc yourself. App Mesh is the layer that adds the extra intelligence on top, such as ability to detect a failed request and retry it, distribute requests according to your desired rules/patterns, and its the layer where we will be implementing other features. In general I'd say use the raw underlying ECS service discovery and cloud map if you want to build your own service mesh logic, but use App Mesh if you just want a service mesh that works out of the box and lets you focus on your own application

- When a limit of 1.8 million new connections per hour per region is a deal breaker you know "at scale" is no lie. Is AWS ready to provide serverless WebSockets at scale? AppSync: limitations on authentication create a security risk and the requirement for two requests prevents caching. Finally, using the preferred database DynamoDB presents further complexity and the solution is too expensive to use at scale. WebSockets: doesn’t meet requirements for scale or broadcasting to millions of clients. The WebSocket limitation is an interesting one: you often want to use pub/sub as a command and control bus, so you really want to send a message to everyone with one simple call. That should be doable. So they are building their own.

- How do you transition from mass marketing to mass personalization? To do that, you’ve really got to unlock the data within that ecosystem in a way that’s useful to a customer. McDonald's Acquires Machine-Learning Startup Dynamic Yield for $300 million. Weird fit or just the future arriving right on time?

- jhayward: It's mentioned in the article, but much of what drives McDonald's profitability is their supply chain and logistics, and r&d around food prep/meal production. There are huge dollar volumes there at very low margins and it is an area of great advantage (not only in cost, but in what it's possible to do product-wise) if done well. It's a perfect area for data science / ML type applications.

- pionar: For companies like these, that operate in 10's of thousands of franchisee-owned locations, the number of products and the combination of configurations is not merely a function of the things you see on the menu. It's all of those products, with their different combination of parts (beef patties, lettuce, etc.), and then franchisee and regional variations (In some countries, you can't tell a franchisee what they can or can't sell, etc.) Add on top of that customer modifications to the product in their order (extra pickles, no onions, etc.) Why does this matter? It drives lots of stuff - food costs, inventory, inventory & sales forecasting (how many pickles do I need this week?) new item research, profit margins, etc. So you take this, multiply it by 10,000, 15,000, or, in McD's case, 36,000 stores across 100+ countries (half both those numbers in my company's case) and you're talking about vast amounts of information across millions of transactions every day, and that is in fact Big Data.

- Domino's generates over 60% of sales via digital channels. berbec: It was a nightmare. We all had a deadline to book these installers for the Cisco VPNs and VM servers. IIRC IBM wrote the code, but Domino's retained the rights. It was a great time for the company. Total 180 on quality, investing heavily in the right tech. 3 years after we installed the server & thin clients all around, 33% of orders and 50% of revenue was online. Online sales drove order frequency, ticket price and customer satisfaction while lowering costs. It was such a genius move. Source: I was a Domino's GM and franchise for 17 years and saw this transition.

- The most interesting part of this story is how the change to serverless was made incrementally. They were able to replace parts of their system over time and learn along the way. The Journey to 90% Serverless at Comic Relief. When a lot of people were let go they needed a simpler architecture, so serverless made sense. They also had spiky load patterns. So do you really want to keep a fleet of varnish servers to handle the occasional load of 10's of thousands of requests a second? Of course not. Serverless again makes sense. They had outsourced part of the donation system and now were able to bring it back in-house: "Users would trigger deltas as they passed through the donation steps on the platform, these would go into an SQS queue, and then an SQS fan out on the backend would read the number of messages in the queue and trigger enough lambda’s to consume the message, but most importantly not overwhelm the backend services/database. The API would load balance the payment service providers (Stripe, Worldpay, Braintree & Paypal), allowing us to gain redundancy and reach the required 150 donations per second that would safely get us through the night of TV (it can handle much more than this)." A combination of Sentry and IOPipe provided a 360 view of errors. A regional AWS Web Application Firewall (WAF) was added to all endpoints, introducing some basic protections before API Gateway was even touched.
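The fan-out idea in that quote, trigger enough consumers to drain the queue but never more than the backend can take, comes down to a small sizing calculation. Here is a rough sketch of it in Python. It is not Comic Relief's implementation: `consumers_to_trigger`, `batch_size` and `max_concurrency` are hypothetical names, and the real system would read the queue depth from SQS and invoke Lambda rather than just return a number.

```python
import math


def consumers_to_trigger(queue_depth: int, batch_size: int, max_concurrency: int) -> int:
    """How many consumers to start for the current queue depth.

    queue_depth:     messages currently waiting in the queue
    batch_size:      messages one consumer handles per invocation (assumed)
    max_concurrency: ceiling chosen to protect the backend/database (assumed)
    """
    if batch_size <= 0 or max_concurrency < 0:
        raise ValueError("batch_size must be positive and max_concurrency non-negative")
    if queue_depth <= 0:
        return 0
    needed = math.ceil(queue_depth / batch_size)
    # Never exceed what the backend can absorb, however deep the queue gets.
    return min(needed, max_concurrency)


# A quiet moment, a modest backlog, and a TV-night spike.
quiet = consumers_to_trigger(queue_depth=0, batch_size=10, max_concurrency=50)
modest = consumers_to_trigger(queue_depth=25, batch_size=10, max_concurrency=50)
spike = consumers_to_trigger(queue_depth=10_000, batch_size=10, max_concurrency=50)
```

The cap is the important part. During a spike the queue absorbs the burst and the database sees a steady, bounded rate of work.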

- The great migrations are no longer geographical, they are from platform to platform. Warning: expect projectile emoji vomiting. Why we migrated 😼 inboxkitten (77 million serverless API requests) from 🔥 Firebase to ☁️ Cloudflare workers & 🐑 CommonsHost: What if it bills only per request, or only for the amount of CPU and ram used. Cloudflare worker, which is part of the growing trend of "edge" serverless computing. Also happens to simplify serverless billing down to a single metric. Turning what was previously convoluted, and hard to decipher bills from GCP...Into something much easily understood, and cheaper overall per request ...😸 [Bill went from $112 to $39] That's enough net savings for 7 more $9.99 games during summer sale! And has a bonus benefit of lower latency edge computing! Each request is limited to < 5ms of CPU time. Incompatibility with express.js (as it uses web workers pattern). Another thing to note is that Cloudflare workers are based on the web workers model, where it hooks onto the "fetch" event in cloudflare like an interceptor function. One script limitation per domain (unless you are on enterprise). While not a deal breaker for inboxkitten, this can be one for many commercial / production workload. Because Cloudflare serverless packages cannot be broken down into smaller packages for individual subdomains and/or URI routes. This greatly complicates things, making it impossible to have testing and production code separated on a single domain, among many more complicated setups.

- Ids should never be 32 bits. They wrap. And you won't handle the wrapping properly. Really. What We Learned from the Recent Mandrill Outage: In practice it’s more complicated, but the important detail is that the XID is a globally incrementing counter critical to the operation of the database. The feature that Postgres uses to combat this issue is a daemonized process called auto_vacuum which runs periodically and clears out old XIDs, protecting against wraparound. Tuning this is important, as there can be significant performance
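Why wrapping bites is easy to show with a toy 32-bit counter. The sketch below is a generic Python illustration, not Postgres code; `next_id`, `naive_is_newer` and `wraparound_is_newer` are made-up names. It compares a plain greater-than check against a modular comparison that treats an ID as newer when it is ahead by less than half the ID space.

```python
MASK32 = 0xFFFFFFFF       # largest value a 32-bit counter can hold
HALF_SPACE = 0x80000000   # half of the 32-bit ID space


def next_id(current: int) -> int:
    """Increment a 32-bit counter, wrapping to 0 after 0xFFFFFFFF."""
    return (current + 1) & MASK32


def naive_is_newer(a: int, b: int) -> bool:
    """Plain comparison: breaks as soon as the counter wraps."""
    return a > b


def wraparound_is_newer(a: int, b: int) -> bool:
    """Modular comparison: a is newer than b if it is ahead by less than
    half the ID space. Only valid while live IDs span less than half the
    space, which is why old IDs have to be retired before the counter laps them.
    """
    diff = (a - b) & MASK32
    return 0 < diff < HALF_SPACE


last = 0xFFFFFFFF
fresh = next_id(last)  # the counter wraps here

naive_result = naive_is_newer(fresh, last)
modular_result = wraparound_is_newer(fresh, last)
```

After the wrap, `naive_is_newer` says the brand new ID is older than its predecessor, while the modular check still orders them correctly. The modular check only holds while old IDs keep getting cleaned up, so cleanup that falls behind is exactly where things go wrong.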

- That's the promise of software, software can always get better...or worse. @bensprecher: What other car company on Earth says, 6 months after you bought a car, "oh, hey, our engineers figured out how to squeeze 5% more performance out of the *existing vehicle* you own, here's a free OTA software update for that"?

- The China Study and longevity. The important part here isn't about the conclusion, the important part is the power of open data. Studies should open their trial data so anyone can perform an analysis. For example, much of the advice about statins is from studies where the data is not available. Global policies are being made impacting millions. Are we just supposed to trust the people who won't release the data on which they base their often self-serving conclusions? No. All data used to create public policy should be public. An even bigger question: should the data used by tech to manipulate us be kept private?

- Great picture illustrating how a monoculture fails. Your blast radius has no containment. Fleet of Southwest 737 jets spotted in Victorville after FAA grounded planes following two deadly crashes.

- Who would design a system with a single point of failure? Indeed. A person would not, but somehow organizations do. Software Won’t Fix Boeing’s ‘Faulty’ Airframe. But this is BS: "Ultimately, Travis also bemoans what he calls “cultural laziness” within the software development community that is creeping into mission-critical systems like flight computers. By laziness, I mean that less and less thought is being given to getting a design correct, and simple – up-front” The design goal wasn't to create a safe plane. The design goal was to create the lowest cost option that would pass certification. Do you think programmers came up with that goal? Do you think programmers let it pass? Do you think programmers chose to make the second MCAS optional? Do you think programmers chose not to have a third MCAS for triple modular redundancy?
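For anyone who hasn't met triple modular redundancy: with three redundant inputs, one bad value can be outvoted; with two you can only notice that they disagree. Here is a generic Python sketch of a median voter. It is an illustration of the technique only, not how any flight computer works, and `vote` and `tolerance` are hypothetical names.

```python
from statistics import median
from typing import List, Sequence, Tuple


def vote(readings: Sequence[float], tolerance: float) -> Tuple[float, List[int]]:
    """Median-vote over exactly three redundant readings.

    Returns the agreed value and the indices of any readings that differ
    from it by more than `tolerance`, so a faulty source can be flagged.
    """
    if len(readings) != 3:
        raise ValueError("triple modular redundancy needs exactly three readings")
    agreed = median(readings)
    outliers = [i for i, r in enumerate(readings) if abs(r - agreed) > tolerance]
    return agreed, outliers


# Two sources agree, the third has failed high.
value, suspects = vote([4.9, 5.1, 22.0], tolerance=1.0)
```

Here the voter settles on 5.1 and flags the third source as suspect. A single source gives you neither the vote nor the flag.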

- @awscloud: Application Load Balancers now support advanced request routing based on standard or custom HTTP headers & methods, query parameters & source IP addresses. @rchrdbyd: AWS is soooo close to bringing cell-based architectures to the masses. Cell Architectures.

- The problem with a saying like "Security needs to become everyone’s job” is it is verbless. There's no action. There's no story of what it means or how to do it. It's like saying "Being good is everyone's job." What does it mean to be good? How does one become good? How do you know you are good? Until security becomes a verb we will not be secure...or good.

- 2019 SRE Report. 49% worked on an incident last week with 4% working on over 10 incidents a week, 92% work on fewer than five incidents a week. 79% report having stress. I tend to think 21% are lying, but maybe they have an advanced meditation practice. 69% don't think their company cares about their stress. 30% of work is maintenance tasks. Only 10% strongly agree automation has been used to reduce toil.

- ilhaan/kubeCDN (article): A self-hosted content delivery network based on Kubernetes. Easily setup Kubernetes clusters in multiple AWS regions and deploy resilient and reliable services to a global user base within minutes.

- comicrelief/lambda-wrapper: When writing Serverless endpoints, we have found ourselves replicating a lot of boiler plate code to do basic actions, such as reading request variables or writing to SQS. The aim of this package is to provide a wrapper for our lambda functions, to provide some level of dependency and configuration injection and to reduce time spent on project setup.

- infinimesh/infinimesh: an opinionated Platform to connect IoT devices securely. It exposes simple to consume RESTful & gRPC APIs with both high-level (e.g. device shadow) and low-level (sending messages) concepts. Infinimesh Platform is open source and fully cloud native. No vendor lock-in - run it yourself on Kubernetes or use our SaaS offering (TBA).