Stuff The Internet Says On Scalability For August 16th, 2019

Wake up! It's HighScalability time:

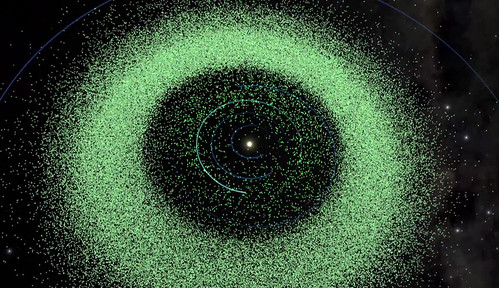

Asteroids in our solar system. Only a .001% chance a kilometer-size asteroid destroys humanity. (B612)

Do you like this sort of Stuff? I'd love your support on Patreon. I wrote Explain the Cloud Like I'm 10 for people who need to understand the cloud. And who doesn't these days? On Amazon it has 53 mostly 5 star reviews (124 on Goodreads). They'll learn a lot and likely add you to their will.

Number Stuff:

- $1 million: Apple finally using their wealth to improve security through bigger bug bounties.

- $4B: Alibaba cloud service yearly run rate, growth of 66%. Says they'll overtake Amazon in 4 years.

- 200 billion: Pinterest pins pinned across more than 4 billion boards by 300 million users.

- 21: technology startups took in mega-rounds of $100 million or more.

- 3%: of users pass their queries through resolvers that actively work to minimize the extent of leakage of superfluous information in DNS queries.

- < 50%: Google searches result in a click. SEO dies under walled garden shade.

- 4 million: DDoS attacks in the last 6 months, frequency grew by 39 percent in the first half of 2019. IoT devices are under attack within minutes. Rapid weaponization of vulnerable services continued.

- 200: distributed microservices in S3, up from 8 when it started 13 years ago.

- 50%: cumulative improvement to Instagram.com's feed page load time.

- $318 million: Fortnite monthly revenue, likely had more than six consecutive months with at least one million concurrent active users.

- $18,000: in fines because you just had to have the license plate NULL.

- $6.1 billion: Uber created Dutch weapon to avoid paying taxes.

- 14.5%: drop in 1H19 global semiconductor sales.

- 13%: fall in ad revenue for newspapers.

Quotable Stuff:

- Donald Hoffman: That is what evolution has done. It has endowed us with senses that hide the truth and display the simple icons we need to survive long enough to raise offspring. Space, as you perceive it when you look around, is just your desktop—a 3D desktop. Apples, snakes, and other physical objects are simply icons in your 3D desktop. These icons are useful, in part, because they hide the complex truth about objective reality.

- rule11: First lesson of security: there is (almost) always a back door.

- Paul Ormerod: A key discovery in the maths of how things spread across networks is that in any networked system, any shock, no matter how small, has the potential to create a cascade across the system as a whole. Watts coined the phrase “robust yet fragile” to describe this phenomenon. Most of the time, a network is robust when it is given a small shock. But a shock of the same size can, from time to time, percolate through the system. I collaborated with Colbaugh on this seeming paradox. We showed that it is in fact an inherent property of networked systems. Increasing the number of connections causes an improvement in the performance of the system, yet at the same time, it makes it more vulnerable to catastrophic failures on a system-wide scale.

- @jeremiahg: InfoSec is ~$127B industry, yet there’s no price tags on any vendor website. For some reason it’s easier to find out what a private plane costs than a ‘next-gen’ security product. Oh yah, and let’s not forget the lack of warranties.

- Hall’s Law: the maximum complexity of artifacts that can be manufactured at scales limited only by resource availability doubles every 10 years.

- YouTube~ "Our responsibility was never to the creators or to the users," one former moderator told the Post. "It was to the advertisers."

- reaperducer: It's for this reason that I've stopped embedding micro data in the HTML I write. Micro data only serves Google. Not my clients. Not my sites. Just Google. Every month or so I get an e-mail from a Google bot warning me that my site's micro data is incomplete. Tough. If Google wants to use my content, then Google can pay me. If Google wants to go back to being a search engine instead of a content thief and aggregator, then I'm on board.

- Maxime Puteaux: The small satellite launch market has grown to account for “69% of the satellites launched last year in number of satellites but only 4% of the total mass launched (i.e 372 tons). … The smallsat market experienced a 23% compound annual growth rate (CAGR) from 2009 to 2018” with even greater growth expected in the future, dominated by the launch needs of constellations.

- @Electric_Genie: San Diego has a huge, machine-intelligence-powered smart streetlight network that monitors traffic to time traffic signals. Now, they've added ability to detect pedestrians and cyclists

- Simon Wardley: How to create a map? Well, I start off with a systems diagram, I give it an anchor at the top. In this case, I put customer and then I describe position through a value chain. A customer wants online photo storage, which needs website, which needs platform, which needs computer, which needs power, and of course, the stuff at the bottom is less visible to the customer than the stuff at the top.

- Charity Majors: When we blew up the monolith into many services, we lost the ability to step through our code with a debugger: it now hops the network. Our tools are still coming to grips with this seismic shift.

- Livia Gershon: According to McLaren, from 1884 to 1895, the Matrimonial Herald and Fashionable Marriage Gazette promised to provide “HIGH CLASS MATCHES” to U.K. men and women looking for wives and husbands. Prospective spouses could place ads in the paper or work directly with staff of the associated World’s Great Marriage Association to privately make a connection.

- @KarlBode: There is absolutely ZERO technical justification for bandwidth caps and overage fees on cable networks. Zero. It's a glorified price hike on captive US customers who already pay more for bandwidth than most developed nations due to limited competition.

- Fowler: That's the other piece of app trackers, is that they do a whole bunch of bad things for our phone. Over the course of a week, I found 5,400 different trackers activated on my iPhone. Yours might be different. I may have more apps than you. But that's still quite a lot. If you multiplied that out by an entire month, it would have taken up 1.5 gigabytes of data just going to trackers from my phone. To put that in some context, the basic data plan from AT&T is only 3 gigabytes.

- Kate Green: Starshot is straightforward, at least in theory. First, build an enormous array of moderately powerful lasers. Yoke them together—what’s called “phase lock”—to create a single beam with up to 100 gigawatts of power. Direct the beam onto highly reflective light sails attached to spacecraft weighing less than a gram and already in orbit. Turn the beam on for a few minutes, and the photon pressure blasts the spacecraft to relativistic speeds.

- Markham Heid: Beeman says activities that are too demanding of our brain or attention — checking email, reading the news, watching TV, listening to podcasts, texting a friend, etc. — tend to stifle the kind of background thinking or mind-wandering that leads to creative inspiration.

- @ben11kehoe: Aurora never downsizes storage. Continue to pay at the highest roll you've ever made.

- John Allspaw: Resilience is not preventative design, it is not fault-tolerance, it is not redundancy. If you want to say fault-tolerance, just say fault-tolerance. If you want to say redundancy, just say redundancy. You don't have to say resilience just because, you can, and you absolutely are able to. I wish you wouldn't, but you absolutely can, and that'll be fine as well.

- Matthew Ball: But, again, lucrative “free-to-play” games have been around for more than a decade. In fact, it turns out the most effective way to generate billions of dollars is to not require a player spend a single one (all of the aforementioned billion-dollar titles are also free-to-play).

- TrailofBits: Smart contract vulnerabilities are more like vulnerabilities in other systems than the literature would suggest. A large portion (about 78%) of the most important flaws (those with severe consequences that are also easy to exploit) could probably be detected using automated static or dynamic analysis tools.

- @sfiscience: 1/2 "Once you induce [auto safety] regulatory protection, there is a decline in the number of highway deaths. And then in 3-4 years, it goes right up to where it was before the safety regulation is imposed." 2/2 There's a kind of "risk homeostasis" with regulation: as people feel safer, they take more risks (eg, seatbelts led to faster driving and more pedestrian deaths). One exception: @NASCAR deaths went UP with safety innovations. "People are not dumb, but they're not rational-expectations-efficient either."

- Michael F. Cohen: It may be hard to believe, but only a few years ago we debated when the first computer graphics would appear in a movie such that you could not tell if what you were looking at was real or CG. Of course, now this question seems silly, as almost everything we see in action movies is CG and you have no chance of knowing what is real or not.

- Dropbox: Much like our data storage goals, the actual cost savings of switching to SMR (Shingled Magnetic Recording) have met our expectations. We’re able to store roughly 10 to 20 percent more data on an SMR drive than on a PMR drive of the same capacity at little to no cost difference. But we also found that moving to the high-capacity SMR drives we’re using now has resulted in more than a 20 percent savings overall compared to the last generation storage design.

- Riot Games: The patch size was 68 MB for RADS and 83 MB for the new patcher. Despite the larger download size, the average player was able to update the game in less than 40 seconds, compared to over 8 minutes with the old patcher.

- @grossdm: For a decade, VCs have been subsidizing the below-market provision of services to urban-dwellers: transport, food delivery, office space. Now the baton is being passed to public shareholders, who will likely have less patience. 20 years ago, public investors very quickly walked away from the below-market provision of e-commerce and delivery services -- i.e. Webvan.

- Julia Grace: Looking back, I should have done a lot more reorgs [at Slack] and I should've broken up a lot more parts of the organization so that they could have more specialization, but instead, it was working so we kept it all together.

- Thomas Claburn: "No iCloud subscriber bargained for or agreed to have Apple turn his or her data – whether encrypted or not – to others for storage," the complaint says. "...The subscribers bargained for, agreed, and paid to have Apple – an entity they trusted – store their data. Instead, without their knowledge or consent, these iCloud subscribers had their data turned over by Apple to third-parties for these third-parties to store the data in a manner completely unknown to the subscribers."

- @glitchx86: Some merit to TM: it solves the problem of the correctness of lock-based concurrent programs. TM hides all the complexity of verifying deadlock-free software .. and it isn't an easy task

- @narayanarjun: We were experiencing 40ms latency spikes on queries at @MaterializeInc and @nikhilbenesch tracked it down to TCP NODELAY, and his PR just cracks me up. The canonical cite is a hacker news comment (https://news.ycombinator.com/item?id=10608356) signed by John Nagle himself, and I can't even.

- Donald Hoffman: Perhaps the universe itself is a massive social network of conscious agents that experience, decide, and act. If so, consciousness does not arise from matter; this is a big claim that we will explore in detail. Instead, matter and spacetime arise from consciousness—as a perceptual interface.

- MacCárthaigh: From the very beginning at AWS, we were building for internet scale. AWS came out of amazon.com and had to support amazon.com as an early customer, which is audacious and ambitious. They're a pretty tough customer, as you can imagine, one of the busiest websites on Earth. At internet scale, it's almost all uncoordinated. If you think about CDNs, they're just distributed caches, and everything's eventually consistent, and that's handling the vast majority of things.

- Jack Clark: Being able to measure all the ways in which AI systems fail is a superpower, because such measurements can highlight the ways existing systems break and point researchers towards problems that can be worked on.

- Google: We investigated the remote attack surface of the iPhone, and reviewed SMS, MMS, VVM, Email and iMessage. Several tools which can be used to further test these attack surfaces were released. We reported a total of 10 vulnerabilities, all of which have since been fixed. The majority of vulnerabilities occurred in iMessage due to its broad and difficult to enumerate attack surface. Most of this attack surface is not part of normal use, and does not have any benefit to users. Visual Voicemail also had a large and unintuitive attack surface that likely led to a single serious vulnerability being reported in it. Overall, the number and severity of the remote vulnerabilities we found was substantial. Reducing the remote attack surface of the iPhone would likely improve its security.

- sleepydog: I work in GCP support. I think you would be surprised. Of course Linux is more common, but we still support a lot of customers who use Windows Server, SQL Server, and .NET for production.

- Laurence Tratt: performance nondeterminism increasingly worries me, because even a cursory glance at computing history over the last 20 years suggests that both hardware (mostly for performance) and software (mostly for security) will gradually increase the levels of performance nondeterminism over time. In other words, using the minimum time of a benchmark is likely to become more inaccurate and misleading in the future...

- Geoff Tate: A year ago, if you talked to 10 automotive customers, they all had the same plan. Everyone was going straight to fully autonomous, 7nm, and they needed boatloads of inference throughput. They wanted to license IP that they would integrate into a full ADAS chip they would design themselves. They didn’t want to buy chips. That story has backpedaled big time. Now they’re probably going to buy off-the-shelf silicon, stitch it together to do what they want, and they’re going to take baby steps rather than go to Level 5 right away.

- Ann Steffora Mutschler: In discussions with one of the Tier 0.5 suppliers about whether sensor fusion is the way to go or if it makes better sense to do more of the computation at the sensor itself, one CTO remarked that certain types of sensor data are better handled centrally, while other types of sensor data are better handled at the edge of the car, namely the sensor, Fritz said.

- Dai Zovi: A software engineering team would write security features, then actively go to the security team to talk about it and for advice. We want to develop generative cultures, where risk is shared. It’s everyone’s concern. If you build security responsibility into every team, you can scale much more powerfully than if security is only the security staff’s responsibility.

- Nitasha Tiku: But that didn't mean things would go back to normal at Google. Over the past three years, the structures that once allowed executives and internal activists to hash out tensions had badly eroded. In their place was a new machinery that the company's activists on the left had built up, one that skillfully leveraged media attention and drew on traditional organizing tactics. Dissent was no longer a family affair. And on the right, meanwhile, the pipeline of leaks running through Google's walls was still going as strong as ever.

- Graham Allan: There’s another bottleneck that SoC designers are starting to struggle with, and it’s not just about bandwidth. It’s bandwidth per millimeter of die edge. So if you have a bandwidth budget that you need for your SoC, a very easy exercise is to look at all the major technologies you can find. If you have HBM2E, you can get on the order of 60+ gigabytes per second per millimeter of die edge. You can only get about a sixth of that for GDDR6. And I can only get about a tenth of that with LPDDR5.

- Brian Bailey: If the industry is willing to give von Neumann the boot, it should perhaps go the whole way and stop considering memory to be something shared between instructions and data and start thinking about it as an accelerator. Viewed that way, it no longer has to be compared against logic or memory, but should be judged on its own merits. If it accelerates the task and uses less power, then it is a purely economic decision if the area used is worth it, which is the same as every other accelerator.

- Barbara Tversky: This brings us to our First Law of Cognition: There are no benefits without costs. Searching through many possibilities to find the best can be time consuming and exhausting. Typically, we simply don’t have enough time or energy to search and consider all the possibilities. The evidence on action is sufficient to declare the Second Law of Cognition: Action molds perception. There are those who go farther and declare that perception is for action. Yes, perception serves action, but perception serves so much more.

- Jez Humble: testing is for known knowns, monitoring is for known unknowns, observability is for unknown unknowns

- @briankrebs: Being in infosec for so long takes its toll. I've come to the conclusion that if you give a data point to a company, they will eventually sell it, leak it, lose it or get hacked and relieved of it. There really don't seem to be any exceptions, and it gets depressing

- Brendon Foye: The hyperscale giant today released a new co-branding guide (pdf), instructing partners in the AWS Partner Network (APN) how to position their marketing material when going to market with AWS. Among the guidelines, AWS said it won’t approve the use of terms like “multi-cloud,” “cross cloud,” “any cloud,” “every cloud,” “or any other language that implies designing or supporting more than one cloud provider.”

- Newley Purnell: Startup Engineer.ai says it uses artificial-intelligence technology to largely automate the development of mobile apps, but several current and former employees say the company exaggerates its AI capabilities to attract customers and investors.

- George Dyson: If you look at the most interesting computation being done on the Internet, most of it now is analog computing, analog in the sense of computing with continuous functions rather than discrete strings of code. The meaning is not in the sequence of bits; the meaning is just relative. Von Neumann very clearly said that relative frequency was how the brain does its computing. It's pulse frequency coded, not digitally coded. There is no digital code.

- Brendon Dixon: Because they’ve chosen to not deeply learn their deep learning systems—continuing to believe in the “magic”—the limitations of the systems elude them. Failures “are seen as merely the result of too little training data rather than existential limitations of their correlative approach” (Leetaru). This widespread lack of understanding leads to misuse and abuse of what can be, in the right venue, a useful technology.

- Ewan Valentine: I could be completely wrong on this, but over the years, I've found that OO is great for mapping concepts, domain models together, and holding state. Therefore I tend to use classes to give a name to a concept and map data to it. For example, entities, repositories, and services, things which deal with data and state, I tend to create classes for. Whereas deliveries and use cases, I tend to treat functionally. The way this ends up looking, I have functions, which have instances of classes, injected through a higher-order function. The functional code then interacts with the various objects and classes passed into it, in a functional manner. I may fetch a list of items from a repository class, map through them, filter them, and pass the results into another class which will store them somewhere, or put them in a bucket.

- Timothy Morgan: But what we do know is that the [Cray] machine will weigh in at around 30 megawatts of power consumption, which means it will have more than 10X the sustained performance of the current Sierra system on DOE applications and around 4X the performance per watt. This is a lot better energy efficiency than many might have been expecting – a few years back there was talk of exascale systems requiring as much as 80 megawatts of juice, which would have been very rough to pay for at $1 per watt per year. With those power consumption numbers, it would have cost $500 million to build El Capitan but it would have cost around $400 million to power it for five years; at 30 megawatts, you are in the range of $150 million, which is a hell of a lot more feasible even if it is an absolutely huge electric bill by any measure.

- Timothy Prickett Morgan: All of us armchair architecture quarterbacks have been thinking the CPU of the future looks like a GPU card, with some sort of high bandwidth memory that’s really close.

- Garrett Heinlen (Netflix): I believe GraphQL also goes a step further beyond REST and it helps an entire organization of teams communicate in a much more efficient way. It really does change the paradigm of how we build systems and interact with other teams, and that's where the power truly lies. Instead of the back end dictating, "Here are the APIs you receive and here's the shape in the format you're going to get," they express what's possible to access. The clients have all the power between pulling in the data just what they need. The schema is the API contract between all teams and it's a living evolving source of truth for your organization. Gone are the days of people throwing code over the wall thing like, "Good luck, it's done." Instead, GraphQL promotes more of a uniform working experience amongst front end and back end, and I would go further to say even product and designer could have been involved in this process as well to understand the business domain that you're all working within.
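Ewan Valentine's split between classes for state and functions for use cases, quoted above, can be sketched roughly as follows. This is a minimal illustration in Python, not his code: `Item`, `ItemRepository`, `Bucket`, and `make_archive_expensive_items` are hypothetical names invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Item:
    name: str
    price: float


@dataclass
class ItemRepository:
    """Hypothetical class that owns data and state."""
    items: list = field(default_factory=list)

    def all(self):
        return list(self.items)


@dataclass
class Bucket:
    """Hypothetical destination that stores results."""
    stored: list = field(default_factory=list)

    def put(self, names):
        self.stored.extend(names)


def make_archive_expensive_items(repo, bucket):
    """Higher-order function: injects class instances, returns the use case."""
    def archive_expensive_items(min_price):
        # Fetch from the repository, filter, map, then hand off to the bucket.
        expensive = filter(lambda item: item.price >= min_price, repo.all())
        names = [item.name.upper() for item in expensive]
        bucket.put(names)
        return names
    return archive_expensive_items


repo = ItemRepository([Item("mug", 8.0), Item("lamp", 40.0), Item("desk", 250.0)])
bucket = Bucket()
archive = make_archive_expensive_items(repo, bucket)
archived = archive(30.0)
```

The use case itself stays a plain function, so a test can pass in stand-in objects without any framework.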
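The electricity arithmetic in the Cray quote above can be checked in a few lines. This sketch assumes a flat rate of about $1 per watt per year, which is the rate at which the quoted 80-megawatt and 30-megawatt totals work out; the function and constant names are made up for illustration.

```python
DOLLARS_PER_WATT_YEAR = 1.0  # assumed flat rate; makes the quoted totals work out


def power_cost(megawatts, years, rate=DOLLARS_PER_WATT_YEAR):
    """Electricity cost in dollars for a constant draw over a number of years."""
    watts = megawatts * 1_000_000
    return watts * rate * years


cost_80mw = power_cost(80, 5)
cost_30mw = power_cost(30, 5)
print(f"80 MW for 5 years: ${cost_80mw / 1e6:.0f} million")
print(f"30 MW for 5 years: ${cost_30mw / 1e6:.0f} million")
```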

Useful Stuff:

- Fun thread. @jessfraz: Tell me about the weirdest bug you had that caused a datacenter outage, can be anywhere in the stack including human error. @dormando: one day all the sun servers fired temp alarms and shut off. thought AC had died or there was a fire. Turns out cleaners had wedged the DC door open, causing a rapid humidity shift, tricking the sensors. @ewindisch: connection pool leak in a distributed message queue I wrote caused the cascade failure of a datacenter's network switches. This brought offline a large independent cloud provider around 2013. @davidbrunelle: Unexpected network latency caused TCP sockets to stay open indefinitely on a fleet of servers running an application. This eventually led to PAT exhaustion causing ~50% of outbound calls from the datacenter to fail causing a DC-wide brownout.

- What happens when you go from LAMP to serverless: case study of externals.io. 90% of the requests are below 100ms. $17.37/month. Generally low effort migration.

- By continuously monitoring increases in spend, we end up building scalable, secure and resilient Lambda based solutions while maintaining maximum cost-effectiveness. How We Reduced Lambda Functions Costs by Thousands of Dollars: In the last 7 months, we started using Lambda based functions heavily in production. It allowed us to scale quickly and brought agility to our development activities...We were serving +80M Lambda invocations per day across multiple AWS regions with an unpleasant surprise in the form of a significant bill...once we start running heavy workloads in production, the cost became significant and we spent thousands of dollars daily...to reduce AWS Lambda costs, we monitored Lambda memory usage and execution time based on logs stored in CloudWatch...we created dynamic visualizations on Grafana based on metrics available in the timeseries database and we were able to monitor in near real-time Lambda runtime usage...we gain insights into the right sizing of each Lambda function deployed in our AWS account and we avoided excessive over-allocation of memory. Hence, significantly reduced the Lambda’s cost...To gather more insights and uncover hidden costs, we had to identify the most expensive functions. That's where Lambda Tags come into play. We leveraged those metadata to breakdown the cost per Stack...By reducing the invocation frequency (control concurrency with SQS), we reduced the cost up to 99%...we’re evaluating alternative services like Spot Instances & Batch Jobs to run heavy non-critical workloads considering the hidden costs of Serverless...we were using SNS and we had issues with handling errors and Lambda timeout, so we changed our architecture to use instead SQS and we configured a dead letter queue to reduce the number of times the same message can be handled by the Lambda function (avoid recursion). Hence, reducing the number of invocations.

- Six Shades of Coupling: Content Coupling, Common Coupling, External Coupling, Control Coupling, Stamp Coupling and Data Coupling.

- When does redundancy actually help availability?: The complexity added by introducing redundancy mustn't cost more availability than it adds. The system must be able to run in degraded mode. The system must reliably detect which of the redundant components are healthy and which are unhealthy. The system must be able to return to fully redundant mode.

- AI Algorithms Need FDA-Style Drug Trials. The problem with this idea is molecules do not change whereas software continuously changes and learning software by definition changes reactively. No static process like a one and done drug trial will yield meaningful results. We need a different approach that considers the unique role software plays in systems. Certainly vendors can't be trusted. Any AI will tell you that. Perhaps create a set of test courses that platforms can be continuously tested and fuzzed against?

- AWS Lambda is not ready to replace conventional EC2. Why we didn’t brew our Chai on AWS Lambda: Chai Point, India’s largest organized Chai retailer, with over 150+ stores and over 1000+ boxC (IoT Enabled Chai and Coffee vending machines) are designed for corporate which serves approximately 250k cups of chai per day from all the channels...Most of the Chai Point’s stores and boxC machines typically run between 7 AM to 9 PM...[Lambda cold start is] one of the most critical and deciding factors for us to move back the Shark infrastructure to EC2...AWS Lambda has a limit of 50 MB as the maximum deployment package...it takes a delay of 1–2 minutes for logs to appear in the CloudWatch which makes it difficult for immediate debugging in a test environment...when it comes to deploying it in enterprise solutions where there are inter-services dependencies I think there is still time especially for languages like Java.

- Facebook Performance @Scale 2019 recap videos are now available.

- Sharing is caring until it becomes overbearing. Dropbox no longer shares code between platforms. Their policy now is to use the native language on each platform. It is simply easier and quicker to write code twice. And you don't have to train people on using a custom stack. The tools are native. So when people move on you have not lost critical expertise. The one codebase to rule them all dream dies hard. No doubt it will be back in short order, filtered through some other promising stack.

- Everyone these days wants your functions. Oracle Functions Now Generally Available. It's built on the Apache 2.0 licensed Fn Project. Didn't see much in the way of reviews or on costs.

- On LeanXcale database. Interview with Patrick Valduriez and Ricardo Jimenez-Peris: There is a class of new NewSQL databases in the market, called Hybrid Transaction and Analytics Processing (HTAP). NewSQL is a recent class of DBMS that seeks to combine the scalability of NoSQL systems with the strong consistency and usability of RDBMSs. LeanXcale’s architecture is based on three layers that scale out independently, 1) KiVi, the storage layer that is a relational key-value data store, 2) the distributed transactional manager that provides ultra-scalable transactions, and 3) the distributed query engine that enables to scale out both OLTP and OLAP workloads. The storage layer is a proprietary relational key-value data store, called KiVi, which we have developed. Unlike traditional key-value data stores, KiVi is not schemaless, but relational. Thus, KiVi tables have a relational schema, but can also have a part that is schemaless. The relational part enabled us to enrich KiVi with predicate filtering, aggregation, grouping, and sorting. As a result, we can push down all algebraic operators below a join to KiVi and execute them in parallel, thus saving the movement of a very large fraction of rows between the storage layer and the query engine layer.

- Apollo Day New York City 2019 Recap:

- During his keynote, DeBergalis announced one of Apollo’s most anticipated innovations, Federation, which utilizes the idea of a new layer in the data stack to directly meet developers’ needs for a more scalable, reliable, and structured solution to a centralized data graph.

- Federation paired with existing features of Apollo’s platform like schema change validation listing creates a flow where teams can independently push updates to product microservices. This triggers re-computation of the whole graph, which is validated and then pushed into the gateway. Once completed, all applications contain changes in the part of the graph that is available to them. These events happen independently, so there is a way to operate, which allows each team to be responsible solely for its piece.

- Another key concept that DeBergalis detailed was the idea that a “three-legged” stack is emerging in front-end development. The “legs” of this new “stool” that form the basis of this stack are React, Apollo, and TypeScript. React provides developers with a system for managing user components, Apollo provides developers a system for managing data, and TypeScript provides a foundation underneath that provides static typing end-to-end through the stack.

- Lesson: sticker shock. In Google Cloud everything costs more than you think it will, but it's still worth it. Etsy's Big Data Cloud Migration. Etsy generates a terabyte of data a day, they run hundreds of Hadoop workflows and thousands of jobs daily. Started out on prem. They migrated to the cloud over a year and a half ago, driven by needing both the machine and people resources required to keep up with machine learning and data processing tasks. Moving into the cloud decoupled systems so groups can operate independently. With their on prem system they didn’t worry about optimization, but on the cloud you must because the cloud will do whatever you tell it to do, at a price. In the cloud there’s a business case for making things more efficient. They rearchitected as they moved over. Managed services were a huge win. As they grew bigger they simply didn't have the resources and the expertise to run all the needed infrastructure. That's now Google's job. This allowed having more generalized teams. It would be impossible for their team of 4 to manage all the things they use in GCP. Specialization is not required to run things. If you need it you just turn it on. That includes services like BigTable, k8s, Cloud Pub/Sub, Cloud Dataflow, and AI. It allows Etsy to punch above their weight class. They have a high level of support, with Google employees embedded on their team. Etsy didn’t lift and shift, they remade the platform as they moved over. If they had to do it over again they might have tried for a middle road, changing things before the migration.

- Facebook Systems @Scale 2019 recap videos are now available.

- The human skills we need in an unpredictable world. Efficiency and robustness trade off against each other. The more efficient something is the less slack there is to handle the unexpected. When you target efficiency you may be making yourself more vulnerable to shocks.

- The lesson is, you can't wait around for Netflix or anyone else to promote your show. It's up to you to create the buzz. How a Norwegian Viking Comedy Producer Hacked Netflix's Algorithm: The key to landing on Netflix's radar, he knew, would be to hack its recommendation engine: get enough people interested in the show early...Three weeks before launch, he set up a campaign on Facebook, paying for targeted posts and Facebook promotions. The posts were fairly simple — most included one of six short (20- to 25-second) clips of the show and a link, either to the show's webpage or to media coverage. They used so-called A/B testing — showing two versions of a campaign to different audiences and selecting the most successful — to fine-tune. The U.S. campaign didn't cost much — $18,500, which Tangen and his production partners put up themselves — and it was extremely precise. In just 28 days, the Norsemen campaign reached 5.5 million Facebook users, generating 2 million video views and some 6,000 followers for the show. Netflix noticed. "Three weeks after we launched, Netflix called me: 'You need to come to L.A., your show is exploding,'" Tangen recalls. Tangen invested a further $15,000 to promote the show on Facebook worldwide, using what he had learned during the initial U.S. campaign.

- How did NASA Steer the Saturn V? Triply redundant in logic. Doubly redundant in memory. Two outputs are compared to make sure they're getting the same answer. If the same numbers aren't returned then a subroutine is called to determine at this point in the flight which number makes the most sense. During all Saturn flights they had less than 10 miscompares. Debugging was a nightmare. More components mean less reliability. Never had catastrophic failure. Biggest problem is vibration.

- Interesting idea: instead of interviews, use how well a candidate performs on training software to determine how well they know a set of skills. The Cloudcast - Cloud Computing. The role of the generalist is gone. Pick a problem people are struggling with, become an expert at solving that problem, and market yourself as the person who has the skill of solving the problem.

- The end state for every application is to write its own scheduler. Making Instagram.com faster: Part 1. Use preload tags to start fetching resources as soon as possible. You can even preload GraphQL requests to get a head start on those long queries. Preloads have a higher network priority. Add a preload tag for every script resource and place the tags in the order the scripts will be needed. Load in new batches before the user hits the end of their current feed. A prioritized task abstraction that handles queueing of asynchronous work (in this case, a prefetch for the next batch of feed posts). If the user scrolls close enough to the end of the current feed, we increase the priority of this prefetch task to ‘high’ by cancelling the pending idle callback and thus firing off the prefetch immediately. Once the JSON data for the next batch of posts arrives, we queue a sequential background prefetch of all the images in that preloaded batch of posts. We prefetch these sequentially in the order the posts are displayed in the feed rather than in parallel, so that we can prioritize the download and display of images in posts closest to the user’s viewport. Also Preemption in Nomad — a greedy algorithm that scales.

- Native lazy loading has arrived! Adding the loading attribute to the images decreased the load time on a fast network connection by ~50% — it went from ~1 second to < 0.5 seconds, as well as saving up to 40 requests to the server 🎊. All of those performance enhancements just from adding one attribute to a bunch of images!

- Maybe it should just be simpler to create APIs? Simple Two-way Messaging using the Amazon SQS Temporary Queue Client. Seems a lot of people use queues for front-end back-end communication because it's simpler to set up and easier to secure than creating an HTTP endpoint. So AWS came up with a virtual queue that lets you multiplex many virtual queues over a physical queue. No extra cost. It's all done on the client. A clever tag based heartbeat mechanism is used to garbage collect queues.

- Monolith to Microservices to Serverless — Our journey: A large part of our technology stack at that time comprised of a Spring based application and a MySQL database running on VMs in a data centre...The application was working for our thousands of customers, day in, day out, with little to no downtime. But it couldn’t be denied that new features were becoming difficult to build and the underlying infrastructure was beginning to struggle to scale as we continued to grow as a business...We needed a drastic rethink of our infrastructure and that came in the shape of Docker containers and Kubernetes...We took a long hard look at our codebase and with the ‘independent loosely coupled services’ mantra at the forefront of our minds we were quickly able to break off large parts of the monolith into smaller much more manageable services. New functionality was designed and built in the same way and we were quickly up to a 2 node K8s cluster with over 35 running pods....Fast forward to Today and we have now been using AWS for well over 2 years, we have migrated the core parts of our reporting suite into the cloud and where appropriate all new functionality is built using serverless AWS services. Our ‘serverless first’ ethos allows us to build highly performant and highly scaleable systems that are quick to provision and easy to manage.

- This is Crypto 101. Security Now 727 BlackHat & DefCon. Steve Gibson details how electronic hotel locks can protect themselves against replay attacks: All that's needed to prevent this is for the door, when challenged to unlock, to provide a nonce for the phone to sign and return. The door contains a software ratchet. This is a counter which feeds a secretly-keyed AES symmetric cipher. Each door lock is configured with its own secret key which is never exposed. The AES cipher which encrypts a counter, produces a public elliptic key which is used to verify signatures. So the door lock first checks the key that it is currently valid for and has been using. If that fails, it checks ahead to the next public key to see whether that one can verify the returned signature. If not, it ignores the request. But if the next key does successfully verify the request's signature it makes the next key permanent, ratcheting forward and forgetting the previous no-longer-valid key. This means that the door locks do not need to communicate with the hotel. Each door lock is able to operate autonomously with its own secret key which determines the sequence of its public keys. The hotel system knows each room’s secret key so it's able to issue the proper private signing key to each guest for the proper room. If that system is designed correctly, no one with a copy of the Mobile Key software, and the ability to eavesdrop on the conversation, is able to gain any advantage from doing so.

- Trip report: Summer ISO C++ standards meeting (Cologne). Reddit trip report. C++20 is now feature complete. Added: modules, coroutines, concepts including in the standard library via ranges, <=> spaceship including in the standard library, broad use of normal C++ for direct compile-time programming, ranges, calendars and time zones, text formatting, span, and lots more. Contracts were pulled from C++20 and handed to a new study group (SG21) for a later standard.

- Ingesting data at “Bharat” Scale: Initially, we considered Redis for our failover store, but with serving an average ingestion rate of 250K events per second, we would end up needing large Redis clusters just to support minutes worth of panic of our message bus. Finally, we decided to use a failover log producer that writes logs locally to disk. This periodically rotates & uploads to S3...We’ve seen outages, where our origin crashes & as it tries to recover, it is inundated with client retries & pending requests in the surge queue. That’s a recipe for cascading failure...We want to continue to serve the requests we can sustain, for anything over that, sorry, no entry. So we added a rate-limit to each of our API servers. We arrived at this configuration after a series of simulations & load-tests, to truly understand at what RPS our boxes will not sustain the load. We use nginx to control the number of requests per second using a leaky bucket algorithm. The target tracking scaling trigger is 3/4th of the rate-limit, to allow for the room to scale; but there are still occasions where large surges are too quick for target-tracking scaling to react.
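The leaky bucket behind the nginx rate limit in the ingestion item above can be sketched in a few lines. This is only an illustration: the `LeakyBucket` class, the `scaling_target` helper, and every number used with them are assumptions made up for this example, not the article's configuration and not how nginx is implemented. The clock is passed in explicitly so the behavior is easy to follow.

```python
class LeakyBucket:
    """Leaky bucket used as a meter (hypothetical sketch).

    The level drains at `rate_per_sec`. Each accepted request adds 1.
    A request that would push the level past `capacity` is rejected.
    """

    def __init__(self, rate_per_sec: float, capacity: float) -> None:
        self.rate = rate_per_sec
        self.capacity = capacity
        self.level = 0.0
        self.last = 0.0

    def allow(self, now: float) -> bool:
        # Drain the bucket for the time elapsed since the previous call.
        elapsed = now - self.last
        self.level = max(0.0, self.level - elapsed * self.rate)
        self.last = now
        if self.level + 1 <= self.capacity:
            self.level += 1
            return True
        return False  # over the limit: "sorry, no entry"


def scaling_target(rate_limit_rps: float) -> float:
    """Autoscaling trigger at 3/4 of the rate limit, as the item describes."""
    return 0.75 * rate_limit_rps
```

With a made-up limit of 10 requests per second and a burst capacity of 5, the first five requests at the same instant pass and the sixth is rejected. Setting the scaling trigger below the limit leaves headroom for new capacity to arrive before the limiter starts turning requests away.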
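The prioritized prefetch task from the Instagram item above is a small scheduler of its own. Here is a rough sketch of the idea, assuming a toy `IdleScheduler` that stands in for the browser's idle callbacks and a hypothetical `PrefetchTask` wrapper. Neither name comes from Instagram's code; the real thing runs in the browser in JavaScript.

```python
from typing import Callable, Dict


class IdleScheduler:
    """Toy stand-in for requestIdleCallback / cancelIdleCallback."""

    def __init__(self) -> None:
        self._pending: Dict[int, Callable[[], None]] = {}
        self._next_id = 0

    def request_idle(self, callback: Callable[[], None]) -> int:
        handle = self._next_id
        self._next_id += 1
        self._pending[handle] = callback
        return handle

    def cancel(self, handle: int) -> None:
        self._pending.pop(handle, None)

    def run_idle(self) -> None:
        """Run everything still pending, as a browser would when idle."""
        pending, self._pending = self._pending, {}
        for callback in pending.values():
            callback()


class PrefetchTask:
    """Work queued at idle priority that can be bumped to run right away."""

    def __init__(self, scheduler: IdleScheduler, work: Callable[[], None]) -> None:
        self._scheduler = scheduler
        self._work = work
        self.done = False
        self._handle = scheduler.request_idle(self._run)

    def _run(self) -> None:
        if not self.done:
            self.done = True
            self._work()

    def raise_priority(self) -> None:
        # Cancel the pending idle callback and fire the work immediately.
        if not self.done:
            self._scheduler.cancel(self._handle)
            self._run()
```

A scroll handler would call `raise_priority()` once the user gets close to the end of the feed. If the user never scrolls that far, the work still runs the next time the scheduler is idle, and it never runs twice.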
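The nonce plus ratchet scheme from the hotel lock item above is easier to follow in code. One loud caveat: the design Gibson describes derives elliptic-curve key pairs and verifies real signatures, while this sketch swaps in HMAC from the Python standard library as a symmetric stand-in. That means the door here holds the same key as the guest, which the real design avoids. `derive_key`, `sign`, and `DoorLock` are hypothetical names for illustration only.

```python
import hashlib
import hmac
import secrets
from typing import Optional


def derive_key(door_secret: bytes, counter: int) -> bytes:
    """Key for stay number `counter`, derived from the door's secret."""
    return hmac.new(door_secret, counter.to_bytes(8, "big"), hashlib.sha256).digest()


def sign(guest_key: bytes, nonce: bytes) -> bytes:
    """Guest's response to the door's challenge (HMAC stand-in for a signature)."""
    return hmac.new(guest_key, nonce, hashlib.sha256).digest()


class DoorLock:
    def __init__(self, door_secret: bytes) -> None:
        self._secret = door_secret
        self._counter = 0  # the ratchet position
        self._nonce: Optional[bytes] = None

    def challenge(self) -> bytes:
        # A fresh random nonce per attempt is what defeats replay.
        self._nonce = secrets.token_bytes(16)
        return self._nonce

    def unlock(self, signature: bytes) -> bool:
        nonce, self._nonce = self._nonce, None  # each nonce is single use
        if nonce is None:
            return False
        # Try the current key first, then look one step ahead.
        for step in (0, 1):
            key = derive_key(self._secret, self._counter + step)
            if hmac.compare_digest(sign(key, nonce), signature):
                self._counter += step  # ratchet forward if the next key matched
                return True
        return False  # neither key verifies: ignore the request
```

The hotel system, which knows the door's secret, would hand `derive_key(secret, n)` to guest number `n`. The first time the next guest unlocks the door, the counter advances and the previous guest's key stops working, without the door ever talking to the hotel.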

Soft Stuff:

- jedisct1/libsodium: Sodium is a new, easy-to-use software library for encryption, decryption, signatures, password hashing and more. It is a portable, cross-compilable, installable, packageable fork of NaCl, with a compatible API, and an extended API to improve usability even further. Its goal is to provide all of the core operations needed to build higher-level cryptographic tools.

- amejiarosario/dsa.js-data-structures-algorithms-javascript: In this repository, you can find the implementation of algorithms and data structures in JavaScript. This material can be used as a reference manual for developers, or you can refresh specific topics before an interview. Also, you can find ideas to solve problems more efficiently.

- linkedin/brooklin: Brooklin is a distributed system intended for streaming data between various heterogeneous source and destination systems with high reliability and throughput at scale. Designed for multitenancy, Brooklin can simultaneously power hundreds of data pipelines across different systems and can easily be extended to support new sources and destinations.

- gojekfarm/hospital: Hospital is an autonomous healing system for any System. Any failure or faults occurred in the system will be resolved automatically according to given run-book by the Hospital without manual intervention.

- BlazingDB/pyBlazing: BlazingSQL is a GPU accelerated SQL engine built on top of the RAPIDS ecosystem. RAPIDS is based on the Apache Arrow columnar memory format, and cuDF is a GPU DataFrame library for loading, joining, aggregating, filtering, and otherwise manipulating data.

- serverless/components: Forget infrastructure — Serverless Components enables you to deploy entire serverless use-cases, like a blog, a user registration system, a payment system or an entire application — without managing complex cloud infrastructure configurations.

Pub Stuff:

- Zooming in on Wide-area Latencies to a Global Cloud Provider: The network communications between the cloud and the client have become the weak link for global cloud services that aim to provide low latency services to their clients. In this paper, we first characterize WAN latency from the viewpoint of a large cloud provider Azure, whose network edges serve hundreds of billions of TCP connections a day across hundreds of locations worldwide.

- What is Applied Category Theory? Two themes that appear over and over (and over and over and over) in applied category theory are functorial semantics and compositionality.

- ML can never be fair. On Fairness and Calibration: In this paper, we investigate the tension between minimizing error disparity across different population groups while maintaining calibrated probability estimates. We show that calibration is compatible only with a single error constraint (i.e. equal false-negatives rates across groups), and show that any algorithm that satisfies this relaxation is no better than randomizing a percentage of predictions for an existing classifier. These unsettling findings, which extend and generalize existing results, are empirically confirmed on several datasets.