Stuff The Internet Says On Scalability For February 28th, 2020

Wake up! It's HighScalability time:

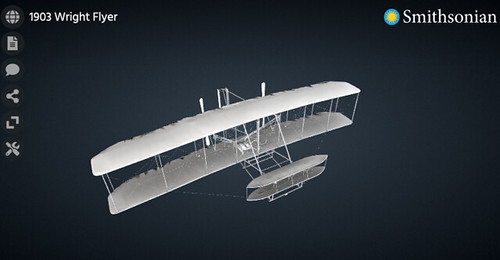

The Smithsonian has millions of pieces of delicious open access content. I ate up this 3D representation of the 1903 Wright Flyer.

Do you like this sort of Stuff? Without your support on Patreon this kind of Stuff won't happen. That's how important you are to the fate of the world.

Need to understand the cloud? Know someone who does? I wrote Explain the Cloud Like I'm 10 just for you...and them. On Amazon it has 98 mostly 5 star reviews. Here's a recent authentic unfaked review:

Number Stuff:

- 60%: of world's trees connected by a world wide web of fungi.

- $900 billion: spent by Alphabet, Amazon, Microsoft, Facebook, and Apple over the last decade in R&D and CapEx. $184 billion in 2019 alone.

- 17%: saved on AWS Lambda by making a 3 year commitment.

- 30%: of Amazon reviews are fake. 52% on Walmart.com.

- 2000: year old Judean date palm seeds have been sprouted. The plant has spoken and has asked everyone just to get along. "I really thought things would be better by now," the plant was quoted as saying.

- 40,000: number of friends-of-friends (FoFs) for the typical Facebook user. A power user with thousands of friends might have 800,000 FoFs.

- $3.50: what US users want from Facebook to share their contact information. Germans drive a harder bargain at $8 a month. Doesn't Facebook make considerably over $100 per user per year?

- $10 million: amount a Microsoft employee, who helped test Microsoft’s online retail sales platform, stole from MS. Just in case you've thought about this kind of scam, think about 20 years in prison.

- $10 billion: Google's spend on datacenters and offices this year.

- 8: bit cloud from BBC Micro Bot

- 82k: times Microsoft deploys each day.

- 11: asteroids that could potentially destroy the earth and that were missed by NASA's software. But does it really matter?

- 25%: of all tweets about climate crisis produced by bots.

- ~2x: increase in DDoS attacks, leveraging non-standard protocols for amplification attacks.

- 36+ trillion: Google font API calls.

- 26: average tenure in months for Chief Information Security Officers. Burnout isn't just for programmers.

- zilch: amount made by the guy behind TikTok’s biggest song that sparked 18+ million videos.

- 23,000: YouTube videos reinstated from over 109,000 appeals. 5M videos and 2M+ channels were removed between October and December.

- 1 billion: certs issued by Let's Encrypt. A free product that forces you to renew every 3 months.

- 50 billion: files restored by Backblaze. About 50/50 Mac/Windows. 1.06% of restores were > 500 GB.

Quotable Stuff:

- @EvanVolgas: Laziness is reliable & it scales

- Steven Levy: “We [Mark Zuckerberg] don’t view your experience with the product as a single-player game,” he says. Yes, in the short run, some users might benefit more than others from PYMK [People You May Know] friending. But, he contends, all users will benefit if everyone they know winds up on Facebook. We should think of PYMK as kind of a “community tax policy,” he says. Or a redistribution of wealth. “If you’re ramped up and having a good life, then you’re going to pay a little bit more in order to make sure that everyone else in the community can get ramped up. I actually think that that approach to building a community is part of why [we have] succeeded and is modeled in a lot of aspects of our society.”

- Jill Tarter: We need to stop projecting what we think on to what we don’t yet know...we need to distinguish between what we know and we think is, but have not yet verified.

- Gary Marcus: In my judgement, we are unlikely to resolve any of our greatest immediate concerns about AI if we don’t change course. The current paradigm—long on data, but short on knowledge, reasoning and cognitive models—simply isn’t getting us to AI we can trust (Marcus & Davis, 2019). Whether we want to build general purpose robots that live with us in our homes, or autonomous vehicles that drive us around in unpredictable places, or medical diagnosis systems that work as well for rare diseases as for common ones, we need systems that do more than dredge immense datasets for subtler and subtler correlations. In order to do better, and to achieve safety and reliability, we need systems with a rich causal understanding of the world, and that needs to start with an increased focus on how to represent, acquire, and reason with abstract, causal knowledge and detailed internal cognitive models.

- Cloudflare: We recently turned up our tenth generation of servers, “Gen X”, already deployed across major US cities, and in the process of being shipped worldwide. Compared with our prior server (Gen 9), it processes as much as 36% more requests while costing substantially less. Additionally, it enables a ~50% decrease in L3 cache miss rate and up to 50% decrease in NGINX p99 latency, powered by a CPU rated at 25% lower TDP (thermal design power) per core. Notably, for the first time, Intel is not inside. This time, AMD is inside. We were particularly impressed by the 2nd Gen AMD EPYC processors because they proved to be far more efficient for our customers’ workloads.

- @karpathy: New antibiotic ("halicin") discovered by training a neural net f: [molecule -> P(growth inhibition of E. coli)], then ranking ~100M other molecules https://cell.com/cell/fulltext/S0092-8674(20)30102-1 Sounds great! Slightly surprised: 1) training set of 2,335 sufficed, 2) wide structural distance of halicin

- @davecheney: Software is never finished, only abandoned.

- @alexbdebrie: DynamoDB Performance Test Results Key takeaways: - Batch writes aren't that much slower than single writes (~35%, but still sub-20ms round trip) - Transactions 3-4x slower than batch writes - Batch size is a big factor in batch writes & transactions

- @rts_rob: Prediction: By 2025 Amazon EventBridge will form the backbone of #serverless apps built on #AWS. 1) Business event goes on the bus 2) AWS Step Functions execute the business process - largely through service integrations 3) Outputs go back on the bus as events

- @asymco: PC shipments will be down between 10.1% to 20.6% in Q1 2020. In the best case scenario, the outbreak would mean 382 million units will ship in 2020, down 3.4% from 396 million last year. -Canalys

- @mweagle: “By infusing microservices architecture gratuitously, you’re just going to turn your bad code into a bad infrastructure.”

- @nakul: 10 years ago, conventional wisdom in B2B software was: SMB sucks, go midmarket / enterprise ASAP. The biggest B2B hits of the last decade (Shopify, Square, Zoom, Slack) turned out to be SMB/freemium first. Whats conventional wisdom in B2B today that’ll be wrong the next decade?

- @johnauthers: Yikes. Exclude the big 5 (Microsoft, Apple, Alphabet, Amazon and Facebook) and U.S. earnings were down 7.5% in the 4th quarter. H/t @AndrewLapthorne of SocGen

- Erin Griffith: Over the past decade, technology start-ups grew so quickly that they couldn’t hire people fast enough. Now the layoffs have started coming in droves. Last month, the robot pizza start-up Zume and the car-sharing company Getaround slashed more than 500 jobs. Then the DNA testing company 23andMe, the logistics start-up Flexport, the Firefox maker Mozilla and the question-and-answer website Quora did their own cuts. “It feels like a reckoning is here,” said Josh Wolfe, a venture capitalist at Lux Capital in New York.

- Paul Brebner: This leads to my #1 Kafka rule: Kafka is easy to scale, when you use the smallest number of consumers possible.

- @MissAmyTobey: I'm trying really hard to be curious about the weird stuff that comes out of microservices thinking. For example, I'm curious about when the sidecar concept went from 'attachable small thing outside the hot path' to 'full-on complex system in its own right, tightly coupled'.

- Edsger W. Dijkstra: The question of whether a computer can think is no more interesting than the question of whether a submarine can swim.

- Crito: When you're aiming for 10/100/1000X improvements and/or radical changes in the way things are done, some of those bets don't pan out. The key is that if even 1% of them succeed, they can pay for all of the others. Google Brain was an X project and the head of X, Astro, has said it alone more than paid for all other X projects combined (admittedly this was back in 2015). The work they're doing here is riskier than venture capital invested in early-stage companies - failures should be expected. It's fair to critique a company for making poor long-term decisions to satisfy short-term shareholder demands, but this isn't one of those cases. It's perfectly reasonable for a firm to kill something when the question of "Can it be profitable?" goes from "Likely, in a few years" to, "Maybe, perhaps never, and even if it is, it won't be wildly so" - marginal benefits aren't moonshots.

- @pjreddie: I stopped doing CV research because I saw the impact my work was having. I loved the work but the military applications and privacy concerns eventually became impossible to ignore.

- @jeremy_daly: This is one of my favorite new #serverless patterns made possible by Lambda Destinations and EventBridge! No need to use the @awscloud SDK for transport between services (except for replay), optional delayed/throttled conditional replay, and *zero* error handling code needed. This is for event processing requests via EventBridge (e.g. an order is placed and some other process(es) needs to kick off). EB rules can be used to route errors based on type and # of retries. Replay requires a `putEvents` SDK call, but failures bubble up and are handled by SQS. Been using this for a few weeks now and it has been extremely effective. I'm working on a post that has more detail and some example code that I'll share soon.

- @StarsAtNightTX: 1) No job is worth your sanity. 2) Ask for more pay. 3) Learn to say no. 4) Taking on more responsibility without getting adequately compensated will not help you advance; it will teach them how to treat you.

- Forrest Brazeal: What do the dead tree orchards, the asteroid farms, and the donut holes have in common? They’re all attempts to graft cloud onto an existing technical and organizational structure, without undertaking the messy, difficult work of rehabilitating the structure itself.

- Lauren Tan: I realized that what I miss most is being a maker. It’s hard to describe what being a maker is, but I can describe what it feels like. It’s that feeling you get when you turn a blank canvas or empty document into something. Whether it’s a painting, a sculpture, code, it all still feels like magic. If you’re as fortunate as I am, you might know this feeling too. It’s powerful stuff. When I was working as an engineer, going into work everyday made me giddy with excitement. It didn’t feel like work. I’m getting paid to do my hobby! As a manager, I still made things in my spare time, but it wasn’t quite the same.

- Frank Lloyd Wright: Our nature is so warped in so many directions, we are so conditioned by education, we have no longer any straight true reactions we can trust—and we have to be pretty wise

- @danielbryantuk: "There are no best practices in software architecture. Only tradeoffs"

- Cedric Chin: My closest cousin is a software engineer. Recently, his frontend engineering team hired a former musician: someone who had switched from piano to Javascript programming with the help of a bootcamp. He noticed immediately that her pursuit of skills (and the questions she asked) were sharper and more focused than the other engineers he had hired. He thought her prior experience with climbing the skill tree of music had something to do with it.

- @benbjohnson: Every time I hear someone say they need five 9's of uptime, I think of GitHub. Everybody relies on them, they have maybe 99.9% uptime, and they got bought for $7.5B by Microsoft. Unless you're making pacemakers, ease up on your crazy uptime requirements.

- JCharante: I wish the sibling comment wasn't dead so I could point out that Amazon's Choice for microwaves is one with Alexa integration. Amazon has already taken over the Smart Microwave market.

- Hugh Howey: I’m here because they ain’t made a computer yet that won’t do something stupid one time out of a hundred trillion. Seems like good odds, but when computers are doing trillions of things a day, that means a whole lot of stupid.

- XANi_: Splitting code on logical boundaries almost always makes sense even in monolith, and can always just deploy your monolith everywhere. Then dedicate some instances of it to doing a specific task, at that point you can optimize what kind of instance you are paying for for a given job. Then start refactoring and carving out that part so it is basically "an app within an app". Maybe even to a point that it is a library. You can start defining an API and instantly testing how well it works. At this point you can just have a team that takes care of that part and doesn't much care about the rest of the application. Then move all the data this part owns to its own data store.

- @PeterVosshall: 2) So many things to be proud of: services-oriented architecture, real-time data propagation, saving Christmas in 2004 when Oracle RAC couldn't scale, blazing a trail for NoSQL with Dynamo and receiving the ACM Hall of Fame award for our paper at SOSP 2017, 3) becoming Amazon's first Distinguished Engineer in 2008, leading Amazon's Principal Engineer Community (the best in the tech world), building a cloud-accelerated browser for our tablets, leading the charge on scaling & blast radius reduction and cell-based architecture

- Malcolm Kendrick: I have virtually given up explaining to journalists that experts will always hold exactly the same view as each other. If they did not, they would – by definition – no longer be experts. A dissenting expert is a contradiction in terms. You are either with us, or against us. One might as well ask a Manchester City supporter if he knows of any other Manchester City supporters who support Manchester United.

- Geoffrey West: The battle to combat entropy by continually having to supply more energy for growth, innovation, maintenance, and repair, which becomes increasingly more challenging as the system ages, underlies any serious discussion of aging, mortality, resilience, and sustainability, whether for organisms, companies, or societies.

- Ashok Leyland~ Migrating to AWS, this automotive leader earned a host of business benefits, including cost reductions, a 300% improvement in data processing speed, a 40% uplift in KPI computation, and the ability for its data scientists to analyse telematics data much faster by using tools from AWS, thereby providing its end customers with deep insights. Compute was 30% faster.

- Tata Global Beverages~ Improvements from moving to the cloud. Final order settlement reduced from 4 hours to 20 minutes. Stock report run time reduced from 30 minutes to 5 minutes. 7% saving over in-house datacenter. 5%-8% savings on managed services contract. 2-3% savings on shutdown governance control and right-sizing. It's as much about sales and marketing and supply chain. Let AWS handle technology so IT can focus on business needs.

- Andrew Dzurak: When the electrons in either a real atom or our artificial atoms form a complete shell, they align their poles in opposite directions so that the total spin of the system is zero, making them useless as a qubit. But when we add one more electron to start a new shell, this extra electron has a spin that we can now use as a qubit again

- @imrankhan: Me: "What do you mean? Aren't they all ubers and lyfts?" Him: "Yeah, but the drivers lease the cars off the same guy. I met him." (2/9) "He's from Virginia, and leases out thousands of cars to drivers who for financial or background reasons can't otherwise buy or rent a car that meets, say, Uber's rules." Me: "Oh, ok. Well, that makes sense."

- Marc Brooker: "A few years ago at AWS we started building Physalia, a specialized database we built to improve the availability and scalability of EBS. Physalia uses locality, based on knowledge of data center topology, to avoid making the hard

- 2J0: When Blackberry bought QNX I thought they would leverage their carrier network footprint to build a security managed turnkey/white box IoT business in controllers from white goods to plant. Everything I have since read about the major mobile hardware/phone companies taught me to stop over estimating the ability for organisations who are monomaniacally focussed on exploiting initial (fluke by statistical measures) in SF insights implemented by early engineering focus by arguably genius minds, have no ability whatsoever to understand how anything can interoperate or even coexist, because they are hell bent on protecting the boundaries of their product features and technology surface.

- tptacek: People have a weird mental model of how big-company bug bounty programs work. Paypal --- a big company for sure, with a large and talented application security team --- is not interested in stiffing researchers out of bounties. They have literally no incentive to do so. In fact: the people tasked with running the bounty probably have the opposite incentive: the program looks better when it is paying out bounties for strong findings.

- mrtksn: Not the Google Cloud but Firebase is by far the nicest experience I ever had with a "hosting" provider. It is a bit expensive but I think this proves that there are many low hanging fruits to gather out there. It's essentially an API to access a streamlined database, file hosting, access provider and so on. Everything integrated and can talk to each other.

- Backblaze: The total AFR [Annualized Failure Rate] for 2019 rose significantly in 2019. About 75% of the different drive models experienced a rise in AFR from 2018 to 2019. There are two primary drivers behind this rise. First, the 8 TB drives as a group seem to be having a mid-life crisis as they get older, with each model exhibiting their highest failure rates recorded. While none of the rates is cause for worry, they contribute roughly one fourth (1/4) of the drive days to the total, so any rise in their failure rate will affect the total. The second factor is the Seagate 12 TB drives, this issue is being aggressively addressed by the 12 TB migration project reported on previously.

- splatcollision: CouchDB is awesome, full stop. While it's missing some popularity from MongoDB and having wide adoption of things like mongoose in lots of open source CMS-type projects, it wins for the (i believe) unique take on map / reduce and writing custom javascript view functions that run on every document, letting you really customize the way you can query slice and access parts of your data...

- Legogris: For a startup, it can work out like this: Start out on AWS/GCP/Azure in the initial phase when you want to optimize for velocity in terms of pushing out new functionality and services. When you start to require several message queues, different data stores, dynamic provisioning and high availability, you save a lot on setup and maintenance - the initial cost of getting your private own cloud up and running, and doing so stably, is not to be underestimated. Especially when you're still exploring and haven't figured out the best technologies for you long-term. Then, at some point, that dynamic changes as you have a better understanding of your needs. I think building somewhat cloud-agnostic to ease friction of provider migration is good, regardless, but do so pragmatically and look at the APIs from a service perspective.

- Suphatra Rufo: No, I don't think it's a dying market [Oracle and Microsoft SQL Server]. I don't think people are migrating as fast as the hype makes you believe and you can kind of tell... At re:Invent last month, Andy sort of alluded to that. He was annoyed that people weren't migrating off of on-prem fast enough because it just takes time and people want to be smart and careful. And you would be surprised at how much both of those companies go through switchbacks, right?

- thu2111: Also: free cloud credits. Don't underestimate that. I know of two startups (one that I work at). Both are hooked on the cloud, albeit one has an insane cloud bill and one has a very reasonable bill. The reason in both cases is MSFT/AWS gave them massive up front "free" credits, which meant there was no incentive to impose internal controls on usage or put in place a culture of conservation. AWS doesn't think twice before dropping $20k on cloud credits to anyone who wrote a mobile app. At the company with the unreasonable bill, it's not even a SaaS provider. It literally just runs a few very low traffic websites for user manuals, some CI boxes and some marketing sites. The entire thing could be run off three or four dedicated boxes 95% of which would be CI, I'm sure of it. Yet this firm spends several tech salaries worth of money on Azure every quarter. The bill is eyebleeding.

- Adriene Mishler: Everything changed when we did our first “30 days of yoga” video [in 2015]. The idea is that we’re doing it with people live during January, all across the globe. And it’s free. No gimmicks, no catch. That’s when we really started to see the numbers move, and they have not stopped since then.

- com2kid: The behavior model itself is super useful for user journeys. My biggest take away is that when someone first downloads an application (or signs up on a website) they do so because they have a problem they want to solve, and at that moment there is a level of motivational energy that they are willing to spend. Be it adding pictures to a dating app or going through an onboarding process. If that first use is too complex, the user's motivation is exhausted and they'll drop out.

- Rick Beato~ Copyright claims might as well take a gun to your head and take your money. Getting paid like a collection agency makes a worse system even without a legitimate claim. Fair use. Disputing on YouTube gives you a copyright strike and three strikes they take your channel down. Fear is used to control YouTubers. Labels make so much money off of YouTube because YouTubers don't dare fight lest their channel be taken down. YouTube doesn't care about fair use. Labels don't care about fair use. They just want to stay out of it and let their content producers be screwed.

- Peter Palomaki: Within five years, it’s likely that we will have QD-based image sensors in our phones, enabling us to take better photos and videos in low light, improve facial recognition technology, and incorporate infrared photodetection into our daily lives in ways we can’t yet predict. And they will do all of that with smaller sensors that cost less than anything available today.

- Simone Kriz: Airship’s In-App Messaging capabilities power the onboarding flow for Caesar’s Rewards. New users receive automated welcome messages, encouraging them to register for exclusive features and personalized offers. This effort has resulted in a 350% higher three-month retention rate among users who opt in to push notifications. If someone changes their mind and opts out of push notifications, Airship is there to save the day, as well. Airship’s Custom Events feature triggers in-app messages to win back subscribers. “Close to 30% of users clicked the call-to-action button that directed them to opt back in,” Turner says.

- Bitbucket: We did indeed shift the majority of traffic to replicas while keeping added latency close to 10ms. Below is a snapshot of requests, grouped by the database that was used for reads, before our new router was introduced. As you can see most of the requests (the small squares) were using the primary database. Looking at the primary database on a typically busy Wednesday we can see a reduction in rows fetched of close to 50%.

- Michael Feldman: On Monday, while recapping the latest list announced at the ISC conference, TOP500 [supercomputers] author Erich Strohmaier offered his take on why this persistent slowdown is occurring. His explanation: the death of Dennard scaling. “There is no escaping Dennard Scaling anymore,” Strohmaier noted.

Useful Stuff:

- Part two of Jim Keller: Moore's Law, Microprocessors, Abstractions, and First Principles~

- What changes when you add more transistors? There are two constants: people aren’t getting smarter and teams can’t grow that much. We’re really good at teams of 10, up to a hundred, after that you have to divide and conquer. As designs get bigger you have to divide it into pieces. The power of abstraction layers is really high. We used to build computers out of transistors. Now we have a team that turns transistors into logic cells and another team that turns them into functional units and another team that turns them into computers.

- Simply using faster computers to build bigger computers doesn’t work. Some algorithms run twice as fast on new computers, but a lot of algorithms are N^2. A computer with twice as many transistors may take four times as long to run, so you have to refactor the software.

- Every 10x generates a new computation. Mainframe, minis, workstations, PC, mobile. Scalar, vector, matrix, topological computation. What’s next? The smart world where everything knows you. The transformations will be unpredictable.

- Is there a limit to calculation? I don’t think so.

- People tend to think of computers as a cost problem. Computers are made out of silicon and minor amounts of metals. None of those things cost any money. All the cost is in the equipment. The trend in the equipment is once you figure out how to build the equipment the trend of cost is zero. Elon said first you figure out what configuration you want the atoms in and then how to put them there.

- Elon’s great insight is people are how-constrained. I have this thing and I know how it works and little tweaks to that will generate something, as opposed to what do I actually want, and then figure out how to build it. It’s a very different mindset and almost nobody has it.

- Progress disappoints in the short-run but surprises in the long-run.

- Most engineering is craftsman's work and humans really like to do that.

- There’s an expression called Complex Mastery Behaviour. When you’re learning something that’s fun because you're learning something. When you do something relatively simple it’s not that satisfying, but if the steps you have to do are complicated and you’re good at them it’s satisfying. And if you’re intrigued while you’re doing them you’ll sometimes learn new things that you can raise your game.

- Computers are always this weird set of abstraction layers of ideas and thinking that reduction to practice is transistors and wires, pretty basic stuff. That’s an interesting phenomenon.

- Elon is good at taking everything apart. What’s the first deep principle? Then going deeper and asking what’s really the first deep principle? That ability to look at a problem without assumptions and how-constraints is super wild. Imagine if 99% of your thought process is rejecting your self conceptions and 98% of that’s wrong. How do you think you’re feeling when you get to that 1% and you’re open and you have the ability to do something different?
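Keller's point about N^2 algorithms is easy to check with a few lines of arithmetic. Here's a rough sketch, not anything from the interview: the function name `relative_runtime` and the clean power-law cost model are assumptions made purely for illustration.

```python
def relative_runtime(size_factor: float, exponent: float, speedup: float = 1.0) -> float:
    """Runtime of an O(N^exponent) job relative to the previous generation.

    size_factor: how much bigger the problem got (e.g. 2 = twice the transistors).
    exponent:    assumed power-law exponent of the algorithm (2 for N^2).
    speedup:     how much faster the machine running the job is.
    """
    return size_factor ** exponent / speedup


# An N^2 tool on a design with twice the transistors, same machine.
print(relative_runtime(2, 2))        # 4.0

# Same job on a machine that is twice as fast: still slower than before.
print(relative_runtime(2, 2, 2.0))   # 2.0

# A linear algorithm keeps pace when the machine doubles in speed.
print(relative_runtime(2, 1, 2.0))   # 1.0
```

Under this toy model only the linear job breaks even, which is why the quadratic parts of the toolchain have to be refactored rather than simply rerun on faster hardware.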

- This takes pay to play to a new level. Live on the platform, die from the platform. Etsy sellers are furious over new mandatory ad fees: Etsy sellers are furious about the website's new advertising scheme, which will impose huge fees on some sales. What's worse, certain users aren't even allowed to opt out.

- mandelbrotwurst: The system has no opt-out if you've ever gone above $10K in rolling 12 month sales.

- MicahKV: I'm one of those sellers who is being forced into Etsy's new ad program. It is frustrating and obnoxious, but I can't say it is surprising given the policy changes they've been rolling out the past few months. It seems to me that online marketplaces like Etsy find their early success by bringing buyers and sellers together and just letting them do their thing, but as the marketplace becomes more established its relationship with sellers inevitably turns adversarial.

- hoorayimhelping: I left Etsy as a member of the relatively new ads team in mid 2015. We generated a lot of revenue for the company in a very short period of time, but we made sure to make the whole thing feel like Etsy - homegrown, ethical, thoughtful, etc. We made the tools mentioned in this article - the ads console and search ads. It was basically first party promoted listings where you could bid on keywords in the internal site search. We also let sellers opt into ad campaigns run through google and facebook that we helped manage through a console. It was all optional, and it was a decent equalizer for smaller shops, although it was mostly used by large shops iirc. I have no idea what the hell they're thinking with this. This is downright hostile to sellers. I spoke to a nonzero amount of sellers who were against advertising as a concept - they didn't want to be part of that machine (I think quite a few of us here can relate). Now they have no choice but to pay Etsy for this. It's a huge middle finger to the sellers that made Etsy rich and who Etsy continually takes advantage of. This is mafia-esque style extortion where they say they're doing this for you as they take your money.

- Here's how a modern version of being Slashdotted works in the cloud. It just works...after you make some smart choices. Handling Huge Traffic Spikes with Azure Functions and Cloudflare.

- The last time @MartinSLewis featured @haveibeenpwned on @itvMLshow, I lost a third of requests for a brief period due to the sudden influx of traffic.

- What I like most about the outcome of this experience is not that HIBP didn't drop any requests, but rather that I didn't need to do anything different to usual in order to achieve that. I don't even want to think about traffic volumes and that's the joyous reality that Azure Functions and Cloudflare brings to HIBP

- Last week, the show reached out again. "Round 2", I'm thinking, except this time I'm ready for crazy traffic. Over the last 3 and a half years I've invested a heap of time both optimising things on the Cloudflare end (especially as it relates to caching and Workers) and most importantly, moving all critical APIs to Azure Functions which are "serverless"

- The thing that's most interesting about the traffic patterns driven by prime-time TV is the sudden increase in requests. Think about it - you've got some hundreds of thousands (or even millions) of people watching the show and the HIBP URL appears in front of them all at exactly the same time.

- Now we're at 2,533 requests per minute so the headline story here in terms of rate of change is traffic increasing 211 times over in a very short period of time. The last time that happened in 2016, the error rate peaked at about a third of all requests. This time, however, the failed request count was...zero

- That's a peak of 33,260 requests per minute or in other words, 554 requests per second searching 9.5 billion breach records and another 133 million paste accounts at the same time. The especially cool thing about this chart is that there was no perceptible change to the duration of these requests during the period of peak load, in fact the only spikes were well outside that period. This feature is the real heart of HIBP so naturally, I was pretty eager to see what the failure rate looked like...Zero. Again.

- So onto the SQL database which manages things like the subscriptions for those who want to be notified of future breaches. (This runs in an RDBMS rather than in Table Storage as it's a much more efficient way of finding the intersection between subscribers and people in a new breach.) It peaks at about 40% utilisation which is something I probably need to be a little conscious of, but this is also only running an S2 instance at A$103 per month.

- That's 3.67M in the busiest 15 minutes so more than double the 2016 traffic. The big difference between the 2016 and 2020 experiences is that there's now much more aggressive caching on the Cloudflare end. As you can see from the respective graphs, previously the cache hit ratio was only about 76% whereas today it was all the way up at 96%. In fact, those 163.58k uncached requests are comprised almost entirely of hits to the API to search the DB, people signing up for notifications and the domain search feature so in other words, just the stuff that absolutely, positively needs to hit the origin.
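The numbers quoted above can be sanity-checked with a little arithmetic. This is only a sketch using the rounded figures from the write-up (33,260 requests per minute, 3.67M requests and 163.58k uncached requests in the busiest 15 minutes), so the results are approximate; the function and variable names are made up for illustration.

```python
# Figures quoted in the HIBP write-up above (rounded in the source).
peak_requests_per_minute = 33_260
total_requests_15_min = 3_670_000   # "3.67M in the busiest 15 minutes"
uncached_requests = 163_580         # "163.58k uncached requests"


def per_second(requests_per_minute: float) -> float:
    """Convert a per-minute request rate to a per-second rate."""
    return requests_per_minute / 60


def cache_hit_ratio(total: int, uncached: int) -> float:
    """Fraction of requests answered from cache instead of the origin."""
    return (total - uncached) / total


peak_rps = per_second(peak_requests_per_minute)
hit_ratio = cache_hit_ratio(total_requests_15_min, uncached_requests)
# Average rate at which uncached requests reached the origin over 15 minutes.
origin_rps = uncached_requests / (15 * 60)

print(f"peak API load: {peak_rps:.0f} requests/second")
print(f"cache hit ratio: {hit_ratio:.1%}")
print(f"average origin load: {origin_rps:.0f} requests/second")
```

With these rounded inputs the hit ratio lands close to the quoted 96%, and the origin only has to absorb a small fraction of what Cloudflare sees.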

- What would happen if a god used an app to carry out their archetypical function in an age of unbelievers? It might look something like this. The Beast, the Phantom and the Hunchback use a dating app to find love. LOL.

- How to run a startup for free ($6/yr)? Use this stack: 1. DynamoDB for database 2. AWS Lambda for backend 3. Netlify / Now / Surge for frontend 4. S3 for file/image hosting 5. Cloudinary for image hosting 6. IFTTT to webhook for cron 7. RedisLabs for queues, cache 8. Figma for designing and prototyping 9. Porkbun for $6 .com domains 10. Cloudflare for DNS

- A data driven list of the most recommended programming books of all-time:

- The Pragmatic Programmer by David Thomas & Andrew Hunt (67% recommended)

- Clean Code by Robert C. Martin (66% recommended)

- Code Complete by Steve McConnell (42% recommended)

- Refactoring by Martin Fowler (35% recommended)

- Head First Design Patterns by Eric Freeman / Bert Bates / Kathy Sierra / Elisabeth Robson (29.4% recommended)

- The Mythical Man-Month by Frederick P. Brooks Jr (27.9% recommended)

- The Clean Coder by Robert Martin (27.9% recommended)

- Working Effectively with Legacy Code by Michael Feathers (26.4% recommended)

- Design Patterns by Erich Gamma / Richard Helm / Ralph Johnson / John Vlissides (25% recommended)

- Cracking the Coding Interview by Gayle Laakmann McDowell (22% recommended)

- With IoT we might see a lot more of this sort of thing because we need a way to take someone from a device to a landing page. Pernod Ricard: Shortening URLs on a Global Scale with Low Latency. Imagine a QR code or an NFC chip on a bottle or a short URL in a post. How do you resolve it? This is a use case for Lambda@Edge. A user sends a URL to CloudFront. CloudFront calls Lambda@Edge, which does a lookup in the globally replicated DynamoDB to return data. Which DynamoDB replica is chosen is based on the region. This design gives low latency and scalability on a worldwide scale. Costs were reduced 100x compared to their previous architecture. Plans are to move more to the edge in the future to send people to the right localized content.
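Here's a minimal sketch of what the resolve step could look like as a Lambda@Edge-style handler. It is not Pernod Ricard's code. The event shape follows the CloudFront request event format, but the short code, the example.com URL, `SHORT_LINKS`, `lookup_in_memory`, and `make_handler` are all hypothetical. An in-memory dict stands in for the globally replicated DynamoDB table; a real deployment would swap the lookup for a read against the replica nearest to the region where the function runs.

```python
from typing import Callable, Optional

# Hypothetical stand-in for the replicated DynamoDB table:
# short code -> destination URL.
SHORT_LINKS = {"abc123": "https://example.com/landing/bottle"}


def lookup_in_memory(code: str) -> Optional[str]:
    """Assumed lookup; a real one would query the nearest table replica."""
    return SHORT_LINKS.get(code)


def make_handler(lookup: Callable[[str], Optional[str]]):
    """Build a handler that resolves the request path to a redirect."""

    def handler(event, context):
        # CloudFront passes the viewer request inside Records[0].cf.request.
        request = event["Records"][0]["cf"]["request"]
        code = request["uri"].lstrip("/")
        target = lookup(code)
        if target is None:
            return {
                "status": "404",
                "statusDescription": "Not Found",
                "body": "Unknown short link",
            }
        # Returning a response from the function short-circuits the request
        # at the edge, so the origin is never contacted.
        return {
            "status": "302",
            "statusDescription": "Found",
            "headers": {"location": [{"key": "Location", "value": target}]},
        }

    return handler


handler = make_handler(lookup_in_memory)

sample_event = {"Records": [{"cf": {"request": {"uri": "/abc123"}}}]}
response = handler(sample_event, None)
print(response["status"], response["headers"]["location"][0]["value"])
```

Injecting the lookup keeps the redirect logic testable without any AWS dependencies.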

- As a designer you have to decide what happens when your product fails. You can fail-safe or fail-f*cked. When your computer boots, it typically starts with all the fans pegged high because it can't know the conditions the computer will be in and it hasn't booted far enough to make a smart decision. The default is to keep the computer cool. That's fail-safe. Here's fail-f*cked, because if it happens to you, you're f*cked, you could actually die. Driver stranded after connected rental car can’t call home. Horrible design.

- Nice social engineering. Jeffrey McGrew on the clever way they allocated expensive iPads on a construction job site. You can give everyone in the field an iPad, but they'll just break them. Since the project will take five years the iPads will have no value at the end. So they just gave the iPads to the workers and told them: if you break it, you rebuy it. Workers bought cases for their iPads and kept them safe because they were their own iPads.

- Five years old but still fascinating. I'd love an update. Talking with Robots about Architecture

- Semi-automated and loosely connected. Keep a human in the loop because humans are very flexible, but at the same time have the human work side by side with technology so they help each other. You can have a machine do 80% of the work in 20% of the time and then humans can do the finishing work. A talented human can do better than a machine at finishing. Loosely connected means don't worry about having a single source of truth, let truths reside in separate systems so you can be more productive. You can then cross-correlate to find deeper insights.

- The construction industry is 20 years away from their version of git. This is a version of loosely connected.

- Successful architects use late binding. They figure out which problems they can ignore and solve at the last minute so they have more information to use to solve the problem.

- New technologies change what's easy. Robots operate as a force multiplier. You can do other things while automation is doing its thing. Tech getting cheaper also changes what can be done. You can afford to waste machines, just use them when you need them, they don't have to be continuously productive to make it cost effective.

- Labor because of robots is getting cheaper as materials are becoming more expensive. Now architects can (once again) interact directly with artisans to make stuff, they don't need a middle layer.

- I don't know about you, but my dog deserves a cloud based pet feeder that operates in at least 3 regions. Petnet goes offline for a week, can’t answer customers at all.

- Good advice. Ten rules for placing your Wi-Fi access points: Rule 1: No more than two rooms and two walls; Rule 2: Too much transmit power is a bug; Rule 3: Use spectrum wisely; Rule 4: Central placement is best; Rule 6: Cut distances in halves; Rule 7: Route around obstacles; Rule 8: It's all about the backhaul; Rule 9: It's (usually) not about throughput, it's about latency; Rule 10: Your Wi-Fi network is only as fast as its slowest connected device.

- Ah, the new algorithmic world is full of miracles. Suckers List: How Allstate’s Secret Auto Insurance Algorithm Squeezes Big Spenders: In this case, Allstate’s model seemed to determine how much a customer was willing to pay —or overpay—without defecting, based on how much he or she was already forking out for car insurance. And the harm would not have been equally distributed.

- Videos for Serverless: Zero to Paid Professional are now available.

- Libraries are a threat vector, so Mozilla wants to put every library in its own sandbox. Bold. Securing Firefox with WebAssembly (RLBox, code): The core implementation idea behind wasm sandboxing is that you can compile C/C++ into wasm code, and then you can compile that wasm code into native code for the machine your program actually runs on. These steps are similar to what you’d do to run C/C++ applications in the browser, but we’re performing the wasm to native code translation ahead of time, when Firefox itself is built. Each of these two steps rely on significant pieces of software in their own right, and we add a third step to make the sandboxing conversion more straightforward and less error prone.

- Reflections on software performance:

- I’ve really strongly come to believe that we underrate performance when designing and building software. We have become accustomed to casually giving up factors of two or ten or more with our choices of tools and libraries, without asking if the benefits are worth it.

- What is perhaps less apparent is that having faster tools changes how users use a tool or perform a task. Users almost always have multiple strategies available to pursue a goal — including deciding to work on something else entirely — and they will choose to use faster tools more and more frequently. Fast tools don’t just allow users to accomplish tasks faster; they allow users to accomplish entirely new types of tasks, in entirely new ways. I’ve seen this phenomenon clearly while working on both Sorbet and Livegrep

- Being fast also meant that users were (comparatively) more tolerant of false errors (Sorbet complaining about code that would not actually go wrong), as long as it was clear how to fix the error (e.g. adding a T.must).

- I’ve come to believe that the “performance last” model will rarely, if ever, produce truly fast software (and, as discussed above, I believe truly-fast software is a worthwhile target). I identify two main reasons why it doesn’t work

- The basic architecture of a system — the high-level structure, dataflow and organization — often has profound implications for performance. If you want to build truly performant software, you need to at least keep performance in mind as you make early design and architectural decisions, lest you paint yourself into awkward corners later on.

- One of my favorite performance anecdotes is the SQLite 3.8.7 release, which was 50% faster than the previous release in total, all by way of numerous stacked performance improvements, each gaining less than 1% individually. This example speaks to the benefit of worrying about small performance costs across the entire codebase; even if they are individually insignificant, they do add up.

- Another observation I’ve made is that starting with a performant core can ultimately drastically simplify the architecture of a software project, relative to a given level of functionality. There’s a general observation here: attempts to add performance to a slow system often add complexity, in the form of complex caching, distributed systems, or additional bookkeeping for fine-grained incremental recomputation. These features add complexity and new classes of bugs, and also add overhead and make straight-line performance even worse, further increasing the problem.
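The SQLite anecdote above is compounding at work. As a rough sketch, assume each improvement multiplies speed by the same small factor and that "50% faster" means 1.5x overall; both are simplifying assumptions, and the function names are illustrative. Since the source says each gain was less than 1%, treating every step as a full 1% gives a lower bound on how many stacked improvements it takes.

```python
import math


def compounded_gain(per_step_gain: float, steps: int) -> float:
    """Total speedup (as a fraction) after stacking equal multiplicative gains."""
    return (1 + per_step_gain) ** steps - 1


def steps_needed(per_step_gain: float, target_gain: float) -> int:
    """Smallest number of equal steps whose compounded gain reaches the target."""
    return math.ceil(math.log(1 + target_gain) / math.log(1 + per_step_gain))


n = steps_needed(0.01, 0.50)
print(f"{n} improvements of 1% each compound to {compounded_gain(0.01, n):.1%}")
```

At smaller per-step gains the count grows quickly, which is the point: no single change looks worth it, but dozens of them together do.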

- I wonder if the perceived efficacy of frameworks is a product of the placebo effect?

- Systems Thinking and Quality. Uwe Friedrichsen glossing an insightful talk given by Dr. Russell Ackoff:

- The definition of quality has to do with meeting or exceeding the expectations of the customer or the consumer. Therefore, if their expectations are not met, it’s a failure.

- A system is a whole that consists of parts each of which can affect its behavior or its properties. Each part of the system when it affects the system is dependent for its effect on some other part. In other words: The parts are interdependent.

- No part of the system or a collection of parts of the system has an independent effect on it. Therefore a system as a whole cannot be divided into independent parts.

- The essential or defining properties of any system are properties of the whole which none of its parts have.

- The essential property of an automobile is that it can carry you from one place to another. No part of an automobile can do that.

- Therefore, when a system is taken apart it loses its essential properties. A system is not the sum of the behavior of its parts, it’s a product of their interactions.

- If we have a system of improvement that’s directed at improving the parts taken separately you can be absolutely sure that the performance of the whole will not be improved.

- The performance of a system depends on how the parts fit, not how they act taken separately.

- But (s)he will never modify the house to improve the quality of the room unless the quality of the house is simultaneously improved. That’s fundamentally the principle that ought to be used in continuous improvement.

- A defect is something that’s wrong. When you get rid of something that you don’t want you don’t necessarily get what you do want. And so, finding deficiencies and getting rid of them is not a way of improving the performance of a system.

- Basic principle: An improvement program must be directed at what you want, not at what you don’t want.

- Continuous improvement isn’t nearly as important as discontinuous improvement. […] One never becomes a leader by continuously improving. That’s imitation of the leader. You never overcome a leader by imitating and improving slightly. You only become a leader by leapfrogging those who are ahead of you and that comes about through creativity.

- We frequently point to the Japanese and what they have done to the automobile: there is no doubt that they have improved the quality of the automobile. But it’s a wrong kind of quality.

- Peter Drucker made a very fundamental distinction between doing things right and doing the right thing. The Japanese are doing things right, but they are doing the wrong thing. Doing the wrong thing right is not nearly as good as doing the right thing wrong.

- It’s a wrong concept of quality. Quality ought to contain a notion of value, not merely efficiency. That’s a difference between efficiency and effectiveness. Quality ought to be directed at effectiveness.

- The difference between efficiency and effectiveness is a difference between knowledge and wisdom, and unfortunately we don’t have enough wisdom to go around. Until managers take into account the systemic nature of their organizations, most of their efforts to improve their performance are doomed to failure.

- Like most things that actually work for a broad user base in the real world, you hate all the compromises it makes and that fills you with the certainty of ignorance that you could do so much better. Then you try a rewrite from scratch and you find under the pressure of conforming forces that you need to make your own batch of compromises. All you've done really is move to a different point in the compromise space. That space may be much better for your needs, but you can bet some punk will come along, take a dump on all your hard work, and move the point somewhere else in the space. The cycle repeats. Never underestimate the power of worse is better and large working things start from small working things. Programmer's critique of missing structure of operating systems.

- I've never thought of security on the high seas before. Apparently it's no better than on land. Pen Testing Ships. A year in review:

- There is a distinct lack of understanding and interaction between IT and OT installers/engineers on board and in the yard.

- The OT systems are often accessible from the IT systems and vice versa, often through deliberate bypass of security features by those on board, or through poor design / poor password management / weak patch management.

- IT and bridge systems are often poorly configured or maintained.

- Maritime technology vendors have a ‘variable’ approach to security. A few offer reasonable security of their products. Most are terrible.

- We’ve even reviewed a maritime-specific security product that was vulnerable in itself, creating new security holes in a vessel rather than fixing them!

- Documentation of networks and systems often has little correlation with what is actually on board.

- Cruise ships add multiple new layers of technology, increasing the attack surface dramatically.

- Hotel systems (IT, booking systems, inventory, guest Wi-Fi, infotainment, CCTV, lighting, phones et al) plus the actual vessel itself (bridge systems, satcoms, navigation, ballast, engine management etc).

- The more vessels we review, the more we see that ship operators genuinely believe there is an air gap between the traditional IT systems and the on-board OT. That is almost never the case. On only one of the fifteen or so vessels I’ve been on was there a genuine air gap.

- One of the first things we do when we get onboard is to tap into the OT network and start sniffing the traffic. You would be shocked how often we find IP traffic that shouldn’t be there.

- We’ve also found TeamViewer installs on a number of occasions that the vessel owners and operators knew nothing about. Again these were vulnerable versions with really ridiculous credential choices.

- One of the biggest surprises (not that I should have been at all surprised in hindsight) is the number of installations we still find running default credentials – think admin/admin or blank/blank – even on public facing systems.

- Faster hamster, go faster, the wheel is eternal. Thoughts on non-linear growth in B2B startups:

- If you have a 10-year plan of how to get somewhere, you should ask yourself: why can’t you do this in 6 months?

- Once a company has product-market fit, how can it grow from $1m to $100m in revenues in one year? Why take 5–10 years?

- Go large enterprise on day 1

- my biggest takeaway has been: pursue distribution models with higher leverage per sales-and-marketing headcount. Ultimately, non-linearity comes from more leverage on the existing resources.
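To get a feel for what going from $1m to $100m in one year means compared to taking 5 or 10 years, here's a quick sketch of the constant month-over-month growth rate each timeline implies. Steady compounding is an assumption made purely for illustration, and `monthly_growth_rate` is a made-up helper.

```python
def monthly_growth_rate(start: float, end: float, months: int) -> float:
    """Constant month-over-month growth rate needed to go from start to end."""
    return (end / start) ** (1 / months) - 1


# $1m -> $100m in revenue over different timelines.
for years in (1, 5, 10):
    rate = monthly_growth_rate(1e6, 100e6, years * 12)
    print(f"{years:>2} years: {rate:.1%} per month")
```

The one-year plan needs revenue to grow by nearly half every single month, which is why the post points at higher-leverage distribution rather than simply working the same model harder.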

- 96-Core Processor Made of Chiplets: Already, some of the most advanced processors, such as AMD’s Zen 2 processor family, are actually a collection of chiplets bound together by high-bandwidth connections within a single package. The CEA-Leti chip, for want of a better word, stacks six 16-core chiplets on top of a thin sliver of silicon, called an active interposer. The interposer contains both voltage regulation circuits and a network that links the various parts of the core’s on-chip memories together. Active interposers are the best way forward for chiplet technology if it is ever to allow for disparate technologies and multiple chiplet

- Keeping you engaged is a lot of work. How Spotify ran the largest Google Dataflow job ever for Wrapped 2019: For Wrapped 2019, which includes the annual and decadal lists, Spotify ran a job that was five times larger than in 2018 — but it did so at three-quarters of the cost. Singer attributes this to his team’s familiarity with the platform. “With this type of global scale, complexity is a natural consequence. By working closely with Google Cloud’s engineering teams and specialists and drawing learnings from previous years, we were able to run one of the most sophisticated Dataflow jobs ever written.”

- For an upcoming project we're using AWS Marketplace + Cloudformation in combination with the community edition of Chef Habitat to try to solve this problem. Once someone deploys the cloudformation template, it sets up:

- Email (SES)

- SSL certificates + renewal (ACM + lets encrypt)

- Backup + Restore (RDS snapshots + AWS Backup for EFS)

- ALB (if desired)

- CDN (Cloudfront)

- Firewall

- Auto scaling database (RDS pgsql serverless) with automatic pausing

- Auto scaling storage (EFS)

- You can adjust capacity by just dialing up or down the ASG, and our Elixir app auto-clusters using the Habitat ring for service discovery. Packages are upgraded when new versions are pushed to our package repository. All binaries run in jailed process environments scoped to Habitat packages, with configuration management and supervision handled by Habitat. This is a non-container approach, focusing on VMs. However, the theory is that this will be pretty much turnkey for a scalable self-hosted product on AWS, including software updates. It's hard to say how well the theory will fall out in practice, but I'm optimistic. Avoiding a mess of microservices was fairly important in making this kind of thing possible, we have a few services, with a dominant monolith in Elixir.

- Do you really think a version of an OS that will ship in two years on a new microprocessor should be developed on the trunk? No. Not everything is a website. You don’t need Feature Branches anymore.

Soft Stuff

- Netflix/dispatch (article): All of the ad-hoc things you’re doing to manage incidents today, done for you, and a bunch of other things you should've been doing, but have not had the time!

- m3db/m3: The fully open source metrics platform built on M3DB, a distributed timeseries database. M3, a metrics platform, and M3DB, a distributed time series database, were developed at Uber out of necessity. After using what was available as open source and finding we were unable to use them at our scale due to issues with their reliability, cost and operationally intensive nature we built our own metrics platform piece by piece. We used our experience to help us build a native distributed time series database, a highly dynamic and performant aggregation service, query engine and other supporting infrastructure.

- Ray: a high-performance distributed execution framework targeted at large-scale machine learning and reinforcement learning applications. It achieves scalability and fault tolerance by abstracting the control state of the system in a global control store and keeping all other components stateless. It uses a shared-memory distributed object store to efficiently handle large data through shared memory, and it uses a bottom-up hierarchical scheduling architecture to achieve low-latency and high-throughput scheduling. It uses a lightweight API based on dynamic task graphs and actors to express a wide range of applications in a flexible manner.

- linuxkit/linuxkit (podcast): A toolkit for building secure, portable and lean operating systems for containers

- wwwil/glb-demo (article): This is a demo of a container-native multi-cluster global load balancer, with Cloud Armor policies, for Google Cloud Platform (GCP) using Terraform.

Pub Stuff

- AppStreamer: Reducing Storage Requirements of Mobile Games through Predictive Streaming: AppStreamer is evaluated using two popular games: Dead Effect 2, a 3D first-person shooter, and Fire Emblem Heroes, a 2D turn-based strategy role-playing game. Through a user study, 75% and 87% of the users respectively find that AppStreamer provides the same quality of user experience as the baseline where all files are stored on the device. AppStreamer cuts down the storage requirement by 87% for Dead Effect 2 and 86% for Fire Emblem Heroes.

- Challenges with distributed systems: Developing distributed utility computing services, such as reliable long-distance telephone networks, or Amazon Web Services (AWS) services, is hard. Distributed computing is also weirder and less intuitive than other forms of computing because of two interrelated problems. Independent failures and nondeterminism cause the most impactful issues in distributed systems. In addition to the typical computing failures most engineers are used to, failures in distributed systems can occur in many other ways. What’s worse, it’s impossible always to know whether something failed.
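The "impossible always to know whether something failed" point is easy to see in a toy model. In this sketch (all names are hypothetical and nothing here uses a real network) a client asks a server to make a deposit. If the request is lost, the deposit never happens; if the reply is lost, the deposit does happen. The client sees the same timeout either way.

```python
from enum import Enum


class Fault(Enum):
    NONE = "none"
    REQUEST_LOST = "request lost"
    REPLY_LOST = "reply lost"


class Server:
    """Toy server holding one account balance."""

    def __init__(self):
        self.balance = 0

    def deposit(self, amount):
        self.balance += amount
        return "ok"


def call_deposit(server, amount, fault):
    """Return the reply the client sees, or None to model a timeout."""
    if fault is Fault.REQUEST_LOST:
        return None  # the server never saw the request
    reply = server.deposit(amount)
    if fault is Fault.REPLY_LOST:
        return None  # the server did the work, but the client never hears back
    return reply


for fault in Fault:
    server = Server()
    seen = call_deposit(server, 10, fault)
    print(f"{fault.value:>12}: client sees {seen!r}, server balance {server.balance}")
```

Because the two failure cases are indistinguishable from the client's side, blindly retrying risks a double deposit, which is one reason idempotent operations matter so much in distributed systems.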

- Bio-neural gel packs? Establishing Cerebral Organoids as Models of Human-Specific Brain Evolution: Direct comparisons of human and non-human primate brains can reveal molecular pathways underlying remarkable specializations of the human brain. However, chimpanzee tissue is inaccessible during neocortical neurogenesis when differences in brain size first appear. To identify human-specific features of cortical development, we leveraged recent innovations that permit generating pluripotent stem cell-derived cerebral organoids from chimpanzee.

- From Spiral to Spline: Optimal Techniques in Interactive Curve Design: A central result of this thesis is that any spline sharing these properties also has the property that all segments between two control points are cut from a single, fixed generating curve. Thus, the problem of choosing an ideal spline is reduced to that of choosing the ideal generating curve. The Euler spiral has excellent all-around properties, and, for some applications, a log-aesthetic curve may be even better.

- cloud-hypervisor/cloud-hypervisor: Cloud Hypervisor is an open source Virtual Machine Monitor (VMM) that runs on top of KVM. The project focuses on exclusively running modern, cloud workloads, on top of a limited set of hardware architectures and platforms. Cloud workloads refers to those that are usually run by customers inside a cloud provider. For our purposes this means modern Linux distributions with most I/O handled by paravirtualised devices (i.e. virtio), no requirement for legacy devices and recent CPUs and KVM.

- Spotify Guilds: Our study shows that maintaining successful large-scale distributed guilds and active engagement is indeed a challenge. We found that only 20% of the members regularly engage in the guild activities, while the majority merely subscribes to the latest news. In fact, organizational size and distribution became the source of multiple barriers for engagement. Having too many members, and especially temporal distance, means that scheduling joint meeting times is problematic. As a respondent noted: "Guilds seem bloated and diluted. There could be a need for a guild-like forum on a smaller scale." This is why regional sub-guilds emerged in response to the challenges of scale. At the same time, cross-site coordination meetings and larger socialization unconferences were recognized for their benefits. We therefore suggest that guilds in large-scale distributed environments offer both regional and cross-site activities.

- Millions of Tiny Databases (article): Physalia is a transactional key-value store, optimized for use in large-scale cloud control planes, which takes advantage of knowledge of transaction patterns and infrastructure design to offer both high availability and strong consistency to millions of clients. Physalia uses its knowledge of datacenter topology to place data where it is most likely to be available. Instead of being highly available for all keys to all clients, Physalia focuses on being extremely available for only the keys it knows each client needs, from the perspective of that client. This paper describes Physalia in context of Amazon EBS, and some other uses within Amazon Web Services. We believe that the same patterns, and approach to design, are widely applicable to distributed systems problems like control planes, configuration management, and service discovery